Anti-AI Hallucination: Why Accuracy Must Outweigh Intelligence

Written by Matthew Hale

- What Are AI Hallucinations?

- Types of AI Hallucinations

- Why Accuracy Matters More Than Intelligence

- What Causes AI Hallucinations?

- How AI Systems Reveal Hallucination Risks and the Need for Accuracy Controls

- How to Reduce AI Hallucinations

- Why Businesses Must Care

- Strengthen Your AI Foundation With Ethical, Accurate Practices

- Conclusion: The Path Toward Dependable, Non-Hallucinating AI

You’ve probably had this moment: you ask an AI a simple question, it gives you a perfectly polished answer… and something about it just feels off.

When you check the facts, you realise the answer wasn’t just slightly wrong: it was completely invented.

That’s an AI hallucination, and it’s becoming one of the biggest challenges in modern AI use. As highlighted in the GSDC Studio: AI Implementation Series, even the most advanced tools can produce confident but incorrect outputs unless they are built with strong controls around accuracy, truthfulness, and reliability.

And as AI becomes part of everyday work, the reports, summaries, analysis, and research it touches need systems that prioritise correctness, not just speed or intelligence.

This blog explains why hallucinations happen, why they matter, and how better AI validation and verification practices can help us build more trustworthy AI, step by step and in simple, clear language.

What Are AI Hallucinations?

AI hallucinations occur when a model produces false or misleading information while sounding completely confident. According to IBM, hallucinations appear when an AI “perceives patterns or objects that aren’t really there,” meaning it creates an answer that looks correct but isn’t rooted in reality.

These mistakes can appear in many forms:

- Made-up facts

- Incorrect statistics

- Invented citations or APIs

- Wrong calculations

- Outputs unrelated to the original question

And importantly, AI isn’t doing this on purpose.

AI predicts the “most likely” next word based on patterns it has seen. It doesn’t know the truth; it only knows what looks plausible. When information is missing or unclear, it may fill the gaps with invented details that sound polished.

This is why responsible AI development and strong AI validation and verification processes are essential. Without fact-checking, oversight, and transparency, even the best AI systems can generate mistakes that look perfectly real.

Types of AI Hallucinations

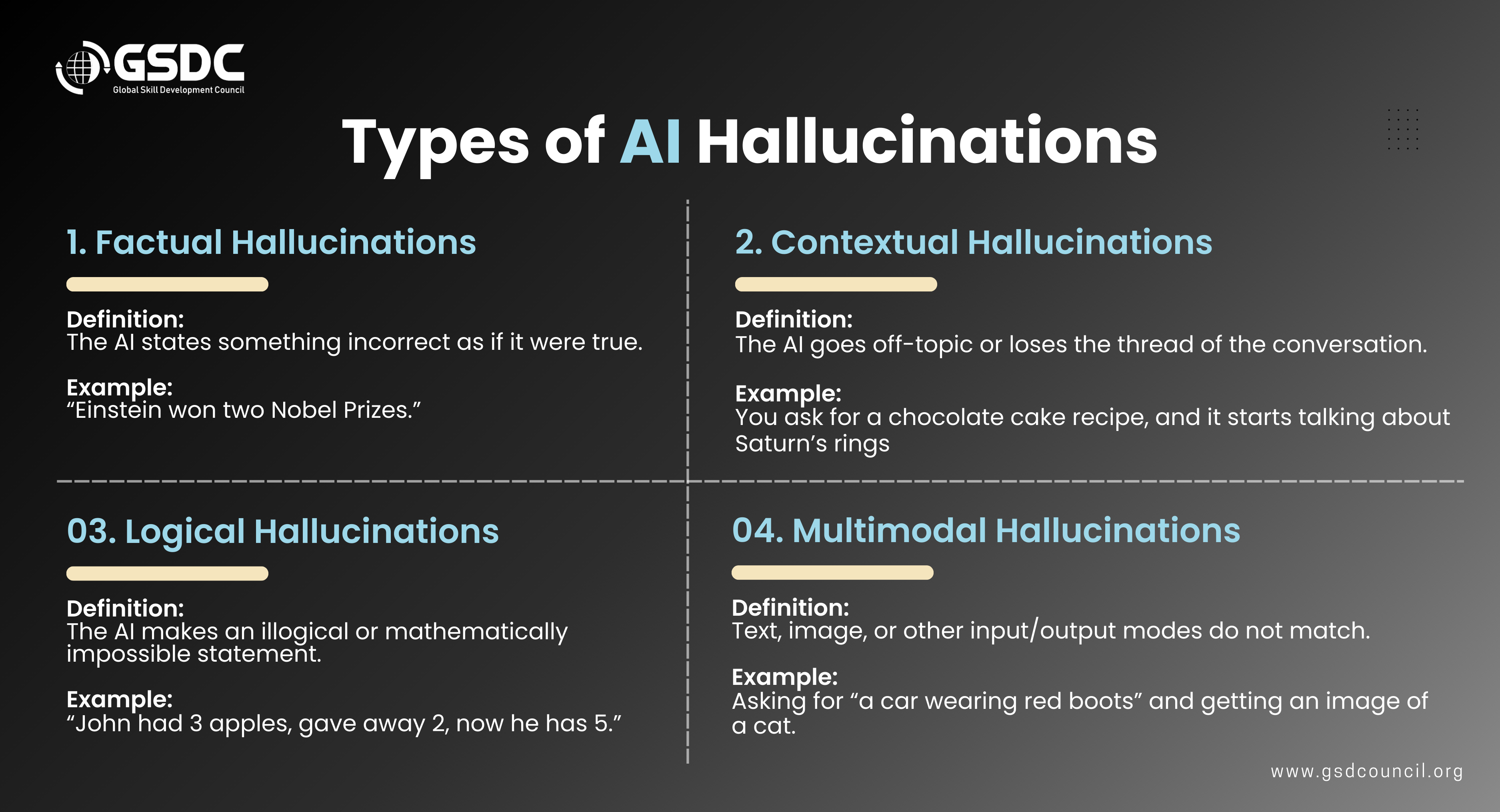

There are four main forms of AI hallucinations, each showing how an AI can confidently produce information that isn’t real. Understanding these types is essential for building trustworthy AI systems and improving overall AI reliability.

1. Factual Hallucinations

These occur when an AI states something incorrect as if it were true.

Example: “Einstein won two Nobel Prizes,” even though he won only one.

This directly impacts AI accuracy because the system sounds right but isn’t.

2. Contextual Hallucinations

These occur when the AI drifts off topic or loses the thread of a conversation.

Example: You ask how to make a chocolate cake, and it suddenly starts talking about the rings of Saturn.

Errors like these are why powerful AI models need clear transparency and human oversight.

3. Logical Hallucinations

These appear when AI produces answers that break basic reasoning.

Example: “John had 3 apples, gave away 2, now he has 5.”

This highlights gaps in reasoning, which AI validation and verification must address.

4. Multimodal Hallucinations

These occur when different forms of input or output, such as text and images, do not align.

Example: Asking for “a car wearing red boots” and receiving a picture of a cat.

This makes clear why hallucination-free AI requires stronger cross-modal checks and better AI governance and risk management.

Understanding these categories gives teams the clarity needed to design safer, more responsible AI development practices grounded in truth and accuracy.

Why Accuracy Matters More Than Intelligence

AI can sound smart, write smoothly, and imitate expertise. But none of that matters if the information is wrong.

Here’s why AI accuracy, AI truthfulness, and AI reliability matter far more than polished responses:

- Wrong information leads to real-world consequences: In fields such as law, medicine, finance, and engineering, a wrong output can put money at risk, endanger lives, or lead someone to break the law.

- Confidence should not be mistaken for accuracy: A model can give a very convincing answer that is completely false. This is the main reason hallucinations mislead users.

In 2023, a New York lawyer submitted a legal brief containing several “court cases” that the AI had completely invented. The citations looked credible, but none of them existed, and the attorney was fined once the court uncovered the fabrication.

Incidents like this make one thing very clear: accuracy must always outweigh intelligence.

What Causes AI Hallucinations?

AI hallucinations typically come from five root causes:

1. Training Data Issues: If the data contains gaps, outdated information, or inconsistencies, the model may guess instead of retrieving facts.

2. Weak Factuality Objectives: Most AI models are trained primarily to produce fluent text, not to check that it is correct. This limits AI accuracy.

3. Decoding Errors: The model predicts words that “sound right,” even if they’re wrong.

4. Context Limitations: If the model forgets the context, it starts producing unrelated or confused answers.

5. Tool or Integration Failures: If a connected system fails silently, the AI may fabricate a response instead of acknowledging the gap.
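
As an illustration of the fifth cause, here is a minimal Python sketch of how a silent tool failure can be made visible before the model sees it. The names `ToolResult`, `call_tool_safely`, and `context_for_model` are hypothetical and not part of any particular framework; the sketch assumes a tool is simply a function that takes a query string and returns text.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ToolResult:
    """Outcome of a tool call, with failure made explicit (hypothetical type)."""
    ok: bool
    data: Optional[str] = None
    error: Optional[str] = None


def call_tool_safely(tool: Callable[[str], str], query: str) -> ToolResult:
    """Run a tool and report failure explicitly instead of passing on nothing."""
    try:
        data = tool(query)
    except Exception as exc:  # any tool error becomes a visible failure
        return ToolResult(ok=False, error=f"{type(exc).__name__}: {exc}")
    if not data or not data.strip():
        # An empty result is treated as a failure rather than silently accepted.
        return ToolResult(ok=False, error="tool returned no data")
    return ToolResult(ok=True, data=data)


def context_for_model(result: ToolResult) -> str:
    """Build the text handed to the model, stating the gap when there is one."""
    if result.ok:
        return f"TOOL DATA:\n{result.data}"
    return (
        f"TOOL FAILED: {result.error}\n"
        "Do not guess. Tell the user the lookup failed."
    )
```

The design choice is that the model never receives an empty context after a failure: it is told the lookup failed, which gives it something to report instead of a gap to fill.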

These root causes show why strong AI governance and risk management frameworks are essential.

How AI Systems Reveal Hallucination Risks and the Need for Accuracy Controls

Different AI systems, especially widely used tools like ChatGPT, show how hallucinations occur and why accuracy safeguards are essential for trustworthy AI systems.

1. General AI Systems Like ChatGPT

General-purpose AI models such as ChatGPT often demonstrate how quickly hallucinations can appear when the system lacks reliable information.

When asked for factual summaries or structured details, these models may generate responses that sound fluent but include incorrect or unverifiable claims.

This is why teams increasingly rely on AI hallucination detection techniques and stronger AI validation and verification workflows when using conversational AI in real tasks.

2. Anti-Hallucination Optimized Systems

Some AI systems are designed with stricter factual controls to reduce hallucinations.

These systems focus on accuracy by:

- Verifying information against multiple credible sources

- Refusing to answer when facts cannot be confirmed

- Returning statements like “Not enough sources found” instead of guessing

These safeguards directly improve AI truthfulness, AI reliability, and AI model transparency, helping organizations build more trustworthy AI systems.
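
The refuse-instead-of-guess behaviour described above can be sketched in a few lines of Python. This is an illustrative assumption, not how any specific product works: the `Evidence` type, the `answer_or_refuse` function, and the threshold of two sources are all hypothetical, and the hard part in practice (deciding whether a source really supports a claim) is assumed to have happened already.

```python
from dataclasses import dataclass

MIN_SOURCES = 2  # assumed threshold; the article only says "multiple"


@dataclass(frozen=True)
class Evidence:
    source: str     # e.g. a domain or document ID
    supports: bool  # whether this source backs the claim


def answer_or_refuse(claim: str, evidence: list[Evidence],
                     min_sources: int = MIN_SOURCES) -> str:
    """Return the claim with its sources, or refuse if too few confirm it."""
    # Count distinct supporting sources so one source quoted twice
    # is not double-counted.
    supporting = {e.source for e in evidence if e.supports}
    if len(supporting) < min_sources:
        return "Not enough sources found"
    cited = ", ".join(sorted(supporting))
    return f"{claim} (confirmed by: {cited})"
```

For example, a claim backed only by `a.example` (even if quoted twice) would be refused, while the same claim backed by both `a.example` and `c.example` would be returned with both sources listed.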

3. AI Systems in Hiring and Screening

AI-powered hiring tools, such as resume screening systems, also show why accuracy matters.

A single hallucinated detail can influence:

- Candidate fairness

- Screening accuracy

- Recruiter decisions

How to Reduce AI Hallucinations

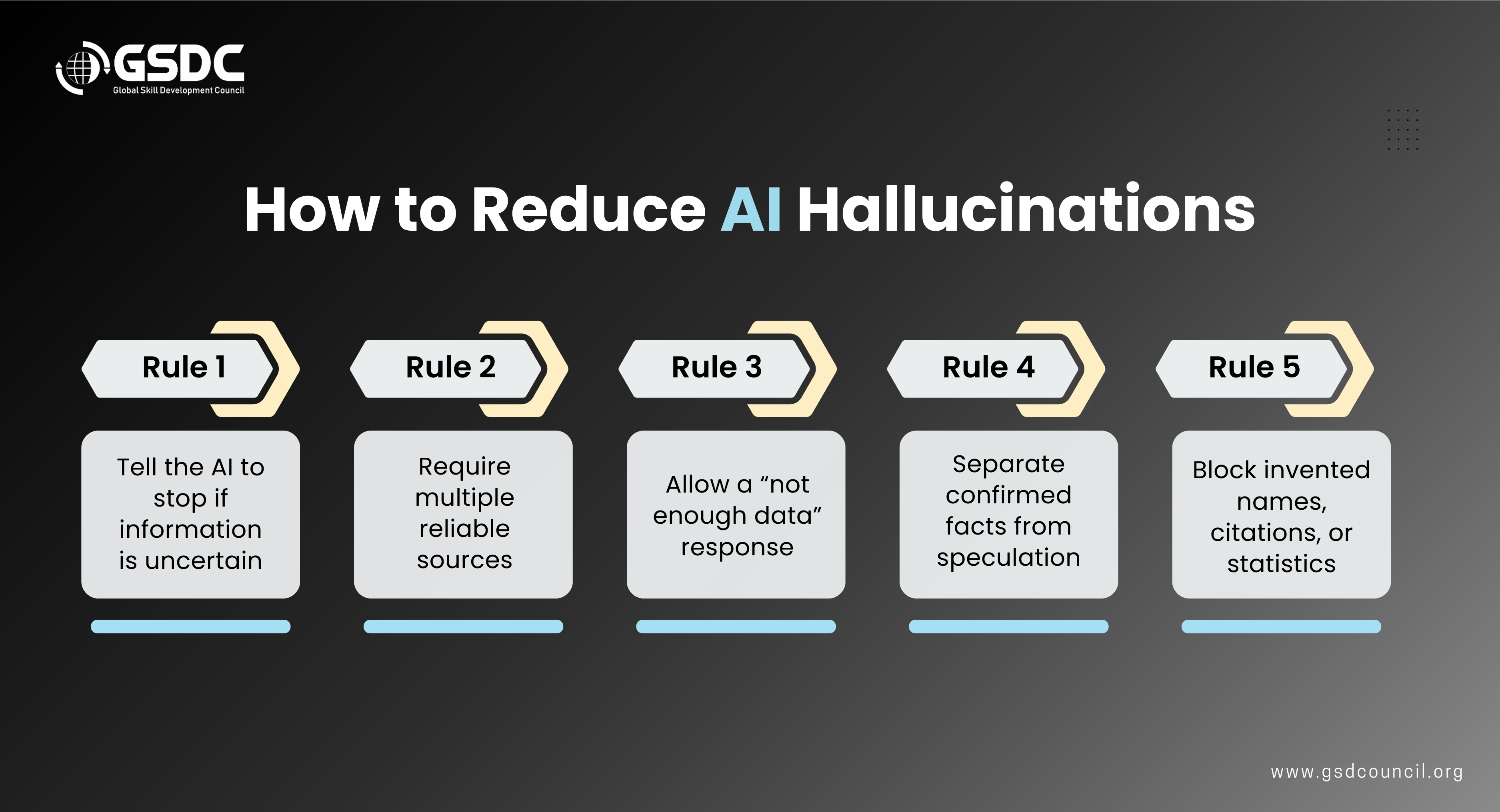

Reducing AI hallucinations isn’t just a technical challenge. It’s a process of teaching AI how to respond responsibly. Here are practical rules that help improve AI accuracy, AI truthfulness, and overall reliability.

Rule 1: Tell the AI to stop if information is uncertain

When a model is instructed not to guess, it is less likely to generate fabricated details, which keeps it in line with trustworthy AI practices.

Rule 2: Require multiple reliable sources

Asking AI to confirm information using at least three independent sources strengthens AI validation and verification.

Rule 3: Allow a “not enough data” response

AI should be allowed to say, “I don’t know.” This is essential for AI model transparency and prevents false confidence.

Rule 4: Separate confirmed facts from speculation

Requesting a clear distinction between verified facts and unverified assumptions helps maintain AI truthfulness.

Rule 5: Block invented names, citations, or statistics

Preventing the model from creating fictional data reduces hidden risks and strengthens responsible AI development.

Applying these simple rules makes AI outputs safer, clearer, and more aligned with AI governance and risk management principles.
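
One way to apply the five rules consistently is to encode them once as a reusable instruction block. The Python sketch below is only an illustration: the `RULES` wording and the `build_system_prompt` helper are assumptions, not a standard API, and instructions like these lower the chance of hallucination without guaranteeing it.

```python
# Each entry mirrors one of the five rules above; the wording is illustrative.
RULES = [
    "If you are uncertain about any fact, stop and say so instead of guessing.",
    "Only state a fact if at least {min_sources} independent, reliable sources support it.",
    'If you lack sufficient information, reply exactly: "Not enough data."',
    'Label every statement as either "Confirmed" or "Speculation".',
    "Never invent names, citations, or statistics.",
]


def build_system_prompt(min_sources: int = 3) -> str:
    """Return a numbered instruction block to prepend to a model conversation."""
    lines = ["Follow these rules when answering:"]
    for number, rule in enumerate(RULES, start=1):
        # Only the second rule contains a placeholder; format() leaves the rest unchanged.
        lines.append(f"{number}. {rule.format(min_sources=min_sources)}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_system_prompt())
```

The resulting text would typically be passed as the system or first message of whichever model interface is in use. Model outputs should still be checked by a person or a separate verification step.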

Why Businesses Must Care

AI hallucinations aren’t just technical issues. They create real business risks.

- Reputational Loss: One inaccurate AI-generated statement can damage public trust.

- Legal Exposure: Fabricated facts can violate compliance rules and lead to penalties.

- Operational Waste: Teams spend time correcting errors instead of making progress.

- Safety Risks: In regulated sectors, incorrect AI output can cause real-world harm.

This is why every organization needs strong AI governance and risk management.

Strengthen Your AI Foundation With Ethical, Accurate Practices

As AI becomes part of everyday workflows, professionals need a clear understanding of AI accuracy, AI ethics, and how to prevent AI hallucinations. Even good tools work well only when their users understand reliability, truthfulness, and ethical AI practices.

The Global Skill Development Council (GSDC) supports this need through the GSDC Certified AI Tool Expert, which teaches how AI models work, why hallucinations occur, and how to build trustworthy AI systems. It’s a strong starting point for anyone who wants to use AI responsibly and create safer, more reliable workflows.

Conclusion: The Path Toward Dependable, Non-Hallucinating AI

Before AI can be considered truly reliable, it must be built on systems designed with accuracy, transparency, and strong validation at their core. Companies that emphasise accuracy now will be better placed to manage risk and keep their users’ trust as regulations and requirements for trustworthy AI increase.

Still, human input remains indispensable, even with sophisticated models. As experts learn how hallucinations arise and apply responsible AI practices, they help make AI a reliable partner, one that supports decision-making rather than complicating it and so reduces uncertainty.
