Safe Generative AI Use for Managers Without Data Risks

Written by Rashmi Nadig

- AI Is Already in the Workplace: Approved or Not

- Common AI Mistakes Managers Make

- The Real Risks of Unsafe AI Use

- AI Does Not Replace Privacy Laws

- A Simple Pause That Prevents Most Problems

- What Managers Can Safely Use AI For

- Turning Unsafe Prompts into Safe Ones

- What Managers Should Avoid Using AI For

- Why Human Oversight Is Non-Negotiable

- Practical Safeguards That Work Everywhere

- Generative AI in Risk and Management Certification

- Conclusion

Generative AI has quietly become an integral part of everyday management work, which is one reason it matters so much in modern organizations. Managers increasingly rely on it to draft emails, summarise reports, refine tone, structure presentations, and reduce time spent on administrative tasks.

While this shift has happened naturally and often informally, it raises a critical question: not whether AI should be used, but how to use generative AI responsibly without exposing the organization to unnecessary data risks. AI tools are powerful productivity enablers, yet they have no awareness of privacy laws, confidentiality obligations, or organizational boundaries, which makes data risk management a human responsibility. Without clear guardrails, even well-intentioned usage can introduce serious data governance risks, leading to accidental breaches, regulatory challenges, and erosion of trust.

The encouraging reality is that managing these data risks does not require complex frameworks or heavy documentation. By adopting simple, consistent practices, organizations can ensure the responsible use of generative AI while maintaining efficiency and innovation.

Building awareness of data governance risks, reinforcing safe usage habits, and embedding data risk management into daily workflows allows businesses to strike the right balance: getting the full benefit of generative AI while safeguarding sensitive information.

AI Is Already in the Workplace: Approved or Not

Whether or not organisations have formally approved AI tools, those tools are already being used, and concerns about generative AI are growing across workplaces as a result. Managers frequently depend on these tools to accelerate everyday tasks such as drafting, editing, or structuring information. This widespread use often happens informally and without documentation, which makes it harder to ensure that generative AI is used safely.

This lack of visibility is where risk begins. When no one knows what information was shared, which tool processed it, or whether personal or confidential data was involved, accountability becomes extremely difficult. From a data protection standpoint, invisible AI usage is usage that cannot be justified or defended, and that amplifies generative AI security risks.

The reality is that data protection laws already apply, regardless of how new the technology may seem. Whether it is GDPR, CCPA, LGPD, PDPA, or another privacy framework, the core principles remain consistent. AI does not exist outside these regulations simply because it is innovative. This is why addressing generative AI concerns through structured practices is essential.

Common AI Mistakes Managers Make

Most AI-related data protection issues are not intentional. They stem from everyday actions that overlook the safe use of generative AI. Some of the most common mistakes include:

- Copying sensitive complaints, HR records, or performance data into AI tools

- Uploading documents containing names, contact details, health data, or investigation details

- Assuming widely used AI tools are private by default

- Trusting AI-generated outputs without proper review or validation

- Skipping initial risk checks when adopting new tools, which increases generative AI security risks

While these actions may seem harmless, they can lead to serious consequences. Once sensitive data leaves a controlled environment, it is often impossible to retrieve or secure it. That makes proactive awareness of generative AI concerns, and consistent safe use, critical for every organization.

The Real Risks of Unsafe AI Use

The consequences of unsafe AI use are not theoretical. They include:

- Accidental data breaches and unauthorised disclosures

- Complaints or investigations by data protection authorities

- Loss of trust from employees, customers, and partners

- Reputational damage that takes far longer to repair than the time saved

AI tools can process information instantly, but the impact of a mistake can last for years. That is why prevention matters more than perfection. Organisations do not need flawless AI use; they need consistently careful behaviour.

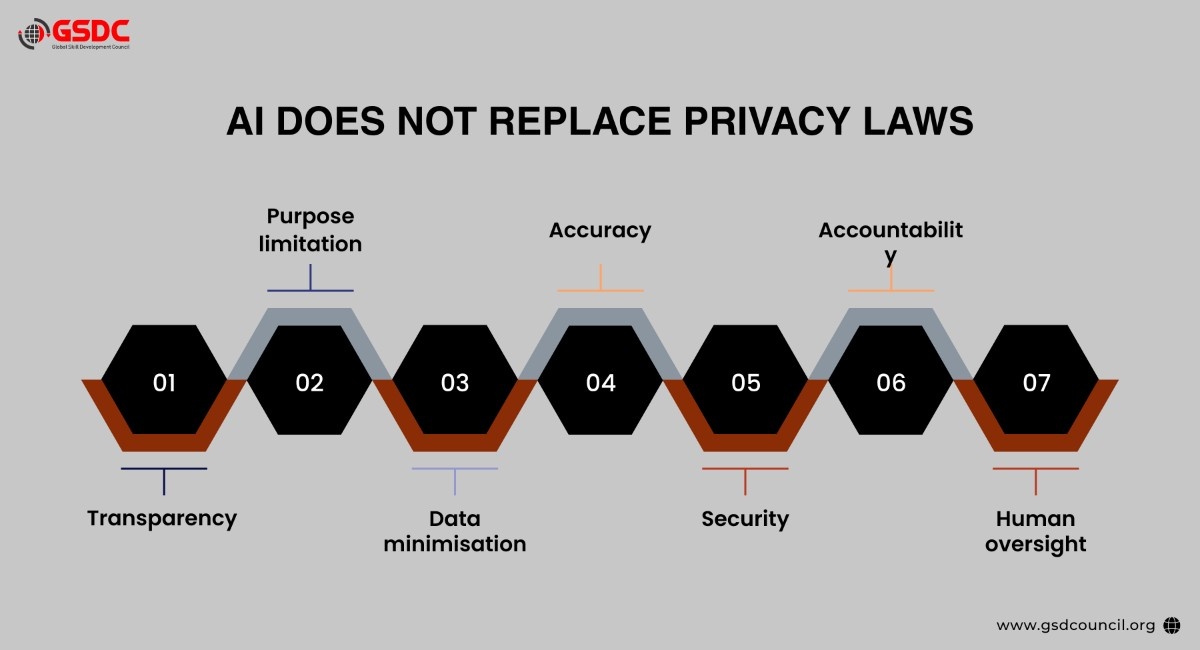

AI Does Not Replace Privacy Laws

A common myth is that AI operates outside privacy regulations. This is incorrect. If personal data is involved, data protection laws apply regardless of how advanced or widely used the tool is.

Across global privacy frameworks, the same foundational principles apply:

- Transparency

- Purpose limitation

- Data minimisation

- Accuracy

- Security

- Accountability

- Human oversight

AI must operate within these principles, not override them. Managers can use these principles as a simple checklist before engaging with any AI tool.

A Simple Pause That Prevents Most Problems

Before using AI, managers should pause for a few seconds and ask themselves:

- Am I using personal or confidential data here?

- Is this information sensitive or high-risk?

- Do I actually need AI for this task, or am I using it purely for convenience?

- Would I be comfortable explaining this use to a regulator?

This brief pause prevents most AI-related data protection issues. AI is not simple automation. Unlike a photocopier that reproduces exactly what it is given, a generative AI tool produces outputs based on patterns, assumptions, and probabilities, and depending on the provider's settings, what you enter may be retained or used to train future models. That difference matters.

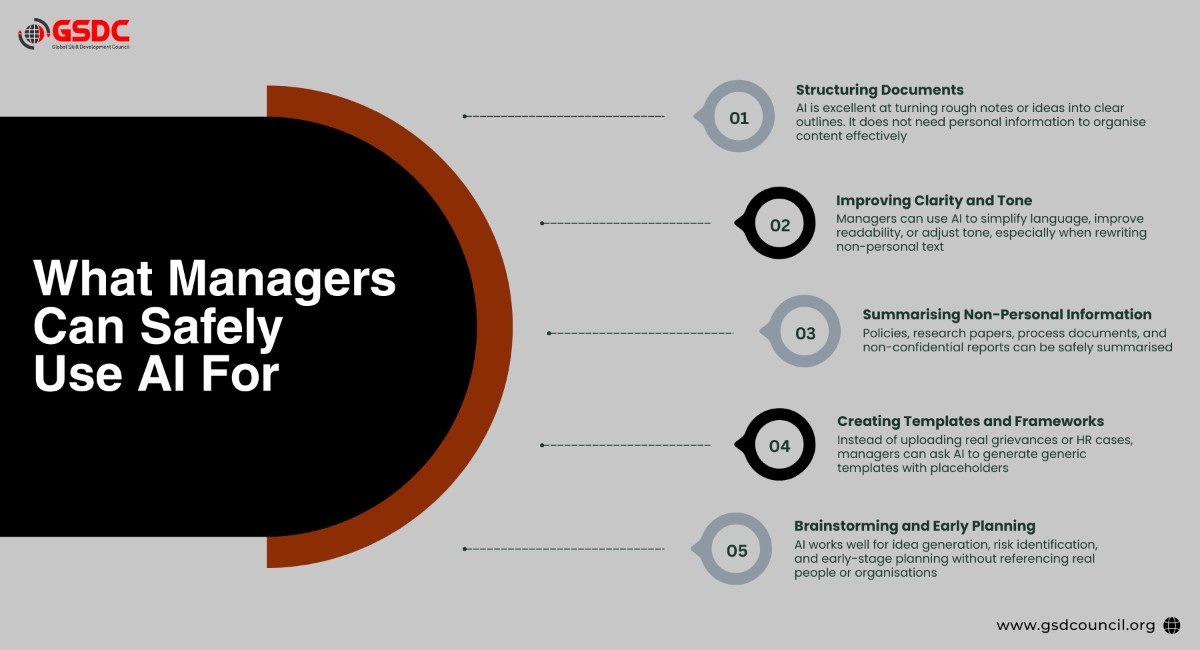

What Managers Can Safely Use AI For

When used correctly, AI is a highly effective management tool, especially when supported by strong generative AI risk management practices. The level of risk remains extremely low when no personal or confidential data is included in prompts, making generative AI data security easier to maintain while still benefiting from efficiency gains.

- Structuring Documents: AI is excellent at turning rough notes or ideas into clear, well-organised outlines. It does not require personal information to deliver value, helping reduce data governance risks.

- Improving Clarity and Tone: Managers can use AI to simplify language, enhance readability, or refine tone when working with non-personal content, which supports safer data risk management.

- Summarising Non-Personal Information: Policies, research papers, process documents, and other non-confidential materials can be summarised without compromising generative AI data security.

- Creating Templates and Frameworks: Instead of uploading real grievances or HR cases, managers can ask AI to generate generic templates with placeholders, a crucial step in managing generative AI risk.

- Brainstorming and Early Planning: AI is highly effective for idea generation, risk identification, and early-stage planning without referencing real individuals or organisations, thereby minimizing data governance risks.

The guiding rule is simple: no personal data equals very low risk. By embedding this principle into daily workflows, organizations can strengthen data risk management while ensuring responsible and secure use of AI.

Turning Unsafe Prompts into Safe Ones

Unsafe prompts often include identifiable details. For example:

- Asking AI to draft a performance warning for a named employee

- Requesting a summary of a specific customer complaint with order numbers

Safe prompts remove identifiers and use placeholders instead. For example:

- “Create a neutral template for addressing missed deadlines, with placeholders for names and dates.”

- “Generate a generic complaint response template with follow-up steps.”

This approach keeps managers in control of personal data while still benefiting from AI assistance.
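For teams that want a mechanical safety net on top of this habit, the placeholder idea can be sketched in a few lines of Python. This is a minimal illustration, not a complete de-identification tool: the `redact` function, the placeholder labels, and the regular expressions are all assumptions made for this example, and simple patterns like these will miss many kinds of identifiers. A human review of the prompt is still needed.

```python
import re

# Hypothetical, deliberately crude patterns for illustration only.
# Order matters: emails and order numbers are replaced before phone numbers.
PATTERNS = {
    "[EMAIL]": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "[ORDER_NUMBER]": re.compile(r"\b(?:order|ref)\s*#?\s*\d{4,}\b", re.IGNORECASE),
    "[PHONE]": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text, known_names=()):
    """Replace obvious identifiers in a draft prompt with placeholders."""
    for placeholder, pattern in PATTERNS.items():
        text = pattern.sub(placeholder, text)
    # Names cannot be found reliably by pattern, so the caller lists them.
    for name in known_names:
        text = re.sub(re.escape(name), "[NAME]", text, flags=re.IGNORECASE)
    return text


draft = "Draft a warning for Jane Doe (jane.doe@example.com) about order #123456."
print(redact(draft, known_names=["Jane Doe"]))
```

The manager fills the placeholders back in after the AI returns its draft, so the real names and numbers never leave the organisation.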

What Managers Should Avoid Using AI For

Managers should not use AI to:

- Process personal, health, HR, or investigation data

- Handle identifiable customer or employee information

- Make automated decisions that affect individuals

- Onboard new AI tools without proper checks

Before introducing any AI tool, especially one that touches personal data, organisations should ensure:

- A Data Protection Impact Assessment (DPIA) is completed

- A Data Processing Agreement (DPA) is in place

- There is clarity on data storage, access, and retention

- The tool does not use prompts or documents for model training without explicit approval

If these safeguards are missing, the tool should not handle sensitive information.
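The four safeguards listed above can also be tracked as a simple record per tool. The sketch below is one possible way to do that; the `ToolSafeguards` class, its field names, and the example tool are hypothetical and exist only to illustrate the rule that a tool with any missing safeguard should not handle sensitive information.

```python
from dataclasses import dataclass, asdict


@dataclass
class ToolSafeguards:
    """Hypothetical record of the onboarding checks for one AI tool."""
    dpia_completed: bool
    dpa_in_place: bool
    storage_access_retention_clear: bool
    no_training_without_approval: bool


def missing_safeguards(tool):
    """Return the names of safeguards that are not yet in place."""
    return [name for name, ok in asdict(tool).items() if not ok]


def may_handle_sensitive_data(tool):
    """A tool qualifies only when every safeguard is in place."""
    return not missing_safeguards(tool)


# Example: a tool with a completed DPIA but no signed DPA yet.
candidate = ToolSafeguards(
    dpia_completed=True,
    dpa_in_place=False,
    storage_access_retention_clear=True,
    no_training_without_approval=True,
)
print(may_handle_sensitive_data(candidate))
print(missing_safeguards(candidate))
```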

Why Human Oversight Is Non-Negotiable

AI can sound confident and still be wrong. It can hallucinate, inherit bias, rely on outdated information, or miss local policy context. Managers must treat AI outputs as drafts, not decisions.

AI should support thinking, not replace it. Humans remain responsible for judgment, context, and outcomes. When something goes wrong, regulators do not question the AI; they question the people who used and approved it.

Accountability always stays with humans.

Practical Safeguards That Work Everywhere

Effective AI governance does not require heavy paperwork. Practical habits include:

- Keeping prompts generic and de-identified

- Reviewing and sense-checking all outputs

- Logging high-risk AI use

- Consulting data protection teams early

- Maintaining clear lists of approved tools

One simple rule solves most problems:

If you would not email something outside your organisation, do not put it into AI.
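Two of the habits above, maintaining a list of approved tools and logging AI use, are easy to combine in a lightweight record. The following sketch assumes an in-memory list as the log and a made-up tool name; `APPROVED_TOOLS`, `log_ai_use`, and the entry fields are illustrative choices, not a prescribed format.

```python
from datetime import datetime, timezone

# Hypothetical approved-tool list; a real one would come from the
# organisation's data protection team.
APPROVED_TOOLS = {"example-assistant"}


def log_ai_use(log, tool, purpose, contains_personal_data):
    """Append one usage record and flag whether the use fits the rules."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "tool": tool,
        "purpose": purpose,
        "contains_personal_data": contains_personal_data,
        # Allowed only if the tool is approved and no personal data is used.
        "allowed": tool in APPROVED_TOOLS and not contains_personal_data,
    }
    log.append(entry)
    return entry


usage_log = []
entry = log_ai_use(usage_log, "example-assistant", "summarise public policy", False)
print(entry["allowed"])
```

Even a record this small answers the questions a regulator is likely to ask: which tool was used, for what purpose, and whether personal data was involved.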

Generative AI in Risk and Management Certification

The AI Risk Management Certification by GSDC equips AI managers and business leaders with the practical skills needed to use AI responsibly while managing data and compliance risks.

It helps professionals understand how to integrate AI into decision-making without compromising security, ensuring a balance between innovation and governance.

Key benefits of Generative AI in Risk and Compliance Certification include the ability to identify and mitigate AI-related risks, strengthen data governance and regulatory compliance, and make faster, insight-driven decisions with confidence.

Conclusion

Generative AI is not the enemy; uncontrolled use is. When managers understand the boundaries, AI becomes a powerful asset rather than a liability. Used responsibly, it enables faster drafting, clearer communication, and better structure without exposing personal data or creating organisational risk.

Safe AI use is not about slowing innovation. It is about protecting people, trust, and the organisation while embracing the benefits of modern technology. With clear guardrails, human oversight, and common sense, managers can use AI confidently, ethically, and safely.
