DPIAs Made Practical: Managing Privacy Risks in Real Projects

Written by Rashmi Nadig

- What Is a DPIA?

- Is a DPIA Only a GDPR Requirement?

- DPIA vs. Security Risk Assessment: Key Differences Between Privacy Risk and Security Risk

- When Do You Need a DPIA?

- Practical DPIA Process for Managing Privacy Risks in Real-World Projects

- Why DPIAs Are Especially Important for AI and Responsible AI Risk Management

- Common DPIA Mistakes That Increase Privacy and Compliance Risk

- Real-World Examples Where DPIAs Are Needed

- How DPIAs Help Organisations

- Building Capability for Responsible AI

- Conclusion: Make DPIAs Part of Everyday Project Planning

- FAQs

As organisations rapidly adopt AI tools, cloud platforms, automation, and digital systems, the way we handle personal data is changing faster than ever. New technology brings efficiency and innovation, but it also introduces new privacy risks.

This is where Data Protection Impact Assessments (DPIAs) become essential.

Many teams still see DPIAs as legal paperwork or a GDPR formality. In reality, a DPIA is a practical risk assessment that helps organisations use data responsibly, protect individuals, and avoid costly mistakes. This article explains what a DPIA is, when it is needed, and how to use it effectively in real-world projects.

What Is a DPIA?

A Data Protection Impact Assessment (DPIA) is a simple way to check privacy risks before starting (or changing) a project that uses personal data. It helps teams understand what data is used, what risks it may create for people, and what safeguards are needed to keep that data safe. The goal is not to block projects, but to ensure new systems and tools are designed responsibly from the start.

Is a DPIA Only a GDPR Requirement?

No. While the term “DPIA” comes from the GDPR, the idea of assessing privacy risks before launching high-risk projects exists globally. Under GDPR, organisations must carry out a DPIA when processing is likely to result in a high risk to individuals’ rights and freedoms.

Different regions use different terms for similar assessments, such as:

- Privacy Impact Assessment (PIA)

- Risk Assessment

- Data Protection Assessment

The core principle is the same everywhere: if a project can significantly affect people’s data or rights, the risks should be assessed before go-live. This is especially important for projects involving AI, cloud services, monitoring tools, or large-scale processing.

DPIA vs. Security Risk Assessment: Key Differences Between Privacy Risk and Security Risk

| Aspect | DPIA (Data Protection Impact Assessment) | Security Risk Assessment |
|---|---|---|
| Primary focus | Impact on individuals and their rights | Technical risks to systems and data |
| Main objective | Protect privacy and prevent harm to people | Protect systems from attacks and breaches |
| Typical risks assessed | Unfair treatment, loss of privacy, misuse of personal data, and lack of transparency | Hacking, malware, vulnerabilities, unauthorised access |
| Perspective | Human and regulatory perspective | Technical and operational perspective |
| Regulatory driver | Data protection and privacy laws | Information security standards and policies |
| Key stakeholders involved | Privacy, legal, compliance, and business teams | IT, security, and infrastructure teams |
| Outcome | Safeguards to protect individuals’ rights and freedoms | Controls to strengthen system and data security |

As organisations embed AI into core operations, foundational learning through programmes such as the Data Protection Officer Certification supports a better understanding of privacy, data protection, and responsible AI practices.

When Do You Need a DPIA?

A DPIA is needed when a project is high-risk for individuals. Common triggers include:

- Use of AI or automated decision-making

- Monitoring employees or customers

- Processing sensitive data (health, biometrics, children’s data)

- Large-scale data processing

- Profiling or behaviour tracking

- Cross-border data transfers

- New or intrusive technologies

If you’re unsure, a short screening questionnaire can help decide whether a full DPIA is required.
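A screening questionnaire like this can be kept as structured data so the decision is repeatable and auditable. The sketch below is a minimal illustration, assuming one yes/no flag per trigger listed above; the class and field names are hypothetical, and the default threshold of one trigger simply mirrors the "if any answer is yes" rule of thumb used in this article.

```python
from dataclasses import dataclass, fields


@dataclass
class ScreeningAnswers:
    """One yes/no flag per common DPIA trigger (hypothetical field names)."""
    uses_ai_or_automated_decisions: bool = False
    monitors_employees_or_customers: bool = False
    processes_sensitive_data: bool = False  # health, biometrics, children's data
    large_scale_processing: bool = False
    profiling_or_tracking: bool = False
    cross_border_transfers: bool = False
    new_or_intrusive_technology: bool = False


def triggered_criteria(answers: ScreeningAnswers) -> list[str]:
    """Return the name of every trigger answered 'yes', in declaration order."""
    return [f.name for f in fields(answers) if getattr(answers, f.name)]


def needs_full_dpia(answers: ScreeningAnswers, threshold: int = 1) -> bool:
    """Recommend a full DPIA once `threshold` triggers are met.

    The default of 1 mirrors the "if any answer is yes" rule of thumb;
    adjust it if your own policy says otherwise.
    """
    return len(triggered_criteria(answers)) >= threshold


# Usage: an AI chatbot handling customer data at scale
chatbot = ScreeningAnswers(uses_ai_or_automated_decisions=True,
                           large_scale_processing=True)
print(triggered_criteria(chatbot))
# ['uses_ai_or_automated_decisions', 'large_scale_processing']
print(needs_full_dpia(chatbot))  # True
```

Recording which triggers fired, not just the final yes/no, gives reviewers the reasoning behind the screening decision.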

As organisations build internal capability to manage such risks, initiatives supported by bodies such as the Global Skill Development Council (GSDC) help improve awareness of privacy, data protection, and the responsible use of AI in practice.

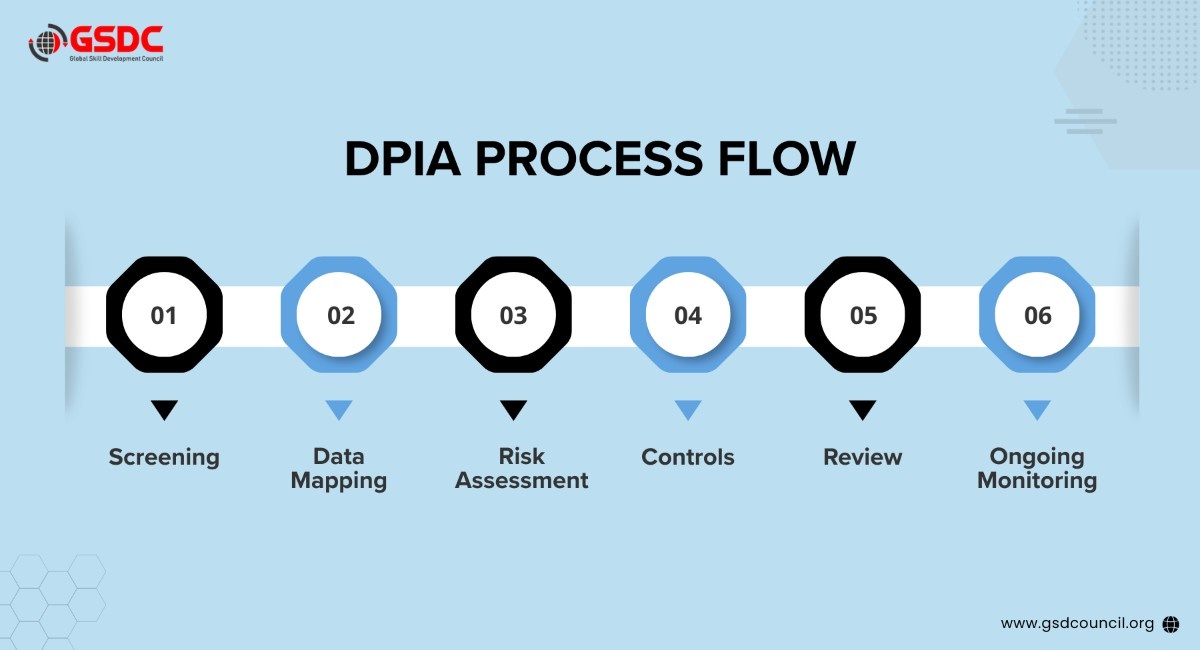

Practical DPIA Process for Managing Privacy Risks in Real-World Projects

A DPIA does not need to be complicated or highly technical. Done in a structured, practical way, it becomes a useful guide to design safer systems from the beginning.

1. Quick Screening

Start with a brief screening to decide whether a full DPIA is required. Ask simple questions:

- Does this project use personal data?

- Could it significantly affect individuals’ rights or freedoms?

If the project uses personal data and could significantly affect individuals, carry out a full DPIA. This avoids unnecessary work on low-risk projects while ensuring high-risk initiatives are properly assessed.

2. Describe the Project

Document what the system, tool, or process does; who it’s for; and what problem it solves. Explain why personal data is needed and the business outcome. A clear description keeps everyone aligned.

3. Map the Data Flow

Identify how data moves through the system:

- Where data is collected from

- Who can access it (internal and external)

- Where it is stored and processed

- Whether it is shared with vendors or transferred across borders

Data-flow mapping often reveals hidden risks (unexpected sharing, unnecessary retention, unclear vendor roles).
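A data-flow map can be recorded as a list of simple records, which makes the hidden risks mentioned above easy to flag automatically. This is a minimal sketch under stated assumptions: the `DataFlow` fields, the `HOME_REGION` value, and the wording of the flags are all hypothetical, and a real map would usually carry more detail (legal basis, vendor contract reference, and so on).

```python
from dataclasses import dataclass
from typing import Optional

HOME_REGION = "UK"  # assumption: the jurisdiction the organisation operates in


@dataclass(frozen=True)
class DataFlow:
    """One hop of personal data through the system (hypothetical fields)."""
    data_category: str             # e.g. "customer email"
    source: str                    # where the data is collected from
    destination: str               # where it is stored or processed
    external_recipient: bool       # shared with a vendor or other third party?
    destination_region: str        # where the destination is located
    retention_days: Optional[int]  # None means no retention period is defined


def flag_flow(flow: DataFlow) -> list[str]:
    """Return the hidden-risk flags this flow raises."""
    flags = []
    if flow.external_recipient:
        flags.append("external sharing: confirm vendor role and contract")
    if flow.destination_region != HOME_REGION:
        flags.append("cross-border transfer: check transfer safeguards")
    if flow.retention_days is None:
        flags.append("no retention period defined")
    return flags


flows = [
    DataFlow("customer email", "web form", "vendor-hosted CRM", True, "US", None),
    DataFlow("order history", "checkout", "internal database", False, "UK", 365),
]
for flow in flows:
    print(flow.data_category, "->", flag_flow(flow))
```

Each flag is a prompt for a human decision, not a verdict: a flagged transfer may be perfectly lawful once the right safeguards are documented.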

4. Identify Privacy and Security Risks

Assess what could go wrong for individuals if something fails or is misused. Typical risks:

- Misuse or unauthorised access

- Breaches or leaks

- Unfair or incorrect automated decisions

- Lack of transparency or control for users

- Unexpected third-party sharing
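Once risks are listed, teams often rate each one by how likely the harm is and how severe it would be for the individual. The sketch below assumes three-point likelihood and severity scales and multiplies them into a score; the scale labels, the multiplication, and the low/medium/high bands are illustrative assumptions, so substitute your organisation's own risk method.

```python
from dataclasses import dataclass

# Assumed three-point scales; adapt to your organisation's own risk method.
LIKELIHOOD = {"remote": 1, "possible": 2, "probable": 3}
SEVERITY = {"minimal": 1, "significant": 2, "severe": 3}


@dataclass
class PrivacyRisk:
    description: str
    likelihood: str  # key of LIKELIHOOD
    severity: str    # key of SEVERITY (harm to the individual, not the business)

    def score(self) -> int:
        return LIKELIHOOD[self.likelihood] * SEVERITY[self.severity]

    def rating(self) -> str:
        # Assumed bands: scores 1-2 low, 3-4 medium, 6-9 high.
        score = self.score()
        if score >= 6:
            return "high"
        if score >= 3:
            return "medium"
        return "low"


register = [
    PrivacyRisk("Unexpected third-party sharing", "remote", "significant"),
    PrivacyRisk("Unfair or incorrect automated decisions", "possible", "severe"),
    PrivacyRisk("Breaches or leaks", "possible", "significant"),
]
# Highest-scoring risks first, so mitigation effort goes where it matters most.
for risk in sorted(register, key=PrivacyRisk.score, reverse=True):
    print(f"{risk.rating():<6} {risk.score()}  {risk.description}")
```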

5. Define Controls and Mitigations

Put practical safeguards in place, e.g.:

- Limit data to what is strictly necessary

- Apply role-based access and least privilege

- Select vendors with strong privacy and security practices

- Disable model training on personal data where possible

- Add human review for AI-generated outputs

Build these safeguards into the design before go-live, not as a later patch.
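"Limit data to what is strictly necessary" can be enforced in code with a per-purpose allowlist, so each use of the data only ever sees the fields it needs. This is a minimal sketch, assuming a hypothetical `ALLOWED_FIELDS` mapping maintained alongside the DPIA; the purposes and field names are illustrative only.

```python
# Hypothetical mapping from processing purpose to the fields it genuinely needs.
ALLOWED_FIELDS = {
    "order_support": {"customer_id", "order_id", "email"},
    "analytics": {"order_id", "order_total", "region"},
}


def minimise(record: dict, purpose: str) -> dict:
    """Keep only the fields approved for `purpose`.

    An unknown purpose gets an empty record, so new uses of the data
    fail closed until someone approves them.
    """
    allowed = ALLOWED_FIELDS.get(purpose, set())
    return {key: value for key, value in record.items() if key in allowed}


customer = {
    "customer_id": 42,
    "email": "a.person@example.com",
    "date_of_birth": "1990-01-01",
    "order_id": "A17",
    "order_total": 30.0,
    "region": "EU",
}
print(minimise(customer, "analytics"))
# {'order_id': 'A17', 'order_total': 30.0, 'region': 'EU'}
```

Failing closed for unknown purposes is a deliberate design choice: a new use of personal data should prompt a DPIA update rather than silently receive everything.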

6. Review and Approve

Privacy, legal, information security, and relevant business leads review the DPIA. This confirms whether risks are mitigated and whether the project can proceed safely. Senior sign-off may be needed where residual risk remains.
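The review step can be expressed as a simple gate: every required reviewer signs off, and any high residual risk is escalated. The sketch below is a hypothetical policy, assuming the reviewer roles named in this step and "low"/"medium"/"high" residual ratings; it is not a prescribed approval rule.

```python
# Assumed reviewer roles, taken from the review step described above.
REQUIRED_REVIEWERS = frozenset({"privacy", "legal", "information security", "business lead"})


def approval_decision(residual_ratings: list[str], signed_off: set[str]) -> str:
    """Decide whether the project can proceed (hypothetical policy).

    residual_ratings: "low" / "medium" / "high" rating of each risk after mitigations
    signed_off: reviewer roles that have reviewed and signed off the DPIA
    """
    missing = REQUIRED_REVIEWERS - signed_off
    if missing:
        return "pending: awaiting " + ", ".join(sorted(missing))
    if "high" in residual_ratings:
        # Assumption: any high residual risk needs senior sign-off before go-live.
        return "escalate: senior sign-off required"
    return "approved"


print(approval_decision(["low", "medium"], {"privacy", "legal"}))
# pending: awaiting business lead, information security
print(approval_decision(["low", "high"], set(REQUIRED_REVIEWERS)))
# escalate: senior sign-off required
```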

7. Keep It Updated

A DPIA is a living document. Review it when systems change, new features are added, vendors change, or AI models are updated. This maintains ongoing accountability.
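Review triggers can be checked against a project's changelog so that a DPIA update is not forgotten. This minimal sketch assumes changes are tagged with a hypothetical `ChangeEvent` value; the trigger set reflects the events named in this article, and `TYPO_FIX` is an invented example of a change that would not normally need a review.

```python
from enum import Enum


class ChangeEvent(Enum):
    SYSTEM_CHANGE = "system changed"
    NEW_FEATURE = "new feature added"
    VENDOR_CHANGE = "vendor changed"
    AI_MODEL_UPDATE = "AI model updated"
    NEW_RISK = "new risk identified"
    TYPO_FIX = "typo fixed in help text"  # hypothetical change that does not trigger review


# Triggers named in this article; extend them to match your own policy.
REVIEW_TRIGGERS = {
    ChangeEvent.SYSTEM_CHANGE,
    ChangeEvent.NEW_FEATURE,
    ChangeEvent.VENDOR_CHANGE,
    ChangeEvent.AI_MODEL_UPDATE,
    ChangeEvent.NEW_RISK,
}


def review_reasons(events: list[ChangeEvent]) -> list[str]:
    """Return why the DPIA needs another look; an empty list means no review is due."""
    return [event.value for event in events if event in REVIEW_TRIGGERS]


changelog = [ChangeEvent.TYPO_FIX, ChangeEvent.AI_MODEL_UPDATE, ChangeEvent.VENDOR_CHANGE]
print(review_reasons(changelog))
# ['AI model updated', 'vendor changed']
```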

Why DPIAs Are Especially Important for AI and Responsible AI Risk Management

AI systems bring distinctive risks:

- AI can produce incorrect or misleading results

- Bias in training data can lead to unfair outcomes

- AI systems may be opaque (limited explainability)

- Vendors may use your inputs to train their models

- People may over-trust AI outputs

A DPIA helps clarify:

- What data goes into AI tools

- How outputs are used

- Who checks results

- How errors and bias are managed

This ensures AI supports people, rather than silently making decisions that affect them.
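The four AI-specific questions above work well as a checklist that must be fully answered before an AI tool goes live. This is a minimal sketch, assuming answers are stored as plain text keyed by question; the storage format and function name are hypothetical.

```python
# The four questions listed above, used as a hypothetical checklist.
AI_DPIA_QUESTIONS = (
    "What data goes into the AI tool?",
    "How are outputs used?",
    "Who checks results?",
    "How are errors and bias managed?",
)


def open_questions(answers: dict[str, str]) -> list[str]:
    """Return the questions that still lack a documented, non-blank answer."""
    return [q for q in AI_DPIA_QUESTIONS if not answers.get(q, "").strip()]


answers = {
    "What data goes into the AI tool?": "Support tickets with names removed",
    "How are outputs used?": "Draft replies for agents",
    "Who checks results?": "  ",  # left blank by mistake
}
print(open_questions(answers))
# ['Who checks results?', 'How are errors and bias managed?']
```

Treating whitespace-only answers as missing catches placeholder entries that would otherwise look complete.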

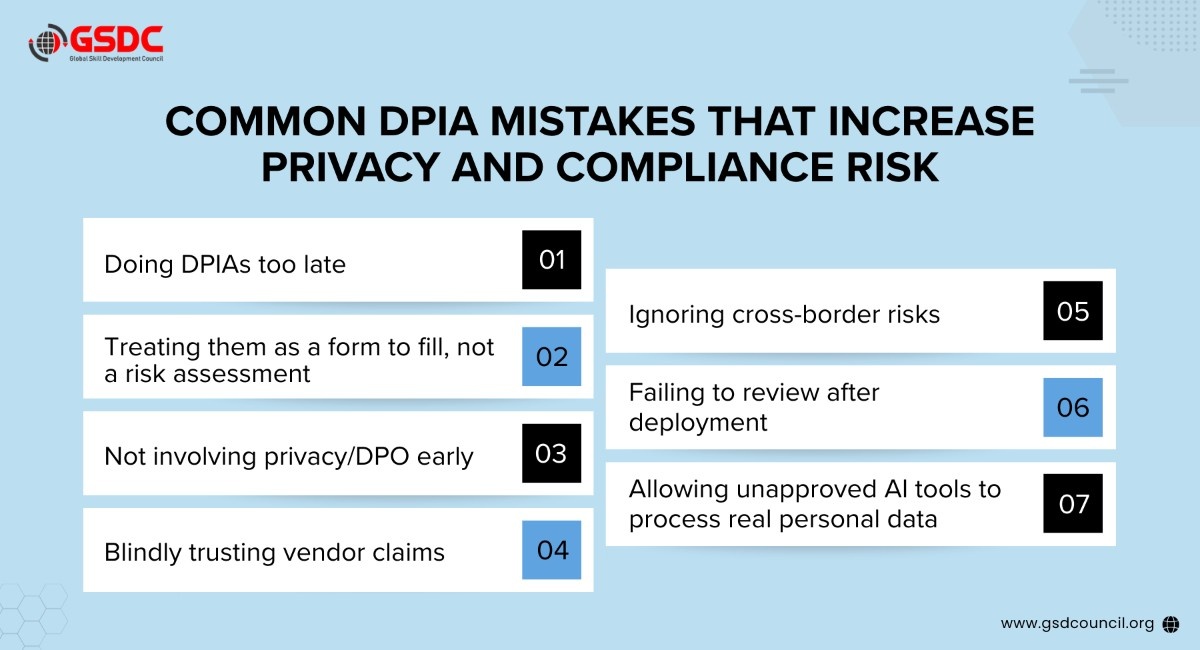

Common DPIA Mistakes That Increase Privacy and Compliance Risk

Real-World Examples Where DPIAs Are Needed

DPIAs are commonly required for AI chatbots handling customer data, CCTV and employee monitoring systems, AI recruitment tools, new CRM platforms, facial recognition, cloud migrations, behavioural analytics, smart buildings/IoT, healthcare risk scoring, and data sharing between organisations.

Rule of thumb: if a system is automated, intrusive, sensitive, large-scale, or cross-border, a DPIA is strongly recommended.

How DPIAs Help Organisations

When done well, DPIAs:

- Protect individuals from harm

- Build trust with customers and employees

- Reduce regulatory and legal risk

- Prevent costly re-work after go-live

- Enable safer and faster innovation

- Demonstrate accountability to regulators and stakeholders

Rather than slowing projects down, DPIAs help teams launch with confidence.

Building Capability for Responsible AI

As organisations scale AI and data-driven systems, managing privacy risk is now a core capability, not merely a compliance task. Effective DPIAs depend on people understanding privacy, governance, and responsible AI, not only on processes.

Support from bodies such as the Global Skill Development Council (GSDC), alongside programmes like the Data Protection Officer Certification, helps build this foundational capability and enables more responsible adoption of AI.

Conclusion: Make DPIAs Part of Everyday Project Planning

DPIAs are not just a compliance requirement; they are a practical way to manage privacy risks in modern digital projects. By starting early and involving the right stakeholders, organisations can prevent issues before they arise, protect individuals’ rights, and enable safer adoption of AI and new technologies. Treated as part of everyday planning, DPIAs help teams innovate with confidence while building trust and accountability.

FAQs

What early checklist should teams use to decide if a DPIA is needed?

Use simple screening questions: Does the project use AI? Does it involve monitoring? Does it process sensitive or large-scale data? Will data be shared externally or across borders? If any answer is yes, a DPIA is likely required.

What are the typical outputs of a DPIA?

A clear project description, mapped data flows, identified risks, mitigation measures, residual risk ratings, documented sign-off for accountability and audit purposes, and defined review triggers.
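Keeping these outputs in one structured record makes it easy to spot gaps before an audit. The sketch below assumes a hypothetical `DpiaRecord` with one field per output listed above; real DPIA tooling would likely store richer types than plain lists and strings.

```python
from dataclasses import dataclass, field, fields


@dataclass
class DpiaRecord:
    """The typical DPIA outputs listed above (hypothetical field names)."""
    project_description: str = ""
    data_flows: list = field(default_factory=list)
    risks: list = field(default_factory=list)
    mitigations: list = field(default_factory=list)
    residual_risk_ratings: dict = field(default_factory=dict)
    sign_off: str = ""  # who approved the DPIA, and when
    review_triggers: list = field(default_factory=list)


def missing_outputs(record: DpiaRecord) -> list[str]:
    """List every output that is still empty, e.g. before an audit."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


draft = DpiaRecord(
    project_description="AI chatbot for customer queries",
    risks=["Incorrect answers about customer accounts"],
    mitigations=["Human review of escalated conversations"],
)
print(missing_outputs(draft))
# ['data_flows', 'residual_risk_ratings', 'sign_off', 'review_triggers']
```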

Is there a standard framework to implement DPIAs?

There is no single global template, but most follow the same structure: screening -> project description -> data mapping -> risk assessment -> mitigation -> approval -> ongoing review.
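That common sequence can be encoded so a tool refuses to start a stage before the earlier ones are done. This is a minimal sketch, assuming the seven stages above map to a hypothetical `Stage` enum and that stages must be completed strictly in order; real projects may loop back, for example from approval to mitigation.

```python
from enum import IntEnum


class Stage(IntEnum):
    SCREENING = 1
    PROJECT_DESCRIPTION = 2
    DATA_MAPPING = 3
    RISK_ASSESSMENT = 4
    MITIGATION = 5
    APPROVAL = 6
    ONGOING_REVIEW = 7


def can_start(stage: Stage, completed: set[Stage]) -> bool:
    """A stage may start only once every earlier stage is complete."""
    return all(earlier in completed for earlier in Stage if earlier < stage)


done = {Stage.SCREENING, Stage.PROJECT_DESCRIPTION, Stage.DATA_MAPPING}
print(can_start(Stage.RISK_ASSESSMENT, done))  # True
print(can_start(Stage.APPROVAL, done))         # False: no risk assessment or mitigation yet
```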

How does Privacy by Design relate to DPIAs?

Privacy by Design embeds privacy into systems from the start. DPIAs check whether those designs adequately protect individuals. Together they prevent risks, rather than reacting to them later.

How often should DPIAs be reviewed?

Review whenever systems change, new features are added, AI models are updated, vendors change, or new risks emerge. DPIAs are living documents, not one-off exercises.
