GenAI for Software Delivery: Automate Requirements, Tests & Risk

Written by Matthew Hale

- Why GenAI matters for software delivery

- Tool patterns and where they fit

- Practical workflow you can replicate

- How to test and validate the models (practical tips)

- Quality and governance: software quality assurance in a GenAI world

- Addressing the big question: will AI replace software engineers?

- Risks, guardrails, and best practices

- Suggested starting checklist (quick wins)

- Final thoughts

Newer generative models are changing how requirements are written, tests are developed, and risk is evaluated.

During this session, speakers gave live demonstrations and showed real-life patterns rather than theory alone, and explained how generative AI in software development can shift work left, reduce rework, and improve software quality in measurable ways.

The write-up below is a brief, actionable summary of the specific techniques, tool choices, and guardrails discussed during the talk.

Why GenAI matters for software delivery

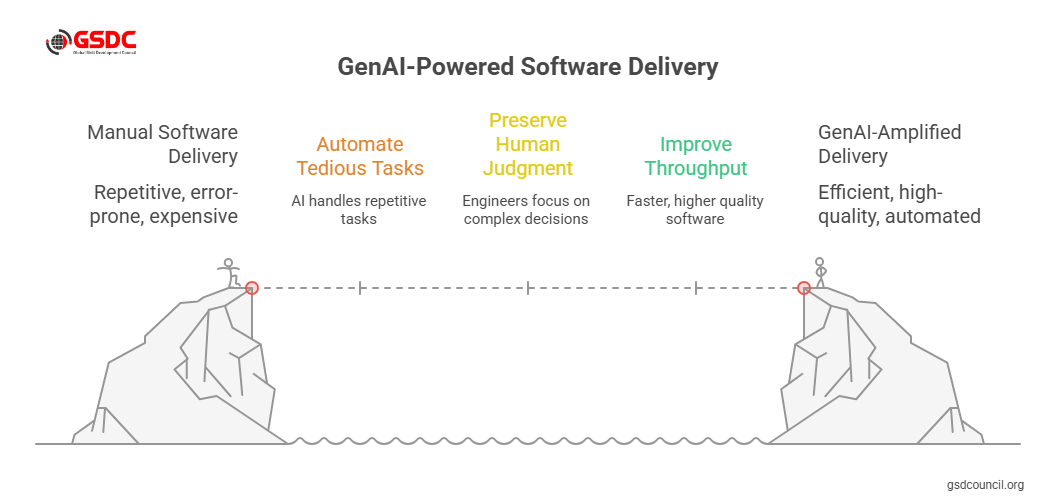

The presenters framed the problem simply: requirements, tests, and risk assessments are repetitive, error-prone, and expensive when done manually.

Using generative AI in software engineering workflows lets teams automate the tedious parts while preserving human judgment for the hard trade-offs.

This improves throughput and software quality without replacing engineers; it amplifies them.

Real outcomes shown in the demo

The demo highlighted three practical wins the team achieved within a short cycle:

- Auto-expanding a single high-level requirement into well-formed child requirements and stories.

- Generating test cases (unit, integration, and acceptance) directly from requirements.

- Running an initial risk assessment that flags ambiguous requirements, missing edge cases, and potential security concerns.

These are not theoretical: the presenters showed a working flow that took a top-level requirement and produced a structured backlog entry, a set of test scenarios, and a prioritized list of risks, all ready for an engineer or QA lead to review.

Tool patterns and where they fit

Key tool types and how they were used in the session:

- Software requirements tools with embedded GenAI modules that expand, rewrite, and score requirements for clarity and testability.

- Generative model APIs (LLMs) for transforming prose requirements into testable steps and Gherkin-style scenarios.

- Test generation engines that output parameterized test cases and suggested mocks. This is a great example of AI in software testing.

- Risk analysis assistants that use heuristics + LLMs to spot missing constraints, compliance gaps, and ambiguous language.

- CI/CD hooks that run generated tests in a sandbox and report flaky cases back into the toolchain.

Together, these software requirements tools, test generators, and CI/CD hooks form a pipeline that accelerates sprint readiness and improves traceability.
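To make the scenario-generation pattern concrete, here is a minimal Python sketch that renders a structured acceptance criterion as a Gherkin-style scenario. The `Criterion` class, the `to_gherkin` function, and the password-reset example are illustrative assumptions, not the tooling shown in the session. In practice the structured fields would likely come from a model response that a person has reviewed.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Criterion:
    """Hypothetical structured form of one acceptance criterion."""
    title: str
    given: List[str]
    when: str
    then: List[str]


def to_gherkin(c: Criterion) -> str:
    """Render a criterion as a Gherkin-style scenario (Given/When/Then)."""
    lines = [f"Scenario: {c.title}"]
    # The first precondition uses "Given"; later ones use "And".
    for i, step in enumerate(c.given):
        lines.append(f"  {'Given' if i == 0 else 'And'} {step}")
    lines.append(f"  When {c.when}")
    # The first expected outcome uses "Then"; later ones use "And".
    for i, step in enumerate(c.then):
        lines.append(f"  {'Then' if i == 0 else 'And'} {step}")
    return "\n".join(lines)


# Illustrative data only; not taken from the session.
example = Criterion(
    title="Password reset email is sent",
    given=["a registered user", "the user is logged out"],
    when="the user requests a password reset",
    then=["a reset email is sent", "the reset link can be used once"],
)
scenario = to_gherkin(example)
print(scenario)
```

Keeping the criterion structured and rendering it separately means the same data could also feed a parameterized test generator, though that part is not sketched here.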

Practical workflow you can replicate

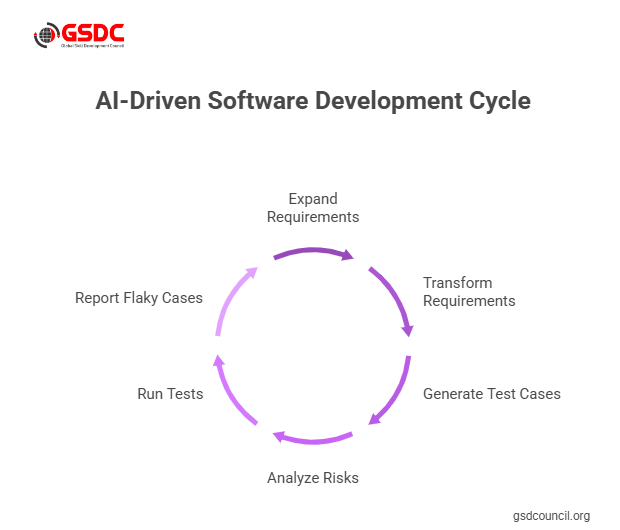

- Import a high-level requirement. The GenAI-assisted requirements tool drafts child requirements and acceptance criteria.

- Auto-generate test cases. Use the model to produce unit and acceptance tests in plain language or in a test framework format (for example, Gherkin or pytest). This is where AI in software testing shows concrete value.

- Run quick static risk checks. Ask the model to produce a prioritized risk list and map risks to requirements.

- Execute generated tests in a sandbox. Failures feed back into the requirement for human refinement.

- Human review & sign-off. Engineers and QA adjust wording, approve tests, and set confidence thresholds before merging.

This loop illustrates how generative AI for software development turns vague requirements into verifiable artifacts fast, while keeping humans in control.
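The loop above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: `stub_model` is a hypothetical stand-in that returns canned text instead of calling a real model, `reviewer` is a placeholder for a person's sign-off, and the sandbox execution step is left out. None of these names come from the session.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Requirement:
    req_id: str
    text: str
    tests: List[str] = field(default_factory=list)
    risks: List[str] = field(default_factory=list)
    approved: bool = False


def stub_model(prompt: str) -> List[str]:
    """Hypothetical stand-in for a model call; returns canned text."""
    if prompt.startswith("SPLIT"):
        return ["User can request a reset email", "Reset link expires after one use"]
    if prompt.startswith("TESTS"):
        return ["Given a registered user, when they request a reset, then an email is sent"]
    return ["Expiry time for the reset link is not specified"]


def run_loop(
    top: Requirement,
    generate: Callable[[str], List[str]],
    review: Callable[[Requirement], bool],
) -> List[Requirement]:
    """Draft children, tests, and risks, then gate each child on review."""
    children = [
        Requirement(f"{top.req_id}.{i}", text)
        for i, text in enumerate(generate(f"SPLIT: {top.text}"), start=1)
    ]
    for child in children:
        child.tests = generate(f"TESTS: {child.text}")
        child.risks = generate(f"RISKS: {child.text}")
        # Nothing is approved automatically; the reviewer decides.
        child.approved = review(child)
    return children


def reviewer(req: Requirement) -> bool:
    """Placeholder for a person's sign-off: requires at least one test."""
    return len(req.tests) > 0


backlog = run_loop(
    Requirement("REQ-1", "Users can reset their password"), stub_model, reviewer
)
for item in backlog:
    print(item.req_id, item.approved, item.risks)
```

Passing `generate` and `review` in as functions keeps the model and the human gate swappable, which makes the loop easy to exercise with stubs before any real model is connected.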

How to test and validate the models (practical tips)

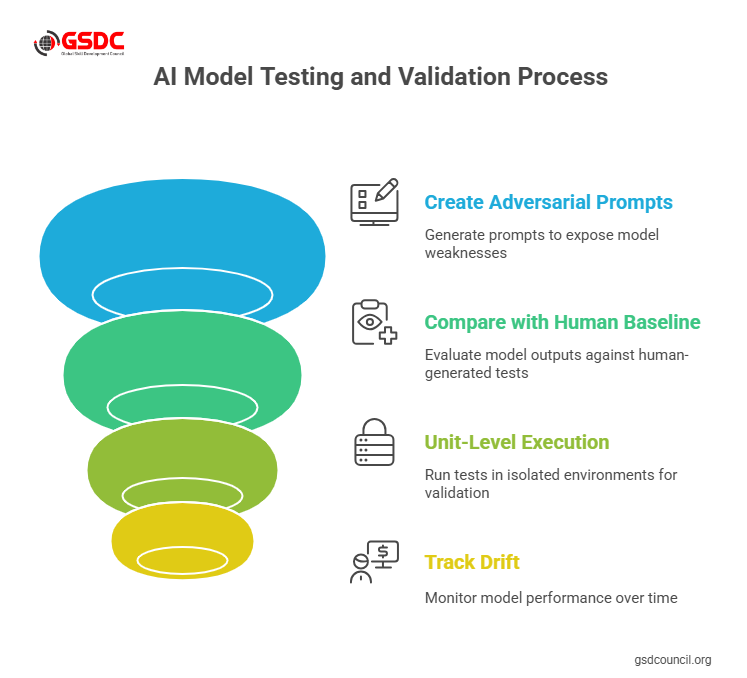

Presenters emphasized validation and gave hands-on suggestions for how to test AI models used in delivery:

- Create adversarial prompts to expose hallucinations and ambiguous outputs.

- Compare generated tests with a human baseline (sample of 50–100 cases) and measure coverage and false positives.

- Use unit-level execution (run generated tests in isolated environments) to validate that the tests are meaningful and not trivially passing.

- Track drift: monitor when model outputs degrade after changes to requirements style or dataset.

These steps make testing AI models an operational practice, not an afterthought.
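Two of these checks are easy to sketch in Python: comparing generated cases with a human baseline, and flagging tests that pass trivially. The function names and sample data are assumptions made for illustration. Note that `unmatched_rate` is only a proxy for false positives: a generated case missing from the baseline may still be valid, so a reviewer would need to judge each one.

```python
from typing import Callable, Dict, Set


def compare_to_baseline(generated: Set[str], baseline: Set[str]) -> Dict[str, float]:
    """Coverage of the human baseline and share of generated cases outside it."""
    matched = generated & baseline
    extra = generated - baseline
    return {
        "coverage": len(matched) / len(baseline) if baseline else 0.0,
        "unmatched_rate": len(extra) / len(generated) if generated else 0.0,
    }


def passes_against(test: Callable, impl: Callable) -> bool:
    """Run one test against an implementation; True if no assertion fails."""
    try:
        test(impl)
    except AssertionError:
        return False
    return True


def is_trivial(test: Callable, correct_impl: Callable, broken_impl: Callable) -> bool:
    """A test is trivial if it passes on the correct code and on broken code."""
    return passes_against(test, correct_impl) and passes_against(test, broken_impl)


# Illustrative scenario identifiers; not data from the session.
metrics = compare_to_baseline(
    generated={"login_ok", "login_bad_pw", "login_locked", "login_emoji"},
    baseline={"login_ok", "login_bad_pw", "login_locked", "login_expired"},
)


def add(a: int, b: int) -> int:
    return a + b


def broken_add(a: int, b: int) -> int:
    return a - b  # deliberately wrong, to see which tests notice


def strong_test(impl: Callable) -> None:
    assert impl(2, 3) == 5


def weak_test(impl: Callable) -> None:
    assert impl(0, 0) == 0  # also true for the broken version


print(metrics)
print(is_trivial(strong_test, add, broken_add), is_trivial(weak_test, add, broken_add))
```

Running each test against a deliberately broken implementation is a small-scale form of mutation testing; a dedicated mutation testing tool would generate the broken variants automatically.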

Quality and governance: software quality assurance in a GenAI world

The session repeatedly aligned GenAI work with established software quality assurance disciplines:

- Maintain traceability from requirement → generated test → execution result.

- Enforce access and redaction rules when ingesting proprietary specs.

- Version generated artifacts and prompt templates, and include them in CI/CD pipelines.

These practices ensure that software quality assurance remains rigorous even as automation scales.
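As a rough illustration of traceability and prompt versioning, the Python sketch below links each requirement to a generated test, an execution result, and the version of the prompt template that produced the test. The `TraceLink` class, the content-hash versioning scheme, and the identifiers are assumptions for this example; a real setup would more likely rely on the repository's own version control and the requirements tool's link model.

```python
import hashlib
from dataclasses import dataclass
from typing import List, Optional


def prompt_version(template: str) -> str:
    """Short content hash so a changed template gets a new version id."""
    return hashlib.sha256(template.encode("utf-8")).hexdigest()[:12]


@dataclass(frozen=True)
class TraceLink:
    requirement_id: str
    test_id: str
    template_version: str
    result: Optional[str] = None  # "pass", "fail", or None if not run yet


def requirements_without_results(
    requirement_ids: List[str], links: List[TraceLink]
) -> List[str]:
    """Requirements that have no executed test linked to them."""
    executed = {link.requirement_id for link in links if link.result is not None}
    return [rid for rid in requirement_ids if rid not in executed]


# Illustrative template and identifiers; not from the session.
TEMPLATE = "Write acceptance tests for: {requirement}"
version = prompt_version(TEMPLATE)

links = [
    TraceLink("REQ-1", "T-1", version, "pass"),
    TraceLink("REQ-2", "T-2", version),  # generated but not executed yet
]
gaps = requirements_without_results(["REQ-1", "REQ-2", "REQ-3"], links)
print(version, gaps)
```

A CI job could fail the build when `gaps` is non-empty, which turns the traceability rule into an enforced check rather than a convention.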

Addressing the big question: will AI replace software engineers?

The presenters were clear: tools accelerate routine tasks but do not replace the need for engineers who make design, security, and product trade-offs.

The session reframed the question: AI in software engineering will change roles, shifting human time toward higher-value work (design, review, and interpreting edge-case failures) rather than routine spec writing.

In short, GenAI augments engineers; it does not remove the need for experienced judgment.

Risks, guardrails, and best practices

- Guard against over-trust. Always require human review of generated requirements and tests before deployment.

- Set confidence thresholds. If the model’s confidence or retrieval relevance is low, route the item for manual inspection.

- Record provenance. Log model prompts, inputs, and outputs for audits and incident analysis.

- Limit scope initially. Start with non-critical modules or internal tools to tune your pipeline.

These guardrails keep GenAI-driven outputs safe and auditable while you iterate.
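The confidence-threshold and provenance guardrails could look roughly like the Python sketch below. It assumes the toolchain exposes some numeric confidence or retrieval-relevance score per generated item; the 0.8 threshold, the route names, and the record fields are arbitrary choices for illustration, not values from the session. Both routes still end in human review, in line with the first guardrail.

```python
import json
from datetime import datetime, timezone
from typing import Dict


def route(confidence: float, threshold: float = 0.8) -> str:
    """Low-confidence items get manual inspection; the rest get normal review."""
    return "standard_review" if confidence >= threshold else "manual_inspection"


def provenance_record(
    prompt: str, inputs: Dict[str, str], output: str, confidence: float
) -> str:
    """Serialize one generation event as a JSON line for an audit log."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prompt": prompt,
        "inputs": inputs,
        "output": output,
        "confidence": confidence,
        "route": route(confidence),
    }
    return json.dumps(record, sort_keys=True)


# Illustrative values only.
line = provenance_record(
    prompt="Write acceptance tests for: {requirement}",
    inputs={"requirement": "Users can reset their password"},
    output="Given a registered user, when they request a reset, then an email is sent",
    confidence=0.62,
)
print(line)
```

Writing one JSON object per line keeps the log append-only and easy to search during an audit or incident review.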

Suggested starting checklist (quick wins)

- Pilot generative AI for software development on one feature: generate child requirements + tests.

- Add automated execution of generated tests in CI and monitor failures.

- Measure lift: time saved on requirements authoring, increased test coverage, and the number of issues caught earlier.

- Tune prompts and templates; version them in the repo.

Final thoughts

In short, generative AI in software development can shift work left, reduce rework, and make teams measurably faster and more dependable.

Combined with sound software quality assurance methods and a disciplined approach to model validation, AI in software testing becomes a practical way to get more coverage and find problems sooner.

Start small: run a controlled pilot and measure the effect on cycle time, defect rate, and review effort.

Treat prompts, generated artifacts, and test runs as versioned deliverables, and keep people in the loop for design decisions and edge cases. Do so, and GenAI becomes a part of your delivery toolchain that you can count on.
