ISO 42001 Global Standard for Responsible AI Risk Management

Written by Matthew Hale

- What is ISO 42001?

- Why is ISO 42001 Important?

- Key Lessons for Implementing the ISO 42001 Responsible AI Standard

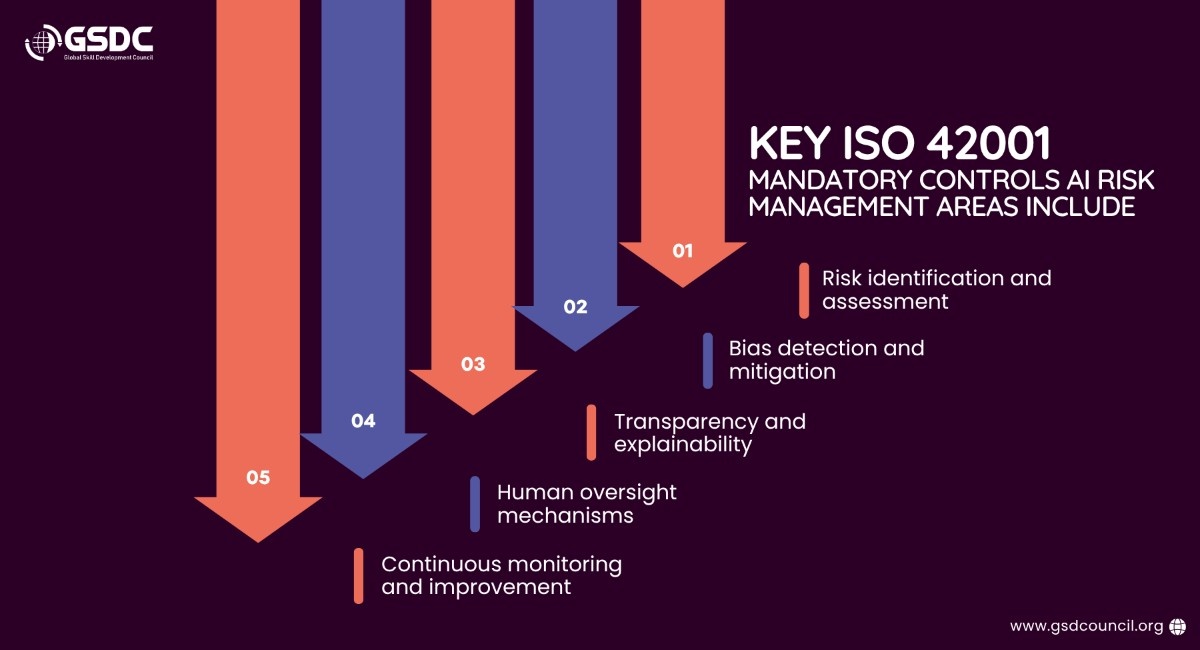

- ISO 42001 Mandatory Controls for AI Risk Management

- Real-World Responsible AI Examples

- AI Risk Management Frameworks: What Makes Them Effective?

- Steps to Get ISO 42001 Certification

- Challenges in ISO 42001 Implementation

- The Future of Responsible AI with ISO 42001

- The Role of Skills in ISO 42001 Adoption

- Conclusion

ISO 42001 is the global standard for responsible AI, helping organizations manage AI systems in a structured and accountable way. It introduces a clear AI risk management framework to ensure AI is developed and used responsibly.

As AI adoption grows, so do concerns around transparency, fairness, and control. Traditional approaches are no longer enough.

This is where ISO 42001 AI risk management plays a key role, helping organizations balance AI benefits and risks while integrating AI risk management frameworks into everyday operations.

What is ISO 42001?

ISO 42001 is recognized as the first international standard developed specifically for AI management systems. It helps organizations establish processes for governing AI that balance its benefits against its risks.

At its heart, it provides a structured AI risk management system that ensures AI systems remain transparent, fair, accountable, and secure. ISO 42001 AI risk management has thus become a key aspect of modern enterprise AI strategies.

Why is ISO 42001 Important?

AI risks are not just theoretical; organizations face them today. They include:

- Ethical risks, such as bias and fairness concerns

- Operational risks, such as system failures and hallucinations

- Regulatory risks, such as growing regulatory requirements

These risks are growing quickly: the frequency of AI-related incidents has reportedly increased by more than 50% in recent times.

ISO 42001 addresses these challenges with a simple and effective AI risk management framework that lets businesses govern AI proactively rather than reactively.

Key Lessons for Implementing the ISO 42001 Responsible AI Standard

Getting ISO 42001 right is not just about checking boxes. It’s also about having a concrete and structured approach, sometimes with expert advice from a Certified ISO 42001:2023 Lead Auditor, to further enhance AI risk management.

1. AI Governance Must Be Built Early

Governance works best when it is in place from the moment you start building, not from the moment you start shipping.

2. Risk Management is Continuous

ISO AI risk management is an ongoing process, not a one-time exercise. It should be monitored and improved continuously.

3. Cross-Functional Collaboration is Critical

AI affects legal, compliance, and business functions alike. These teams should work together to build an effective risk management framework.

4. Documentation Drives Accountability

Adequate, comprehensive documentation is crucial for attaining ISO 42001 certification.

Together, these lessons show that ISO 42001 is not simply about being compliant. It’s about building a stronger AI risk management process.

ISO 42001 Mandatory Controls for AI Risk Management

Real-World Responsible AI Examples

To get a better understanding of this, here are some examples of responsible AI in the real world:

- Knockri uses hiring tools that filter out visual and vocal cues to reduce biases in hiring processes.

- Cera makes use of AI systems that assist in the healthcare industry, focusing on patient care with the supervision of humans.

- Many financial institutions use AI systems that incorporate bias monitoring tools, focusing on fair lending practices and lowering the risk of discrimination.

Organizations are also increasingly focusing on building internal expertise through frameworks and certifications supported by bodies like the Global Skill Development Council (GSDC), helping teams align with responsible AI practices.

These examples show that organizations can weigh both the risks and the advantages of AI systems and still put them to work in the real world.

AI Risk Management Frameworks: What Makes Them Effective?

A risk management framework for AI is a clear, organized way for companies to detect, grade, and manage risk as AI goes from concept to reality and on into the world.
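As a rough illustration of "detect, grade, and manage", the sketch below keeps a small risk register and grades each entry by likelihood times impact. The 1 to 5 scales, the grade thresholds, and the example risks are assumptions chosen for illustration; ISO 42001 does not prescribe this scoring scheme.

```python
from dataclasses import dataclass


@dataclass
class AIRisk:
    name: str
    likelihood: int  # 1 (rare) to 5 (almost certain); assumed scale
    impact: int      # 1 (negligible) to 5 (severe); assumed scale

    @property
    def score(self) -> int:
        # Simple likelihood x impact scoring, a common risk-matrix convention
        return self.likelihood * self.impact


def grade(risk: AIRisk) -> str:
    # Thresholds are illustrative; each organization sets its own
    if risk.score >= 15:
        return "high"
    if risk.score >= 8:
        return "medium"
    return "low"


# Hypothetical register entries, one per risk type named in this article
register = [
    AIRisk("biased hiring recommendations", likelihood=3, impact=5),
    AIRisk("hallucinated answers in a support chatbot", likelihood=4, impact=3),
    AIRisk("missed regulatory reporting requirement", likelihood=2, impact=2),
]

# Handle the highest-scoring risks first
ranked = sorted(register, key=lambda r: r.score, reverse=True)
for risk in ranked:
    print(f"{risk.name}: score={risk.score}, grade={grade(risk)}")
```

Sorting by score gives a simple priority order for treatment; a real register would also record an owner, a mitigation, and a review date for each risk.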

Not all AI risk management frameworks deliver results. The most effective ones focus on structure, consistency, and real-world application.

Integrated into business workflows

Risk management is effective when it is integrated into the day-to-day activities of a company, rather than being treated as a separate box to check off.

Continuous monitoring

Effective risk management frameworks keep an eye on how AI is performing, ensuring that risk management is constantly evolving as the AI evolves.
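One minimal way to picture continuous monitoring is a check that compares recent model performance with an agreed baseline and flags the system for review when it slips. The function name `needs_review`, the metric, and the 0.05 tolerance are hypothetical; this is a sketch, not a method defined by ISO 42001.

```python
from statistics import mean


def needs_review(baseline: float, recent_scores: list[float], tolerance: float = 0.05) -> bool:
    """Return True when the recent average falls more than `tolerance` below baseline."""
    if not recent_scores:
        # Missing monitoring data is treated as a reason to review
        return True
    return baseline - mean(recent_scores) > tolerance


# Hypothetical weekly accuracy figures for a deployed model
stable_weeks = [0.91, 0.89, 0.90]
degraded_weeks = [0.80, 0.82, 0.81]

print(needs_review(0.90, stable_weeks))
print(needs_review(0.90, degraded_weeks))
```

Running a check like this on a schedule, and recording each result, is what turns monitoring into evidence that can be shown during an audit.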

Aligned with global standards

Using international standards such as ISO 42001 ensures consistency, trust, and easier compliance in any region of the world.

Lifecycle-based approach

Guidance from bodies such as NIST stresses that AI risk should be managed across the entire lifecycle, from design through deployment and into ongoing use.

Balanced technical and ethical controls

Effective risk management frameworks consider both how the AI system performs technically and how it behaves in terms of ethics, fairness, and accountability.

This is why ISO 42001 works well as a risk management framework for AI: it offers a global standard for building effective AI risk management.

Steps to Get ISO 42001 Certification

Obtaining ISO 42001 certification involves a series of steps that help your organization build its AI risk management framework. The steps include:

1. Gap Assessment

Compare your organization’s current state with ISO 42001’s requirements to identify gaps.
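At its simplest, a gap assessment is a comparison between what the standard expects and what is already in place. The sketch below shows that comparison as a set difference. The requirement labels are simplified placeholders rather than text quoted from ISO 42001, and `find_gaps` is a hypothetical helper.

```python
def find_gaps(required: set[str], in_place: set[str]) -> list[str]:
    """Return the required items that are not yet in place, sorted for stable output."""
    return sorted(required - in_place)


# Placeholder labels; a real assessment works from the standard's own clauses
required = {
    "AI policy",
    "AI risk assessment process",
    "AI impact assessment",
    "internal audit programme",
}

# What this hypothetical organization already has
in_place = {"AI policy", "internal audit programme"}

gaps = find_gaps(required, in_place)
print(gaps)
```

In practice each gap would also carry an owner and a target date so that it feeds directly into the implementation steps that follow.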

2. Define Scope and Governance

Identify areas within your organization that use AI and establish your organization’s governance structure for ISO AI risk management.

3. Framework Implementation

Create or improve your organization’s AI risk management framework to align with ISO 42001’s guidelines.

4. Documentation and Controls

Document your organization’s policies and implement ISO 42001’s mandatory controls so that both stay consistent with your AI risk management framework.

5. Internal Audit

Conduct an internal audit to ensure your organization is ready before undergoing the actual audit.

6. Certification Audit

An independent body audits your organization’s system against the requirements and, if they are met, grants ISO 42001 certification.

By following these steps, your organization can build a foundation for ISO AI risk management that leads to ISO 42001 certification.

Challenges in ISO 42001 Implementation

While implementing ISO 42001 for responsible AI brings many benefits, it also brings challenges that organizations need to be aware of. In the long term, however, the benefits far outweigh the challenges of implementing ISO 42001.

The Future of Responsible AI with ISO 42001

As AI becomes more deeply integrated into business, adopting standards such as ISO 42001, the global standard for responsible AI, is no longer just an option but is becoming the norm.

It is becoming the reference point for future AI risk management framework strategies.

The Role of Skills in ISO 42001 Adoption

Adopting ISO 42001, the global standard for responsible AI, depends not only on organizational policies and procedures but also on the skills an organization has. Much of the challenge in implementing ISO AI risk management lies in understanding and applying the standard at the governance level.

Many organizations therefore seek credentials such as the Certified ISO 42001:2023 Lead Auditor offered by the Global Skill Development Council (GSDC), which help professionals implement an AI risk management framework effectively. Skills are essential to ensuring that an organization is compliant and adopts responsible AI with confidence.

Conclusion

AI is no longer just about innovation; it is about responsibility.

By using the ISO 42001 responsible AI standard, organizations can go beyond the experimentation phase and develop robust and reliable AI systems.

The real benefit lies in balancing the risks and benefits of AI and in building an AI risk management framework, both of which ISO 42001 can facilitate.
