How Autonomous AI Agents Operate in Business Environments Without Human Micromanagement

Written by Vidura Bandara Wijekoon

- The Evolution of AI Systems: From Chatbots to Autonomous Agents

- The Micromanagement Trap in AI Systems

- Understanding the Anatomy of Autonomous AI Agents

- Moving Beyond Prompt-Based Systems

- Building Operational Foundations for AI Agents

- Governance as Code: Ensuring Safe AI Operations

- Agent Identity

- AI Safety Mechanisms and Guardrails

- Human in the Loop vs Human on the Loop

- The Future: Multi-Agent AI Systems

- Industries with High AI Governance Requirements

- How Does the GSDC Certified Agentic AI Professional Certification Help Professionals?

- Conclusion

Artificial Intelligence is evolving rapidly, moving from simple automation tools to intelligent systems capable of making decisions and executing complex tasks. One of the most significant developments in this transformation is the rise of autonomous AI agent systems designed to operate independently, adapt to changing environments, and perform business operations without constant human supervision.

The AI agent market is projected to grow from $7.8 billion in 2025 to $52.6 billion by 2030 at a 46.3% CAGR, and Gartner forecasts that 40% of enterprise applications will embed task-specific AI agents by the end of 2026, up from less than 5% in 2025. However, the challenge organizations face is not simply building AI agents, but scaling them effectively without falling into the trap of human micromanagement. Many companies deploy AI systems but still require constant monitoring and approvals, which slows innovation and reduces efficiency.

In a recent webinar titled “How Autonomous AI Agents Operate in Business Environments Without Human Micromanagement,” experts explored how organizations can build intelligent AI ecosystems that operate efficiently while maintaining safety, governance, and trust.

This blog explains the key concepts behind autonomous AI agents, their architecture, governance requirements, and how businesses can scale them responsibly.

The Evolution of AI Systems: From Chatbots to Autonomous Agents

To understand what autonomous AI agents are, it is important to examine the evolution of AI-driven systems and how they have progressed over time. This journey also helps explain what autonomous AI is and why it is becoming increasingly important in modern organizations.

Early AI implementations were mostly limited to chatbots. These systems were designed to handle relatively simple and repetitive tasks such as answering frequently asked questions, providing support information, or retrieving data from knowledge bases. While useful, they operated within narrow boundaries and could not make broader decisions or act independently.

The next stage introduced AI copilots, which work alongside humans rather than replacing them. Copilots assist professionals by generating recommendations, summarizing information, supporting productivity, and helping with decision-making. For example, developers may use coding assistants while analysts rely on AI-powered data tools to improve efficiency and insight.

Today, organizations are increasingly moving toward autonomous agents. Unlike chatbots or copilots, these AI systems are designed to independently perform tasks, coordinate workflows, make context-aware decisions, and interact with other digital systems, operating with a higher degree of independence and adaptability.

For example, an AI agent in HR could:

- Analyze resumes

- Screen job candidates

- Schedule interviews

- Communicate with hiring managers

This progression from chatbot to copilot to autonomous agents marks a major shift in enterprise technology. AI is no longer being used only as a support tool; it is increasingly becoming part of the organization’s operational infrastructure. Understanding what autonomous AI is and how autonomous AI agents function is essential for businesses preparing for the next phase of intelligent automation.

The Micromanagement Trap in AI Systems

While AI agents promise greater automation and efficiency, many organizations fall into what experts often describe as the micromanagement trap. This happens when businesses invest in AI systems but still require humans to constantly monitor, validate, and approve nearly every action. Although human oversight remains essential, excessive supervision can significantly reduce the benefits AI delivers to the business.

Over-controlling AI systems can create several operational challenges, including:

- Slower decision-making

- Increased operational costs

- Reduced productivity

- Limited scalability

As AI innovation continues to accelerate, the future of AI in business will increasingly depend on how effectively organizations balance automation with control. New AI models, research developments, and enterprise tools are emerging almost every week. In fast-changing business environments, organizations that rely too heavily on manual approvals for every AI-driven action may struggle to innovate at speed and maintain competitive advantage.

At the same time, removing human oversight entirely can also create serious concerns related to risk and governance. Without proper controls, organizations may face issues such as:

- Ethical violations

- Security vulnerabilities

- Incorrect decisions

- Hallucinated outputs

This is especially important when AI is being deployed across different types of business environments, where governance expectations, operational complexity, and risk tolerance may vary. The real solution does not lie in choosing between full automation and complete control; it lies in building the right balance between autonomy, accountability, and governance.

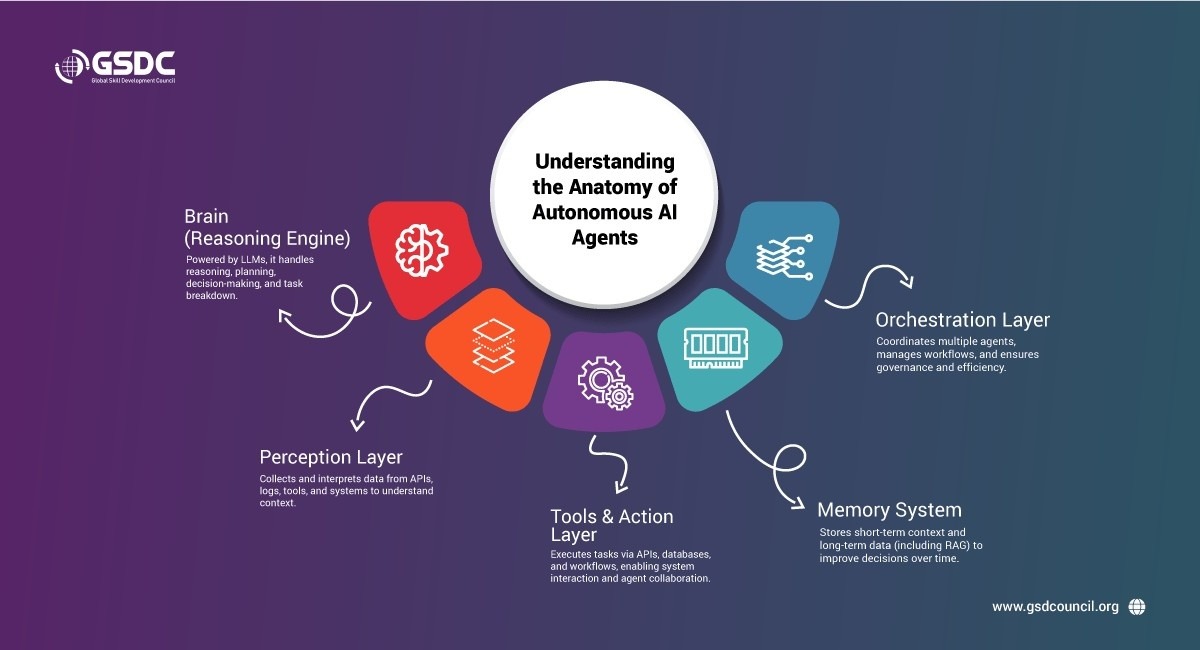

Understanding the Anatomy of Autonomous AI Agents

Autonomous agents are designed to operate in dynamic environments by perceiving information, making decisions, and performing actions.

A simplified architecture of an AI agent typically includes five key components.

1. Brain (Reasoning Engine)

The brain of an autonomous agent is usually powered by Large Language Models (LLMs) or other AI models.

This component handles:

- Reasoning

- Planning

- Decision-making

- Task decomposition

It enables the agent to understand instructions and determine the best course of action.

2. Perception Layer

Perception allows the AI agent to observe and interpret the environment.

Examples of perception sources include:

- Application logs

- APIs

- Enterprise platforms

- Project management tools

- System data streams

Through these inputs, the agent gathers contextual information necessary to make decisions.

3. Tools and Action Layer

The action layer enables the agent to interact with systems and execute tasks.

Typical capabilities include:

- API calls

- Database queries

- Workflow automation

- Task execution in enterprise software

Modern frameworks also enable agent-to-agent communication, where multiple agents collaborate to complete complex tasks. This communication should occur via approved interaction graphs: pre-defined, governed pathways that prevent ad hoc peer-to-peer exchanges and maintain audit accountability.
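One way to picture an approved interaction graph is as a set of permitted sender-to-receiver routes that every message is checked against. The sketch below is illustrative only: the `InteractionGraph` class, the agent names, and the audit record fields are assumptions, not part of any specific framework.

```python
from dataclasses import dataclass, field


@dataclass
class InteractionGraph:
    """Directed graph of agent-to-agent routes that governance has approved."""
    allowed: set[tuple[str, str]] = field(default_factory=set)
    audit_log: list[dict] = field(default_factory=list)

    def approve(self, sender: str, receiver: str) -> None:
        """Register a governed pathway from sender to receiver."""
        self.allowed.add((sender, receiver))

    def send(self, sender: str, receiver: str, message: str) -> bool:
        """Permit delivery only along approved edges; log every attempt."""
        permitted = (sender, receiver) in self.allowed
        self.audit_log.append(
            {"from": sender, "to": receiver, "message": message, "permitted": permitted}
        )
        return permitted


graph = InteractionGraph()
graph.approve("research_agent", "coding_agent")
graph.approve("coding_agent", "testing_agent")

delivered = graph.send("research_agent", "coding_agent", "spec ready")
blocked = graph.send("research_agent", "testing_agent", "skip review")
```

Because rejected attempts are logged alongside permitted ones, the audit trail also shows when an agent tried to bypass its approved pathway.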

4. Memory System

Memory enables agents to retain information over time.

Memory may include:

- short-term task context

- long-term historical data

- vector databases for knowledge retrieval

- retrieval-augmented generation (RAG), which grounds agent responses in external knowledge stores and reduces hallucinations

With memory capabilities, agents can improve decision-making by referencing past actions and outcomes.

5. Orchestration Layer

The orchestration layer acts as the control plane that coordinates multiple specialized agents. It decides which agent handles a given task, in what order, and manages sequential, parallel, or conditional routing. Without orchestration, multiple agents risk duplication, conflict, and operational chaos. The orchestrator also monitors progress, resolves inter-agent conflicts, and enforces enterprise-wide governance policies during execution.
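As a rough sketch of sequential and conditional routing, the example below reuses the HR scenario from earlier in this post. The agent functions, the `Orchestrator` class, and the three-years-of-experience rule are hypothetical, and parallel execution is left out to keep the example short.

```python
from typing import Callable

# Hypothetical specialized agents: each takes a task dict and returns it updated.
Agent = Callable[[dict], dict]
Condition = Callable[[dict], bool]


def screen_resume(task: dict) -> dict:
    task["screened"] = True
    task["qualified"] = task.get("years_experience", 0) >= 3  # assumed rule
    return task


def schedule_interview(task: dict) -> dict:
    task["interview_scheduled"] = True
    return task


def notify_manager(task: dict) -> dict:
    task["manager_notified"] = True
    return task


class Orchestrator:
    """Decides which agent runs, in what order, and under what condition."""

    def __init__(self) -> None:
        self.steps: list[tuple[str, Agent, Condition]] = []
        self.history: list[str] = []  # names of the steps that actually ran

    def add_step(self, name: str, agent: Agent,
                 condition: Condition = lambda task: True) -> None:
        self.steps.append((name, agent, condition))

    def run(self, task: dict) -> dict:
        # Steps run in registration order; a step is skipped if its condition fails.
        for name, agent, condition in self.steps:
            if condition(task):
                task = agent(task)
                self.history.append(name)
        return task


orchestrator = Orchestrator()
orchestrator.add_step("screen", screen_resume)
orchestrator.add_step("schedule", schedule_interview, lambda t: t["qualified"])
orchestrator.add_step("notify", notify_manager)

result = orchestrator.run({"candidate": "A", "years_experience": 1})
```

Here the scheduling agent is skipped because the candidate does not meet the assumed screening rule, while the `history` list gives the orchestrator a simple record of which agents ran.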

Moving Beyond Prompt-Based Systems

Many early AI applications rely heavily on prompts. However, prompt-based systems alone are insufficient for enterprise automation.

Businesses require deterministic logic and structured workflows, especially when handling sensitive processes such as financial transactions or customer refunds.

Instead of relying purely on prompts, organizations are now integrating Standard Operating Procedures (SOPs) into AI workflows.

For example:

If a refund request exceeds a predefined threshold:

- The system checks the fraud score

- If the fraud score is high, the agent escalates the request

- If it is low, the refund is automatically processed
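The refund procedure above can be sketched as deterministic code rather than a prompt. The threshold amount, the fraud score cutoff, and the function name are assumptions chosen for illustration. The text does not say how requests below the threshold are handled, so the sketch assumes they are processed automatically.

```python
REFUND_THRESHOLD = 100.00  # assumed amount above which the fraud check applies
FRAUD_SCORE_LIMIT = 0.7    # assumed cutoff: scores at or above this count as "high"


def handle_refund(amount: float, fraud_score: float) -> str:
    """Return the SOP outcome for a refund request."""
    if amount <= REFUND_THRESHOLD:
        # Assumption: requests at or below the threshold skip the fraud check.
        return "auto_process"
    if fraud_score >= FRAUD_SCORE_LIMIT:
        return "escalate"
    return "auto_process"


small_request = handle_refund(amount=50.00, fraud_score=0.9)
risky_request = handle_refund(amount=500.00, fraud_score=0.9)
safe_request = handle_refund(amount=500.00, fraud_score=0.1)
```

Given the same inputs, this rule always produces the same outcome, which is the predictability that a prompt alone cannot guarantee.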

In practice, the choice between escalation and automatic processing isn't binary. Modern systems use confidence-threshold-based escalation: the agent auto-approves a decision only when its confidence score is above a defined threshold and routes everything else to human reviewers. This creates a spectrum of autonomy rather than a hard on/off switch.
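A minimal sketch of confidence-threshold routing might look like the following. The `Decision` type, the 0.85 default, and the choice to treat a score exactly at the threshold as approved are all assumptions. How the confidence score itself is produced is outside the scope of the example.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.85  # assumed default; tune per process and risk level


@dataclass
class Decision:
    action: str
    confidence: float  # 0.0 to 1.0, however the system estimates it


def route(decision: Decision, threshold: float = CONFIDENCE_THRESHOLD) -> str:
    """Auto-approve confident decisions; send the rest to a human reviewer."""
    if decision.confidence >= threshold:  # boundary handling is an assumption
        return "auto_approve"
    return "human_review"


refund = Decision(action="issue_refund", confidence=0.90)

default_route = route(refund)                 # uses the 0.85 default
strict_route = route(refund, threshold=0.95)  # stricter bar for a riskier process
```

Raising or lowering the threshold per process is what turns the on/off switch into a dial: the same decision is auto-approved under one policy and reviewed under another.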

Embedding business rules into AI systems ensures predictability, accountability, and reliability.

Building Operational Foundations for AI Agents

For AI agents to operate autonomously in business environments, they must be built on a strong operational foundation.

Cloud platforms often provide the infrastructure needed to support AI agent workflows.

A typical architecture may include:

- User input from an application or portal

- AI gateway for processing requests

- Orchestration frameworks that coordinate agent workflows

- Memory systems and databases

- Execution layers that interact with enterprise tools

This architecture forms a closed-loop system, where the AI agent continuously processes information, performs tasks, and updates system states.

However, closed-loop systems alone are not enough for full autonomy.

Governance as Code: Ensuring Safe AI Operations

Autonomous systems must operate within strict governance frameworks to prevent misuse, errors, and security risks. A modern approach known as Governance as Code embeds policies directly into AI workflows, helping organizations capture the benefits of AI while maintaining control and accountability.

The more precise technical term for Governance as Code, borrowed from DevSecOps, is Policy-as-Code: governance rules are version-controlled, machine-readable, and enforced automatically at runtime rather than reviewed manually. A key implementation pattern is the Agent Registry, a centralized record of each agent's identity, approved models and prompts, authorized tools, and risk tier classification.

This approach is becoming increasingly important for managing risk and governance wherever AI systems must operate responsibly at scale.
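To make the Agent Registry idea concrete, here is a small sketch of a registry entry and a runtime check against it. The field names, the example agent, the model and tool names, and the risk tier labels are hypothetical. A real registry would normally live in version-controlled configuration rather than in a Python dictionary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRecord:
    agent_id: str
    approved_models: frozenset[str]
    approved_prompt_ids: frozenset[str]
    authorized_tools: frozenset[str]
    risk_tier: str  # assumed labels such as "low", "medium", "high"


REGISTRY = {
    "refund-agent": AgentRecord(
        agent_id="refund-agent",
        approved_models=frozenset({"model-a"}),
        approved_prompt_ids=frozenset({"refund-v1"}),
        authorized_tools=frozenset({"read_order", "issue_refund"}),
        risk_tier="medium",
    ),
}


def is_allowed(agent_id: str, model: str, tool: str) -> bool:
    """Runtime check: deny unless the registry explicitly permits the call."""
    record = REGISTRY.get(agent_id)
    if record is None:
        return False  # unregistered agents are denied by default
    return model in record.approved_models and tool in record.authorized_tools


permitted_call = is_allowed("refund-agent", "model-a", "issue_refund")
unauthorized_tool = is_allowed("refund-agent", "model-a", "delete_customer")
unknown_agent = is_allowed("shadow-agent", "model-a", "read_order")
```

The deny-by-default behavior is a design choice in this sketch: anything not written into the registry is refused, so a policy change becomes a reviewed change to the registry rather than a manual approval.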

Agent Identity

An emerging 2026 governance priority is assigning unique, auditable identities to AI agents separate from the human users who deploy them. A 2026 Cloud Security Alliance survey found that only 23% of organizations have a formal enterprise-wide strategy for agent identity management. Without it, agents share human credentials and API tokens, creating critical security vulnerabilities. Agent identity infrastructure enables dynamic authentication, runtime authorization, and continuous traceability across all systems the agent touches.
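As a simplified illustration of giving an agent its own short-lived credential instead of a shared human token, the sketch below signs an agent ID and expiry time with HMAC from the Python standard library. The token format, the function names, and the hard-coded key are assumptions for demonstration only. A production system would rely on a dedicated identity provider and managed secrets.

```python
import hashlib
import hmac
import time
from typing import Optional

SECRET_KEY = b"demo-only-secret"  # placeholder; never hard-code real secrets


def _sign(payload: str) -> str:
    return hmac.new(SECRET_KEY, payload.encode(), hashlib.sha256).hexdigest()


def issue_token(agent_id: str, ttl_seconds: int, now: Optional[float] = None) -> str:
    """Issue a token bound to one agent that expires after ttl_seconds."""
    issued = time.time() if now is None else now
    payload = f"{agent_id}|{int(issued) + ttl_seconds}"
    return f"{payload}|{_sign(payload)}"


def verify_token(token: str, now: Optional[float] = None) -> Optional[str]:
    """Return the agent ID if the token is authentic and unexpired, else None."""
    try:
        agent_id, expires, signature = token.rsplit("|", 2)
    except ValueError:
        return None  # malformed token
    expected = _sign(f"{agent_id}|{expires}")
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return None  # tampered or forged
    current = time.time() if now is None else now
    if not expires.isdigit() or current >= int(expires):
        return None  # expired
    return agent_id


token = issue_token("refund-agent", ttl_seconds=60, now=1000)

valid = verify_token(token, now=1010)
expired = verify_token(token, now=2000)
tampered = verify_token(token.replace("refund-agent", "admin-agent"), now=1010)
```

Because each token names exactly one agent and expires quickly, every action can be traced to a specific agent rather than to the person who deployed it.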

Governance policies ensure that agents follow rules related to:

- ethics

- compliance

- security

- privacy protection

For example, an AI system managing patient records in a healthcare environment must follow strict data protection rules. Governance policies ensure that sensitive information is handled responsibly.

Governance frameworks also help protect systems from cybersecurity threats and adversarial attacks, which are growing concerns in AI deployments.

AI Safety Mechanisms and Guardrails

To operate safely without micromanagement, AI systems must include built-in safety mechanisms.

These include:

- circuit breakers that stop dangerous actions

- guardrails that restrict unsafe outputs

- monitoring systems that track AI behavior

- traceability logs that record system activity

Observability systems should also capture full decision lineage, from input through intermediate agent reasoning to final action, enabling forensic-grade audit trails and SLA dashboards. This is particularly critical for EU AI Act compliance in high-risk deployments.

Traceability is especially important because it allows organizations to review AI decisions and identify potential issues.

Without traceability, it becomes difficult to audit AI systems or understand how specific outcomes were generated.
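The circuit breaker and traceability ideas can be combined in a small sketch: a wrapper that stops calling an action after repeated failures and records every attempt. The `CircuitBreaker` class, the failure limit, and the log fields are illustrative assumptions rather than a reference to a particular library.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class CircuitBreaker:
    max_failures: int = 3
    failures: int = 0       # consecutive failures seen so far
    is_open: bool = False   # an open breaker blocks further actions
    trace: list[dict] = field(default_factory=list)

    def call(self, name: str, action: Callable[[], object]) -> object:
        """Run the action unless the breaker is open; log every attempt."""
        if self.is_open:
            self.trace.append({"action": name, "status": "blocked"})
            return None
        try:
            result = action()
        except Exception as exc:
            self.failures += 1
            self.is_open = self.failures >= self.max_failures
            self.trace.append({"action": name, "status": "failed", "error": str(exc)})
            return None
        self.failures = 0  # a success resets the consecutive-failure count
        self.trace.append({"action": name, "status": "ok"})
        return result


def flaky_update() -> None:
    raise RuntimeError("downstream API unavailable")


breaker = CircuitBreaker(max_failures=2)
breaker.call("update_record", flaky_update)
breaker.call("update_record", flaky_update)
breaker.call("update_record", flaky_update)  # not executed: the breaker is open

statuses = [entry["status"] for entry in breaker.trace]
```

The `trace` list plays the role of a traceability log here: a reviewer can see which actions ran, which failed and why, and which were blocked, without having supervised each call in real time.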

Human in the Loop vs Human on the Loop

A major shift in AI governance involves transitioning from Human in the Loop (HITL) to Human on the Loop (HOTL).

Human in the Loop means humans review or approve every AI decision.

Human on the Loop means humans supervise the system at a higher level and only intervene during critical situations.

This approach significantly reduces operational overhead while maintaining accountability.

For example, in an autonomous software development environment, AI agents may:

- write code

- generate test cases

- fix bugs

- create pull requests

Human reviewers only step in to approve the final version before deployment.

The Future: Multi-Agent AI Systems

The future of AI lies in multi-agent systems, where multiple specialized agents collaborate to perform complex tasks.

Examples of specialized agents include:

- research agents

- coding agents

- testing agents

- data analysis agents

Together, these agents create a distributed ecosystem capable of handling enterprise-scale operations.

However, these systems must still follow strict governance frameworks to ensure ethical and secure operation.

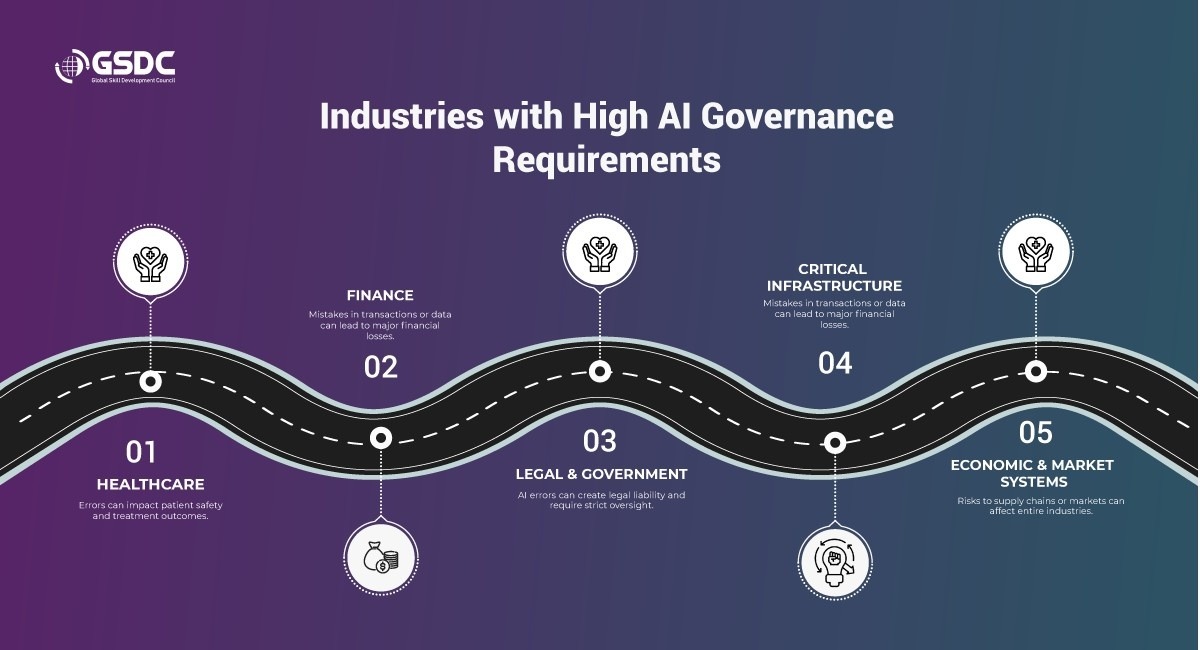

Industries with High AI Governance Requirements

Certain industries require stronger oversight due to the sensitive nature of their data.

These include:

Healthcare

Medical data is highly sensitive. AI errors in healthcare systems could affect patient safety and treatment outcomes.

Finance

Financial systems handle large transactions and sensitive financial records. Even small errors can result in significant financial losses.

Legal and Government

AI errors in public sector or legal systems create civil liability and are classified as high-risk under the EU AI Act, requiring strict human oversight and explainability.

Critical Infrastructure (energy, telecom)

These sectors are increasingly regulated under AI safety frameworks, given the potential for cascading failures if autonomous agents make incorrect decisions in grid management or network operations.

Economic and Market Systems

Market data, supply chains, and economic infrastructure also require strict safeguards because manipulation could disrupt entire industries.

Organizations operating in these sectors must prioritize AI ethics, governance, and security.

How Does the GSDC Certified Agentic AI Professional Certification Help Professionals?

Agentic AI is reshaping how professionals and organizations use artificial intelligence by moving beyond passive tools toward systems that can plan, decide, and execute tasks with greater autonomy. This shift enables businesses to improve productivity, accelerate decision-making, streamline operations, and build more intelligent workflows across departments and functions.

As adoption grows, professionals need more than just awareness; they need practical knowledge of how Agentic AI works in real business environments. The GSDC Certified Agentic AI Professional certification helps learners understand the real-world application of Agentic AI, including implementation strategies, governance considerations, enterprise use cases, and the operational impact of autonomous AI systems.

This Certified Agentic AI Professional certification is especially valuable for professionals who want to stay relevant in the rapidly evolving AI landscape, contribute to AI-driven transformation, and build future-ready capabilities for 2026 and beyond. It serves as a strong credential for those aiming to lead innovation responsibly and apply Agentic AI with both strategic and practical confidence.

Conclusion

Autonomous AI agents represent the next phase of enterprise automation. They have the potential to transform business operations by performing complex tasks, improving productivity, and enabling scalable digital workflows.

However, building effective AI agents requires more than powerful models. Organizations must focus on:

- structured system architecture

- governance frameworks

- safety guardrails

- self-healing capabilities

- Human-on-the-Loop supervision with defined escalation authority and confidence thresholds

The future of AI will not eliminate human involvement. Instead, humans will transition from micromanagers to strategic supervisors, ensuring AI systems operate responsibly while unlocking their full potential.
