Ethical AI in Compliance Systems: Legal and Moral Challenges

Written by Emily Hilton

- What Is Ethical AI? Understanding the Concept

- Why Ethics Matters in AI Compliance Systems

- The Legal Landscape: Obligations and Challenges

- Moral Challenges of AI in Compliance

- Building an Ethical AI Framework

- Ethical AI Guidelines and Best Practices

- Certification and Skill Development

- Integrating Ethical AI into Organizational Culture

- Conclusion

At a time when artificial intelligence shapes nearly every aspect of life, from business to governance to society at large, the question of how to reconcile technology with ethics has become a central concern. With advanced algorithms at their disposal, companies can make faster decisions, manage risks more proactively, and potentially reduce their exposure to regulatory scrutiny.

However, the benefits of AI come with some very important questions: What does compliance mean for AI ethics? How can companies pursue the benefits of innovation when their reputation is at stake? And what forms of guidance, such as frameworks and certifications, are available for ethical AI in compliance systems?

This blog examines the current state of ethical AI, the legal and moral dilemmas organizations face, and how they can earn public trust and build sustainable systems by demonstrating a strong commitment to AI ethical compliance.

What Is Ethical AI? Understanding the Concept

At its core, what is ethical AI? Ethical AI refers to the responsible design, development, deployment, and governance of AI systems in a manner that respects human rights, upholds legal standards, and supports societal well-being. It means ensuring transparency, fairness, accountability, and privacy while mitigating harm and embedding respect for human values at every stage of the AI lifecycle. In compliance systems, ethical AI isn’t just a technical requirement; it’s a moral imperative that preserves trust and legitimacy in automated decision-making.

AI systems can affect lives in profound ways, from determining creditworthiness and healthcare outcomes to enforcing laws and regulations. Without ethical guardrails, AI may entrench biases, breach privacy, or operate outside legal boundaries, undercutting both societal trust and organizational integrity.

Why Ethics Matters in AI Compliance Systems

Compliance systems are designed to ensure that processes align with legal and regulatory obligations. When AI is integrated into these systems, ethical considerations are no longer optional; they are essential. Incorporating AI ethical compliance helps organizations not only meet regulatory mandates but also align technology with core ethical principles like fairness, transparency, and accountability.

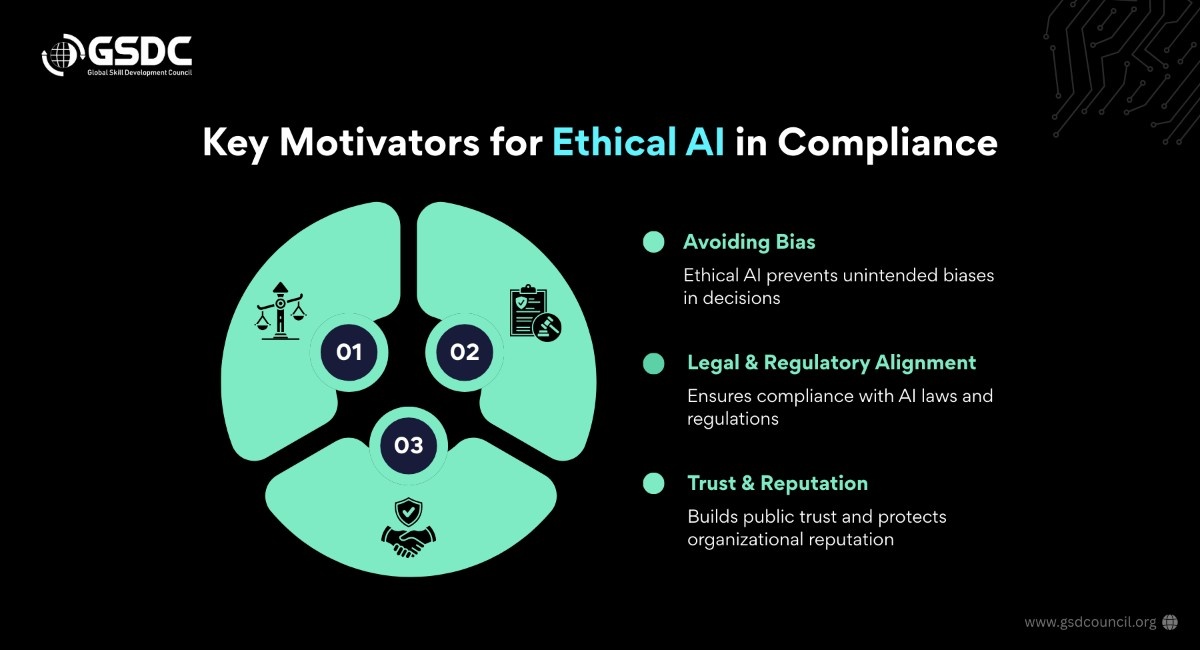

Key Motivators for Ethical AI in Compliance

- Avoiding Bias and Discrimination: AI decision-making can reflect unintended biases present in training data or model structures. Ethical AI frameworks help identify and mitigate such biases before they result in unlawful or unjust outcomes.

- Legal and Regulatory Alignment: Regulatory regimes like the European Union’s AI Act and global data protection laws increasingly demand explainable and accountable AI systems. Ethical AI frameworks support compliance with these emerging standards.

- Trust and Reputation: Public trust in AI technologies depends on transparent, trustworthy behavior. Demonstrating ethical compliance reduces reputational risk and builds confidence among consumers, partners, and regulators.

From an organizational perspective, ethical AI is not merely about risk avoidance; it’s about fostering responsible innovation. As AI continues to automate complex domains, ethics becomes the lens through which technology can serve society without causing harm.

The Legal Landscape: Obligations and Challenges

Lawmakers around the world are rapidly responding to the growth of AI. This evolving legal landscape adds complexity to compliance systems, prompting organizations to ask: How do we implement AI that complies with both existing law and ethical standards?

Global Regulatory Frameworks

Governments and regional blocs have either enacted or are developing detailed AI legislation. For example:

- European Union: The EU AI Act classifies AI systems by risk level and imposes stringent requirements on high-risk applications, including record-keeping, transparency, and human oversight.

- United States: The Federal Trade Commission and other agencies are prioritizing truthfulness and fairness in AI-powered consumer products.

- India: Emerging policy discussions emphasize transparency, accountability, and mitigating the adverse impacts of AI systems on disadvantaged groups.

These regulatory mechanisms require businesses not only to manage data protection and algorithmic compliance but also to uphold ethical codes. Navigating this territory requires legal and technical expertise, along with an ethical AI framework aligned with the organization's goals.

Legal Risks and Liability

AI-driven decisions can create legal liability if the system fails to meet legal compliance requirements. For example:

- If an AI system unlawfully discriminates against people based on protected characteristics, the company may face lawsuits under anti-discrimination law.

- A system that cannot be explained or supervised by humans may draw penalties from regulators in sectors such as finance or healthcare.

- Malfunctioning automation that causes injury could expose the company to claims under product liability or tort law.

These legal risks make it necessary to incorporate ethical perspectives into risk management and compliance systems, not merely as best practices but as legal safeguards.

Moral Challenges of AI in Compliance

While legal compliance addresses external obligations, moral challenges focus on what organizations should do, beyond what they must do. These challenges spring from the profound social impact of automated decisions and the ethical expectations of stakeholders.

Human Agency vs. Automated Authority

One central moral concern is the degree to which AI ought to replace or augment human decision-making. Ethical AI systems should support human autonomy, not diminish it. Rather than removing human oversight, AI should empower people to make better-informed choices, especially in compliance decisions with ethical consequences.

Fairness and Equity

AI systems can inadvertently reinforce systemic inequalities. For example, automated risk assessments in credit scoring or criminal justice can disproportionately harm historically marginalized communities if not carefully designed. Ethical frameworks insist that fairness be deliberated, tested, and safeguarded.

Transparency and Explainability

Opaque “black box” AI models pose moral questions about accountability and trust. Stakeholders have a right to understand how significant decisions are made. This demands transparent mechanisms for reporting, auditing, and explaining AI behavior to affected parties.
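For a simple linear scoring model, one way to explain an individual decision to the affected party is to report the features that contributed most to the score. The sketch below assumes a hypothetical linear credit-risk model; the feature names, weights, and the `top_reason_codes` helper are illustrative and not drawn from any regulation or product. More complex models generally need dedicated explainability techniques instead.

```python
from typing import Dict, List, Tuple


def top_reason_codes(
    weights: Dict[str, float],
    features: Dict[str, float],
    k: int = 3,
) -> List[Tuple[str, float]]:
    """Return the k features with the largest absolute contribution
    (weight * value) to a linear model's score."""
    contributions = [
        (name, weights[name] * value)
        for name, value in features.items()
        if name in weights  # ignore features the model does not use
    ]
    # Rank by magnitude so both score-raising and score-lowering drivers surface.
    contributions.sort(key=lambda item: abs(item[1]), reverse=True)
    return contributions[:k]


# Hypothetical model weights and one applicant's normalized feature values.
weights = {"missed_payments": 0.8, "debt_to_income": 0.5, "account_age_years": -0.3}
applicant = {"missed_payments": 2.0, "debt_to_income": 0.6, "account_age_years": 4.0}

for name, contribution in top_reason_codes(weights, applicant, k=2):
    print(f"{name}: {contribution:+.2f}")
# missed_payments: +1.60
# account_age_years: -1.20
```

Signed contributions let a reviewer tell which factors pushed the score up and which pulled it down, which is the kind of information an affected person would need in order to understand or contest a decision.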

Human Values and Cultural Contexts

Moral challenges are not monolithic; they vary across cultural and societal contexts. AI systems deployed globally must respect diverse norms and values, which stresses the importance of local ethical evaluation and stakeholder involvement.

Building an Ethical AI Framework

A well-structured ethical AI framework guides organizations to integrate ethics into governance practices effectively. It clarifies principles, assigns responsibilities, and establishes processes for ongoing ethical evaluation.

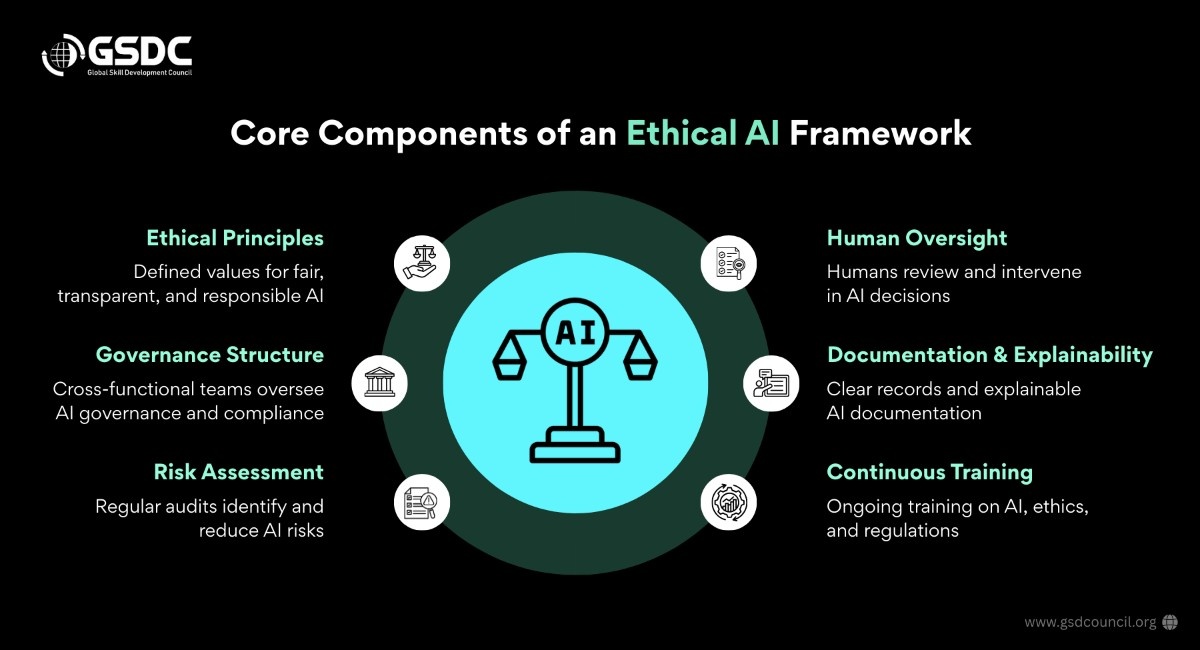

Core Components of an Ethical AI Framework

- Ethical Principles: Clearly articulated values, such as fairness, transparency, accountability, and privacy, often derived from established ethical principles of AI and guidelines from international bodies.

- Governance Structure: A cross-functional governance team that oversees compliance and ethical review.

- Risk Assessment: Regular audits of AI systems to identify and mitigate ethical risks.

- Human Oversight: Defined roles for human review and intervention in automated decisions.

- Documentation and Explainability: Records, model cards, and explainability reports maintained for both internal use and regulatory review.

- Continuous Training: Ongoing education and training for technical and compliance teams to stay updated on best practices and legal updates.
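The Human Oversight and Documentation components can be made concrete with a routing rule: automated decisions that are adverse or low-confidence go to a human reviewer, and every decision is logged for later audit. The sketch below is a minimal illustration under assumptions; the 0.9 confidence threshold, the `Decision` fields, and the `route_decision` helper are hypothetical choices, not requirements of the EU AI Act or any other law.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

# Hypothetical policy: below this model confidence, a human must review.
CONFIDENCE_THRESHOLD = 0.9


@dataclass
class Decision:
    case_id: str
    outcome: str        # e.g. "approve" or "deny"
    confidence: float   # model confidence in the outcome, 0.0 to 1.0
    model_version: str


def route_decision(decision: Decision, audit_log: list) -> str:
    """Decide whether an automated outcome can stand or needs human review,
    and append an audit record either way."""
    adverse = decision.outcome == "deny"
    needs_review = adverse or decision.confidence < CONFIDENCE_THRESHOLD
    route = "human_review" if needs_review else "auto"
    audit_log.append({
        **asdict(decision),
        "route": route,
        "timestamp": datetime.now(timezone.utc).isoformat(),
    })
    return route


log = []
print(route_decision(Decision("C-001", "approve", 0.97, "v1.2"), log))  # auto
print(route_decision(Decision("C-002", "deny", 0.99, "v1.2"), log))     # human_review
print(route_decision(Decision("C-003", "approve", 0.62, "v1.2"), log))  # human_review
print(json.dumps(log[1], indent=2))
```

Sending every adverse outcome to a person, regardless of confidence, reflects the idea that AI should support human judgment rather than replace it in high-stakes cases; each organization would set its own review criteria and retention rules for the audit log.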

Importantly, an ethical AI framework is not static. As ethical norms evolve and as legal standards such as the EU AI Act and data privacy laws mature, organizations must regularly update their frameworks to reflect new expectations.

Ethical AI Guidelines and Best Practices

Adopting AI ethical guidelines helps embed ethical thinking into everyday practice. These guidelines typically cover:

- Fairness and Non-Discrimination: Use diverse datasets and test for bias continuously.

- Privacy Protection: Ensure AI respects individual privacy rights and data protection laws.

- Transparency: Implement tools for explainable AI and clear communication.

- Accountability: Assign clear ownership for ethical compliance and outcomes.

- Safety and Security: Protect AI systems from misuse, manipulation, or adversarial attacks.
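Continuous bias testing can start with a simple group-fairness metric. One commonly used screen is the disparate impact ratio: each group's rate of favorable outcomes divided by the most-favored group's rate, with ratios below 0.8 flagged (the "four-fifths rule" from US employment-selection guidance). The sketch below assumes outcomes are available as (group, favorable) pairs; the group labels and counts are hypothetical, and a ratio above 0.8 does not by itself show that a system is fair or lawful.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def disparate_impact(
    records: Iterable[Tuple[str, bool]],
    threshold: float = 0.8,
) -> Dict[str, Tuple[float, bool]]:
    """For each group, return (impact ratio, flagged), where the ratio is the
    group's favorable-outcome rate divided by the highest group rate."""
    totals: Dict[str, int] = defaultdict(int)
    favorable: Dict[str, int] = defaultdict(int)
    for group, is_favorable in records:
        totals[group] += 1
        if is_favorable:
            favorable[group] += 1

    if not totals:
        raise ValueError("no records supplied")
    rates = {g: favorable[g] / totals[g] for g in totals}
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group received a favorable outcome")
    # Flag any group whose ratio falls below the threshold.
    return {g: (rate / best, rate / best < threshold) for g, rate in rates.items()}


# Hypothetical approvals: group A 60/100, group B 40/100.
records = (
    [("A", True)] * 60 + [("A", False)] * 40
    + [("B", True)] * 40 + [("B", False)] * 60
)
result = disparate_impact(records)
for group, (ratio, flagged) in sorted(result.items()):
    print(f"{group}: ratio={ratio:.2f} flagged={flagged}")
# A: ratio=1.00 flagged=False
# B: ratio=0.67 flagged=True
```

Running a check like this on each model release and on live decisions over time is one way to turn "test for bias continuously" into a repeatable control, alongside other fairness metrics suited to the use case.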

Combining these guidelines with governance policies ensures that organizations are equipped to handle both external audits and internal ethical reviews.

Certification and Skill Development

One tangible way organizations demonstrate commitment to ethical AI is through certifications and training that underpin AI ethical compliance and governance maturity.

Risk Compliance Certification and Training Programs

Certifications offer structured learning and validation of expertise. GSDC’s Risk Compliance Certification includes a broad syllabus that covers risk management, compliance frameworks, and regulatory expectations for AI technologies.

The Certified Generative AI in Risk & Compliance Program is an advanced certification focused on generative AI, a rapidly expanding class of AI tools, with an emphasis on ethical, legal, and governance considerations. These programs equip professionals with actionable insights into risk assessment, policy design, and governance mechanics.

Pursuing such credentials signals to stakeholders that an organization or individual prioritizes both legal compliance and ethical AI governance.

Integrating Ethical AI into Organizational Culture

Embedding AI ethics into organizational culture is as important as technological implementation. Leadership should set the tone by prioritizing ethical considerations in strategic planning, budgeting for governance resources, and incentivizing ethical innovation.

Cross-Functional Collaboration

AI ethics in compliance can’t be siloed. It requires collaboration across legal, technical, operational, and ethical domains. Interdisciplinary teams can preemptively identify risks, balance conflicting priorities, and ensure ethical AI practices are synchronized with business goals.

Stakeholder Engagement

Engaging stakeholders such as end users, regulatory bodies, and independent ethicists enriches ethical evaluation by surfacing diverse perspectives. This inclusive approach enhances legitimacy and trust.

Conclusion

The integration of ethical AI into compliance systems is no longer optional; it’s a business and societal necessity. Ethical AI not only strengthens legal compliance but also enriches organizational trust, drives responsible innovation, and aligns technology with human values.

By understanding what ethical AI is, building comprehensive ethical frameworks, adhering to AI ethical guidelines, and pursuing professional development such as risk compliance certification and specialized programs like the Certified Generative AI in Risk & Compliance program, organizations can navigate the complex and evolving landscape of AI governance. Ethical AI is more than a set of rules; it is a commitment to harness the power of AI in a way that respects human dignity, minimizes harm, and advances the common good.
