Selecting the Right AI Governance Metrics Under ISO 42001

Written by Maya Mishra


As organizations accelerate their adoption of artificial intelligence, the conversation is no longer just about innovation; it’s about responsible AI and sustainable growth. Businesses want to deploy AI quickly to gain a competitive advantage, yet they must also manage risks such as bias, data misuse, security vulnerabilities, and increasing regulatory scrutiny. To address these challenges effectively, organizations need a strong AI governance framework that ensures AI systems are developed, deployed, and monitored responsibly.

This is where ISO/IEC 42001, the world’s first Artificial Intelligence Management System (AIMS) standard, plays a critical role. Designed to help organizations establish structured governance, accountability, and risk management for AI, the standard provides a practical foundation for aligning innovation with trust, ethics, and compliance.

The webinar “Selecting the Right AI Governance Metrics Under ISO 42001” highlights how organizations can move beyond theoretical governance models and implement measurable, evidence-based metrics that support transparency, safety, compliance, and business value. By focusing on the right performance indicators, businesses can strengthen their AI governance framework, demonstrate commitment to responsible AI, and ensure that AI initiatives deliver outcomes that are not only innovative but also secure, fair, and aligned with organizational objectives.

Understanding ISO 42001: The Foundation of AI Governance

ISO 42001 provides a structured management system for governing AI across its entire lifecycle, from design and procurement to deployment, monitoring, and continuous improvement. Like other ISO management system standards, it follows a Plan–Do–Check–Act (PDCA) model, ensuring AI initiatives remain aligned with business objectives while continuously managing risks.

The goal of ISO 42001 is not to slow innovation but to enable organizations to:

- Deploy AI confidently with proper controls in place

- Build trust among customers, regulators, and stakeholders

- Ensure transparency in how AI decisions are made

- Reduce operational, ethical, and legal risks

- Accelerate adoption through structured governance

In an AI-driven economy, trust becomes a competitive differentiator. Organizations that can demonstrate responsible AI practices are more likely to gain customer confidence and regulatory acceptance.

What Is AI Governance and Why Does It Matter?

AI governance refers to the policies, accountability structures, risk controls, and monitoring mechanisms that ensure AI systems operate ethically, securely, and effectively.

Unlike traditional IT governance, AI governance must address unique challenges:

- Algorithmic bias and fairness risks

- Model hallucinations or inaccuracies

- Data privacy and ownership concerns

- Security vulnerabilities in AI pipelines

- Lack of explainability in automated decisions

For example, if an AI-powered hiring system repeatedly rejects certain candidates, stakeholders will demand to know whether the algorithm is fair, transparent, and accountable. Similarly, customers increasingly question how their personal data is used when AI drives recommendations or targeted advertising. These growing concerns highlight the importance of applying strong AI governance principles such as fairness, accountability, transparency, privacy, and risk management across every stage of the AI lifecycle.

Effective governance answers these concerns with evidence, controls, and measurable performance indicators. This is exactly where ISO/IEC 42001 becomes essential, providing organizations with a structured framework to govern AI systems responsibly, align with ethical expectations, and demonstrate compliance in an increasingly AI-driven world.

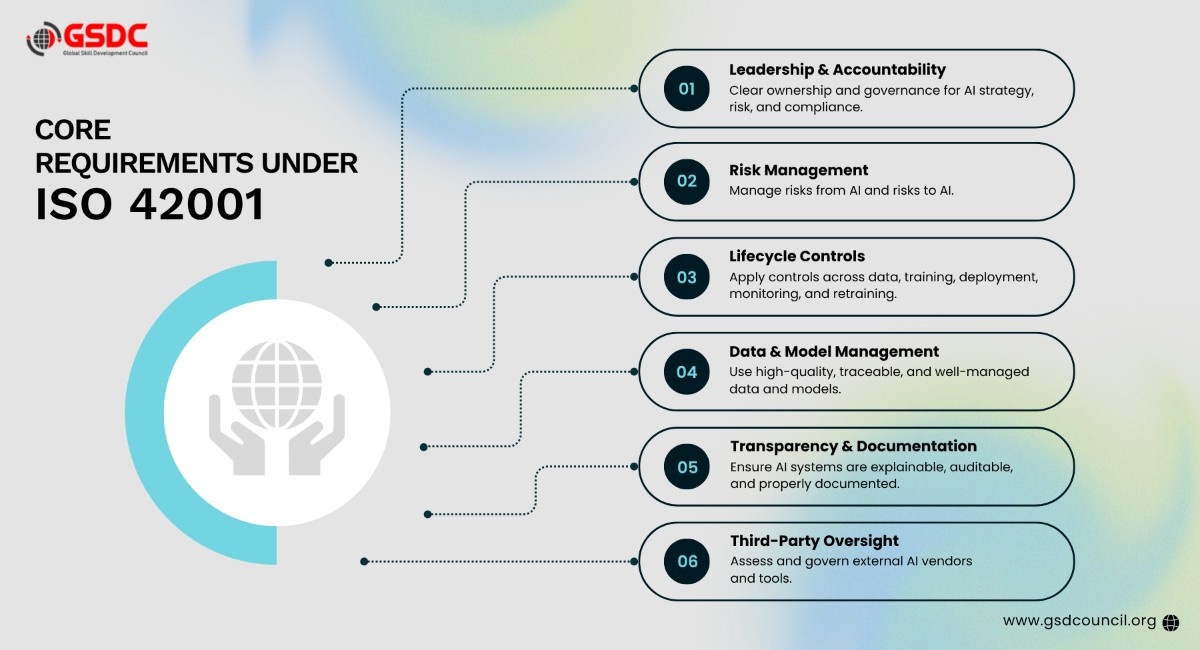

Core Requirements Under ISO 42001

ISO 42001 establishes mandatory management system requirements that organizations must implement, including:

1. Leadership and Accountability

AI governance must have clear ownership. Many organizations are now introducing roles such as Chief AI Officer (CAIO) to oversee AI strategy, risk, and compliance.

2. Risk Management

Organizations must identify two types of risk:

- Risks from AI (bias, discrimination, data leakage)

- Risks to AI (model failure, security threats, misconfiguration)

3. Lifecycle Controls

Controls must be embedded across the AI lifecycle:

- Data acquisition and validation

- Model training and testing

- Deployment and monitoring

- Continuous evaluation and retraining

4. Data and Model Management

High-quality, sanitized, and traceable data is essential. Poor data leads directly to poor AI decisions.

5. Transparency and Documentation

AI systems must be explainable, auditable, and supported by clear documentation for internal and external stakeholders.

6. Third-Party Oversight

Organizations increasingly rely on external AI vendors or open-source tools, making supplier governance and due diligence critical.

Why Metrics Are the Backbone of AI Governance

Governance without measurement is ineffective. AI governance must go beyond policies and intentions; it requires organizations to translate objectives, risks, and expected outcomes into clear, quantifiable metrics. This is a core expectation of ISO 42001, which encourages organizations to establish measurable controls and performance indicators that can validate whether AI systems are operating responsibly and effectively.

Metrics help organizations:

- Verify whether AI is delivering intended business value

- Detect early signs of model drift or failure

- Demonstrate compliance during audits

- Enable evidence-based decision-making

- Provide transparency to leadership and regulators

In practice, these metrics act as essential AI governance tools, helping organizations monitor performance, accountability, fairness, and risk throughout the AI lifecycle. They also strengthen AI risk management under ISO 42001 by making it easier to identify vulnerabilities, assess control effectiveness, and respond proactively before issues escalate.

For example, a company aiming to increase production by 30% using AI must measure whether deployed AI systems are secure, reliable, compliant, and genuinely contributing to that business objective. Without measurable outcomes, even the most advanced AI initiatives can fall short of both performance and governance expectations.

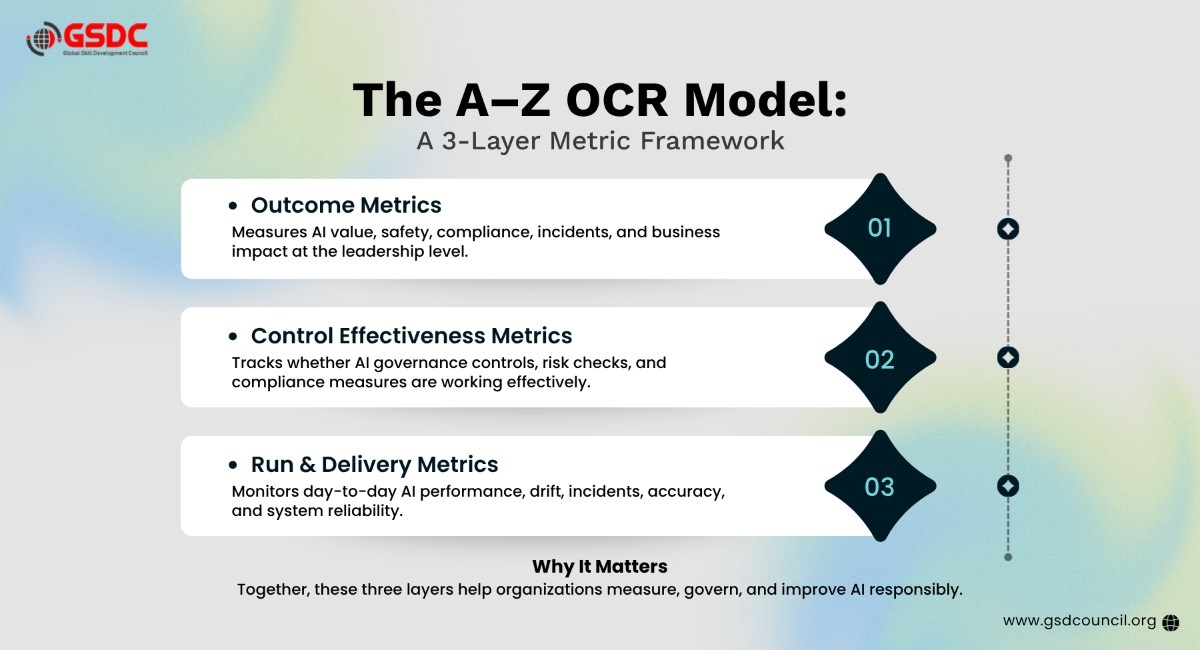

The A–Z OCR Model: A Three-Layer Metric Framework

The webinar introduced a practical framework, the A–Z OCR Model, which organizes AI governance metrics into three interconnected layers (Outcome, Control effectiveness, and Run and delivery), each tailored to a different group of stakeholders.

1. Outcome Metrics (Board-Level View)

These metrics answer the leadership’s primary question:

“Are we safe, compliant, and gaining value from AI?”

Examples include:

- Percentage of AI use cases formally classified and approved

- Number of high-severity AI incidents

- AI risk posture relative to organizational risk appetite

- Regulatory non-conformities identified during audits

- Business value realized versus expected outcomes

- AI-attributed customer harm rates

These indicators provide strategic assurance and support executive decision-making.
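As a minimal sketch of how the first outcome metric in the list above could be computed, the Python below derives the percentage of AI use cases that are both classified and approved from a small inventory. The `AIUseCase` record, its field names, the risk class labels, and the sample entries are all hypothetical; a real inventory would come from whatever register the organization already maintains.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AIUseCase:
    """Hypothetical inventory record for one AI use case."""
    name: str
    risk_class: Optional[str]  # None means the use case is not yet classified
    approved: bool


def classified_and_approved_pct(use_cases: List[AIUseCase]) -> float:
    """Share of use cases that are both classified and approved, in percent."""
    if not use_cases:
        return 0.0
    compliant = sum(
        1 for u in use_cases if u.risk_class is not None and u.approved
    )
    return 100.0 * compliant / len(use_cases)


# Illustrative data only.
inventory = [
    AIUseCase("resume-screening", "high", True),
    AIUseCase("chat-assistant", "limited", True),
    AIUseCase("demand-forecast", None, False),   # unclassified
    AIUseCase("fraud-scoring", "high", False),   # classified, not approved
]

pct = classified_and_approved_pct(inventory)
print(f"{pct:.1f}% of AI use cases are classified and approved")
```

Reporting the two unmet conditions separately (unclassified versus unapproved) may be more useful to a board than the single percentage, since each points to a different gap.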

2. Control Effectiveness Metrics (Governance Layer)

This layer evaluates whether governance controls are functioning properly.

Typical measures include:

- AI impact assessment completion rate

- Control testing pass rates (the webinar recommends a target above 90%)

- Fairness and bias validation success for high-risk models

- Data governance compliance (lineage, retention, access control)

- Third-party assurance and vendor due diligence coverage

- Monitoring enablement across production AI systems

These metrics provide assurance that policies are not just documented but operationalized.
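One way to turn a control testing pass rate into something checkable is sketched below. It assumes control test results are available as a simple name-to-result mapping, which is an illustrative simplification; the control names are hypothetical, and the 0.90 target reflects the pass-rate recommendation mentioned above rather than a figure taken from the standard itself.

```python
PASS_RATE_TARGET = 0.90  # assumed target, based on the ">90%" recommendation

# Hypothetical results of the latest control test cycle (True = passed).
control_tests = {
    "impact-assessment-completed": True,
    "bias-validation-high-risk": True,
    "data-lineage-recorded": False,
    "vendor-due-diligence": True,
    "production-monitoring-enabled": True,
}


def pass_rate(results):
    """Fraction of control tests that passed."""
    if not results:
        raise ValueError("no control test results supplied")
    return sum(results.values()) / len(results)


rate = pass_rate(control_tests)
meets_target = rate > PASS_RATE_TARGET
failed = sorted(name for name, ok in control_tests.items() if not ok)

print(f"pass rate: {rate:.0%}, meets target: {meets_target}")
print("failed controls:", failed)
```

Listing the failed controls alongside the rate keeps the metric actionable: the number says whether there is a problem, the list says where to look.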

3. Run and Delivery Metrics (Operational Layer)

These metrics focus on day-to-day AI system performance.

Examples include:

- Model drift detection and response times

- Mean time to detect (MTTD) and mean time to respond (MTTR) to AI incidents

- System latency and availability

- Accuracy and retraining frequency

- Monitoring of misuse or anomalous behavior

Operational metrics feed upward into governance dashboards, ensuring continuous improvement.
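The incident timing metrics above can be illustrated with a short calculation. This sketch assumes each incident record carries three timestamps (when the issue started, when it was detected, and when a response was made); the field names and sample incidents are hypothetical. MTTD is taken here as the mean of detection time minus start time, and MTTR as the mean of response time minus detection time, which is one common convention among several.

```python
from datetime import datetime
from statistics import mean

# Illustrative incident log; keys and values are assumptions for this sketch.
incidents = [
    {
        "started": datetime(2026, 1, 5, 9, 0),
        "detected": datetime(2026, 1, 5, 9, 30),
        "responded": datetime(2026, 1, 5, 11, 30),
    },
    {
        "started": datetime(2026, 1, 12, 14, 0),
        "detected": datetime(2026, 1, 12, 15, 30),
        "responded": datetime(2026, 1, 12, 16, 30),
    },
]


def mean_hours(records, start_key, end_key):
    """Mean elapsed time in hours between two timestamps across records."""
    durations = [
        (r[end_key] - r[start_key]).total_seconds() / 3600 for r in records
    ]
    return mean(durations)


mttd_hours = mean_hours(incidents, "started", "detected")
mttr_hours = mean_hours(incidents, "detected", "responded")

print(f"MTTD: {mttd_hours:.1f} h, MTTR: {mttr_hours:.1f} h")
```

Whichever convention is chosen for the start and end points, documenting it next to the metric avoids disputes when the numbers reach a governance dashboard.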

Aligning Metrics with Business Objectives

One of the most critical lessons from ISO 42001 is that metrics must align with organizational goals. There is no universal set of KPIs; each organization must tailor its metrics based on:

- Industry risk profile (healthcare vs. retail vs. manufacturing)

- Regulatory exposure

- AI maturity level

- Nature of AI use cases

Metrics should always define:

- What is being measured

- How it is calculated

- Target thresholds

- Monitoring frequency

- Ownership and escalation pathways

- Evidence sources (logs, dashboards, approvals)

This ensures accountability and auditability.
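The six elements listed above map naturally onto a small record type. The following is a sketch under stated assumptions, not a format prescribed by ISO 42001: the `MetricDefinition` class, its field names, and the example values (including the 95% target and the named owner) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class MetricDefinition:
    """Hypothetical record capturing the elements a metric should define."""
    name: str                          # what is being measured
    calculation: str                   # how it is calculated
    target: float                      # target threshold
    higher_is_better: bool             # direction in which the target applies
    frequency: str                     # monitoring frequency
    owner: str                         # accountable owner
    escalation: str                    # escalation pathway on a breach
    evidence_sources: Tuple[str, ...]  # logs, dashboards, approvals

    def meets_target(self, value: float) -> bool:
        """Compare an observed value with the target in the right direction."""
        if self.higher_is_better:
            return value >= self.target
        return value <= self.target


# Example values are illustrative only.
impact_assessment_rate = MetricDefinition(
    name="AI impact assessment completion rate",
    calculation="completed assessments / in-scope AI systems * 100",
    target=95.0,
    higher_is_better=True,
    frequency="monthly",
    owner="Head of AI Governance",
    escalation="AI risk committee",
    evidence_sources=("assessment register", "approval records"),
)

print(impact_assessment_rate.meets_target(97.0))
print(impact_assessment_rate.meets_target(80.0))
```

Making the record immutable (`frozen=True`) is a deliberate choice in this sketch: a change to a target or owner then has to be made by creating a new definition, which leaves a clearer trail for auditors.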

The Role of Continuous Improvement

ISO 42001 treats AI governance as a dynamic process. Metrics are not static; they form feedback loops that refine models, controls, and strategy.

For example:

- Rising incident trends may trigger retraining or redesign

- Poor value realization may require reassessing AI use cases

- Audit findings may lead to stronger supplier controls

This creates a self-correcting governance ecosystem, where insights from metrics strengthen both compliance and innovation.
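The three feedback examples above can be expressed as simple rules over metric values. The sketch below is illustrative only: the function names, the three-period comparison window, and the 70% value realization threshold are assumptions made for the example, and real triggers would be set by each organization's risk appetite.

```python
def is_rising(counts, window=3):
    """True if the latest `window` periods total more than the prior window."""
    if len(counts) < 2 * window:
        return False  # not enough history to compare two windows
    recent = counts[-window:]
    previous = counts[-2 * window:-window]
    return sum(recent) > sum(previous)


def improvement_actions(incident_counts, value_realized_pct,
                        open_supplier_findings, min_value_pct=70.0):
    """Map metric signals to follow-up actions (thresholds are hypothetical)."""
    actions = []
    if is_rising(incident_counts):
        actions.append("review model for retraining or redesign")
    if value_realized_pct < min_value_pct:
        actions.append("reassess AI use case")
    if open_supplier_findings > 0:
        actions.append("strengthen supplier controls")
    return actions


# Monthly incident counts, value realized versus plan, open supplier findings.
print(improvement_actions([1, 1, 2, 2, 3, 4], 85.0, 0))
print(improvement_actions([3, 3, 3, 3, 3, 3], 50.0, 2))
```

Rules like these only flag that a review is due; deciding whether to retrain, redesign, or retire a system remains a human governance decision.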

Why Earn the GSDC Certified ISO 42001 Lead Auditor in 2026?

In 2026, as AI regulations, risk expectations, and governance demands continue to grow, GSDC’s Certified ISO 42001 Lead Auditor certification helps professionals build practical expertise in auditing AI Management Systems, assessing AI risks, and validating responsible AI controls.

The certification strengthens your credibility in AI governance, supports compliance readiness, and prepares you to lead trustworthy, measurable, and standards-aligned AI assurance initiatives across organizations.

Conclusion

AI governance under ISO 42001 is not just about compliance; it is about building trustworthy, measurable, and accountable AI systems that support long-term business success. A strong AI governance framework helps organizations move beyond policy statements and implement practical oversight mechanisms that ensure AI remains aligned with ethical, operational, and regulatory expectations.

By selecting the right metrics aligned to risk, performance, and business outcomes, organizations can strengthen responsible AI practices and ensure that AI systems deliver value without compromising fairness, transparency, or accountability. This approach is central to the ISO 42001 standard, which enables businesses to establish structured governance processes and continuous monitoring for AI initiatives.

Ultimately, effective AI governance under ISO 42001 allows organizations to foster innovation with confidence, improve stakeholder trust, and continuously enhance AI effectiveness while maintaining safety, transparency, and regulatory readiness in an evolving digital landscape.
