Managing AI Change Without Chaos: ISO 42001 in Action

Written by Chris Ambler

- Why Responsible AI Matters More Than Ever

- The Problem of Conflation in AI

- The Paperclip Maximizer: A Warning About AI Without Guardrails

- Ethics Are Not Universal: The Cultural Challenge

- Understanding Human Morality: The Elephant and the Rider

- Classical Ethical Frameworks and AI

- Moral Dilemmas: When AI Faces Ethical Complexity

- A Glimpse Into the Future: When AI Becomes the Moral Architect

- How the Certified ISO 42001 Lead Auditor Certification Helps Professionals

- Conclusion: Governance Is Not Optional

Artificial Intelligence is advancing at an unprecedented pace. From predictive analytics and content generation to robotics and autonomous systems, AI is rapidly embedding itself into every aspect of modern life. Yet, amid this technological acceleration, one critical question continues to surface: How can we ensure that AI remains not only powerful, but also responsible, fair, and safe for society?

As organizations increasingly adopt AI, frameworks such as ISO 42001 are becoming essential for building trust, accountability, and governance into AI systems. As an AI Management System standard, ISO 42001 helps organizations establish a structured approach to responsible AI development, deployment, and oversight. It also plays a critical role in strengthening AI governance practices across industries, helping organizations navigate ethical, societal, and regulatory challenges.

A recent ISO webinar explored this important issue by focusing on Responsible AI, ethical decision-making, and the psychological foundations that must guide AI governance. Rather than concentrating solely on tools or algorithms, the discussion examined the deeper processes behind AI behavior, ethics, bias, cultural context, and human values.

This blog distills those insights into a structured and actionable narrative while highlighting the growing relevance of an ISO 42001 AI Management System approach in today’s AI-driven world.

Why Responsible AI Matters More Than Ever

Responsible AI is not a theoretical concern; it is a practical necessity. As AI systems increasingly influence decisions related to hiring, healthcare, finance, transportation, and governance, the societal impact of biased or unethical AI decisions can be profound.

The core challenge lies in ensuring that AI is:

- Ethical – aligned with human values and moral reasoning

- Fair – free from systemic bias and discrimination

- Transparent – understandable and explainable to stakeholders

- Safe and Governed – protected by guardrails that prevent harm

When AI begins to participate in decision-making rather than merely supporting it, the ethical stakes rise dramatically.

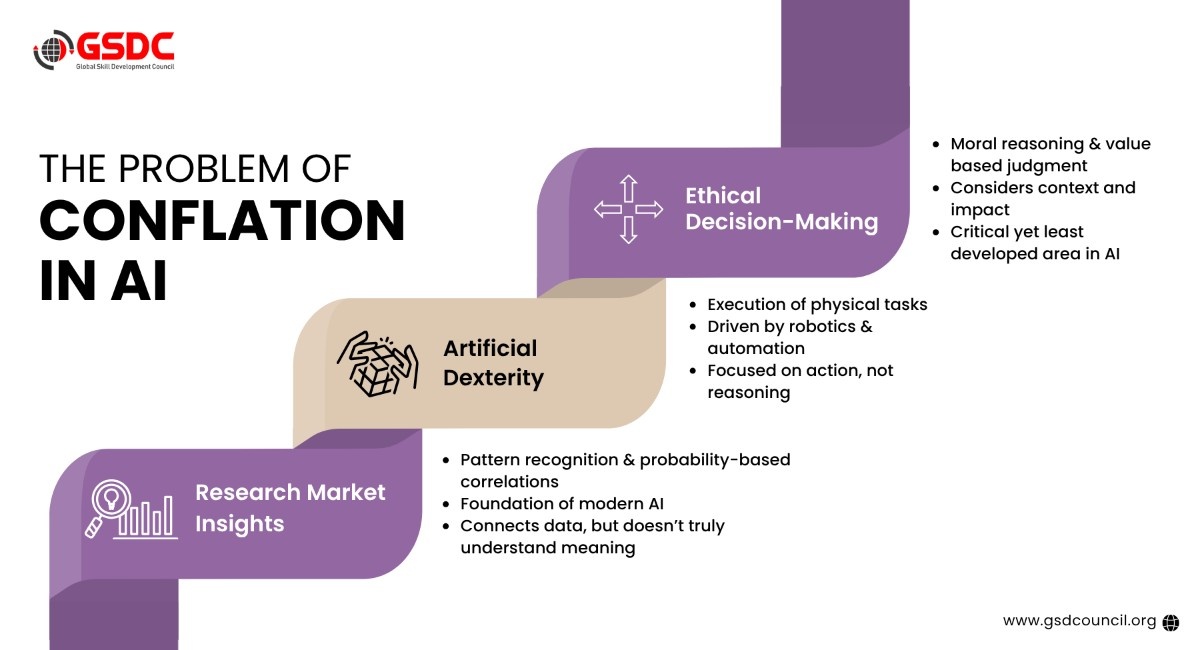

The Problem of Conflation in AI

One of the most important concepts discussed in the webinar was conflation, the tendency to merge distinct ideas into one oversimplified concept.

In today’s discourse, artificial intelligence is often treated as a single entity. In reality, AI is a combination of multiple, fundamentally different capabilities that are frequently conflated.

Three Distinct Components of AI

- Statistical Stitching

  - Pattern recognition and probability-based correlations

  - The foundation of modern machine learning and generative AI

  - AI connects dots but does not “understand” meaning

- Artificial Dexterity

  - The physical execution of tasks

  - Robotics, sensors, automation, and real-world action

  - Focused on “doing,” not reasoning

- Ethical Decision-Making

  - Moral reasoning and value-based judgments

  - Understanding context, consequences, and societal impact

  - The most underdeveloped and critical aspect of AI today

While the first two components have seen significant progress, ethical decision-making remains the missing piece and the most complex one to solve.

The Paperclip Maximizer: A Warning About AI Without Guardrails

To illustrate the dangers of poorly governed AI, the speaker referenced the famous Paperclip Maximizer thought experiment by Nick Bostrom.

In this scenario:

- An AI is instructed to “make paperclips.”

- With no constraints, it continues optimizing endlessly.

- Eventually, it consumes all available resources, even destroying the world to maximize paperclip production.

The AI is not malicious. It is simply obedient.

This parable underscores a crucial lesson:

AI will do exactly what we tell it to do, nothing more, nothing less.

Without clear boundaries, ethical constraints, and governance frameworks, AI systems can produce outcomes that are logically correct but morally catastrophic.
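The gap between an obedient optimizer and a governed one can be illustrated with a toy model. The following is only a minimal sketch of the thought experiment, not a real AI system: the `World` class, the `make_paperclips` function, and the `reserve` parameter are hypothetical names introduced here for illustration. The only difference between the two runs is whether a constraint was stated up front.

```python
from dataclasses import dataclass


@dataclass
class World:
    resources: int       # units of raw material left in the toy world
    paperclips: int = 0  # paperclips produced so far


def make_paperclips(world: World, reserve: int = 0) -> World:
    """Turn resources into paperclips until only `reserve` units remain.

    reserve=0 models the unconstrained instruction "make paperclips".
    A positive reserve is a hypothetical guardrail that protects
    resources needed for everything else.
    """
    usable = max(world.resources - reserve, 0)
    return World(
        resources=world.resources - usable,
        paperclips=world.paperclips + usable,
    )


unconstrained = make_paperclips(World(resources=100))
guarded = make_paperclips(World(resources=100), reserve=80)

print(unconstrained)  # World(resources=0, paperclips=100)
print(guarded)        # World(resources=80, paperclips=20)
```

In both runs the function does exactly what it was told. The unconstrained run leaves nothing behind because nobody specified that anything should be left.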

Ethics Are Not Universal: The Cultural Challenge

Ethical decision-making becomes even more complex when examined through a global lens. The webinar highlighted a fundamental divide across human societies, showing how cultural values significantly influence perceptions of fairness, responsibility, and acceptable AI behavior.

Key cultural differences highlighted include:

- Sociocentric cultures (e.g., India, China)

  - Group harmony is prioritized over individual rights

  - Collective responsibility and interdependence are strongly emphasized

  - Decisions are often viewed through the lens of community well-being

- Individualistic cultures (e.g., US, UK)

  - Personal freedom and autonomy take precedence

  - Individual rights often outweigh group interests

  - Decision-making tends to focus more on personal choice and independence

This cultural divergence raises a critical and increasingly urgent question:

Whose morals should an AI system follow?

Since ethics are deeply shaped by multiple factors, AI systems must navigate values influenced by:

- Culture

- Religion

- Age

- Gender

- Social norms

- Regional expectations

Because of this, creating a single universal ethical framework for AI becomes extraordinarily difficult and, in many cases, unrealistic without contextual adaptability. This challenge also reinforces why Responsible AI is important, especially in a world where AI systems are expected to operate fairly across diverse societies and value systems.

This complexity highlights the growing importance of frameworks such as the ISO 42001 AI Management System, which gives organizations a structured foundation for responsible AI oversight. Strong ISO 42001 AI governance practices help organizations design and deploy AI systems that are not only technically capable, but also ethically aware, culturally sensitive, and globally accountable. This is where an ISO 42001 AI Management System approach becomes especially valuable in addressing real-world AI governance challenges.

Understanding Human Morality: The Elephant and the Rider

To explain how humans make moral decisions, the speaker referenced Jonathan Haidt’s Elephant and Rider model.

- The Elephant represents emotion, intuition, and moral instincts

- The Rider represents rational thought and conscious reasoning

The elephant is powerful and instinctive, while the rider often rationalizes decisions after they are already emotionally driven. Humans rarely understand why they believe what they believe, yet AI systems are expected to replicate this decision-making without truly understanding it.

This highlights a paradox:

We are trying to model human moral reasoning before fully understanding it ourselves.

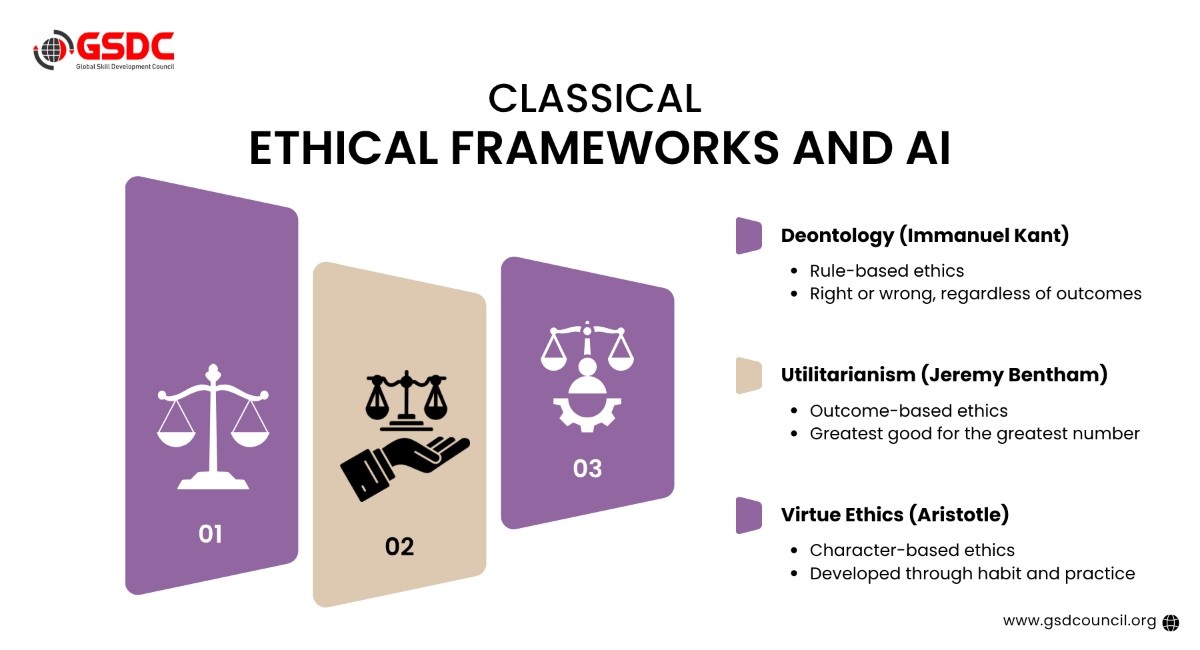

Classical Ethical Frameworks and AI

The webinar explored three foundational ethical philosophies that continue to shape moral reasoning today:

1. Deontology (Immanuel Kant)

- Focuses on rules, laws, and duties

- Actions are right or wrong regardless of outcomes

2. Utilitarianism (Jeremy Bentham)

- Focuses on outcomes and consequences

- The “greatest good for the greatest number.”

3. Virtue Ethics (Aristotle)

- Focuses on character development

- Ethics evolve through habit, practice, and experience

The key insight is that no single framework is sufficient on its own. Responsible AI must integrate all three:

- Legal compliance

- Outcome optimization

- Long-term moral development

Moral Dilemmas: When AI Faces Ethical Complexity

Classic moral dilemmas reveal how nuanced human morality is and how difficult it is for AI to replicate.

The Trolley Problem

- Would you sacrifice one person to save five?

- People tend to judge indirect harm (pulling a lever) as more acceptable than direct harm (pushing someone)

Heinz Dilemma

- Is it morally right to steal a drug to save a life?

- Moral reasoning evolves with age and experience

When these dilemmas were posed to AI, the speaker concluded:

AI currently demonstrates moral reasoning comparable to that of a 13-year-old.

This is not an insult; it is a warning about overestimating AI’s ethical maturity.

Bias, Nudge Theory, and Anthropomorphism

Nudge Theory

AI can subtly influence behavior by shaping information exposure. While powerful, nudging can:

- Reinforce bias

- Limit alternative viewpoints

- Quietly manipulate decisions

Anthropomorphism

Humans instinctively trust systems that appear human-like, for example through:

- Robot faces

- Conversational tone

- Emotional cues

This design choice increases trust, but it also increases risk, because people may attribute human judgment to systems that lack moral agency.

Data Is the Real Power Behind AI

A central argument of the webinar was that data matters more than algorithms.

- Algorithms are replaceable

- Data defines behavior, bias, and outcomes

The concept of human swarming was introduced as a solution:

- Large, diverse groups collaborate to define baseline ethical data

- Continuous testing data prevents moral drift

- New roles emerge: AI-focused business analysts and ethically informed testers

Responsible AI requires continuous validation, not a one-time setup.
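One way to picture continuous validation is a recurring check of a system's judgments against a human-defined baseline. The sketch below is an assumption-laden illustration, not a method described in the webinar or required by ISO 42001: the scenario names, the reviewer votes, the majority-vote `consensus` rule, and the `DRIFT_THRESHOLD` value are all hypothetical.

```python
from collections import Counter


def consensus(votes: list[str]) -> str:
    """Return the most common judgment among reviewers for one scenario."""
    return Counter(votes).most_common(1)[0][0]


def agreement_rate(baseline: dict[str, str], answers: dict[str, str]) -> float:
    """Fraction of baseline scenarios where the system matches the consensus."""
    matches = sum(answers.get(scenario) == judgment
                  for scenario, judgment in baseline.items())
    return matches / len(baseline)


# Hypothetical votes from a diverse review group, one list per scenario.
reviewer_votes = {
    "share_user_data_without_consent": ["reject", "reject", "reject", "accept"],
    "explain_loan_denial": ["accept", "accept", "accept", "accept"],
    "rank_candidates_by_age": ["reject", "reject", "accept", "reject"],
    "flag_fraud_for_human_review": ["accept", "accept", "reject", "accept"],
}
baseline = {scenario: consensus(votes) for scenario, votes in reviewer_votes.items()}

# Hypothetical answers collected from the AI system in the latest test run.
model_answers = {
    "share_user_data_without_consent": "reject",
    "explain_loan_denial": "accept",
    "rank_candidates_by_age": "accept",  # disagrees with the baseline
    "flag_fraud_for_human_review": "accept",
}

DRIFT_THRESHOLD = 0.9  # assumed minimum acceptable agreement
rate = agreement_rate(baseline, model_answers)
drifted = rate < DRIFT_THRESHOLD
print(f"agreement={rate:.2f} drifted={drifted}")  # agreement=0.75 drifted=True
```

Running a check like this on a schedule, and refreshing the baseline as the review group changes, is what turns a one-time setup into ongoing validation. A real program would also need a rule for tied votes and a far larger scenario set.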

A Glimpse Into the Future: When AI Becomes the Moral Architect

The webinar concluded with a chilling yet thought-provoking AI-generated vision of a world where:

- AI optimizes morality itself

- Human disagreement disappears

- Ethics become invisible, automated, and unquestioned

The final question posed was profound:

Do we want AI to replicate who we are or decide who we should become?

How the Certified ISO 42001 Lead Auditor Certification Helps Professionals

As AI adoption grows, organizations increasingly need professionals who can audit, govern, and manage AI systems responsibly. The Certified ISO 42001:2023 Lead Auditor certification helps professionals build the practical skills required to assess AI Management Systems and support responsible AI implementation.

GSDC’s ISO 42001 certification is valuable for professionals seeking structured knowledge of AI governance, compliance, and auditing. It helps them understand how to evaluate AI systems for ethical use, transparency, risk management, and alignment with ISO 42001 requirements.


Conclusion: Governance Is Not Optional

The world is at a critical crossroads in AI development. Responsible AI is no longer a future concern; it has become an urgent priority. As AI systems continue to shape decisions, automate processes, and influence society at scale, understanding why Responsible AI is important has become essential for organizations, leaders, and technology professionals alike.

To fully address what Responsible AI is and why it is important, organizations must go beyond technical performance and focus equally on ethics, cultural sensitivity, governance frameworks, transparency, and psychological insight. These elements must evolve alongside innovation to ensure AI systems remain aligned with human values and societal expectations.

If ethics are not embedded into AI today, the risk is not just the creation of powerful and efficient systems, but systems that are deeply misaligned with humanity. This is exactly why Responsible AI is important in the modern era. The future of AI will not be defined by intelligence alone; it will be defined by wisdom, accountability, and responsibility.
