Designing an AI Governance Operating Model Aligned to ISO 42001

Written by Paul Crafer

- Understanding ISO/IEC 42001 as a Governance Baseline

- Why ISO 42001 Is Flexible by Design

- What AI Governance Really Means

- The Plan–Do–Check–Act Model in ISO 42001

- A Three-Floor Model for AI Governance

- Moving Beyond Compliance: Continuous Improvement

- The Role of Governance Frameworks in Operationalization

- Master AI Governance with the GSDC’s Certified ISO 42001 Lead Auditor Certification

- Final Thoughts

As organizations increasingly rely on artificial intelligence to drive business value, the need for strong, structured AI governance has never been more urgent. AI systems now influence hiring decisions, customer interactions, credit approvals, healthcare diagnostics, and operational efficiency. Without effective governance, these systems can introduce significant legal, ethical, and operational risks.

ISO/IEC 42001:2023 is the world’s first international management system standard specifically focused on artificial intelligence. While it is a landmark development, organizations need to understand what ISO 42001 does and does not provide and, above all, how to translate the standard into a practical, scalable AI governance operating model.

This article explores how to design such a model, how ISO 42001 fits into the broader regulatory landscape, and how organizations can operationalize AI governance rather than treating it as a documentation exercise.

Understanding ISO/IEC 42001 as a Governance Baseline

ISO 42001 is best understood as a foundational governance framework. It provides structure, accountability, and repeatability for managing AI systems across their lifecycle, from design and development to deployment, monitoring, and decommissioning.

However, ISO 42001 is voluntary, not a legal mandate. This distinguishes it from regulations such as the EU AI Act, which imposes legally binding requirements and penalties. While ISO 42001 supports risk management and responsible AI practices, certification alone does not automatically ensure compliance with regional AI laws, particularly for high-risk AI systems.

In practice, ISO 42001 acts as a baseline:

- It establishes common governance language and processes

- It enables consistency across teams and departments

- It supports risk-aware decision-making at scale

Organizations should view it as the ground floor upon which additional legal, ethical, and sector-specific requirements can be built.

Why ISO 42001 Is Flexible by Design

A common critique of ISO 42001 is that it does not prescribe exactly how organizations should govern AI. This is intentional.

As a management system standard, ISO 42001 focuses on structure over content. It defines what needs to exist (policies, processes, roles, and controls) but leaves how those elements are implemented to the organization's context. This flexibility allows the standard to be applied across industries, geographies, and AI use cases.

Clause 4.1, which requires organizations to understand their internal and external context, is central to this approach. It ensures AI governance is tailored rather than one-size-fits-all, making the standard adaptable for both multinational enterprises and smaller organizations.

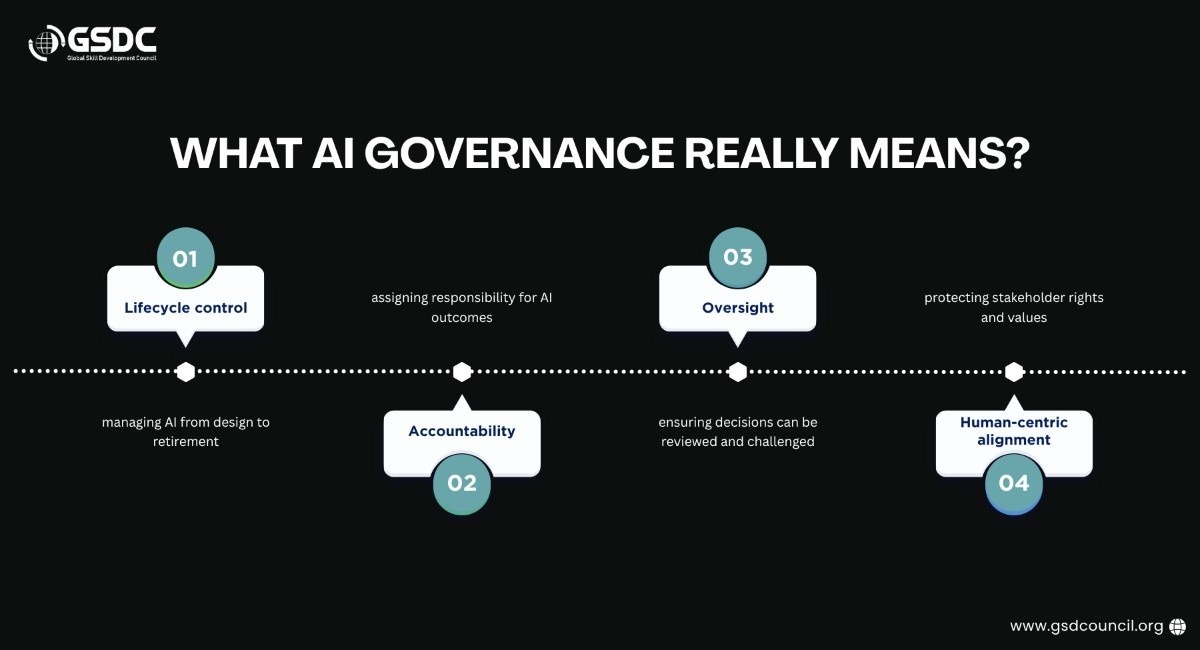

What AI Governance Really Means

Before governance can be operationalized, organizations must agree on what AI governance actually is.

Effective AI governance is not limited to policies or ethics statements. It must be actively enforced, embedded into operations, and driven from leadership. Without executive commitment, governance efforts risk becoming disconnected from real-world AI deployment.

The Plan–Do–Check–Act Model in ISO 42001

ISO 42001 follows the familiar Plan–Do–Check–Act (PDCA) cycle used across ISO management systems. This approach enables continuous improvement rather than static compliance.

- Plan (Clauses 4–7): Define context, policies, roles, and objectives

- Do (Clause 8): Implement AI governance controls

- Check (Clause 9): Monitor, measure, and audit performance

- Act (Clause 10): Improve effectiveness over time

Rather than a rigid linear process, PDCA allows organizations to mature governance capabilities incrementally, addressing different components in parallel where appropriate.

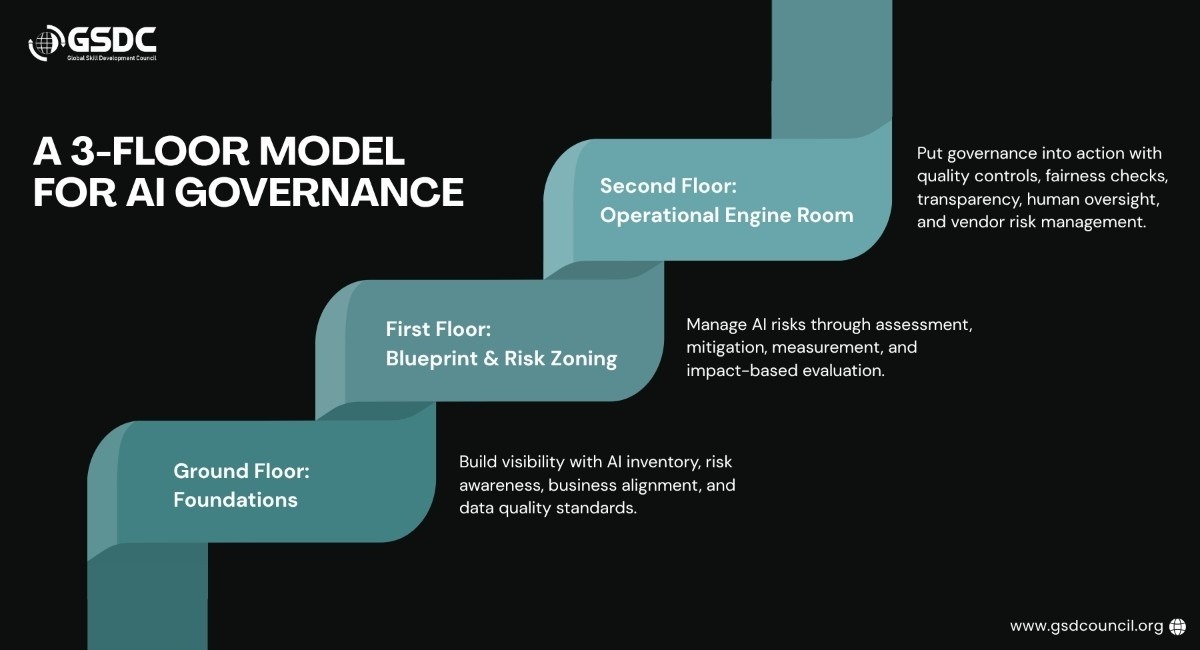

A Three-Floor Model for AI Governance

To make ISO 42001 more tangible, AI governance can be visualized as a three-floor operating model.

Ground Floor: Foundations

This level establishes visibility and alignment. Key activities include:

- Creating a comprehensive AI systems inventory

- Identifying shadow AI and vendor dependencies

- Conducting regulatory gap analyses

- Aligning AI strategy with business objectives

- Defining data quality requirements

Without knowing which AI systems exist and how they are used, governance cannot succeed. The AI systems list is the starting point for accountability and oversight.
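To make the inventory idea concrete, here is a minimal sketch of what one inventory record and a basic gap check might look like. ISO 42001 does not prescribe any of this: the `AISystemRecord` fields, the `governance_gaps` function, and the sample systems are all hypothetical and would need to be adapted to the organization's own context.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystemRecord:
    """One row of a hypothetical AI systems inventory (fields are assumptions)."""
    name: str
    purpose: str
    owner: Optional[str]   # accountable person or team; None if unassigned
    vendor: Optional[str]  # None for systems built in-house
    approved: bool         # False suggests shadow AI found during discovery


def governance_gaps(inventory: list[AISystemRecord]) -> dict[str, list[str]]:
    """Group system names by the kind of follow-up they need."""
    gaps: dict[str, list[str]] = {
        "no_owner": [],
        "shadow_ai": [],
        "vendor_dependency": [],
    }
    for record in inventory:
        if record.owner is None:
            gaps["no_owner"].append(record.name)
        if not record.approved:
            gaps["shadow_ai"].append(record.name)
        if record.vendor is not None:
            gaps["vendor_dependency"].append(record.name)
    return gaps


# Illustrative data only; the system and vendor names are made up.
inventory = [
    AISystemRecord("cv-screening", "Rank job applicants", "HR Ops", "ExampleVendor", True),
    AISystemRecord("chat-summarizer", "Summarize support tickets", None, None, False),
]
gaps = governance_gaps(inventory)
```

Even a simple structure like this makes ownership gaps and unapproved tools visible, which is the precondition for every later governance activity.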

First Floor: Blueprint and Risk Zoning

Once foundations are in place, governance moves into structured risk management.

ISO 42001 requires organizations to:

- Identify and analyze AI risks

- Define treatment and mitigation strategies

- Monitor effectiveness using measurable indicators

A major challenge at this stage is shifting from measuring activity (e.g., number of assessments completed) to measuring outcomes (e.g., reduced harm, improved fairness, stable residual risk).

Supplementary standards such as ISO/IEC 23894 (AI risk management) and ISO/IEC 42005 (AI impact assessments) can help close this measurement gap by focusing on real-world stakeholder impact.
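One way to illustrate an outcome-oriented indicator is to track residual risk against a tolerance instead of counting completed assessments. The sketch below assumes a common likelihood-times-impact scoring convention and a simple "fraction of risk removed" estimate for each treatment. Neither is mandated by ISO 42001 or ISO/IEC 23894, and the `RiskEntry` class, the scales, and the tolerance value are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RiskEntry:
    """Hypothetical risk register entry; scales and fields are assumptions."""
    risk: str
    likelihood: int        # 1 (rare) to 5 (almost certain)
    impact: int            # 1 (minor) to 5 (severe)
    control_effect: float  # estimated fraction of risk removed by treatment, 0.0 to 1.0

    def inherent(self) -> int:
        """Risk score before any treatment is applied."""
        return self.likelihood * self.impact

    def residual(self) -> float:
        """Risk score remaining after treatment."""
        return self.inherent() * (1.0 - self.control_effect)


def above_tolerance(register: list[RiskEntry], tolerance: float) -> list[str]:
    """Outcome indicator: risks whose residual score still exceeds tolerance."""
    return [entry.risk for entry in register if entry.residual() > tolerance]


# Illustrative entries only.
register = [
    RiskEntry("biased candidate ranking", likelihood=4, impact=5, control_effect=0.5),
    RiskEntry("model drift", likelihood=3, impact=3, control_effect=0.0),
]
open_risks = above_tolerance(register, tolerance=9)
```

An activity metric would report that two risks were assessed. The outcome view reports that one of them is still above tolerance after treatment, which is the more useful signal for leadership.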

Second Floor: Operational Engine Room

This is where governance becomes fully operational.

At this level, organizations focus on:

- Data quality enforcement

- Bias and fairness performance thresholds

- Transparency and explainability for users

- Human-in-the-loop controls

- Supplier and third-party AI risk management
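As an illustration of a fairness performance threshold tied to a human-in-the-loop control, the sketch below computes the gap in selection rates between groups (one simple fairness measure among many) and flags the system for human review when the gap exceeds a limit. The `MAX_PARITY_GAP` value, the group labels, and the decisions are hypothetical. ISO 42001 does not specify a metric or a threshold.

```python
def selection_rate(decisions: list[int]) -> float:
    """Share of positive decisions (1 = selected, 0 = not selected)."""
    return sum(decisions) / len(decisions)


def parity_gap(decisions_by_group: dict[str, list[int]]) -> float:
    """Difference between the highest and lowest group selection rates."""
    rates = [selection_rate(d) for d in decisions_by_group.values()]
    return max(rates) - min(rates)


# Hypothetical threshold; a real one would come from the organization's
# own risk appetite and applicable legal requirements.
MAX_PARITY_GAP = 0.2

# Illustrative decisions only.
decisions = {
    "group_a": [1, 1, 0, 1],
    "group_b": [1, 0, 0, 0],
}
gap = parity_gap(decisions)
needs_human_review = gap > MAX_PARITY_GAP
```

The point of the design is that the threshold is explicit and checked automatically, while the response to a breach is routed to a person instead of being decided by the system itself.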

Third-party AI presents one of the highest governance risks. Many organizations rely on vendor-provided AI models but fail to assess lifecycle governance, model updates, or training data risks. ISO 42001 makes it clear that responsibility does not stop at contractual boundaries. If an organization relies on AI output, it inherits accountability for that output's impact.

Material changes, such as vendor model upgrades or shifts from rule-based to probabilistic AI, must trigger reassessment and change management processes.
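A reassessment trigger of this kind can be sketched as a comparison between two snapshots of a system. What counts as "material" is an organizational policy decision. The rules below (major version bump, change of approach, change of training data) and the `SystemSnapshot` fields are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemSnapshot:
    """Hypothetical record of a vendor AI system at a point in time."""
    model_version: str      # assumed "major.minor" format
    approach: str           # e.g. "rule-based" or "probabilistic"
    training_data_ref: str  # identifier for the training data the vendor reports


def material_changes(before: SystemSnapshot, after: SystemSnapshot) -> list[str]:
    """Return the reasons, if any, that the change should trigger reassessment."""
    reasons = []
    # Compare only the major component of the version string.
    if before.model_version.split(".")[0] != after.model_version.split(".")[0]:
        reasons.append("major model version changed")
    if before.approach != after.approach:
        reasons.append("approach changed")
    if before.training_data_ref != after.training_data_ref:
        reasons.append("training data changed")
    return reasons


# Illustrative snapshots only.
before = SystemSnapshot("2.3", "rule-based", "dataset-2023")
after = SystemSnapshot("3.0", "probabilistic", "dataset-2023")
reasons = material_changes(before, after)
reassessment_required = bool(reasons)
```

Recording the reasons, not just a yes or no flag, gives the change management process something to act on and leaves an audit trail.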

Moving Beyond Compliance: Continuous Improvement

While ISO 42001 emphasizes continual improvement, advanced optimization often goes beyond the standard’s scope. At higher maturity levels, organizations begin focusing on:

- Advanced AI literacy and training

- Automation bias awareness

- Ethical decision-making frameworks

- Organization-wide cultural alignment

At this stage, AI governance becomes a competitive advantage rather than a compliance obligation.

The Role of Governance Frameworks in Operationalization

One of the biggest challenges with AI governance is fragmentation. Security, privacy, legal, compliance, and AI teams often work in silos, even when addressing the same standard.

Unified governance frameworks help organizations:

- Cross-map multiple standards and regulations

- Reduce duplicated effort

- Improve audit readiness

- Translate governance theory into daily operations

Such frameworks do not replace ISO 42001 but instead operationalize it, turning governance requirements into actionable controls that scale across teams and systems.
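Cross-mapping can be pictured as a single control catalogue in which each control lists the frameworks it supports. The sketch below is only a toy: the control names and the framework assignments in `CONTROL_MAP` are illustrative placeholders, not an authoritative crosswalk between ISO/IEC 42001, ISO/IEC 27001, and the EU AI Act.

```python
# Hypothetical catalogue: control name -> frameworks it is assumed to support.
CONTROL_MAP: dict[str, set[str]] = {
    "ai-system-inventory": {"ISO/IEC 42001", "EU AI Act"},
    "supplier-risk-review": {"ISO/IEC 42001", "ISO/IEC 27001"},
    "access-logging": {"ISO/IEC 27001"},
}


def controls_by_framework(control_map: dict[str, set[str]]) -> dict[str, list[str]]:
    """Invert the catalogue so each framework lists its supporting controls."""
    result: dict[str, list[str]] = {}
    for control, frameworks in control_map.items():
        for framework in frameworks:
            result.setdefault(framework, []).append(control)
    return {framework: sorted(controls) for framework, controls in result.items()}


def shared_controls(control_map: dict[str, set[str]]) -> list[str]:
    """Controls serving more than one framework, where evidence can be reused."""
    return sorted(c for c, frameworks in control_map.items() if len(frameworks) > 1)


by_framework = controls_by_framework(CONTROL_MAP)
reusable = shared_controls(CONTROL_MAP)
```

Controls that appear under several frameworks are where duplicated effort can be removed: one implementation and one set of evidence can serve multiple audits.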

Master AI Governance with the GSDC’s Certified ISO 42001 Lead Auditor Certification

GSDC's Certified ISO 42001 Lead Auditor program is designed to equip professionals with the expertise to audit, implement, and enhance AI Management Systems (AIMS) in alignment with global standards. It focuses on building strong AI governance capabilities so that organizations adopt AI responsibly, ethically, and in compliance with the ISO/IEC 42001:2023 framework.

Key Objectives of the Program:

- Understand the structure and requirements of ISO/IEC 42001:2023 for AI Management Systems

- Develop skills to audit AI systems for compliance, ethics, and transparency

- Identify and manage AI-related risks, controls, and governance frameworks

- Learn to plan, conduct, and close AI audits following global standards (ISO 19011, ISO/IEC 17021-1)

- Ensure organizations meet certification requirements and improve AI system performance

- Build capabilities to recommend corrective actions and foster continuous improvement

Final Thoughts

ISO/IEC 42001 provides a strong foundation for AI governance, but its true value lies in how it is implemented. Organizations that treat it as a checkbox exercise miss its potential. Those that align it with risk management, operational controls, and continuous improvement can create AI systems that are not only compliant but also trustworthy, resilient, and ethically aligned.

Effective AI governance is not about slowing innovation; it is about ensuring innovation is safe, accountable, and sustainable.
