AI Agent for Automated Test Case Generation

Written by Mansi Kapoor

- The Testing Challenge in Modern Software Engineering

- What is an AI Agent for Automated Test Case Generation?

- Architecture of an AI Test Case Generation System

- How AI Generates Test Cases

- Key Capabilities of AI Test Case Generation Agents

- Productivity and ROI Benefits

- The Future of AI in Software Testing

- Advance Your Career with GSDC’s Agentic AI Certification

- Conclusion

Software development has evolved rapidly over the past decade, with faster release cycles, complex architectures, and increasing customer expectations. However, one area that often slows down development is software testing. Traditional testing processes rely heavily on manual effort, domain expertise, and repetitive work, making them time-consuming and error-prone.

With the rise of Artificial Intelligence (AI) and intelligent automation, organizations are now exploring AI agents for automated test case generation. These AI-driven systems analyze code, requirements, and development workflows to generate test cases automatically, improving efficiency and software reliability. Many modern solutions use OpenAI-based agents to make testing smarter and streamline workflows.

This blog explores how AI agents transform the software testing process: their architecture, key capabilities, and benefits, and how organizations can implement them effectively. It also highlights how businesses are using AI for automation to modernize software testing and improve delivery speed across the development lifecycle.

The Testing Challenge in Modern Software Engineering

Software testing is essential to ensure that applications function correctly and deliver reliable user experiences. However, modern development teams face several testing challenges.

One major issue is that many bugs are discovered only after deployment. By some estimates, nearly 80% of production bugs could have been detected earlier during testing. When defects reach production, they not only affect customers but also cost more to fix, which is leading many teams to adopt smarter testing strategies.

Another challenge is the significant time developers spend debugging and testing. In traditional workflows, developers may spend up to 60% of their time on debugging and validation instead of building new features. This slows down innovation and productivity, which is why organizations are increasingly using AI for automation in development environments.

Manual testing also slows release cycles. Even if a feature is developed quickly, it cannot be deployed until its tests are written, executed, and validated. To address this, many companies are adopting OpenAI-based agents and other automated agents to accelerate testing and improve software delivery speed.

Common pain points in manual testing include:

- Repetitive and slow test case creation

- Human errors in writing test scenarios

- Limited test coverage

- QA bottlenecks that delay release timelines

- Dependence on a small group of QA experts

These challenges have led organizations to explore AI-powered testing solutions.

What is an AI Agent for Automated Test Case Generation?

An AI test case generation agent is an intelligent system that automatically creates test cases by analyzing software code, requirements, and user stories. Many modern AI software testing tools now use this approach to improve testing speed and accuracy.

Instead of relying entirely on manual QA work, the AI agent performs several tasks:

- Analyzes code changes from repositories such as GitHub.

- Understands the context from user stories or issue trackers like Jira.

- Generates test cases, including unit, integration, and end-to-end tests, using automated test case generation tools.

- Validates test quality and ensures coverage.

- Commits generated tests directly to the code repository.

This allows testing to become an automated and integrated part of the development workflow, often powered by a generative AI agent designed to support development teams efficiently.

For example, when a developer submits a pull request, the AI agent can automatically analyze the code changes and generate appropriate test cases before the update is merged. This makes it a useful addition to the growing list of generative AI tools used in modern software engineering.

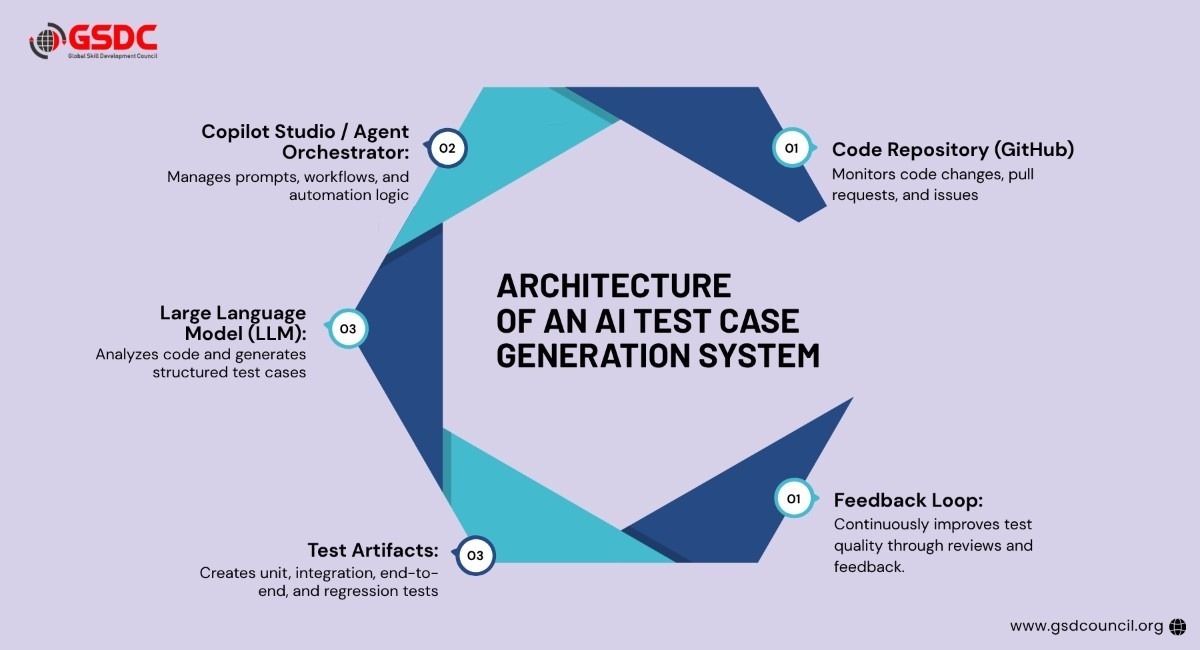

Architecture of an AI Test Case Generation System

A typical AI-based test automation architecture integrates several tools and technologies.

1. Code Repository (GitHub)

The codebase, pull requests, and issues are stored in GitHub repositories. The AI agent monitors changes and triggers testing processes when updates occur.

2. Copilot Studio or Agent Orchestrator

This layer manages the agentic logic and prompts used to generate test cases. It orchestrates workflows and coordinates the entire automation pipeline.

3. Large Language Model (LLM)

LLMs such as GPT-4 analyze the code context and generate structured test plans and scripts.

4. Test Artifacts

The output includes various types of tests:

- Unit tests

- Integration tests

- End-to-end tests

- Regression tests

These artifacts are automatically committed back into the repository for review.

5. Feedback Loop

Generated tests are reviewed and improved continuously through automated workflows and developer feedback.

This architecture ensures seamless integration between development, testing, and deployment processes.

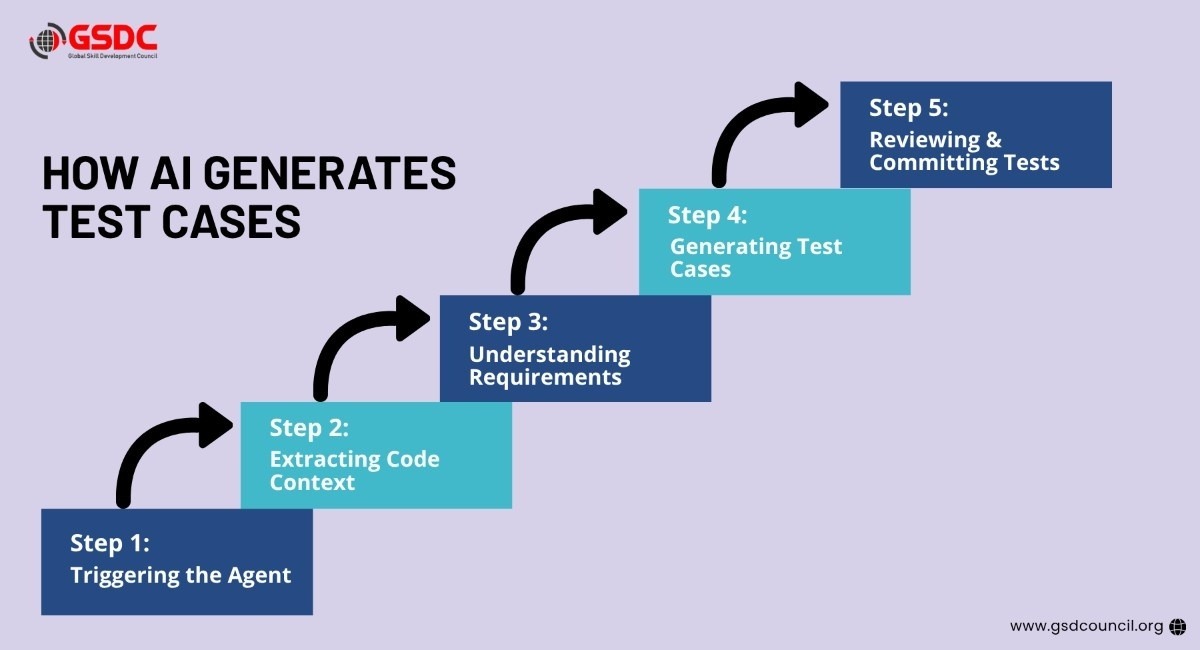

How AI Generates Test Cases

The process typically begins when a developer creates a pull request in a repository.

Step 1: Triggering the Agent

A GitHub webhook detects the pull request event and triggers the AI agent.
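A minimal sketch of this trigger step follows, assuming the webhook is configured with a shared secret. GitHub signs each delivery with an HMAC-SHA256 digest sent in the `X-Hub-Signature-256` header and names the event in `X-GitHub-Event`; the function names and the choice of which pull request actions should start the agent are assumptions made for illustration.

```python
import hashlib
import hmac
import json


def verify_signature(secret: bytes, body: bytes, signature_header: str) -> bool:
    """Check the X-Hub-Signature-256 header against the raw request body."""
    expected = "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, signature_header)


def should_trigger(event_name: str, body: bytes) -> bool:
    """Decide whether a delivery should start the test generation agent."""
    if event_name != "pull_request":  # value of the X-GitHub-Event header
        return False
    payload = json.loads(body)
    # Assumption: only new or updated pull requests are worth analyzing.
    return payload.get("action") in {"opened", "synchronize", "reopened"}
```

Verifying the signature before parsing the payload keeps unauthenticated requests from reaching the agent at all.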

Step 2: Extracting Code Context

The agent analyzes the changed files, functions, and docstrings to understand what the code modifications do.
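For Python sources, one way to sketch this extraction is with the standard library's `ast` module. The function name `extract_context` and the shape of the returned dictionaries are assumptions; a real agent would likely combine this with the pull request diff to limit itself to changed functions.

```python
import ast
from typing import Dict, List


def extract_context(source: str) -> List[Dict]:
    """Collect name, positional arguments, and docstring for each function."""
    tree = ast.parse(source)
    context = []
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            context.append({
                "name": node.name,
                "args": [a.arg for a in node.args.args],
                "docstring": ast.get_docstring(node),  # None when absent
                "line": node.lineno,
            })
    # ast.walk does not guarantee source order, so sort by line number.
    return sorted(context, key=lambda fn: fn["line"])
```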

Step 3: Understanding Requirements

The AI reads related Jira tickets or user stories, including acceptance criteria, to understand the expected functionality.
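The sketch below shows one simple way to pull acceptance criteria out of a ticket description. It assumes the description is already available as plain text and that criteria appear as a bulleted list under an "Acceptance Criteria" heading; both the format and the function name are assumptions, and fetching the ticket from Jira is left out.

```python
from typing import List


def extract_acceptance_criteria(description: str) -> List[str]:
    """Return the bullet items listed under an 'Acceptance Criteria' heading."""
    criteria = []
    in_section = False
    for raw in description.splitlines():
        line = raw.strip()
        if line.lower().rstrip(":").strip() == "acceptance criteria":
            in_section = True
            continue
        if in_section:
            if line.startswith(("-", "*")):
                criteria.append(line.lstrip("-* ").strip())
            elif line:
                break  # a non-bullet, non-blank line ends the section
    return criteria
```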

Step 4: Generating Test Cases

Using the extracted context, the AI generates structured test cases in formats compatible with testing frameworks such as:

- PyTest

- JUnit

- Cypress

- Selenium

Step 5: Reviewing and Committing Tests

The generated test cases are added to the repository through an automated workflow and reviewed before merging.

Key Capabilities of AI Test Case Generation Agents

AI-powered testing agents offer several advanced capabilities that significantly improve the testing process.

1. Multi-Language Support

AI agents can generate tests for applications written in multiple programming languages, including:

- Python

- Java

- TypeScript

- Go

This makes them suitable for diverse technology stacks.

2. Multiple Test Types

The AI agent can generate various testing scenarios, such as:

- Unit testing

- Integration testing

- End-to-end testing

- Regression testing

It can also identify edge cases and unusual input conditions that developers might overlook.
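Boundary value analysis is one classic source of such edge cases. As a small sketch, the hypothetical helper below lists the values just inside and just outside an integer range, which a generated test could then feed to the function under test.

```python
from typing import List


def boundary_values(minimum: int, maximum: int) -> List[int]:
    """Values at, just inside, and just outside an inclusive integer range."""
    if minimum > maximum:
        raise ValueError("minimum must not exceed maximum")
    candidates = [
        minimum - 1, minimum, minimum + 1,
        maximum - 1, maximum, maximum + 1,
    ]
    # dict.fromkeys removes duplicates while preserving order.
    return list(dict.fromkeys(candidates))
```

For a field that accepts 1 to 100, this yields 0, 1, 2, 99, 100, and 101: two values that should be rejected and four that should be accepted.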

3. Security and Input Validation

AI-generated tests can include checks for:

- Input validation errors

- Security vulnerabilities

- Invalid data handling

These tests help improve application reliability and safety.
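The following sketch shows what such generated checks could look like for a simple username validator. The validator, its rules, and the list of hostile inputs are all made up for illustration; the test functions use plain assertions so they run with or without PyTest.

```python
import re

# Hypothetical rule: 3-20 letters, digits, or underscores.
USERNAME_PATTERN = re.compile(r"[A-Za-z0-9_]{3,20}")

INVALID_INPUTS = [
    "",                               # empty
    "ab",                             # too short
    "a" * 21,                         # too long
    "robert'); DROP TABLE users;--",  # SQL injection attempt
    "<script>alert(1)</script>",      # script injection attempt
    "name with spaces",
]


def validate_username(value):
    """Hypothetical function under test."""
    if not isinstance(value, str):
        raise TypeError("username must be a string")
    if not USERNAME_PATTERN.fullmatch(value):
        raise ValueError("username must be 3-20 letters, digits, or underscores")
    return value


def test_rejects_invalid_usernames():
    for bad in INVALID_INPUTS:
        try:
            validate_username(bad)
        except ValueError:
            continue
        raise AssertionError(f"accepted invalid input: {bad!r}")


def test_rejects_non_string_input():
    for bad in (None, 123, b"bytes"):
        try:
            validate_username(bad)
        except TypeError:
            continue
        raise AssertionError(f"accepted non-string input: {bad!r}")


def test_accepts_valid_username():
    assert validate_username("qa_engineer_1") == "qa_engineer_1"
```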

4. Real-Time Test Coverage Monitoring

Modern AI testing systems provide dashboards that display:

- Code coverage percentage

- Test coverage gaps

- Test health trends

This allows teams to quickly identify areas that need improvement.
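The numbers behind such a dashboard can be derived from two sets per file: the lines that could run and the lines that did run during the tests. The sketch below assumes that data has already been collected by a coverage tool; the function name and the input format are assumptions.

```python
from typing import Dict, Set


def coverage_report(
    executable_lines: Dict[str, Set[int]],
    executed_lines: Dict[str, Set[int]],
) -> Dict[str, Dict]:
    """Per-file line coverage percentage and the lines no test reached."""
    report = {}
    for path, lines in executable_lines.items():
        hit = lines & executed_lines.get(path, set())
        # A file with no executable lines is treated as fully covered.
        percent = 100.0 * len(hit) / len(lines) if lines else 100.0
        report[path] = {
            "percent": round(percent, 1),
            "missing": sorted(lines - hit),  # coverage gaps
        }
    return report
```

Storing each report with a timestamp would give the trend view; the `missing` list is what points reviewers at specific gaps.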

Productivity and ROI Benefits

Implementing AI agents for test case generation can significantly improve productivity and cost efficiency.

1. Reduced Manual Testing Effort

Organizations can reduce manual test writing time by up to 70%, allowing developers to focus on building new features.

2. Faster Release Cycles

Automated testing ensures that code changes are validated quickly, enabling faster deployment and continuous delivery.

3. Improved Test Coverage

AI-generated test suites can reach 90% or higher code coverage, reducing the risk of undetected bugs.

4. Cost Savings

Automating repetitive testing tasks reduces the need for extensive manual QA efforts, saving operational costs.

5. Developer Empowerment

Developers without deep QA expertise can still generate production-quality test suites using AI tools.

The Future of AI in Software Testing

AI-powered test automation is rapidly evolving. In the future, AI agents may:

- Predict potential bugs before development begins

- Automatically fix failing tests

- Perform advanced performance and security testing

- Continuously learn from historical defects

These capabilities will further reduce development friction and improve software quality.

Advance Your Career with GSDC’s Agentic AI Certification

Gain future-ready expertise in intelligent automation with GSDC’s Agentic AI Foundation Certification, designed for professionals looking to master the next generation of AI-powered systems.

The Agentic AI Foundation certification provides in-depth knowledge of agentic AI concepts, autonomous decision-making, workflow automation, multi-agent systems, and real-world enterprise applications. Professionals learn how AI agents operate, collaborate, and solve complex business challenges across industries.

Whether you are a developer, business leader, analyst, or technology enthusiast, the program helps you build practical skills to implement agentic AI strategies effectively. Strengthen your professional profile, validate your expertise, and stay ahead in the rapidly evolving world of artificial intelligence innovation.

Conclusion

AI agents for automated test case generation are revolutionizing the way software testing is performed. By combining code analysis, natural language understanding, and intelligent automation, these systems help development teams detect issues earlier, improve test coverage, and accelerate release cycles.

As organizations continue to adopt AI-driven development workflows, automated testing agents will play a critical role in ensuring high-quality, reliable, and scalable software systems.
