Designing Guardrails That Keep Agentic AI Safe, Predictable, & Aligned

Written by Emily Hilton

- The Shift from Automation to Agentic AI

- What Are AI Guardrails?

- A Practical Example: AI in Customer Support

- The Three Layers of AI Guardrails

- The ALIGN Framework for Implementing Guardrails

- Human Oversight Still Matters

- Start Simple, Then Scale

- Why Guardrails Are Essential for the Future of AI-Driven Enterprises

- GSDC’s Agentic AI Certification: Empowering the Autonomous AI Era

- Conclusion

- FAQs

As organizations move from simple automation to agentic AI systems that can act, decide, and execute tasks autonomously, a new question has emerged:

How do we ensure AI remains safe, reliable, and aligned with business intent while operating with increasing independence?

This was the central theme of the webinar, “Designing Guardrails That Keep Agentic AI Safe, Predictable, and Aligned.” The session emphasized that adopting AI is no longer just a technical decision; it is also a governance, risk, and organizational design challenge. As organizations deploy multi-agent systems to automate complex workflows, structured oversight becomes critical.

To unlock AI’s full potential while maintaining control, companies must establish a robust AI governance framework supported by a clear AI governance maturity model that guides how AI systems are designed, monitored, and continuously improved without stifling innovation.

The Shift from Automation to Agentic AI

Traditional automation follows predefined rules. Agentic AI, however, can:

- Interpret context

- Make recommendations or decisions

- Execute actions dynamically

- Learn and adapt over time

This evolution allows businesses to deploy AI in areas such as customer service, operations, HR workflows, analytics, and product support. But with this autonomy comes risk. Without control mechanisms, AI may:

- Provide incorrect or inappropriate responses

- Make unauthorized decisions (e.g., issuing refunds)

- Expose sensitive data

- Act outside business policy

- Reduce trust among employees and customers

If AI is the vehicle, guardrails are the road infrastructure that keeps it moving safely.

What Are AI Guardrails?

AI guardrails are the safety mechanisms, rules, validation layers, and oversight processes that keep AI systems operating within defined boundaries. As organizations deploy agentic AI and autonomous agents capable of making independent decisions, guardrails become essential for maintaining control, accountability, and reliability.

They serve three key purposes:

1. Keep AI on Track

Guardrails provide direction, ensuring Agentic AI and autonomous AI agents stay aligned with organizational objectives rather than drifting into unintended or harmful behavior.

2. Prevent Operational Risks

They detect anomalies early, preventing actions taken by autonomous AI agents from leading to reputational, financial, or compliance damage.

3. Build Trust

When employees and customers understand that agentic AI operates within controlled limits and clear governance structures, adoption becomes smoother and resistance decreases.

A Practical Example: AI in Customer Support

Consider an organization deploying AI to manage support tickets. The system can:

- Read incoming queries

- Draft responses

- Resolve common issues

- Issue refunds within limits

- Escalate complex cases

Without guardrails, the AI might:

- Respond insensitively to complaints

- Promise services the company cannot deliver

- Issue refunds incorrectly

- Process fraudulent requests

Guardrails ensure the AI performs efficiently without compromising judgment, policy, or accountability.
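As a rough illustration of "issue refunds within limits," the check below is a minimal sketch, not something presented in the webinar. The `RefundRequest` type, the `decide_refund` function, and the 50.00 limit are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass

# Hypothetical policy value for illustration; a real limit comes from business policy.
MAX_AUTO_REFUND = 50.00


@dataclass
class RefundRequest:
    ticket_id: str
    amount: float
    order_verified: bool  # True if the request was matched to a real purchase


def decide_refund(request: RefundRequest) -> str:
    """Return 'approve', 'escalate', or 'reject' for a refund request."""
    if request.amount <= 0:
        # A non-positive amount is malformed, so it is rejected outright.
        return "reject"
    if not request.order_verified:
        # Unverified orders may be fraudulent, so a person should look at them.
        return "escalate"
    if request.amount > MAX_AUTO_REFUND:
        # Above the limit, the AI may recommend but not act.
        return "escalate"
    return "approve"


if __name__ == "__main__":
    print(decide_refund(RefundRequest("T-1", 20.0, order_verified=True)))
    print(decide_refund(RefundRequest("T-2", 500.0, order_verified=True)))
```

The point of the sketch is that the AI never decides its own limit: the boundary lives in plain, reviewable code, and anything outside it goes to a person.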

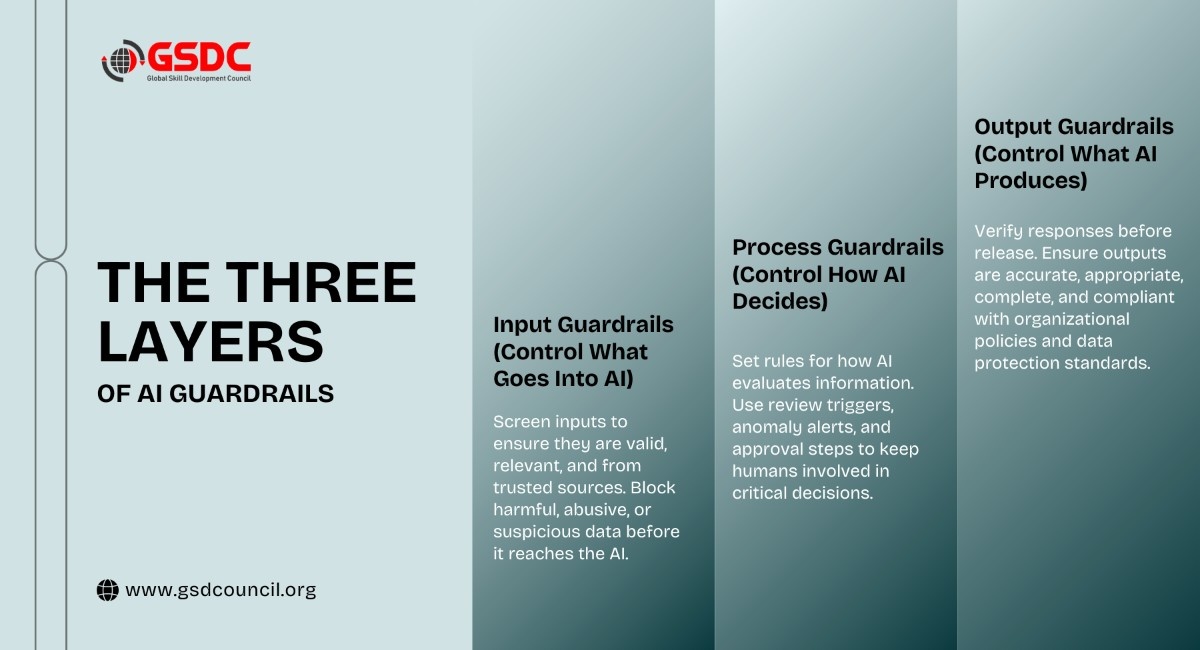

The Three Layers of AI Guardrails

The session highlighted that effective governance requires multi-layered guardrails, not a single checkpoint.

1. Input Guardrails (Control What Goes Into AI)

Input guardrails validate incoming data before the AI processes it.

Key validation questions include:

- Is the data valid?

- Is the request appropriate?

- Is it within the AI’s scope?

- Is the source trustworthy?

For example, AI should not respond to abusive messages in the same way as legitimate customer queries. Similarly, it should ignore unverified or fraudulent inputs.
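The four validation questions above can be sketched as a simple pre-check that runs before a message reaches the AI. This is an illustrative sketch only: the topic list, the blocked terms, and the `InboundMessage` fields are assumptions made for the example, and a production system would likely use a proper abuse classifier rather than a word list.

```python
import re
from dataclasses import dataclass

# Hypothetical values for illustration only.
IN_SCOPE_TOPICS = {"billing", "shipping", "returns"}
BLOCKED_TERMS = {"idiot", "stupid"}  # placeholder for a real abuse filter


@dataclass
class InboundMessage:
    text: str
    topic: str
    sender_verified: bool


def check_input(message: InboundMessage) -> list:
    """Return the list of failed checks; an empty list means the input passes."""
    failures = []
    if not message.text.strip():
        failures.append("invalid: empty message")
    words = set(re.findall(r"[a-z']+", message.text.lower()))
    if words & BLOCKED_TERMS:
        failures.append("inappropriate: abusive language")
    if message.topic not in IN_SCOPE_TOPICS:
        failures.append("out of scope")
    if not message.sender_verified:
        failures.append("untrusted source")
    return failures


if __name__ == "__main__":
    print(check_input(InboundMessage("Where is my package?", "shipping", True)))
    print(check_input(InboundMessage("You idiot!", "legal", False)))
```

Returning every failed check, rather than stopping at the first, makes it easier to route the message: an abusive message from a verified customer may deserve a different path than an anonymous one.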

2. Process Guardrails (Control How AI Decides)

These govern how the AI interprets information and when it must pause or escalate decisions.

Examples include:

- Confidence thresholds that trigger human review

- Exception alerts for unusual patterns

- Approval workflows for high-impact actions

This ensures AI supports decision-making rather than replacing accountability.
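A process guardrail of this kind can be pictured as a small routing function that sits between the AI's proposed action and its execution. The sketch below is an assumption-laden example: the 0.80 threshold, the action names, and the `is_unusual` flag (standing in for whatever anomaly detection is in place) are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical settings for illustration only.
CONFIDENCE_THRESHOLD = 0.80
HIGH_IMPACT_ACTIONS = {"close_account", "change_billing_plan"}


@dataclass
class ProposedAction:
    name: str
    confidence: float          # model's self-reported confidence, 0.0 to 1.0
    is_unusual: bool = False   # set by some upstream anomaly check (assumed)


def route(action: ProposedAction) -> str:
    """Decide whether an action runs automatically or goes to a person."""
    if action.is_unusual:
        return "exception_alert"
    if action.name in HIGH_IMPACT_ACTIONS:
        # High-impact actions need approval regardless of confidence.
        return "needs_approval"
    if action.confidence < CONFIDENCE_THRESHOLD:
        return "human_review"
    return "auto_execute"


if __name__ == "__main__":
    print(route(ProposedAction("draft_reply", 0.95)))
    print(route(ProposedAction("close_account", 0.99)))
```

The ordering is a design choice: unusual patterns and high-impact actions are checked before confidence, so a very confident model still cannot bypass approval.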

3. Output Guardrails (Control What AI Produces)

Every AI-generated output must be validated before reaching customers or stakeholders.

Organizations should check for:

- Accuracy – Is the information correct?

- Tone – Is it professional and appropriate?

- Completeness – Does it truly answer the request?

- Compliance – Does it follow company policies?
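The four output checks can be sketched as one validation step that runs before a draft is sent. Everything named here is hypothetical: the `DraftReply` fields, the fact table, and the phrase lists are stand-ins, and real accuracy or tone checks would need far more than string matching.

```python
from dataclasses import dataclass, field

# Hypothetical reference data for illustration only.
KNOWN_FACTS = {"refund_window_days": 30}
UNPROFESSIONAL_PHRASES = ("calm down", "as i already said")
FORBIDDEN_PROMISES = ("guarantee", "lifetime warranty")


@dataclass
class DraftReply:
    text: str
    claims: dict = field(default_factory=dict)  # facts the draft asserts
    questions_asked: int = 1
    questions_answered: int = 1


def validate_output(draft: DraftReply) -> dict:
    """Return a pass/fail result for each of the four output checks."""
    text = draft.text.lower()
    return {
        # A claim that cannot be matched to a known fact counts as a failure.
        "accuracy": all(KNOWN_FACTS.get(k) == v for k, v in draft.claims.items()),
        "tone": not any(p in text for p in UNPROFESSIONAL_PHRASES),
        "completeness": bool(text.strip())
        and draft.questions_answered >= draft.questions_asked,
        "compliance": not any(p in text for p in FORBIDDEN_PROMISES),
    }


def can_send(draft: DraftReply) -> bool:
    """A draft is released only if every check passes."""
    return all(validate_output(draft).values())


if __name__ == "__main__":
    draft = DraftReply("We guarantee a refund within 60 days.",
                       claims={"refund_window_days": 60})
    print(validate_output(draft))
```

Keeping the per-check results, instead of a single yes or no, gives reviewers a reason for each blocked reply and gives the team data on which check fails most often.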

The ALIGN Framework for Implementing Guardrails

To make guardrails actionable, the webinar introduced a practical model: ALIGN.

A – Articulate Goals

Clearly define what the AI is meant to achieve and what success looks like.

L – Limit Capabilities

Set boundaries on what AI can and cannot do.

I – Inspect Regularly

Continuously monitor decisions and outputs rather than deploying AI and forgetting it.

G – Guide Through Feedback

Use errors as training signals to improve system performance.

N – Notify Humans When Needed

Ensure escalation paths exist for sensitive or high-risk scenarios.

This framework ensures AI remains aligned with business strategy rather than becoming an uncontrolled automation layer.
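Two of these steps, Limit Capabilities and Inspect Regularly, lend themselves to a short sketch: an allowlist of permitted actions plus an audit log of every attempt. The action names and the in-memory `audit_log` list are assumptions for illustration; a real deployment would write to durable storage.

```python
from datetime import datetime, timezone

# Hypothetical allowlist: anything not listed here is denied by default.
ALLOWED_ACTIONS = {"draft_reply", "tag_ticket", "escalate"}

# In-memory log for the example only.
audit_log = []


def attempt(action: str, ticket_id: str) -> bool:
    """Record every attempted action and report whether it is permitted."""
    allowed = action in ALLOWED_ACTIONS
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "ticket_id": ticket_id,
        "action": action,
        "allowed": allowed,
    })
    return allowed


if __name__ == "__main__":
    attempt("draft_reply", "T-1")
    attempt("delete_customer", "T-1")
    denied = [entry for entry in audit_log if not entry["allowed"]]
    print(len(audit_log), len(denied))
```

Logging denied attempts as well as permitted ones matters for inspection: a rising count of denied actions is an early sign that the AI is drifting toward the edge of its remit.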

Human Oversight Still Matters

One of the strongest messages from the session was:

Higher stakes require greater human involvement.

Guardrails are not meant to eliminate humans; they are designed to create a human-AI collaboration model.

Organizations should implement:

- Approval checkpoints for high-risk decisions

- Confidence-based escalation when AI is uncertain

- Exception alerts for abnormal scenarios

- Regular audits and reviews of AI behavior

This hybrid governance model ensures AI accelerates operations without weakening accountability.
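The principle that higher stakes require greater human involvement can be sketched as a mapping from risk tier to oversight level. The three tiers, the oversight labels, and the 0.8 confidence floor are hypothetical; each organization would define its own.

```python
from enum import IntEnum


class Stakes(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical mapping from risk tier to the kind of human involvement.
OVERSIGHT = {
    Stakes.LOW: "audit_sample",            # humans review a sample afterwards
    Stakes.MEDIUM: "review_after_action",  # humans review each action afterwards
    Stakes.HIGH: "approve_before_action",  # humans approve before anything runs
}


def required_oversight(stakes: Stakes, confidence: float,
                       min_confidence: float = 0.8) -> str:
    """Pick an oversight level; low confidence raises the tier by one step."""
    if confidence < min_confidence and stakes < Stakes.HIGH:
        stakes = Stakes(stakes + 1)
    return OVERSIGHT[stakes]


if __name__ == "__main__":
    print(required_oversight(Stakes.LOW, 0.95))
    print(required_oversight(Stakes.LOW, 0.50))
    print(required_oversight(Stakes.HIGH, 0.99))
```

In this sketch uncertainty can only increase human involvement, never reduce it, which matches the confidence-based escalation described above.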

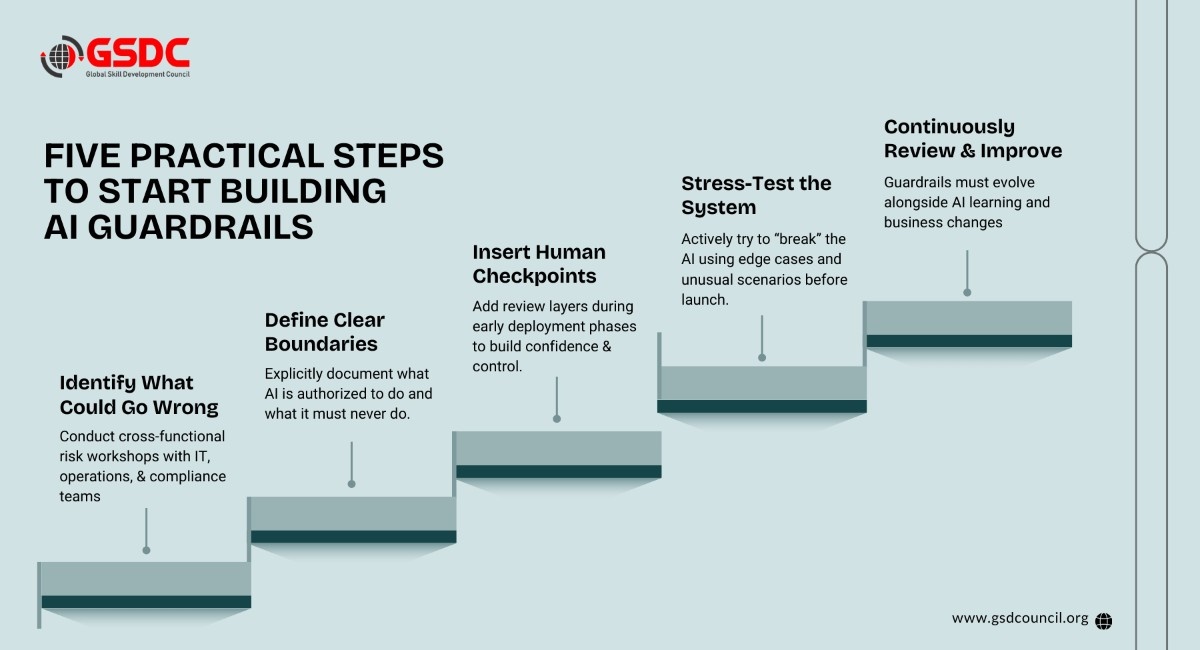

Start Simple, Then Scale

A key takeaway was that organizations should not delay AI adoption while waiting for perfect governance.

Instead:

- Begin with basic guardrails

- Deploy in controlled environments

- Monitor behavior

- Refine iteratively

AI systems improve over time; guardrails must do the same.

Why Guardrails Are Essential for the Future of AI-Driven Enterprises

As businesses adopt AI-powered workflows across HR, finance, customer experience, and product development, success will depend less on AI capability and more on AI governance maturity.

Organizations that invest in guardrails will gain:

- Safer AI adoption

- Faster employee trust

- Stronger regulatory readiness

- Better customer experiences

- Sustainable scalability of AI initiatives

In contrast, companies that deploy AI without structured oversight risk operational chaos, compliance failures, and erosion of trust.

GSDC’s Agentic AI Certification: Empowering the Autonomous AI Era

GSDC’s Agentic AI Certification is designed to help professionals understand the foundations, architecture, and governance of agentic systems. The program covers key concepts such as multi-agent systems, decision-making frameworks, AI orchestration, and the implementation of guardrails to ensure safe and predictable AI behavior. Participants gain practical knowledge of how autonomous agents operate, interact, and scale within modern enterprise environments.

This Agentic AI Certification also emphasizes building a strong AI governance framework and understanding the AI governance maturity model, enabling professionals to align AI initiatives with organizational policies, compliance requirements, and ethical standards.

Conclusion

Agentic AI is transforming how organizations operate, but its success depends on safe and responsible deployment. As autonomous AI agents begin making decisions and automating workflows, organizations must ensure these systems remain aligned with business goals and human intent. Guardrails such as input, process, and output controls help reduce risks and ensure predictable AI behavior.

By applying structured approaches like the ALIGN framework and implementing a strong AI governance framework, businesses can manage complex multi-agent systems effectively. With the right governance and an evolving AI governance maturity model, organizations can confidently scale those systems while maintaining trust, control, and long-term value.

FAQs

1. What are AI guardrails in simple terms?

AI guardrails are rules, validations, and monitoring mechanisms that ensure AI systems operate safely, ethically, and within defined business boundaries.

2. Why are guardrails important for agentic AI?

Because agentic AI can make decisions and take actions independently, guardrails prevent errors, policy violations, and unintended outcomes while maintaining trust and compliance.

3. Do guardrails slow down AI innovation?

No. They enable safe scaling. Guardrails create a structured environment where AI can be deployed confidently without introducing unmanaged risks.

4. How often should AI guardrails be reviewed?

Guardrails should be monitored continuously and formally reviewed at regular intervals, especially as AI systems learn, evolve, or expand into new use cases.

5. Where should organizations start when implementing guardrails?

Start by identifying the risks in a single AI use case. Then define clear limits, add human checkpoints, test thoroughly, and refine governance as adoption grows.
