Using Agentic AI for Workforce Decisions with Human Oversight

Written by Rajeswari Uthirapathy

Artificial intelligence is no longer a future concept in workforce management; it is already reshaping how organizations hire, manage, and retain talent. From résumé screening and interview scheduling to performance insights and compensation benchmarking, AI in human resources is increasingly trusted with decisions that directly affect people’s careers and livelihoods.

Among these advancements, Agentic AI represents a significant shift. Unlike traditional AI tools that only assist users, agentic systems can take actions independently, run autonomous multi-step workflows, and make recommendations at scale, often through several types of AI agents working together to automate complex processes. While this capability unlocks speed, consistency, and efficiency, it also raises an important question:

How can organizations use Agentic AI for workforce decisions while maintaining essential human oversight?

This article explores how AI has evolved in workforce management, what can go wrong without proper controls, and how organizations can strike the right balance between automation and human judgment through responsible AI implementation and effective enterprise AI governance.

The Evolution of AI in Workforce Decisions

Earlier AI tools in HR acted like intelligent assistants. They generated suggestions, summarized information, or highlighted patterns, but humans remained responsible for every decision. For example, AI could help draft interview questions or shortlist résumés by keyword, yet recruiters still reviewed and acted on those results manually.

Today’s AI operates very differently.

Modern Agentic AI systems can:

- Screen and rank candidates based on defined criteria

- Coordinate interview schedules by syncing calendars

- Send reminders to candidates and interviewers

- Aggregate and summarize interview feedback

- Recommend salary ranges using real-time market data

In practical terms, this means AI can manage entire segments of the hiring process autonomously. Organizations facing high application volumes can now process thousands of résumés in hours instead of weeks. However, this autonomy also shifts human roles from execution to oversight, judgment, and accountability.

Where AI Can Fail Without Human Oversight

Despite its intelligence, AI does not understand context the way humans do. When allowed to operate unchecked, even advanced systems can create unintended consequences.

1. Misinterpreting Human Context

AI often treats patterns as risk signals. For instance, a résumé with a career gap may be flagged as undesirable even if the break was due to caregiving, health, or education. AI can analyze data quickly, but a human reviewer understands this context; AI does not, unless it is explicitly guided through responsible AI implementation.

2. Reinforcing Hidden Biases

If AI hiring systems are trained on historical hiring data, they may learn and amplify past biases. For example, if previous successful hires came predominantly from a few institutions or backgrounds, the system may rank similar profiles higher while unintentionally excluding diverse talent. Without strong enterprise AI governance, AI agents used in hiring automation can replicate these biases at scale.

This is not hypothetical. Several organizations have publicly acknowledged abandoning AI hiring tools after discovering biased outcomes rooted in historical data.
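One way to catch this kind of bias early is to audit outcomes by group instead of trusting the model's ranking. The sketch below compares selection rates across groups and flags any group selected at less than a chosen fraction of the top group's rate. The function names, the group labels, and the 0.8 threshold are illustrative assumptions (the threshold mirrors the "four-fifths" heuristic used in some US adverse-impact analyses); a flag is a prompt for human review, not a verdict.

```python
from collections import Counter


def selection_rates(outcomes):
    """Share of candidates selected per group; outcomes is an iterable of (group, selected) pairs."""
    totals, selected = Counter(), Counter()
    for group, was_selected in outcomes:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def flag_disparities(outcomes, min_ratio=0.8):
    """Return {group: ratio} for groups whose rate is below min_ratio times the highest rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values(), default=0)
    if best == 0:
        return {}  # nobody was selected, so there is nothing meaningful to compare
    return {group: rate / best for group, rate in rates.items() if rate / best < min_ratio}


# Hypothetical screening results: 30 of 100 in group_a advanced, 15 of 100 in group_b.
results = (
    [("group_a", True)] * 30 + [("group_a", False)] * 70
    + [("group_b", True)] * 15 + [("group_b", False)] * 85
)
print(flag_disparities(results))
```

Here group_b is selected at half the rate of group_a, so it is flagged for a closer look at the criteria driving the ranking.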

3. Errors at Scale

When humans make mistakes, those mistakes are usually isolated and caught quickly. When an autonomous AI workflow makes a mistake, however, it can repeat the same error hundreds or thousands of times before anyone notices. A single misinterpreted job requirement, for example, can cause large pools of qualified candidates to be rejected automatically. This is why responsible AI implementation and strong enterprise AI governance are essential when deploying AI agents in HR processes.
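Because automated errors compound silently, one common safeguard is a circuit breaker that pauses an agent when its behavior drifts far from normal. The sketch below assumes the organization knows a typical auto-rejection rate for a role; `BatchResult`, `should_pause`, and the `tolerance` value are hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass
class BatchResult:
    total: int          # applications processed in this batch
    auto_rejected: int  # applications the agent rejected without human review


def should_pause(batch: BatchResult, baseline_reject_rate: float, tolerance: float = 0.15) -> bool:
    """Return True if this batch rejects far more candidates than usual and needs human review."""
    if batch.total == 0:
        return False
    rate = batch.auto_rejected / batch.total
    return rate > baseline_reject_rate + tolerance


# A misread requirement suddenly rejects 950 of 1,000 applicants against a usual 60%.
print(should_pause(BatchResult(total=1000, auto_rejected=950), baseline_reject_rate=0.6))  # True
print(should_pause(BatchResult(total=1000, auto_rejected=650), baseline_reject_rate=0.6))  # False
```

Pausing on a per-batch check means a bad rule is stopped after one batch rather than after thousands of rejections.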

The paradox is clear:

The more powerful AI becomes, the more important human involvement becomes, not less.

A Helpful Analogy: AI as Autopilot, Humans as Pilots

A useful way to understand this balance is through an analogy from modern aviation.

Commercial airplanes use sophisticated autopilot systems that handle routine flying tasks with extreme precision. Yet pilots are always present. Why? Autopilot systems cannot manage unexpected situations, make ethical decisions, or take accountability when things go wrong.

In AI-powered workforce management:

- AI is the autopilot, handling volume, consistency, and speed through automated processes and specialized AI agents.

- Humans are the pilots, making judgment calls, managing exceptions, and taking responsibility, supported by responsible AI implementation and strong enterprise AI governance.

AI can screen thousands of candidates efficiently, but humans must decide whether a non-traditional background, career shift, or unique skill set makes someone right for the role.

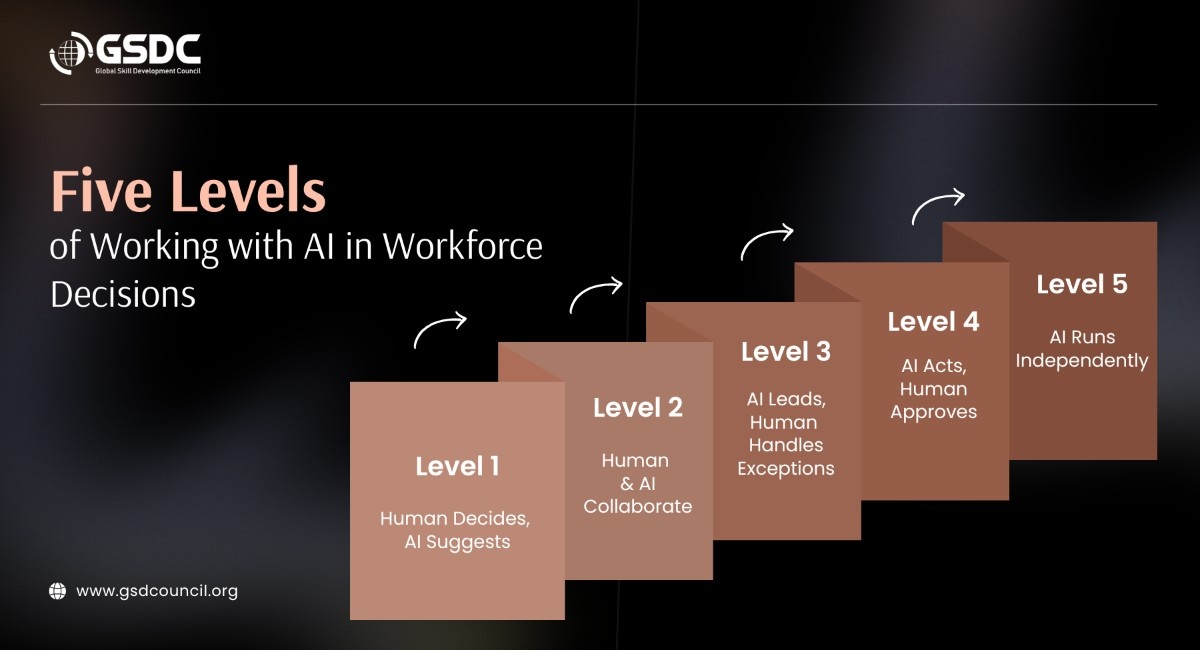

Five Levels of Working with AI in Workforce Decisions

Not all decisions require the same level of automation or oversight. A practical approach is to think in five levels, ranging from maximum human control to full AI autonomy.

Level 1: Human Decides, AI Suggests

AI provides insights, analysis, or data, but humans make every decision.

Example: AI analyzes market compensation trends, but HR decides the final offer.

Level 2: Human and AI Collaborate

AI drafts or proposes options, and humans review, refine, and finalize.

Example: AI drafts job descriptions, which are then adjusted to reflect company culture and role nuance.

Level 3: AI Leads, Human Handles Exceptions

AI completes tasks but flags uncertain or complex cases for review.

Example: AI shortlists candidates but highlights profiles with unconventional experience for human evaluation.

Level 4: AI Acts, Human Approves

AI executes actions, but nothing is finalized without human approval.

Example: Interview schedules are prepared by AI, but sent only after review.

Level 5: AI Runs Independently

Reserved for low-risk, high-volume tasks with minimal impact.

Example: Automated interview reminders and status updates.

The guiding principle is simple:

Higher stakes require higher human involvement.
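The five levels can be written down as an explicit policy so that an agent checks, before finalizing anything, whether a person has to act. This is a minimal sketch: the enum member names and the `needs_human` helper are hypothetical, and a real system would attach a level to each task type in its configuration.

```python
from enum import IntEnum


class OversightLevel(IntEnum):
    HUMAN_DECIDES = 1           # AI suggests, human makes every decision
    COLLABORATE = 2             # AI drafts, human reviews and finalizes
    AI_LEADS = 3                # AI completes tasks, flags uncertain cases
    AI_ACTS_HUMAN_APPROVES = 4  # AI prepares actions, human approves each one
    AI_INDEPENDENT = 5          # AI runs alone on low-risk, high-volume tasks


def needs_human(level: OversightLevel, flagged: bool = False) -> bool:
    """Return True if a person must decide or approve before the outcome is final."""
    if level == OversightLevel.AI_INDEPENDENT:
        return False
    if level == OversightLevel.AI_LEADS:
        return flagged  # only flagged exceptions reach a reviewer
    return True  # levels 1, 2 and 4 always end with a human decision or approval


print(needs_human(OversightLevel.AI_INDEPENDENT))          # False
print(needs_human(OversightLevel.AI_LEADS))                # False
print(needs_human(OversightLevel.AI_LEADS, flagged=True))  # True
print(needs_human(OversightLevel.AI_ACTS_HUMAN_APPROVES))  # True
```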

Mapping AI Across the Hiring Lifecycle

When applied thoughtfully, AI can support each stage of hiring differently:

- Job postings and application collection: low risk and high volume, so AI can run autonomously (Level 5)

- Résumé screening: medium risk; AI leads and humans review edge cases (Level 3)

- Interview scheduling: coordination-heavy; AI acts with approval checkpoints (Level 4)

- Candidate evaluation: high stakes; AI summarizes data and humans decide (Level 1)

- Offer decisions: highest stakes; AI suggests and humans finalize (Level 1)

This approach ensures efficiency without sacrificing fairness, accountability, or trust.
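The stage-by-stage mapping above could be captured as a small policy table that an agent consults before acting. In this sketch the stage keys are hypothetical, the levels follow the five-level model described earlier, and any stage missing from the table defaults to Level 1, so new or unreviewed steps fail safe toward human control.

```python
# Hypothetical stage names mapped to oversight levels
# (1 = human decides, AI suggests; 5 = AI runs independently).
HIRING_POLICY = {
    "job_posting": 5,           # low risk, high volume
    "resume_screening": 3,      # AI leads, humans review edge cases
    "interview_scheduling": 4,  # AI acts, humans approve
    "candidate_evaluation": 1,  # AI summarizes, humans decide
    "offer_decision": 1,        # AI suggests, humans finalize
}


def oversight_level(stage: str) -> int:
    """Look up a stage's level; unmapped stages get maximum human control."""
    return HIRING_POLICY.get(stage, 1)


def autonomous_stages() -> list:
    """Stages the agent may run end to end without a human in the loop."""
    return [stage for stage, level in HIRING_POLICY.items() if level == 5]


print(oversight_level("resume_screening"))  # 3
print(oversight_level("background_check"))  # 1 (not in the table, so fail safe)
print(autonomous_stages())                  # ['job_posting']
```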

Practical Ways to Maintain Human Control

To use Agentic AI responsibly, organizations can implement four practical control mechanisms:

- Approval Checkpoints: AI prepares actions, but humans must approve before execution.

- Confidence-Based Escalation: AI proceeds when confident and alerts humans only when uncertain.

- Exception Alerts: Humans intervene only for outliers, not routine cases.

- Regular Audits and Reviews: Periodic reviews ensure AI decisions remain fair and aligned with organizational values.

Combining these methods allows organizations to scale AI usage safely while maintaining oversight.
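The first three mechanisms can be combined into a single routing rule that runs before an agent executes any action, with regular audits then reviewing the routed outcomes. The sketch assumes the agent reports a confidence score between 0 and 1 and that an upstream check marks outliers, neither of which every tool provides; `ProposedAction`, `route`, and the 0.85 threshold are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ProposedAction:
    description: str
    confidence: float          # agent's confidence, 0.0 to 1.0 (assumed to be available)
    is_outlier: bool = False   # e.g. an unconventional profile flagged upstream
    high_stakes: bool = False  # e.g. rejections or offers


def route(action: ProposedAction, confidence_threshold: float = 0.85) -> str:
    """Decide whether an action runs now or goes to a person first."""
    if action.high_stakes:
        return "await_approval"   # approval checkpoint: a human signs off before execution
    if action.is_outlier:
        return "exception_alert"  # outliers always reach a reviewer
    if action.confidence < confidence_threshold:
        return "escalate"         # confidence-based escalation
    return "execute"              # routine, confident, low-stakes work proceeds


print(route(ProposedAction("send interview reminder", confidence=0.97)))             # execute
print(route(ProposedAction("shortlist candidate", confidence=0.62)))                 # escalate
print(route(ProposedAction("reject candidate", confidence=0.99, high_stakes=True)))  # await_approval
```

Checking stakes first means even a highly confident agent cannot skip the approval checkpoint on consequential actions.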

Common Mistakes to Avoid

Organizations adopting AI often stumble in predictable ways:

- Blind approvals: Clicking “approve” without reviewing undermines oversight

- No override mechanism: Without a way to reverse AI decisions, automatically rejected candidates cannot be recovered

- Lack of audit trails: Inability to explain AI decisions increases legal and ethical risk

Maintaining logs, keeping rejected profiles searchable, and documenting decision criteria are essential best practices.
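These practices can be sketched as an append-only decision log in which a human override adds a new entry instead of erasing the original, so the audit trail stays complete and rejected profiles remain searchable. The class, method, and field names are hypothetical, and a production system would persist entries to a database rather than keep them in memory.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Decision:
    candidate_id: str
    outcome: str     # "advanced" or "rejected"
    reason: str      # documented criterion behind the decision
    decided_by: str  # "agent" or a reviewer's ID
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class DecisionLog:
    """Append-only log: overrides add entries, they never delete earlier ones."""

    def __init__(self):
        self._entries = []

    def record(self, candidate_id, outcome, reason, decided_by="agent"):
        entry = Decision(candidate_id, outcome, reason, decided_by)
        self._entries.append(entry)
        return entry

    def history(self, candidate_id):
        """Every decision ever made about a candidate, oldest first."""
        return [e for e in self._entries if e.candidate_id == candidate_id]

    def current(self, candidate_id):
        """Latest decision for a candidate, or None if there is none."""
        entries = self.history(candidate_id)
        return entries[-1] if entries else None

    def override(self, candidate_id, reviewer, reason):
        """Human override: reinstate a rejected candidate, keeping the original entry."""
        latest = self.current(candidate_id)
        if latest is None or latest.outcome != "rejected":
            raise ValueError(f"no rejection on record for {candidate_id}")
        return self.record(candidate_id, "advanced", reason, decided_by=reviewer)

    def rejected(self, reason_contains=""):
        """Candidates whose latest decision is a rejection, filtered by reason text."""
        latest = {}
        for entry in self._entries:
            latest[entry.candidate_id] = entry
        return [e for e in latest.values()
                if e.outcome == "rejected" and reason_contains in e.reason]


log = DecisionLog()
log.record("c-101", "rejected", "missing required certification")
log.record("c-102", "advanced", "meets all listed criteria")
print([e.candidate_id for e in log.rejected("certification")])  # ['c-101']
log.override("c-101", "reviewer-7", "certification listed under a different name")
print(log.current("c-101").outcome)  # advanced
```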

How to Get Started with Agentic AI

For organizations beginning their AI journey:

- Start with one step, not the entire process

- Assign a clear owner for AI oversight

- Use simple checklists for reviews

- Enable decision logging and transparency

- Expand gradually as confidence grows

Starting small allows teams to learn quickly and reduce risk.

GSDC Agentic AI Certification

The Agentic AI Certification program trains professionals to create, operate, and control autonomous artificial intelligence systems. The program covers key concepts such as types of AI agents, autonomous AI workflows, and responsible AI implementation.

GSDC's program teaches enterprise AI governance and shows professionals how to apply artificial intelligence across human resources and business operations with transparency, accountability, and human supervision.

Conclusion

Agentic AI improves workforce decision-making in three ways: speed, consistency, and capacity. Fairness, accountability, and ethical judgment, however, remain human responsibilities. The future of workforce management lies in AI-human collaboration, with each side contributing its own strengths.

FAQs

1. What is Agentic AI in workforce management?

Agentic AI refers to AI systems that can independently take actions such as screening candidates or scheduling interviews rather than simply providing recommendations.

2. Can AI replace human decision-making in hiring?

No. While AI can handle volume and consistency, humans are essential for judgment, ethical reasoning, and accountability in high-stakes decisions.

3. How can organizations prevent bias in AI hiring tools?

By auditing outcomes regularly, reviewing edge cases, diversifying training data, and maintaining human oversight in critical decisions.

4. Which hiring tasks are safest to automate fully?

Low-risk, high-volume tasks such as interview reminders, application acknowledgments, and scheduling confirmations.

5. What is the biggest risk of using AI without oversight?

Errors and biases can scale rapidly, leading to unfair hiring outcomes and loss of qualified candidates without anyone noticing.
