AI Testing 101: A Practical Guide to Skills, Basics & Getting Started

Written by Matthew Hale

We interact with AI far more often than we realise. From banking alerts and online shopping suggestions to healthcare systems, HR tools, and cybersecurity platforms, AI now plays a major role in the decisions and experiences we rely on every day.

As AI becomes a core part of these digital systems, ensuring its accuracy, fairness, and safety has become essential. That’s why AI testing, AI quality assurance, and AI model evaluation are quickly emerging as critical skills for QA professionals.

This blog walks you through the basics of AI testing in a clear and approachable way - covering what it is, why it matters, the skills it requires, and how testers can begin building expertise in this evolving area.

What Is AI Testing?

AI testing is the process of ensuring that an AI system works the way it should - not just once, but consistently and responsibly. It involves checking whether an AI model:

- Produces accurate and reliable outputs

- Responds consistently across different types of inputs

- Treats all users fairly

- Performs safely in real-world conditions

- Provides transparent and understandable results

- Adjusts appropriately as data and environments change

While traditional systems follow fixed, rule-based logic, AI systems learn from data. This makes their behaviour probabilistic - meaning outcomes can vary based on patterns, inputs, and model training.

In simple terms:

Traditional testing checks predefined logic.

AI testing evaluates evolving behaviour.

Because of this, AI testing requires a different approach and is more complex than traditional QA.
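To make the contrast concrete, the sketch below places a traditional exact-match check next to a threshold-based check on a model. Everything in it is hypothetical and chosen only for illustration: the `apply_discount` function, the `toy_spam_model` stand-in (a keyword heuristic, not a trained model), the four labelled examples, and the 0.75 acceptance threshold.

```python
def apply_discount(price: float, percent: float) -> float:
    """Rule-based logic: the same input always gives the same output."""
    return round(price * (1 - percent / 100), 2)


def toy_spam_model(text: str) -> str:
    """Hypothetical stand-in for a trained model (a simple keyword heuristic)."""
    lowered = text.lower()
    return "spam" if "free" in lowered or "winner" in lowered else "ham"


def accuracy(model, labelled_examples) -> float:
    """Fraction of examples where the model's prediction matches the label."""
    correct = sum(1 for text, label in labelled_examples if model(text) == label)
    return correct / len(labelled_examples)


# Traditional test: one input, one exact expected output.
assert apply_discount(200.0, 10) == 180.0

# AI-style test: aggregate behaviour over a labelled set must clear a threshold.
EXAMPLES = [
    ("Claim your FREE prize now", "spam"),
    ("You are a winner, click here", "spam"),
    ("Meeting moved to 3pm", "ham"),
    ("Free parking is available at the office", "ham"),  # the toy model gets this one wrong
]
MIN_ACCURACY = 0.75  # assumed acceptance threshold, not a recommended value

score = accuracy(toy_spam_model, EXAMPLES)
assert score >= MIN_ACCURACY, f"accuracy {score:.2f} is below {MIN_ACCURACY}"
```

The second check tolerates an individual wrong answer as long as overall behaviour stays above the agreed bar, which is the shift in mindset this section describes.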

Why AI Testing Is Important

AI now influences critical decisions across finance, healthcare, hiring, security, and customer-facing systems. When AI behaves incorrectly or unfairly, the impact can be serious for both users and organisations.

AI testing helps ensure the following:

- Accuracy: AI outputs are correct, sensible, and in line with expected results.

- Fairness: Decisions are free from bias and fair to all user groups.

- Reliability: The model behaves consistently across different scenarios and inputs.

- Safety: The AI does not take harmful actions or, even indirectly, lead to situations it was not designed for.

- Transparency: The reasons behind the system's decisions can be communicated to and understood by stakeholders.

- Real-world readiness: The AI system can withstand noisy data, changing conditions, and heavy usage.

In high-stakes environments, untested AI creates operational, ethical, and regulatory risks. This is why AI software testing and AI performance testing have become essential capabilities for modern QA teams.

How AI Testing Differs From Traditional Testing

AI systems behave very differently from traditional software, which means the testing approach must also change. The comparison below highlights the key differences:

Because of these differences, AI testing requires evaluating accuracy metrics, patterns, model confidence, drift, and fairness, rather than relying solely on predefined expected results.

AI testing has also introduced new categories, such as:

- AI model testing

- AI robustness testing

- Testing machine learning models

- AI validation and verification

In short, AI testing expands the tester’s role from checking outputs to evaluating behaviour, risk, and long-term model performance.

Key Areas of AI Testing

AI testing can be understood through four core pillars. Each area addresses risks commonly seen in real-world AI deployments.

1. Data Testing

High-quality data is the foundation of any AI system.

Industry analyses frequently attribute a large share of AI project failures, with figures of 70–85% often quoted, to poor or imbalanced data.

To avoid these risks, testers examine:

- Completeness

- Representation

- Accuracy

- Balance

- Bias risks

Strong data testing prevents unreliable predictions and unfair outcomes.
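Two of the checks listed above, completeness and balance, can be sketched in a few lines of plain Python. The loan-application records and field names below are invented for illustration, and a real project would more likely run equivalent checks with a data-validation library.

```python
from collections import Counter


def completeness(rows, required_fields):
    """Share of rows in which every required field is present and non-empty."""
    complete = sum(
        1
        for row in rows
        if all(row.get(field) not in (None, "") for field in required_fields)
    )
    return complete / len(rows)


def class_balance(rows, label_field):
    """Proportion of rows for each value of the label field."""
    counts = Counter(row[label_field] for row in rows)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}


# Hypothetical loan-application records, for illustration only.
ROWS = [
    {"age": 34, "income": 52000, "approved": "yes"},
    {"age": 45, "income": None, "approved": "yes"},  # missing income
    {"age": 29, "income": 41000, "approved": "yes"},
    {"age": 51, "income": 67000, "approved": "no"},
]

print(completeness(ROWS, ["age", "income"]))  # 0.75
print(class_balance(ROWS, "approved"))        # {'yes': 0.75, 'no': 0.25}
```

A tester would compare these numbers against thresholds agreed with the team, for example flagging any label that falls below a minimum share of the dataset.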

2. Model Behaviour Testing

AI model outputs are probabilistic and can be non-deterministic, so testers assess how the model behaves rather than expecting fixed outputs. This includes:

- Accuracy

- Error patterns

- Consistency

- Confidence levels

- Edge-case responses

Studies show AI accuracy can drop 20–30% when evaluated outside ideal conditions.

Effective behaviour testing ensures the model performs as expected in practical scenarios.
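As a rough illustration of two items from the list above, error patterns and confidence levels, the sketch below summarises a small batch of predictions. The labels, confidence scores, and the 0.6 review threshold are all made-up values, and the tuple layout is an assumption rather than the output format of any particular tool.

```python
from collections import Counter


def error_patterns(predictions):
    """Count (actual, predicted) pairs for misclassified items only."""
    return Counter(
        (actual, predicted)
        for actual, predicted, _confidence in predictions
        if actual != predicted
    )


def low_confidence(predictions, threshold):
    """Return the predictions whose confidence falls below the threshold."""
    return [p for p in predictions if p[2] < threshold]


# (actual label, predicted label, model confidence) - hypothetical values.
PREDICTIONS = [
    ("cat", "cat", 0.97),
    ("dog", "cat", 0.55),
    ("dog", "dog", 0.91),
    ("dog", "cat", 0.62),
    ("cat", "cat", 0.58),
]

# Both errors are dogs predicted as cats, which points at one specific weakness.
print(error_patterns(PREDICTIONS))

# Low-confidence answers are worth reviewing even when they happen to be correct.
print(low_confidence(PREDICTIONS, threshold=0.6))
```

Grouping errors this way turns a single accuracy figure into something a tester can act on: which classes get confused, and where the model itself is unsure.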

3. Fairness & Explainability Testing

AI may unintentionally disadvantage certain user groups due to biased or incomplete training data.

Research shows accuracy drops of 25–35% for underrepresented demographics in several models.

Fairness and explainability testing aims to ensure:

- Fair and equitable outcomes

- Compliance with ethical and regulatory standards

- Clear and transparent explanations

This helps organisations deliver responsible and trustworthy AI systems.
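One simple way to look for the kind of gap described above is to compute accuracy separately for each user group and compare the results. The sketch below does that on invented records with placeholder group names. An accuracy gap is only one of several possible fairness measures, so treat this as a starting point rather than a complete fairness assessment.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Accuracy computed separately for each group."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for group, actual, predicted in records:
        totals[group] += 1
        hits[group] += int(actual == predicted)
    return {group: hits[group] / totals[group] for group in totals}


def max_accuracy_gap(per_group):
    """Difference between the best-served and worst-served group."""
    return max(per_group.values()) - min(per_group.values())


# (group, actual outcome, predicted outcome) - hypothetical records.
RECORDS = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 1, 0),
]

per_group = accuracy_by_group(RECORDS)
print(per_group)                    # {'group_a': 1.0, 'group_b': 0.5}
print(max_accuracy_gap(per_group))  # 0.5
```

An overall accuracy of 75% on this data would hide the fact that one group is served far worse than the other, which is why the per-group breakdown matters.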

4. Real-World Performance Testing

AI must remain stable as data, environments, and user behaviour change. Testers examine:

- Handling of noisy or incomplete inputs

- Load performance

- Drift detection

- Adaptability to new patterns

Industry surveys show model drift is a leading cause of AI failures in production.

Real-world performance testing ensures long-term stability and operational reliability.
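Drift detection can take many forms. The sketch below uses the Population Stability Index (PSI), one widely used measure, to compare how a single input feature is distributed in training data versus recent production data. The age values and bin edges are invented for illustration, and the 0.25 cut-off mentioned in the comment is a commonly quoted rule of thumb rather than a fixed standard.

```python
import math


def bin_shares(values, edges):
    """Share of values in each bin.

    Bins are [low, high) except the last, which also includes its upper edge.
    Values outside the edges are not counted in any bin.
    """
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            if edges[i] <= v < edges[i + 1] or (is_last and v == edges[i + 1]):
                counts[i] += 1
                break
    return [c / len(values) for c in counts]


def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index between a baseline and a newer sample."""
    total = 0.0
    for e, a in zip(bin_shares(expected, edges), bin_shares(actual, edges)):
        e, a = max(e, eps), max(a, eps)  # avoid log(0) for empty bins
        total += (a - e) * math.log(a / e)
    return total


# Hypothetical customer ages seen in training versus in production.
TRAIN_AGES = [22, 25, 28, 31, 35, 38, 42, 47, 55, 63]
PROD_AGES = [19, 21, 23, 24, 26, 29, 33, 40, 45, 58]
EDGES = [0, 30, 50, 100]

drift_score = psi(TRAIN_AGES, PROD_AGES, EDGES)
print(round(drift_score, 3))  # 0.379, above the often-quoted 0.25 rule of thumb
```

Here the production population has shifted towards younger users, so a model trained on the original mix may no longer behave as it did during evaluation.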

These four pillars form the foundation of effective AI software testing, and professionals looking to strengthen these capabilities can benefit from the Certified AI Testing Professional (CAITP) program to build job-ready, globally aligned AI testing skills.


Skills Required for AI Testing

AI testing does not demand deep machine learning expertise. Most QA professionals can transition into this area using their existing foundations and targeted upskilling.

Key competencies include:

- Strong QA fundamentals

- Understanding of basic data concepts

- Critical thinking and analytical ability

- Familiarity with AI testing tools and platforms

- Skill in identifying patterns, anomalies, and model behaviour

- Awareness of bias, fairness, and explainability considerations

Many testers already have a significant portion of these skills, making AI testing a natural and achievable progression for QA professionals.

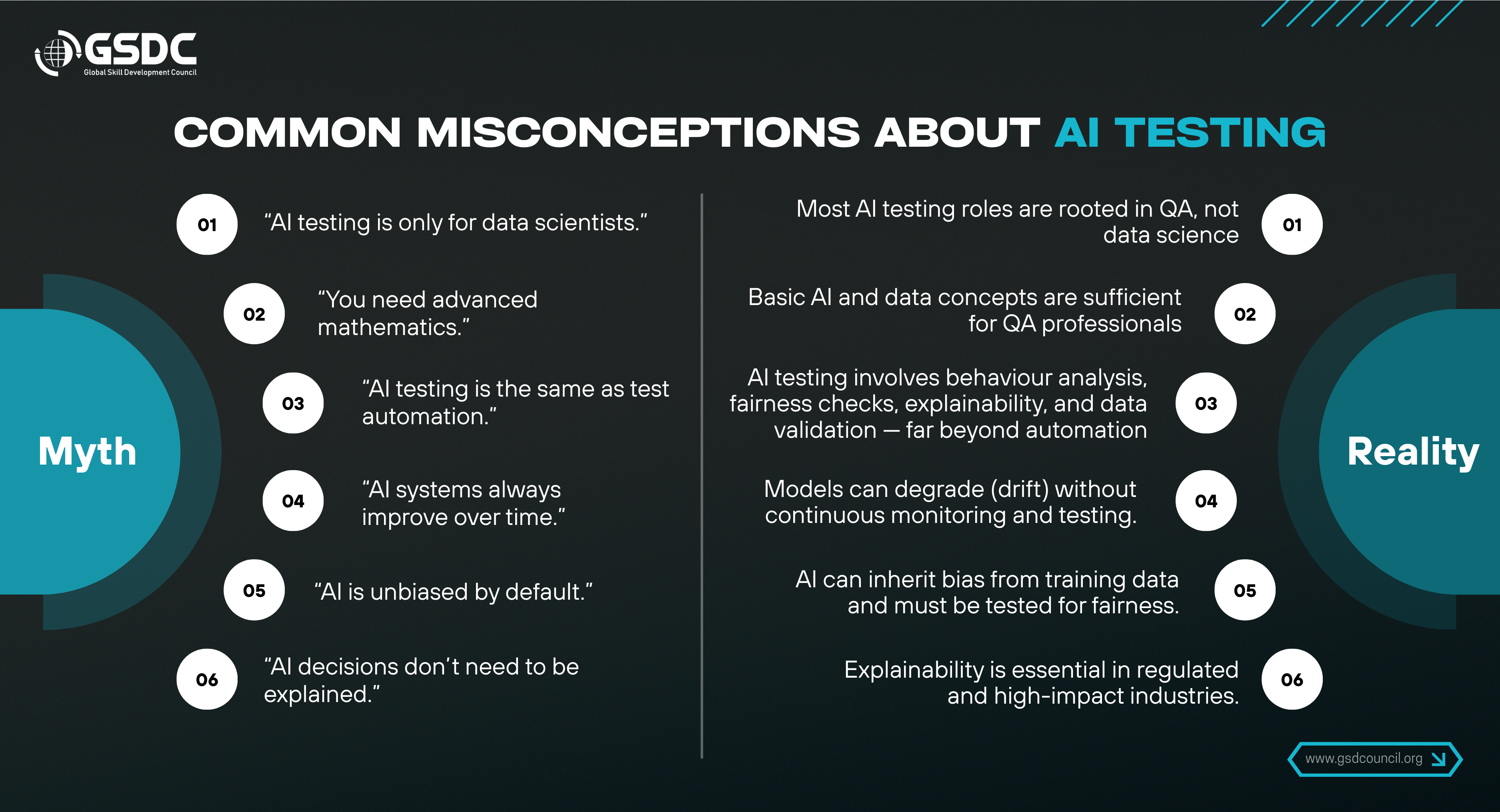

Common Misconceptions About AI Testing

Many testers hesitate to explore AI testing because of a few common misconceptions.

A clear understanding of these misconceptions helps testers adopt AI testing with confidence and the right expectations.

How to Start Learning AI Testing

A clear four-step approach can help testers begin their journey into AI testing.

Step 1: Learn Basic AI Concepts

Get familiar with core terms such as model, dataset, training, accuracy, precision, bias, drift, and prediction.
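Two of these terms, accuracy and precision, are easy to confuse, so a small worked example may help. The sketch below computes both from a list of actual and predicted labels. The ten labels are invented, and `1` is assumed to mean the positive class (for example, "fraud").

```python
def confusion_counts(actual, predicted, positive=1):
    """Return (true positives, false positives, true negatives, false negatives)."""
    tp = fp = tn = fn = 0
    for a, p in zip(actual, predicted):
        if p == positive and a == positive:
            tp += 1
        elif p == positive:
            fp += 1
        elif a == positive:
            fn += 1
        else:
            tn += 1
    return tp, fp, tn, fn


def accuracy(actual, predicted):
    """Share of all predictions that are correct."""
    tp, fp, tn, fn = confusion_counts(actual, predicted)
    return (tp + tn) / (tp + fp + tn + fn)


def precision(actual, predicted):
    """Share of positive predictions that are actually positive."""
    tp, fp, _tn, _fn = confusion_counts(actual, predicted)
    return tp / (tp + fp) if (tp + fp) else 0.0


# Hypothetical labels: 1 = positive class, 0 = negative class.
ACTUAL = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
PREDICTED = [1, 0, 1, 0, 0, 0, 0, 0, 0, 0]

print(accuracy(ACTUAL, PREDICTED))   # 0.8
print(precision(ACTUAL, PREDICTED))  # 0.5
```

The model looks good on accuracy because most cases are negative, yet only half of its positive predictions are right. That is why testers usually look at more than one metric.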

Step 2: Explore AI Testing Tools

Use tools that support AI-assisted testing, AI-driven automation, defect prediction, and intelligent analysis.

This builds foundational experience in AI test automation.

Step 3: Practice With Simple AI Features

Start testing AI-driven components such as:

- Chatbots

- Recommendation engines

- Sentiment analysis models

- Image recognition models

Hands-on exploration helps you understand AI model behaviour more effectively.
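A practical first exercise is a consistency check: make small changes to an input that should not change its meaning, and confirm the prediction stays the same. The sketch below applies this idea to a sentiment model. The `toy_sentiment` function is a hypothetical keyword-based stand-in for a real model, and the perturbations are deliberately simple.

```python
POSITIVE_WORDS = {"great", "love", "excellent"}
NEGATIVE_WORDS = {"bad", "terrible", "hate"}


def toy_sentiment(text):
    """Hypothetical stand-in for a sentiment model (keyword counting)."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    score = sum(w in POSITIVE_WORDS for w in words) - sum(w in NEGATIVE_WORDS for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


def perturbations(text):
    """Variants that should not change the meaning of the input."""
    return [text.upper(), text.lower(), f"  {text}  ", text + "!!!"]


def inconsistent_variants(model, text):
    """Return the variants whose prediction differs from the original input's."""
    baseline = model(text)
    return [variant for variant in perturbations(text) if model(variant) != baseline]


review = "I love this phone, the camera is excellent"
print(toy_sentiment(review))                         # positive
print(inconsistent_variants(toy_sentiment, review))  # []
```

To try this on a real chatbot or sentiment service, replace `toy_sentiment` with a function that calls the system under test. Any variant that comes back in the list is a candidate defect worth investigating.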

Step 4: Follow a Structured Learning Path

Webinars, workshops, professional communities, and certification programs from bodies such as the Global Skill Development Council (GSDC) provide structured learning and globally aligned competence in AI testing.

AI Testing Best Practices

- Evaluate data quality at the earliest stage

- Test for fairness across different user segments

- Use diverse and representative datasets for validation

- Assess explainability when required by stakeholders or regulations

- Combine manual testing, automation, and AI-powered testing techniques

- Monitor accuracy, stability, and model drift over time

- Document known limitations, risks, and assumptions clearly

These practices help ensure reliable, responsible, and long-term performance of AI systems.

Your Next Step in AI Testing Competency

For professionals seeking structured, globally recognised credentials in AI testing, the Certified AI Testing Professional (CAITP) program offers a comprehensive pathway to develop real-world competence in this domain. It equips learners with practical frameworks for model evaluation, fairness assessment, explainability testing, robustness checks, and end-to-end AI validation.

Delivered through the Global Skill Development Council (GSDC), the CAITP program enables testers to build industry-aligned expertise and transition confidently into specialised AI QA and model testing roles.

Conclusion

As AI becomes embedded across industries, AI testing has emerged as a critical discipline within modern software quality assurance. It plays a central role in ensuring that AI systems remain accurate, fair, transparent, and dependable throughout their lifecycle.

Testers do not need deep machine learning expertise to begin. With strong QA fundamentals, an understanding of data-driven behaviour, and a structured approach to learning, any QA professional can transition into AI testing with confidence.

Building AI testing skills today places professionals at the forefront of responsible, future-ready innovation and positions them for high-impact roles in the evolving technology landscape.
