Who Is Responsible When Agentic AI Makes Decisions?

Written by Akshad Modi

- What Makes Agentic AI Different?

- Can AI Be Held Responsible?

- Why Accountability Is So Important

- Shared Responsibility: Who Is Involved?

- Planning for Mistakes: Foreseeable Risks

- Making AI Decisions Easier to Understand

- Controlling What AI Agents Are Allowed to Do

- Working With AI Vendors and Tools

- Skills Needed to Manage Agentic AI Responsibly

- Final Thoughts: Trust Comes From Accountability

Remember the viral case where an airline had to honor incorrect fare terms shared by its chatbot? Even though the mistake was made by AI, the company was held responsible.

As AI agents take on bigger roles and carry out tasks on their own, mistakes like this raise an important question: when AI acts independently, who is responsible?

Agentic AI refers to artificial intelligence systems that can plan, make decisions, and take actions on their own, with limited human involvement. Put simply, these are AI systems that can work independently.

Unlike basic chatbots or traditional automation tools, agentic AI systems can make decisions and trigger real business actions. This distinction is often highlighted when comparing generative AI with agentic AI.

As more organizations adopt agentic AI frameworks, accountability in autonomous AI systems becomes critical.

What Makes Agentic AI Different?

To see why accountability matters so much, it helps to first understand how agentic AI differs from traditional software and earlier AI systems.

| Aspect | Traditional Software | Agentic AI |
|---|---|---|
| Decision-making | Follows strict rules set by humans | Chooses its own actions to reach a goal |
| Learning ability | Does not change behavior unless manually updated | Adapts based on data and past results |
| Level of autonomy | Requires human input at every step | Operates with little need for human intervention |
| System interaction | Usually limited to a single system | Works across several systems |
| Task execution | Performs only predefined tasks | Carries out tasks without asking for human permission each time |
| Real-world examples | Simple automation scripts | Approving refunds, reordering inventory, scheduling updates, shortlisting candidates, triggering transactions |

Because agentic AI systems act on their own, these real-world use cases also carry higher risk. When something goes wrong, the error can have serious business, legal, or financial consequences, which is why accountability in autonomous AI systems is critical.

Can AI Be Held Responsible?

The short answer is no.

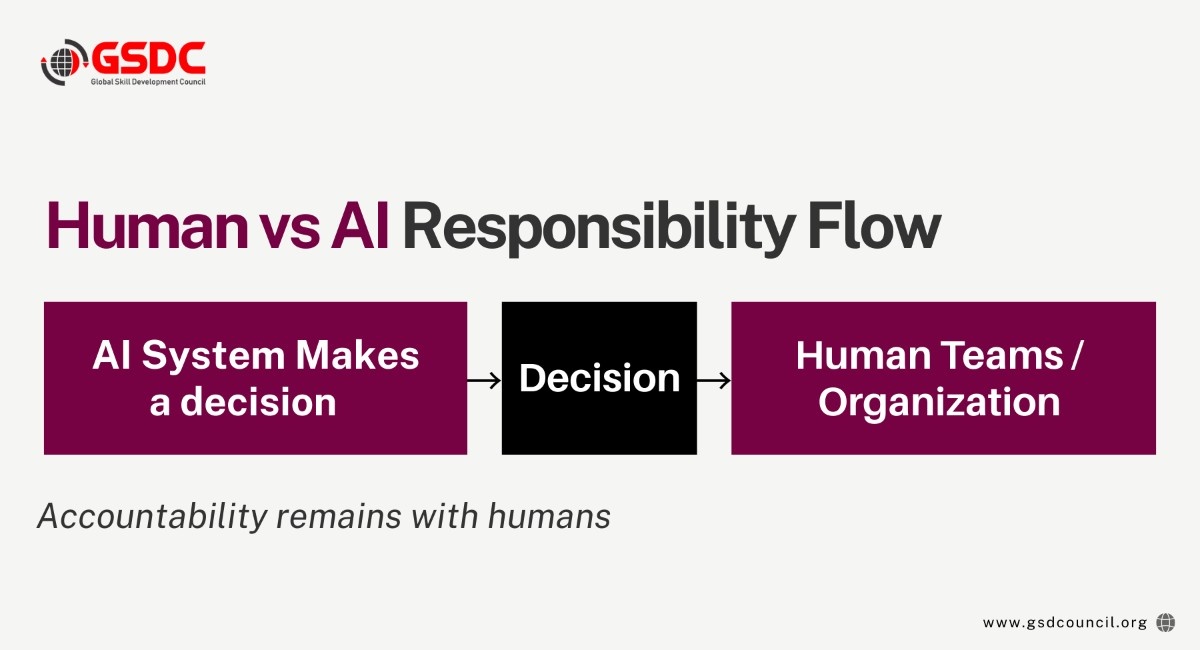

However capable they are, AI systems cannot be held legally or ethically responsible for their actions, because they lack intention and legal personality. Responsibility ultimately rests with people and organizations.

In practice, accountability for agentic AI is shared across key roles:

- The organization deploying the AI, which is ultimately responsible for the results

- Technical teams, who create, configure, and supervise the system

- Business executives, who approve the use of AI and set its purpose

- Governance, risk, and compliance (GRC) bodies, which check that policies and regulations are followed

Even when agentic AI systems make decisions on their own, humans remain responsible for the results. AI may act independently, but accountability never shifts away from the people who deploy it.

This principle is also reflected in global skills and governance discussions supported by bodies such as the Global Skill Development Council, which emphasize human responsibility and oversight as essential elements of responsible AI use.

Why Accountability Is So Important

Recent news about agentic AI keeps returning to the same point: without proper governance, autonomous AI systems can cause real problems.

One example is Air Canada, which was held liable after its chatbot gave a customer incorrect fare information. The AI made the mistake, but the airline, not the machine, had to answer for it.

Cases like this point to typical risks, such as:

- An AI agent that approves something it should not

- An AI system that relies on incorrect or biased data

- An AI tool that misunderstands its objective

- An AI agent that takes actions beyond what was intended

When it is not clear who is accountable, organizations struggle with:

- Learning from mistakes

- Explaining AI decisions to regulators and auditors

- Gaining and maintaining customer confidence

- Preventing the same problems from recurring

Planning for Mistakes: Foreseeable Risks

Responsible organizations that use agentic AI plan for failure scenarios in advance. Because these systems act independently, they can cause harm, so it is crucial to think through the risks before deployment.

Key questions organizations should ask include:

- What errors can this AI system make?

- What happens if the AI system misinterprets its instructions or objectives?

- Could the AI cause financial, legal, or reputational harm?

- What should happen if the AI behaves unexpectedly?

By answering these questions in advance, organizations can:

- Require human review in the areas where it is indispensable

- Set strict limits on what AI agents are allowed to do

- Define when humans must step in, and set up clear escalation paths

Thinking ahead helps organizations reduce risk, respond faster when issues arise, and use autonomous AI systems more responsibly.
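To make the idea of intervention rules and escalation paths more concrete, here is a minimal Python sketch of a routing rule that decides whether an agent's proposed action runs automatically, goes to a human for review, or is blocked. The action types, monetary limits, and confidence threshold are illustrative assumptions, not recommended values; each organization would set its own based on its risk assessment.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"  # the agent may act on its own
    HUMAN_REVIEW = "human_review"  # a named person must approve first
    BLOCK = "block"                # outside the agent's mandate entirely


@dataclass
class ProposedAction:
    kind: str          # hypothetical action type, e.g. "refund"
    amount: float      # monetary value of the action
    confidence: float  # agent's self-reported confidence, 0.0 to 1.0


# Hypothetical thresholds; real values come from the organization's own risk review.
AUTO_LIMIT = 100.0
HARD_LIMIT = 1_000.0
MIN_CONFIDENCE = 0.8
ALLOWED_KINDS = {"refund", "reorder"}


def route_action(action: ProposedAction) -> Route:
    """Decide whether a proposed action runs, escalates to a human, or is blocked."""
    # Unknown action types and very large amounts are never allowed.
    if action.kind not in ALLOWED_KINDS or action.amount > HARD_LIMIT:
        return Route.BLOCK
    # Mid-sized amounts or low-confidence decisions go to a human.
    if action.amount > AUTO_LIMIT or action.confidence < MIN_CONFIDENCE:
        return Route.HUMAN_REVIEW
    return Route.AUTO_APPROVE
```

With these example thresholds, a refund of 40 proposed with 0.95 confidence is approved automatically, a refund of 450 is sent to a human, and any action type outside the allowlist is blocked. Keeping the thresholds in one place makes them easy to review and audit.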

Making AI Decisions Easier to Understand

Transparency is a prerequisite for accountability. When an agentic AI system's decisions affect people, finances, or operations, the company must be able to understand and explain them.

For each decision an AI agent makes, organizations should be able to answer:

- What data the agent used to make the decision

- What goals, rules, or constraints shaped the outcome

- Whether a human can review, question, and explain the result
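One way to keep those three questions answerable after the fact is to have the agent write a structured record for every decision it makes. The sketch below is a hypothetical Python `DecisionRecord`; its field names and the JSON serialization are assumptions chosen for illustration, not a standard format.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class DecisionRecord:
    """Hypothetical record of one agent decision, capturing what a reviewer would ask."""
    agent_id: str
    action: str
    inputs_used: List[str]          # what data the agent relied on
    goal: str                       # the objective it was pursuing
    constraints_applied: List[str]  # rules or limits that shaped the outcome
    rationale: str                  # the agent's stated reason, in plain language
    reviewer: Optional[str] = None  # human who reviewed the result, if any
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize the record so it can be stored for audits."""
        return json.dumps(asdict(self), sort_keys=True)

    def needs_review(self) -> bool:
        """A decision without a named human reviewer is still pending review."""
        return self.reviewer is None
```

In this layout, `inputs_used` covers the data, `goal` and `constraints_applied` cover what shaped the outcome, and `reviewer` shows whether a human has signed off.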

Explainability makes it easier to:

- Review AI decisions internally

- Support audits and compliance reviews

- Communicate with regulators and external bodies

- Build trust in autonomous AI systems

With a better understanding of AI decisions, companies can manage risk more effectively and stay accountable as AI systems become more autonomous.

Developing these explainability and oversight skills is a key focus of learning programs such as the Agentic AI Professional Certification, which emphasize practical decision review and accountability in real-world AI deployments.

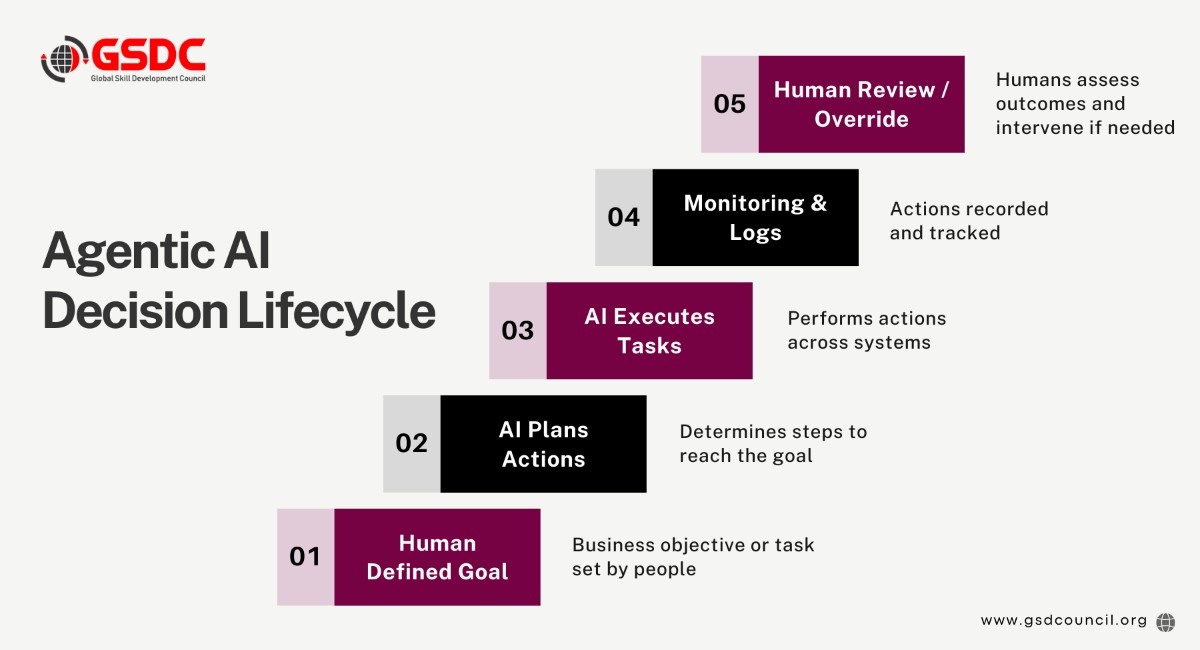

Controlling What AI Agents Are Allowed to Do

Agentic AI systems need strict boundaries if they are to operate without direct human intervention. Although AI may be able to make decisions and take actions autonomously, the organizations deploying it must always remain in control.

Good practice includes:

- Clearly defining which actions AI agents may initiate and carry out on their own

- Restricting AI's access to sensitive systems and data

- Assigning each AI agent a single accountable human owner

- Logging all AI activity and continuously monitoring AI behavior

These measures keep organizations accountable and ensure they always have the final say over how agentic AI behaves, even as AI systems become more autonomous.
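The practices above can be sketched as a thin wrapper that every agent action passes through. In this hypothetical Python example, each agent has one named owner and explicit allowlists of actions and systems, and every attempt, allowed or denied, is appended to an audit trail before anything runs. The class names, fields, and the in-memory list used as the audit trail are assumptions for illustration; a production system would write to durable, tamper-evident storage.

```python
import logging
from dataclasses import dataclass, field
from typing import Callable, Dict, FrozenSet, List

audit_logger = logging.getLogger("agent.audit")  # hypothetical logger name


@dataclass(frozen=True)
class AgentPolicy:
    agent_id: str
    owner: str                       # the single accountable human owner
    allowed_actions: FrozenSet[str]  # explicit allowlist: anything else is denied
    allowed_systems: FrozenSet[str]  # systems and data stores the agent may touch


@dataclass
class GuardedAgent:
    policy: AgentPolicy
    # In-memory audit trail for this sketch only.
    audit_trail: List[Dict[str, str]] = field(default_factory=list)

    def perform(self, action: str, system: str, run: Callable[[], str]) -> str:
        """Call `run` only if the action and target system are within the agent's scope."""
        permitted = (
            action in self.policy.allowed_actions
            and system in self.policy.allowed_systems
        )
        entry = {
            "agent": self.policy.agent_id,
            "owner": self.policy.owner,
            "action": action,
            "system": system,
            "outcome": "allowed" if permitted else "denied",
        }
        # Record every attempt, including denied ones, before anything executes.
        self.audit_trail.append(entry)
        audit_logger.info("agent action: %s", entry)
        if not permitted:
            raise PermissionError(
                f"{action!r} on {system!r} is outside the scope of "
                f"{self.policy.agent_id}; escalate to {self.policy.owner}"
            )
        return run()
```

Denying by default, rather than listing forbidden actions, means any new capability stays blocked until the agent's owner explicitly adds it to the allowlist.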

Working With AI Vendors and Tools

Many organizations rely on third-party agentic AI frameworks or tools instead of building systems in-house. However, using external vendors does not transfer responsibility. Accountability usually remains with the organization that deploys the AI.

Consider IBM Watson Health, whose recommendations some healthcare organizations criticized as inconsistent. Although IBM controlled the system, the healthcare organizations and professionals who used it remained responsible for how they used it and for the decisions they made based on its output.

To manage vendor-related risks, organizations should:

- Understand what AI systems can and cannot do

- Review contracts carefully, paying attention to what each party does and who is responsible

- Monitor third-party agentic AI behavior in real use

- Ensure that systems are consistent with in-house security, data, and compliance policies

- Avoid assuming vendors will take responsibility for mistakes

This highlights the importance of effective vendor management, and the fact that organizations remain accountable for the autonomous AI systems they choose to use.

Skills Needed to Manage Agentic AI Responsibly

As agentic AI becomes more widespread, companies will need professionals who know how to use it responsibly. Key skills include:

- Understanding how agentic AI functions

- Setting parameters and controls, as well as maintaining human supervision over systems

- Being able to articulate how AI makes decisions

- Managing compliance and risks associated with AI solutions

The Global Skill Development Council (GSDC) supports professional skill development through industry-aligned training and certification programs.

The GSDC's Agentic AI Professional Certification Program provides professionals with the knowledge and experience needed to govern AI agents effectively and promote the responsible use of autonomous agents within their organizations.

Final Thoughts: Trust Comes From Accountability

Agentic AI can create real value at scale, but only when accountability is clearly defined.

As AI systems become increasingly autonomous, organizations are responsible for ensuring that the decisions these systems make and the actions they take are properly governed. Trust in autonomous AI systems is built through clear ownership, established controls, human oversight, and trained professionals.

When organizations are accountable, they can react more quickly to problems, comply with regulatory standards, and keep the trust of customers and stakeholders.

As agentic AI develops further, accountability is no longer optional: it is the foundation for long-term trust, risk reduction, and value creation.
