AI Guardrails: The Foundation of Safe and Responsible AI in Business

Written by Matthew Hale

- What Are AI Guardrails?

- Why Guardrails AI Is Critical in Business Environments

- Types of AI Guardrails Used in Enterprises

- The Core Layers of Guardrails in AI

- Practical Responsible AI Tools Used as Guardrails

- Governing Agentic AI with Guardrails AI and Responsible AI Principles

- Building Capability for Responsible AI Governance

- Conclusion: Guardrails Are the Backbone of Responsible AI

Autonomous systems are no longer a vision of the future but are already changing the way business organizations function. Today, intelligent agents are capable of autonomous reasoning, decision-making, and execution. In fact, 78% of all organizations worldwide are already utilizing AI in at least one business function, and 23% are scaling autonomous AI systems, signaling a rapid shift toward autonomous decision-making in business environments.

Yet as autonomy grows, trust becomes the biggest challenge. This is where AI guardrails provide a crucial foundation for any successful AI approach.

In modern business environments, responsible AI is not achieved by chance. It is created by embedding AI guardrails into every layer of the system so that autonomous decisions remain safe, compliant, and aligned with business values.

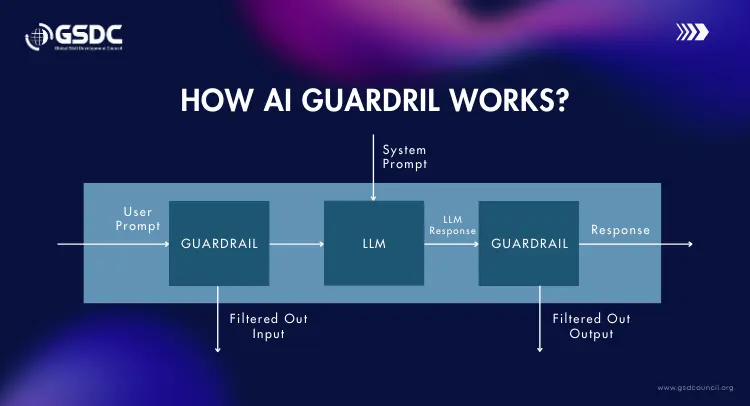

What Are AI Guardrails?

As autonomous systems advance, organizations increasingly ask what AI guardrails are and why they matter.

Simply defined, AI guardrails are the safety controls that keep artificial intelligence within defined business, ethical, and regulatory boundaries.

Guardrails in AI are designed to:

- Prevent harmful, biased, or misleading outputs

- Enforce ethical and regulatory standards

- Protect sensitive enterprise data

- Maintain consistent business behavior across systems

These protections make AI guardrails a core requirement for effective responsible AI governance.

Why Guardrails AI Is Critical in Business Environments

AI has become a primary driver of business automation. It now performs tasks, adjusts settings in enterprise platforms, and, most importantly, directly influences business outcomes across departments such as finance, HR, operations, and customer service.

However, more than 60% of organizations state that the absence of AI safety controls like AI guardrails is the main reason for their reluctance to extend the use of autonomous systems to the whole enterprise. Without strong AI guardrails, these systems introduce significant business risk.

- Unintentional exposure of confidential information: A logic error can lead an autonomous agent to expose sensitive customer, employee, or financial data that it was supposed to protect, causing privacy breaches and a loss of trust.

- Regulatory violations: When AI outputs ignore or violate compliance rules, the organization faces legal penalties and operational disruption.

- Reputational damage: Harmful or biased content spreads quickly across digitally connected channels and can rapidly erode brand credibility.

- Poor customer trust: Users' trust in AI-powered technologies is fragile and is easily lost when AI behaves inconsistently or, worse, unsafely.

These risks are why responsible AI governance has become a board-level priority. The Global Skill Development Council (GSDC) emphasizes building guardrail-driven AI capabilities to ensure enterprises scale autonomy safely while protecting compliance, ethics, and customer trust.

Types of AI Guardrails Used in Enterprises

As enterprises shift from automation to autonomy, the cost of failure is no longer purely technical; it is legal, financial, and reputational. This explains the strategic significance of AI guardrails as a mandatory requirement for every organization deploying agentic systems.

Enterprises put several forms of AI guardrails in place to keep autonomous systems safe, ethical, and within responsible AI governance frameworks.

- Preventive Guardrails: These guardrails restrict what a system can do by specifying which systems may access particular information or perform particular functions. This helps prevent penalties and breaches.

- Detective Guardrails: These measures constantly oversee the behavior of systems so that in case of hallucinations, bias, policy violations, or abnormal activity, they can quickly identify such issues and thus prevent silent failures from spreading across the enterprise.

- Corrective Guardrails: Acting immediately, these measures block unsafe actions, modify outputs, or escalate decisions, preventing a small mistake from turning into a public scandal.

- Adaptive Guardrails: These guardrails continuously adapt to changes in the regulatory environment, business models, or trends, so that AI guardrails remain effective not just during retraining but also during expansion into a new market.

- Governance Guardrails: These measures put responsible AI governance into practice through accountability structures, audit trails, documentation standards, and review cycles that can withstand a government review.

Organizations that fail to institutionalize these types of guardrails in AI are not just risking system errors; they are risking regulatory penalties, loss of trust, and long-term competitiveness.
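As a rough illustration of how the preventive, detective, and corrective types described above might fit together, the following Python sketch checks each action an agent requests against a policy. The role names, action names, and the `ActionGuardrail` class are hypothetical and chosen only for this example; a real deployment would load its policy from a governed source.

```python
from dataclasses import dataclass, field

# Hypothetical policy: which actions each agent role may perform.
ALLOWED_ACTIONS = {
    "support_agent": {"read_ticket", "draft_reply"},
    "finance_agent": {"read_invoice", "draft_payment"},
}

# Hypothetical set of permitted actions that still need human sign-off.
ESCALATE_ACTIONS = {"draft_payment"}


@dataclass
class GuardrailResult:
    decision: str  # "allow", "block", or "escalate"
    reason: str


@dataclass
class ActionGuardrail:
    # Detective record of every blocked (role, action) pair.
    violations: list = field(default_factory=list)

    def check(self, role: str, action: str) -> GuardrailResult:
        allowed = ALLOWED_ACTIONS.get(role, set())
        if action not in allowed:
            # Preventive: deny anything outside the allow-list.
            # Detective: keep a record so the attempt is not silent.
            self.violations.append((role, action))
            return GuardrailResult("block", f"{action} is not permitted for {role}")
        if action in ESCALATE_ACTIONS:
            # Corrective: route a sensitive action to a human instead of running it.
            return GuardrailResult("escalate", f"{action} requires human approval")
        return GuardrailResult("allow", "within policy")


guardrail = ActionGuardrail()
print(guardrail.check("support_agent", "draft_payment").decision)
print(guardrail.check("finance_agent", "draft_payment").decision)
```

Unknown roles receive an empty allow-list, so the sketch fails closed: anything not explicitly permitted is blocked and recorded.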

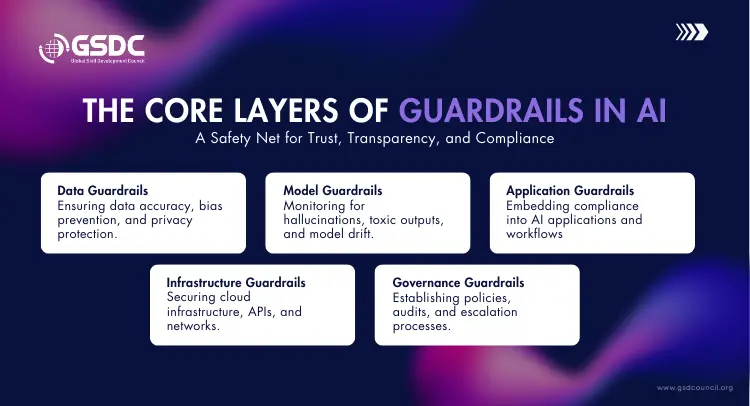

The Core Layers of Guardrails in AI

Effective AI guardrails cover the whole enterprise ecosystem, providing a framework that acts as a "safety net" and enables autonomous systems and functionalities to be deployed at scale without compromising trust, transparency, or compliance.

- Data Guardrails: Data guardrails ensure that training and operational data is accurate, free from bias, and compliant with privacy laws. They prevent biased data or predictive models from being used in ways that would cause harm or perpetuate inequalities.

- Model Guardrails: Constantly monitor model behavior for hallucinations, toxic language, or other unethical decision patterns. They keep system behavior predictable even when models are retrained or adapted.

- Application Guardrails: Embed the compliance mechanisms inside business tools such as chatbots, copilots, and decision support systems so that every AI-driven interaction aligns with the responsible AI standards.

- Infrastructure Guardrails: Secure cloud infrastructure, APIs, data flows, and networks supporting autonomous agents against any kind of misuse or hacking.

- Governance Guardrails: Establish ownership patterns, escalation procedures, documentation mandates, and audit trails to translate the principles of responsible AI governance into actionable enterprise behavior.

Together, these layers allow organizations to implement AI guardrails that scale with innovation and ensure that autonomy never results in loss of control.
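To make the data layer more concrete, here is a minimal Python sketch of one possible data guardrail: redacting obvious personal data before text is passed to a model. The `redact` function and both patterns are assumptions made for illustration; the patterns are deliberately simple and would miss many real-world formats.

```python
import re

# Illustrative patterns only; production systems need broader,
# locale-aware detection than these two regular expressions.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
CARD = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")  # 13 to 16 digits, optional separators


def redact(text: str) -> tuple[str, int]:
    """Replace matches with placeholders and return (clean_text, redaction_count)."""
    text, n_email = EMAIL.subn("[EMAIL]", text)
    text, n_card = CARD.subn("[CARD]", text)
    return text, n_email + n_card


clean, count = redact("Contact jane.doe@example.com, card 4111 1111 1111 1111 on file")
print(clean)
print(count)
```

Returning the redaction count alongside the cleaned text lets a monitoring layer track how often sensitive data reaches the guardrail.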

Practical Responsible AI Tools Used as Guardrails

Organizations adopt responsible AI tools to put responsible AI governance into practice and to integrate AI safety practices into business operations. In doing so, they help ensure that autonomous systems are safe, compliant, and aligned with business goals.

- Automatic Content Filtering: Blocks harmful, deceptive, or non-compliant content from reaching external customers and internal users, protecting the business's reputation.

- Bias Detection and Remediation: Identifies and corrects biased decision-making across business domains such as recruitment, finance, and customer service, protecting the company's integrity by preventing unethical outcomes.

- Real-time Behavioral Observation: Monitors AI systems for signs of hallucination or unusual or unsafe behavior as it happens so that issues can be addressed promptly.

- Enforcement of policies across workflows: Applies policies, ethical standards, and organizational best practices consistently across all AI workflows so that the guardrails are effective in practice.

- Human-in-the-loop review: Escalates high-risk or sensitive decisions to subject matter experts, keeping accountability central to the use of AI.

These methods help ensure that AI systems act responsibly even without direct human control, that is, without human micromanagement. This is a central theme of the framework behind the Agentic AI Foundation certificate, which prepares professionals to work effectively with autonomous systems.
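Content filtering and human-in-the-loop review can be combined in a single routing step. The sketch below is one possible arrangement, not a reference implementation: the deny-list, the threshold value, and the `route_output` function are hypothetical, and the risk score is assumed to come from some upstream classifier that is not shown.

```python
from typing import Callable

BLOCKED_TERMS = {"guaranteed returns", "confidential"}  # hypothetical deny-list
REVIEW_THRESHOLD = 0.7  # hypothetical score at or above which a human must review


def route_output(text: str, risk_score: float, ask_human: Callable[[str], bool]) -> str:
    """Return 'blocked', 'approved', 'rejected', or 'released' for one AI output."""
    lowered = text.lower()
    # Content filter: never release text containing a blocked term.
    if any(term in lowered for term in BLOCKED_TERMS):
        return "blocked"
    # Human-in-the-loop: high-risk outputs wait for a reviewer's decision.
    if risk_score >= REVIEW_THRESHOLD:
        return "approved" if ask_human(text) else "rejected"
    # Low-risk outputs are released automatically.
    return "released"


# Stand-in reviewer that approves everything, for demonstration only.
print(route_output("Your refund was processed", 0.2, lambda text: True))
print(route_output("We will terminate the contract", 0.9, lambda text: True))
```

Passing the reviewer in as a callable keeps the routing logic independent of how the review is actually collected, whether through a ticket queue, a chat prompt, or an approval dashboard.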


Governing Agentic AI with Guardrails AI and Responsible AI Principles

Agentic systems can now learn, plan, and act independently, but doing so safely relies on responsible AI principles combined with embedded AI guardrails.

- Self-directed decisions are in line with business ethics: Decisions are made under defined controls, with actions aligned with business values and the principle of fairness.

- Risky behaviors are prevented or escalated: High-risk or non-compliant actions are intercepted before they affect outcomes, which helps enforce guardrails within AI systems.

- The behavior of the AI system can be explained: Monitoring mechanisms support the explainability of AI, a key pillar of good AI governance.

- Compliance is maintained across geographies: Monitoring and documentation mechanisms provide the records needed to demonstrate compliance in each region, a mainstay of responsible AI governance.

This mindset defines the Agentic AI Foundation as a framework for building autonomous systems that are powerful, scalable, and safe by design through responsible AI principles.
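The monitoring and documentation mechanisms mentioned above usually come down to recording each agent decision in a form that can be reviewed later. A minimal Python sketch, assuming a JSON-lines audit log and a hypothetical `audit_record` helper with illustrative field names:

```python
import json
from datetime import datetime, timezone


def audit_record(agent: str, action: str, decision: str, reason: str) -> str:
    """Serialize one guardrail decision as a JSON line for an append-only log."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),  # UTC for cross-region logs
        "agent": agent,
        "action": action,
        "decision": decision,
        "reason": reason,  # the explanation a reviewer or auditor will read
    }
    return json.dumps(entry, sort_keys=True)


print(audit_record("finance_agent", "draft_payment", "escalate", "amount above limit"))
```

Storing the reason next to the decision is what makes the record useful for explainability: a reviewer can see not only what the agent was stopped from doing but why.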

Building Capability for Responsible AI Governance

As machines become more autonomous, professionals must understand and control governance, not only the technical tools. Successfully scaling intelligent systems increasingly depends on understanding how AI guardrails are created, operated, and managed within the enterprise.

The Global Skill Development Council (GSDC) provides the Agentic AI Foundation certificate, which encompasses the following:

- Guardrail-driven AI design

- Responsible AI governance frameworks

- Ethical risk management

- Safe deployment of autonomous AI

This prepares leaders to create systems that are not only intelligent but also accountable, compliant, and consistent with the principles of responsible AI.

Conclusion: Guardrails Are the Backbone of Responsible AI

Innovation without limits leads to risk. AI guardrails should not be considered a supplement to existing systems but a core element of responsible AI, one that helps ensure autonomous systems are used in a safe, ethical, and business-driven manner.

Integrating AI guardrails at the data, model, application, infrastructure, and governance levels will help organizations move from proof-of-concept automation to enterprise automation.

In the agentic era, the organizations that preserve their position will be those that view AI guardrails as a strategic discipline rather than a compliance task.
