How Enterprises Can Adopt Agentic AI Safely, Gradually, and Strategically

Written by Amar Saurabh

- The real enterprise problem is not AI capability. It’s AI control systems.

- What changed: the shift from chat as advice to chat as execution

- Why enterprises pause: the trust question

- Agentic AI is a spectrum: Assist -> Recommend -> Act

- Where enterprise ROI starts: pick the right first use case

- Safe-by-design: success depends on guardrails, not demos

- The 6 non-negotiable guardrails for enterprise agentic AI

- A gradual adoption model: how to move from zero autonomy to scaled autonomy

- Measuring real success: adoption, trust, outcomes

- Agentic AI is not a tool rollout. It is an operating model rollout

- A practical way to start next week

- How can Agentic AI help you here?

Agentic AI is the shift enterprises have been waiting for, and it is one they should approach with the utmost care. As organizations accelerate agentic AI adoption, they must ensure it is supported by a strong enterprise AI strategy and a practical AI adoption framework.

We have used automation for decades. We have used chatbots for years. We have used recommendation systems for even longer. But generative agents and agentic AI change the ‘shape’ of work because they do something those systems typically don’t: they execute. They plan steps, take actions inside real business tools, and iterate until an outcome is achieved.

That is the upside. It is also where risk enters.

A useful way to frame this is simple: when AI advises, the worst case is bad advice. When AI executes, the worst case is irreversible outcomes at scale: customer data changed incorrectly, payments triggered, policies violated, and mistakes multiplied across thousands of records in minutes.

So the enterprise question is no longer, “Is the model smart enough?”

It is: Can we trust AI to act safely, within policy, and know when not to act?

This blog lays out a practical approach for answering that question through a staged agentic AI implementation model, the control systems enterprises actually need, and the guardrails that make autonomy sustainable.

The real enterprise problem is not AI capability. It’s AI control systems.

Here is the uncomfortable truth: most enterprises already have access to highly capable models. They have talent. They have a budget. They have vendors. They have pilots. They are also exploring AI business use cases and evaluating where generative agents can create the most operational value.

What they do not have, at least not in a mature, operational form, is enterprise AI governance and AI control systems. Agentic AI fails most often not because models are weak, but because actions are uncontrolled. This is where responsible AI implementation becomes critical, especially as organizations expand into more advanced enterprise AI use cases.

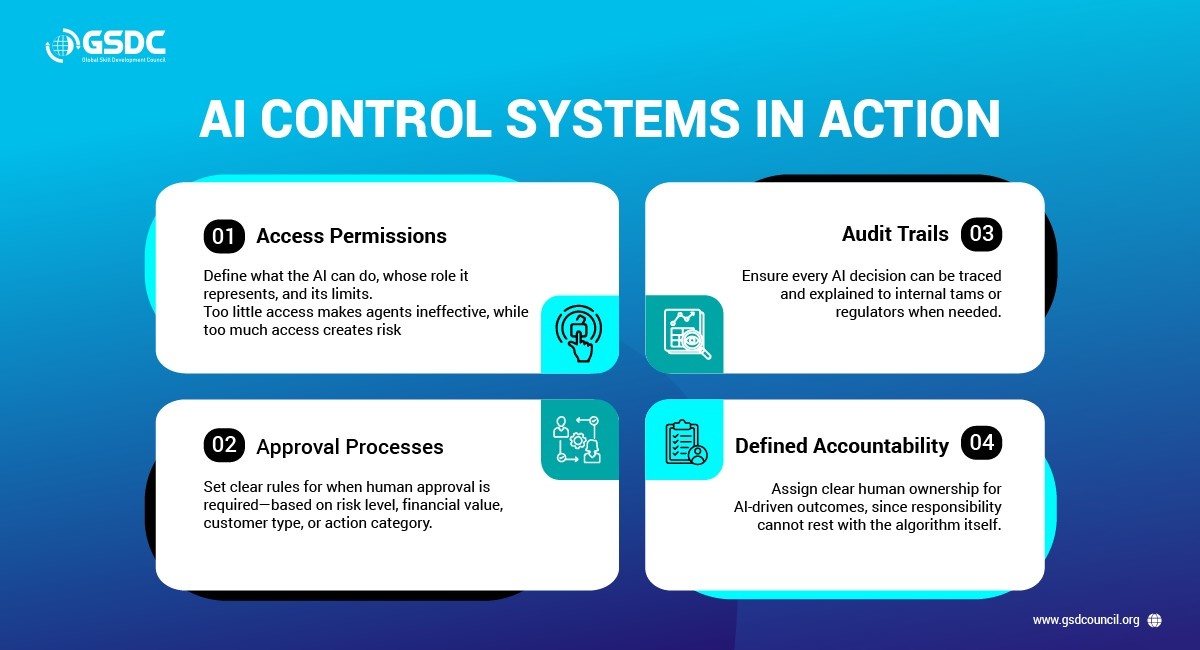

Control systems show up in four specific ways:

- Permissioning: Who can the AI act on behalf of? What role is it wearing when it uses tools? What are its boundaries? Many companies either give agents no permissions (making them useless) or broad permissions (making them dangerous).

- Approval flows: When does a human need to sign off? Is it based on risk, dollar amount, customer segment, or action type? “Trust and hope” is not an approval flow.

- Auditability: Can you reconstruct why the AI did what it did? Can you show a decision trail to internal reviewers or regulators? If not, you are building a compliance time bomb.

- Clear accountability: When something goes wrong, who owns the outcome? You cannot hold an algorithm accountable. Enterprises must define human accountability for AI-driven actions.

If you want one sentence to anchor the entire strategy, it is this:

Agentic AI fails when actions are uncontrolled, not when models are weak.

What changed: the shift from chat as advice to chat as execution

Why is 'agentic' different this time?

Because the endpoint moved.

In the old world, chat ended in advice. You asked a system what to do, and it told you. Then your employee opened your CRM, updated the record, drafted the email, created the ticket, triggered the workflow, and followed through.

In the new world, chat ends in execution.

An agentic system can:

- create tickets

- update customer records

- send messages

- trigger workflows

- and in some cases, move money

The difference is not subtle. A chatbot might say, 'You should file a warranty claim; here’s the link.' An agentic system can validate eligibility, create the claim, schedule the technician, and notify stakeholders without anyone performing those steps manually.

That is why productivity can jump dramatically. That is also why the risk profile changes dramatically.

Why enterprises pause: the trust question

Enterprises are not consumer tech companies. They optimize for different outcomes.

When something breaks in a consumer product, you can roll back, apologize, and iterate. Enterprises do not get that luxury. They operate inside constraints where mistakes can trigger regulatory issues, reputational damage, customer churn, or operational disruption. This is especially important as organizations expand into more complex enterprise AI use cases and begin integrating generative agents into critical workflows.

That is why many enterprise AI initiatives stall, not because leaders are uninterested, but because they are asking the right question:

Can we trust AI to act safely within policy and know when not to act?

Trust is not a belief. It is an earned result of systems, evidence, and operational discipline. That is why strong enterprise AI governance and responsible AI implementation are essential for sustainable scale. It also leads to the most important adoption principle: treat agentic AI as a spectrum, not a switch, and support it with a practical AI adoption framework.

Agentic AI is a spectrum: Assist -> Recommend -> Act

Enterprises should not think of autonomy as a one-step leap. It is a maturity journey. There are three stages, and you should move through them sequentially.

Stage 1: Assist (low risk, high usability)

AI supports human work but does not execute actions autonomously. It drafts emails, retrieves information, suggests responses, and helps fill in forms, while humans remain the decision-makers. This stage is about building adoption and familiarity. The risk is low because the human is still 'holding the keyboard.'

Stage 2: Recommend (medium risk, most critical)

AI proposes actions but requires human approval before execution.

It shows what it wants to do and why:

- 'I want to update these fields on this customer record.'

- 'I want to send this message to this customer.'

- 'I want to create this ticket with this priority.'

Humans review, edit if needed, reject if needed, and approve.

This stage is where enterprises:

- Learn what the agent gets right

- See where humans intervene

- Discover what guardrails are required in practice

Stage 3: Act (high value, high risk)

AI executes tasks autonomously within defined boundaries. This is where you get transformational efficiency: 24/7 execution, instant resolution, and high throughput. It is also where mistakes become expensive if the system is not designed to contain them. Act is not a starting point. It is an earned capability.

Most enterprise failures happen when teams skip Recommend. If you go straight from Assist to Act, the first meaningful failure breaks confidence and triggers a shutdown. Recommend is the supervised-driving phase. The AI proposes each move while a human keeps a hand on the wheel, and this is where you build real evidence for autonomy.
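To make the three stages concrete, here is a minimal Python sketch of how an agent runtime might route a proposed action depending on the current stage. The names `Stage`, `ProposedAction`, `handle`, and the `approve`/`execute` callbacks are hypothetical and are not part of any particular agent framework. In practice, the approval callback would be a review queue rather than a synchronous function call.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Stage(Enum):
    ASSIST = 1     # AI drafts; a human performs the action
    RECOMMEND = 2  # AI proposes; a human must approve before execution
    ACT = 3        # AI executes within defined boundaries


@dataclass
class ProposedAction:
    description: str  # what the agent wants to do
    rationale: str    # why it wants to do it


def handle(action: ProposedAction, stage: Stage,
           approve: Callable[[ProposedAction], bool],
           execute: Callable[[ProposedAction], None]) -> str:
    """Route a proposed action according to the current autonomy stage."""
    if stage is Stage.ASSIST:
        # The agent never executes; a human reads the draft and acts manually.
        return "drafted"
    if stage is Stage.RECOMMEND:
        # Execution waits for an explicit human decision.
        if approve(action):
            execute(action)
            return "executed_after_approval"
        return "rejected"
    # Stage.ACT: execute directly; the other guardrails still apply upstream.
    execute(action)
    return "executed"
```

The stage is a single parameter, so moving a workflow from Recommend to Act becomes a deliberate configuration change rather than a code rewrite. That keeps the decision visible and easy to reverse.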

Where enterprise ROI starts: pick the right first use case

Agentic AI can touch many functions: support, sales ops, HR, finance, legal, IT, and supply chain. The temptation is to start with whatever looks impressive in a demo.

Don’t.

The best early use cases share three traits:

- High frequency: value compounds when tasks happen repeatedly.

- Clear success criteria: you need measurable definitions of 'good,' such as 'Ticket assigned to the right team within 2 minutes' or 'Record updated with accurate fields within 3 hours.'

- Low downside risk: if it goes wrong, it is fixable, not catastrophic (reassign a ticket, edit a field, send a follow-up).

A strong starting point that naturally fits all three is support work: ticket triage, response drafting, and knowledge retrieval. These tasks are measurable, repetitive, and safe when paired with approvals.

This is your beachhead. Prove value there, then expand.

A second filter: avoid two predictable mistakes

When selecting use cases, avoid:

- Random demos: unprioritized experiments that aren’t aligned to business value

- Waiting for perfection: the mythical 'zero risk, maximum ROI, guaranteed success' use case.

Instead, run every candidate through four filters:

- Impact: Does it move the needle?

- Risk: How bad is failure, and can you recover?

- Feasibility: Can you build it with available data and system access?

- Frequency: Does it happen often enough to matter?
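The four filters can be turned into a rough score that lets you compare candidates. This sketch assumes each filter is rated from 1 to 5 by the team. It inverts the risk rating so that safer candidates score higher, and it treats "can you recover?" as a hard gate. The ratings scale, the equal weighting, and the `Candidate`/`shortlist` names are illustrative assumptions, not a standard method.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Candidate:
    name: str
    impact: int        # 1-5: does it move the needle?
    risk: int          # 1-5: how bad is failure? (5 = worst)
    recoverable: bool  # can a mistake be undone?
    feasibility: int   # 1-5: buildable with available data and system access?
    frequency: int     # 1-5: does it happen often enough to matter?


def score(c: Candidate) -> int:
    """Sum the four filters, inverting risk so lower risk scores higher."""
    return c.impact + (6 - c.risk) + c.feasibility + c.frequency


def shortlist(candidates: List[Candidate]) -> List[str]:
    """Drop unrecoverable candidates, then rank the rest by score, highest first."""
    eligible = [c for c in candidates if c.recoverable]
    return [c.name for c in sorted(eligible, key=score, reverse=True)]
```

Treating recoverability as a gate rather than a weight means a high-impact but unrecoverable use case never reaches the top of the list, however well it scores elsewhere.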

Safe-by-design: success depends on guardrails, not demos

Enterprises often over-index on the 'engine' and under-invest in the 'brakes.'

The faster a car can go, the better the brakes need to be. The more autonomy you give an agent, the more control systems you need around it.

So don’t ask, 'What can it do?'

Ask: 'What stops it from doing the wrong thing?'

That question leads directly to the six guardrails.

The 6 non-negotiable guardrails for enterprise agentic AI

These are not 'features.' They are a system. Each one reduces risk on its own; together, they enable safe autonomy at scale.

1) Input validation

Before the AI does anything, validate what it received:

- Is the request legitimate?

- Is it within scope?

- Is it consistent with policy?

This is also your first line of defense against prompt injection, spoofing, and out-of-policy requests.
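A first pass at input validation can check the three questions above in turn. This minimal sketch assumes a hypothetical support-triage agent with a fixed set of allowed intents (`ALLOWED_INTENTS`). The keyword list in `SUSPICIOUS_MARKERS` is deliberately crude. It only shows where such a check sits, because keyword matching alone does not stop prompt-injection attacks.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple

# Hypothetical scope for a support-triage agent.
ALLOWED_INTENTS: Set[str] = {"create_ticket", "update_ticket", "lookup_order"}
# Illustrative markers only; real injection defense needs much more than this.
SUSPICIOUS_MARKERS: Tuple[str, ...] = (
    "ignore previous instructions", "you are now", "system prompt")


@dataclass
class Request:
    requester_id: str
    authenticated: bool
    intent: str
    text: str


def validate(req: Request) -> List[str]:
    """Return every reason to reject the request; an empty list means proceed."""
    problems: List[str] = []
    if not req.authenticated:
        problems.append("unauthenticated requester")   # is it legitimate?
    if req.intent not in ALLOWED_INTENTS:
        problems.append(f"out of scope: {req.intent}")  # is it within scope?
    lowered = req.text.lower()
    if any(marker in lowered for marker in SUSPICIOUS_MARKERS):
        problems.append("possible prompt injection")    # is it within policy?
    return problems
```

Returning every reason, instead of stopping at the first, gives the audit trail a complete record of why a request was refused.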

2) Action constraints

Limit what the AI is allowed to do, the same way you scope an employee's permissions.

For example, if someone requests a $50,000 refund but their purchase history is a $29.99 subscription, the system should block the action.

The principle: narrow the action space. No broad tool permissions 'just in case.'
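The refund example above can be written as a check that runs before execution. This sketch assumes a hypothetical role-to-tool allowlist (`ROLE_TOOLS`) and a purchase-history total supplied by your billing system. It returns a reason to block the action, or `None` when the action is within bounds.

```python
from typing import Dict, FrozenSet, Optional

# Hypothetical per-role allowlist: each agent role gets only the tools it needs.
ROLE_TOOLS: Dict[str, FrozenSet[str]] = {
    "support_agent": frozenset({"create_ticket", "issue_refund"}),
    "triage_agent": frozenset({"create_ticket"}),
}


def refund_violation(role: str, amount: float,
                     purchase_history_total: float) -> Optional[str]:
    """Return why a refund must be blocked, or None if it is within bounds."""
    if "issue_refund" not in ROLE_TOOLS.get(role, frozenset()):
        return f"role {role!r} may not issue refunds"
    if amount <= 0:
        return "refund amount must be positive"
    if amount > purchase_history_total:
        # In this sketch, refunding more than the customer ever paid is blocked outright.
        return (f"refund {amount:.2f} exceeds purchase history "
                f"{purchase_history_total:.2f}")
    return None
```

Unknown roles fall back to an empty tool set, so the default is "no permission" rather than "broad permission."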

3) Human-in-the-loop for high-risk actions

For high-risk actions, a human must approve. No shortcuts.

Example: an AI wants to send a pricing change notification to 10,000 customers. 'Mass communication' triggers manual review, and the human catches that marketing coordination hasn’t happened yet.
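You can route actions to a human using explicit, reviewable rules rather than the agent's own judgment. The thresholds and action types below (`MAX_AUTO_RECIPIENTS`, `MAX_AUTO_AMOUNT`, `ALWAYS_REVIEW`) are hypothetical placeholders for values that your risk and policy owners would set.

```python
from dataclasses import dataclass
from typing import Set

# Hypothetical thresholds; real values come from risk and policy owners.
MAX_AUTO_RECIPIENTS = 100
MAX_AUTO_AMOUNT = 500.00
ALWAYS_REVIEW: Set[str] = {"pricing_change", "contract_change"}


@dataclass
class Action:
    kind: str
    recipients: int = 0
    amount: float = 0.0


def needs_human_approval(action: Action) -> bool:
    """Return True when an action is risky enough to require human sign-off."""
    if action.kind in ALWAYS_REVIEW:
        return True  # risky by action type
    if action.recipients > MAX_AUTO_RECIPIENTS:
        return True  # mass communication
    if action.amount > MAX_AUTO_AMOUNT:
        return True  # dollar threshold
    return False
```

Under these rules, the 10,000-customer pricing notification above would be held for review by either the action-type rule or the recipient-count rule.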

4) Rollback mechanisms

When something goes wrong, you need to undo it quickly and confidently.

Some actions are reversible; others are not. Designing rollback is part of the product, not an afterthought. Without rollback, every mistake becomes permanent.
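One common way to make changes undoable is to record an inverse step for every change the agent makes. This sketch assumes customer records are plain dictionaries, and `ActionJournal` is a hypothetical name. It covers only reversible field edits. An irreversible action, such as a sent message, cannot be journaled this way.

```python
from typing import Any, Callable, Dict, List, Tuple


class ActionJournal:
    """Record each reversible change with its undo step so a run can be rolled back."""

    def __init__(self) -> None:
        self._undo: List[Tuple[str, Callable[[], None]]] = []

    def update_field(self, record: Dict[str, Any], field: str, value: Any) -> None:
        """Set record[field] = value and remember how to restore the prior state."""
        had_field = field in record
        old = record.get(field)

        def undo() -> None:
            if had_field:
                record[field] = old       # restore the previous value
            else:
                record.pop(field, None)   # the field did not exist before

        record[field] = value
        self._undo.append((f"update {field}", undo))

    def rollback(self) -> List[str]:
        """Undo all changes, newest first; return descriptions of what was undone."""
        undone: List[str] = []
        while self._undo:
            description, undo = self._undo.pop()
            undo()
            undone.append(description)
        return undone
```

The undo order matters. When the same field is changed twice, replaying the undo steps last-in, first-out restores the original value rather than an intermediate one.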

5) Audit trails

Log every decision, action, and override.

Why:

- debugging (reconstruct events)

- compliance (decision trails)

- improvement (measure and refine)
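An audit entry is most useful when it records not just what happened but also why and who approved it. This minimal sketch assumes JSON Lines as the log format. The field names and the `audit_entry`/`replay` helpers are illustrative. A production system would write to append-only, access-controlled storage rather than keep strings in memory.

```python
import json
import time
from typing import Any, Dict, List, Optional


def audit_entry(actor: str, event: str, detail: Dict[str, Any],
                reason: str, approved_by: Optional[str] = None) -> str:
    """Serialize one decision, action, or override as a single JSON line."""
    entry = {
        "ts": time.time(),           # when it happened (Unix seconds)
        "actor": actor,              # which agent or human acted
        "event": event,              # "decision", "action", or "override"
        "detail": detail,            # what was done, with its inputs
        "reason": reason,            # the stated rationale
        "approved_by": approved_by,  # who signed off, if anyone
    }
    return json.dumps(entry, sort_keys=True)


def replay(lines: List[str], actor: str) -> List[Dict[str, Any]]:
    """Reconstruct, in order, everything one actor did from the log lines."""
    return [e for e in map(json.loads, lines) if e["actor"] == actor]
```

Logging human overrides alongside agent actions lets the same trail serve debugging, compliance reviews, and measuring where the agent needs refinement.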

6) Monitoring and alerts

You need real-time visibility into anomalies so you can intervene early.

Without monitoring, problems compound silently and you find out only when customers complain or regulators call.
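A simple first monitor is a rate check. An agent that suddenly touches far more records than usual is exactly the "mistakes multiplied across thousands of records" scenario. This sketch assumes timestamps in seconds that arrive in non-decreasing order, and a hypothetical threshold that you would calibrate from normal traffic. Real monitoring would track several signals, not just one.

```python
from collections import deque
from typing import Deque


class RateMonitor:
    """Flag when an agent performs more actions in a sliding window than expected."""

    def __init__(self, max_actions: int, window_seconds: float) -> None:
        self.max_actions = max_actions
        self.window = window_seconds
        self._times: Deque[float] = deque()

    def record(self, timestamp: float) -> bool:
        """Record one action; return True if the current rate is anomalous."""
        self._times.append(timestamp)
        # Drop actions that have fallen out of the sliding window.
        while self._times and self._times[0] <= timestamp - self.window:
            self._times.popleft()
        return len(self._times) > self.max_actions
```

A `True` result is the point to alert someone and, ideally, pause the agent, before a burst of bad actions compounds.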

A gradual adoption model: how to move from zero autonomy to scaled autonomy

A practical path looks like this:

Phase 1: Assisted (3-6 months, sometimes longer)

AI helps; humans do.

The point is not speed. The point is habits, adoption, and early evidence.

Phase 2: Supervised autonomy (Recommend) (6-12 months)

AI proposes; humans approve.

This is typically the longest phase because it is where you learn everything:

- consistent vs inconsistent recommendations

- policy edge cases

- what guardrails need tightening

- what exceptions require escalation

Phase 3: Limited autonomy (6-12 months)

AI acts in constrained scenarios with oversight.

You define narrow scenarios where autonomy is permitted, then expand carefully based on evidence and performance.

Phase 4: Scaled autonomy (ongoing)

Most actions are autonomous. Only truly high-risk scenarios require human approval. At this stage, the team shifts from 'doing the work' to 'managing the AI that does the work.'

End-to-end, this journey can take 18-30 months (sometimes longer). The way to move faster is not to skip steps; it is to build trust faster by choosing the right use cases, proving value early, and learning transparently.

Measuring real success: adoption, trust, outcomes

Most teams talk about ROI too early and measure too narrowly. You need three categories of metrics.

1) Adoption

If people don’t use it, value cannot materialize.

Track:

- weekly active users

- repeat usage

- usage frequency

2) Trust

Trust predicts whether you will ever reach autonomy.

Track:

- recommendation acceptance rate

- override rate (rejects or significant edits)
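Both trust metrics can be calculated from the reviewer decisions recorded during the Recommend stage. This sketch assumes four hypothetical outcome labels and counts minor edits as acceptance. That classification is a judgment call each team should make explicitly.

```python
from collections import Counter
from typing import Dict, Iterable, Set

# Hypothetical labels captured when a human reviews a recommendation.
OUTCOMES: Set[str] = {"accepted", "minor_edit", "major_edit", "rejected"}


def trust_metrics(outcomes: Iterable[str]) -> Dict[str, float]:
    """Compute acceptance and override rates from reviewer decisions."""
    counts = Counter(outcomes)
    unknown = set(counts) - OUTCOMES
    if unknown:
        raise ValueError(f"unknown outcomes: {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        return {"acceptance_rate": 0.0, "override_rate": 0.0}
    accepted = counts["accepted"] + counts["minor_edit"]
    overridden = counts["major_edit"] + counts["rejected"]  # rejects or significant edits
    return {"acceptance_rate": accepted / total,
            "override_rate": overridden / total}
```

Tracking these rates weekly, per workflow, shows whether trust is rising before anyone proposes expanding autonomy.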

3) Outcomes

Outcomes are what executives care about and what scales investment.

Track:

- time saved

- cost per task

- operational throughput improvements

And one more important reminder: you don’t need perfection. Even 25% faster resolution or 30% less admin work can be enough to unlock the next phase. Compound several 20–30% improvements, and the transformation becomes meaningful over time.
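Improvements compound multiplicatively, not additively: each one applies to the work the previous ones left behind. Here is a short sketch, assuming each figure is a fractional reduction in effort on the same task. The function names are illustrative.

```python
from functools import reduce
from typing import Iterable


def remaining_fraction(reductions: Iterable[float]) -> float:
    """Return the fraction of original effort left after successive reductions.

    Each value is a fraction between 0 and 1 (0.25 means 25% less effort),
    applied to whatever effort the previous reductions left.
    """
    return reduce(lambda left, r: left * (1.0 - r), reductions, 1.0)


def total_reduction(reductions: Iterable[float]) -> float:
    """Overall reduction implied by compounding the individual reductions."""
    return 1.0 - remaining_fraction(reductions)
```

For example, three successive 25% reductions leave 0.75 × 0.75 × 0.75 ≈ 42% of the original effort. That is a 58% reduction overall, not 75%.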

Agentic AI is not a tool rollout. It is an operating model rollout

At the end of the day, agentic AI changes:

- how decisions are made (which decisions can be delegated)

- how risk is governed (what guardrails, what thresholds)

- how teams work (from execution to supervision)

- how accountability is defined (humans remain accountable)

The future is not AI replacing humans. It is AI handling high-frequency execution while humans provide oversight, judgment, and policy context, with clear boundaries and strong controls.

A practical way to start next week

If you want a simple starting point that aligns with everything above:

- Pick one support workflow (triage + drafting is usually a strong first step).

- Define success criteria you can measure.

- Start in Assist, then move into Recommend with explicit approvals.

- Implement the six guardrails as a system, not optional toggles.

- Track adoption, trust, and outcomes weekly.

- Expand autonomy only when evidence supports it.

The question is not whether enterprises will adopt agentic AI. They will. The question is whether they will adopt it safely, gradually, and strategically.

How can Agentic AI help you here?

Agentic AI helps professionals and organizations move beyond passive AI tools by enabling systems that can plan, decide, and execute tasks with greater autonomy. It can improve productivity, accelerate decision-making, streamline operations, and support more intelligent business workflows across functions.

To build the right expertise in this evolving space, the GSDC Certified Agentic AI Professional certification helps professionals understand the practical application of agentic AI, governance considerations, implementation strategies, and real-world enterprise use cases.

It is a valuable GSDC credential for those looking to stay relevant, lead AI transformation, and build future-ready skills in 2026.
