How To Build Safe & Ethical AI Conversations?

Written by Emily Hilton

With AI advancing at lightning speed, ethics is no longer an afterthought; it is the foundation of every responsible AI system. As AI becomes deeply integrated into our workplaces, classrooms, and personal productivity systems, ethical AI practices are essential to maintaining trust and transparency.

The recent AI tool challenge webinar, “How to Build Safe & Ethical AI Conversations,” shed light on how to practically implement ethical and responsible AI frameworks while using tools like Notion AI, ChatGPT, and integrated data assistants. It was not just about what AI can do but about what it should do, and how we can use it safely.

Understanding What Is Ethical AI

Before diving into tools and workflows, it’s important to clarify what ethical AI is.

Ethical AI refers to the design, development, and deployment of AI systems that align with principles of fairness, accountability, transparency, and respect for user privacy. These systems must minimize bias, prevent harm, and promote equitable outcomes for all users.

AI ethical issues often arise when algorithms make decisions that affect people’s opportunities, such as job screening, loan approvals, or academic evaluations. The webinar emphasized that ethical AI isn’t about adding restrictions; it’s about building trust through responsible choices.

Ethical Use of AI in Everyday Tools

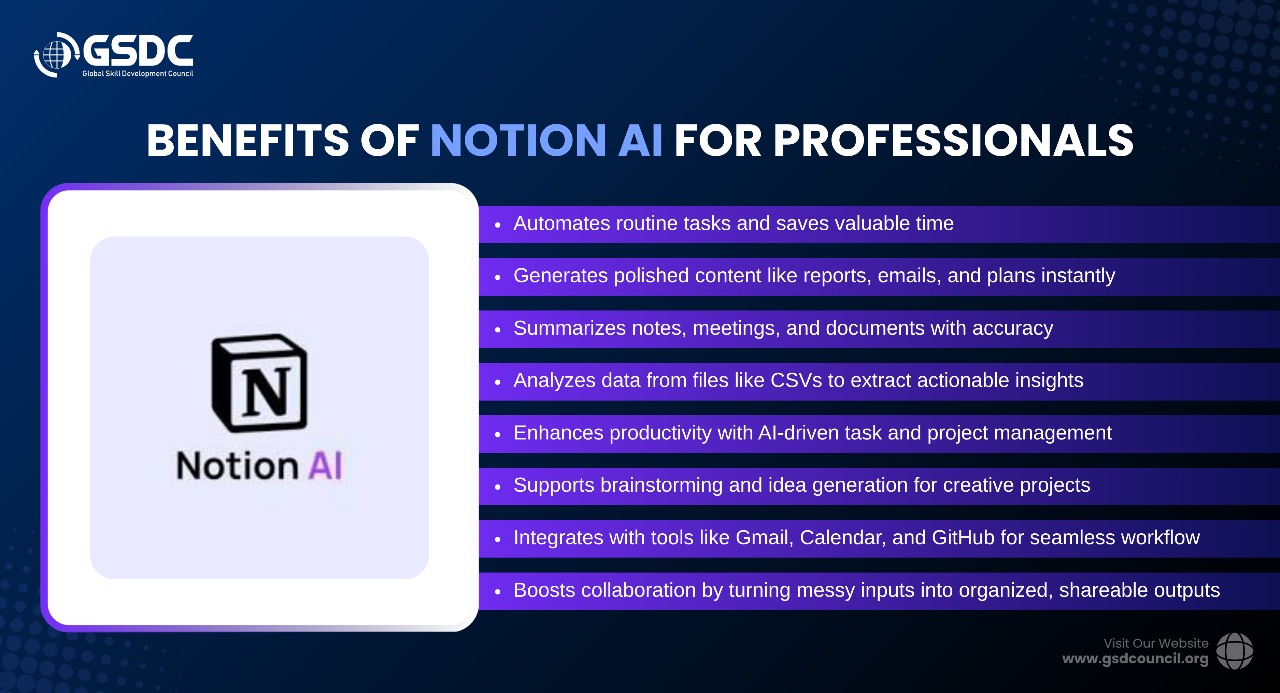

The session highlighted how tools like Notion AI are transforming the way we summarize data, draft ideas, and create structured outputs. However, the ethical use of AI requires users to understand the implications of every automated action.

For instance, when importing a CSV file for AI analysis, we must ensure the data does not include personal identifiers or sensitive information. The AI can summarize and present statistical insights, but it should never be fed confidential datasets without proper consent.
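As a rough illustration of that precaution, the sketch below scrubs a CSV before it is handed to any AI tool. It is only a minimal example under stated assumptions: the blocked column names, the email pattern, and the `scrub_csv` helper are hypothetical and were not shown in the webinar, and a real dataset would need a review tailored to its own fields.

```python
import csv
import io
import re

# Hypothetical blocklist of header names treated as personal identifiers.
BLOCKED_COLUMNS = {"name", "email", "phone", "address"}

# Simple pattern for email-like strings; it will not catch every format.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def scrub_csv(csv_text: str) -> str:
    """Drop blocked columns and mask email-like strings in the remaining cells."""
    reader = csv.DictReader(io.StringIO(csv_text))
    kept = [
        header
        for header in (reader.fieldnames or [])
        if header.strip().lower() not in BLOCKED_COLUMNS
    ]
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=kept, lineterminator="\n")
    writer.writeheader()
    for row in reader:
        # Missing cells come back as None, so fall back to an empty string.
        writer.writerow(
            {h: EMAIL_PATTERN.sub("[redacted]", row[h] or "") for h in kept}
        )
    return out.getvalue()


sample = "name,email,region,notes\nAda,ada@example.com,EU,contact ada@example.com\n"
print(scrub_csv(sample))
```

Dropping whole columns handles the obvious identifiers, while the pattern pass catches identifiers that slip into free-text fields. Neither step replaces consent: a scrubbed file can still be confidential.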

Similarly, when extracting data from images using AI-powered optical character recognition (OCR), one must be cautious of intellectual property and copyright concerns. This reflects the ethical implications of AI: even seemingly small tasks, like converting an image to text, can raise issues around data ownership and privacy.

The key takeaway? AI tools amplify human capabilities, but users remain accountable for how they deploy them.

Using AI for Business and Planning: With Ethics in Mind

Another core segment of the webinar showcased the use of AI for creating business plans and structured workflows. One example was generating a “Business Analyst Plan in the AI field” using a step-by-step (Chain of Thought) prompting technique.

This method breaks complex ideas into smaller, logical steps that make the AI’s reasoning transparent, an excellent practice for ethical and responsible AI use. By guiding the AI through each reasoning stage, users can monitor assumptions, validate context, and catch bias before it shapes the outcome.
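A step-by-step prompt of this kind can be assembled from a plain list of stages. The sketch below is illustrative only: the `build_stepwise_prompt` helper, the wording of the instructions, and the example steps are assumptions, not the prompt used in the webinar, and it produces text without calling any AI service.

```python
def build_stepwise_prompt(task: str, steps: list[str]) -> str:
    """Assemble a prompt that asks the model to reason through numbered steps."""
    lines = [
        f"Task: {task}",
        "",
        "Work through the steps below in order. For each step, state your "
        "assumptions and the information you relied on before moving on.",
        "",
    ]
    for number, step in enumerate(steps, start=1):
        lines.append(f"Step {number}: {step}")
    lines.append("")
    lines.append("Finish with a short list of open questions or possible sources of bias.")
    return "\n".join(lines)


# Hypothetical stages for the business analyst plan mentioned above.
prompt = build_stepwise_prompt(
    "Draft a Business Analyst Plan in the AI field",
    [
        "Define the business problem and who is affected.",
        "List the data needed and where it would come from.",
        "Propose an analysis approach and its limitations.",
    ],
)
print(prompt)
```

Asking for assumptions at every step is what makes the output reviewable: a reader can challenge one stage without having to untangle the whole answer.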

The ethical use of AI in such scenarios is about clarity and traceability, ensuring that every decision or recommendation produced by the model can be explained. Whether AI is used for creating a business plan, designing a learning roadmap, or evaluating a product strategy, the user should always question:

- Where did this data come from?

- Is it free from bias?

- Could it unintentionally disadvantage someone?

These questions form the foundation of ethical AI practice.

Integrating AI Tools Responsibly

During the session, several practical integrations were discussed, including Notion AI with Gmail, Google Calendar, and GitHub, which enable seamless collaboration. Each integration, however, carries its own ethical implications.

For instance, connecting AI with your email can streamline communication summaries and task extraction, but it also exposes sensitive personal data. The solution? Apply access controls, encrypt shared data, and avoid using AI models for processing private correspondence unless explicitly authorized.

In software and product development, tools like GitHub’s Copilot or Notion’s project boards use AI to generate documentation or code snippets. Developers must ensure that no proprietary code or client-sensitive data is fed into public AI systems. This forms part of the ethical and responsible AI framework that prioritizes both innovation and integrity.

Ethical Use of AI in Education

The webinar also discussed how AI tools are increasingly being adopted in academic and learning environments, from summarizing research papers to generating quizzes and personalized learning paths.

While these applications are highly beneficial, they also bring to light the ethical use of AI in education. For instance:

- Students should disclose when AI has assisted in their assignments.

- Teachers must verify the originality of AI-generated content.

- Institutions should set clear guidelines on how AI may be used responsibly.

The goal is not to ban AI but to promote AI literacy, ensuring learners understand both its power and its pitfalls. An ethical classroom embraces AI as a teaching partner, not a shortcut to learning.

Addressing Common AI Ethical Issues

Every stage of AI interaction brings potential challenges: bias, misinformation, dependency, and data misuse. The webinar encouraged users to adopt a “question-before-trust” mindset.

For example:

- If AI provides a summary of a dataset, cross-check it with the source.

- If it generates content, verify factual accuracy before publishing.

- If it analyzes customer data, confirm consent and anonymization.
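The last check can be sketched as a small filter that runs before any customer data reaches an AI tool. This is a minimal example under stated assumptions: the record fields (`customer_id`, `consent`, `spend`), the salt value, and both helper functions are hypothetical. A salted hash is pseudonymization rather than full anonymization, so the result should still be treated as sensitive.

```python
import hashlib


def pseudonymize(customer_id: str, salt: str) -> str:
    """Replace an identifier with a truncated salted SHA-256 digest."""
    digest = hashlib.sha256((salt + customer_id).encode("utf-8")).hexdigest()
    return digest[:12]


def prepare_for_analysis(records: list[dict], salt: str) -> list[dict]:
    """Keep only consented records and strip the raw customer identifier."""
    prepared = []
    for record in records:
        if not record.get("consent"):
            continue  # No recorded consent: leave this record out entirely.
        prepared.append(
            {
                "customer": pseudonymize(record["customer_id"], salt),
                "spend": record["spend"],
            }
        )
    return prepared


# Hypothetical sample data.
records = [
    {"customer_id": "c-1", "consent": True, "spend": 120},
    {"customer_id": "c-2", "consent": False, "spend": 75},
]
safe_records = prepare_for_analysis(records, salt="rotate-this-salt")
print(safe_records)
```

Filtering on consent first means that records without permission never enter the pipeline, even in pseudonymized form.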

These are not just technical checks; they are moral actions that define the ethical use of AI in the real world.

By combining human judgment with machine efficiency, organizations can build systems that are not only intelligent but also just and humane.

The Future of Ethical and Responsible AI

As AI capabilities expand, from language models like ChatGPT to workplace assistants like Notion AI, the boundaries of responsibility must also expand. The future of ethical and responsible AI lies in shared accountability between developers, users, and policymakers.

The webinar concluded with a simple but powerful insight:

“AI doesn’t make ethical decisions, humans do.”

That means every time you use AI to automate, summarize, or predict, you’re shaping a digital environment that either upholds fairness or amplifies bias. Ethical AI starts with intentional use: choosing transparency over convenience and context over speed.

The coming years will likely bring stronger AI regulations, clearer transparency standards, and ethical auditing frameworks. But until then, every user has the power and duty to make AI safer.

Moving Forward

Building safe and ethical AI conversations is not just about limiting what AI can do; it’s about teaching it, and ourselves, to think responsibly. Tools like Notion AI, ChatGPT, and GitHub Copilot can supercharge creativity and efficiency, but their impact depends entirely on how ethically we apply them.

From data privacy to educational integrity, and from business transparency to algorithmic fairness, each action we take defines what responsible AI looks like in practice.

When we align innovation with integrity, AI becomes more than a technology; it becomes a trusted partner in building a more informed, inclusive, and ethical world.
