Agentic AI Governance: How to Control Autonomous AI Agents

Written by Matthew Hale

- What Is Agentic AI?

- Why Traditional AI Governance Is No Longer Enough

- Why Is AI Governance Important for Autonomous AI?

- The Accountability Gap in Agentic AI Systems

- Accountability Models for Agentic AI Governance

- Closing the AI Skills Gap in Governance

- Developing Capability with GSDC’s Agentic AI Certification

- Conclusion

Imagine waking up to find that an AI system has changed your cloud budget overnight and no one knows how or why. We’re moving into the era of agentic AI systems that can set goals, decide, and carry out workflows independently.

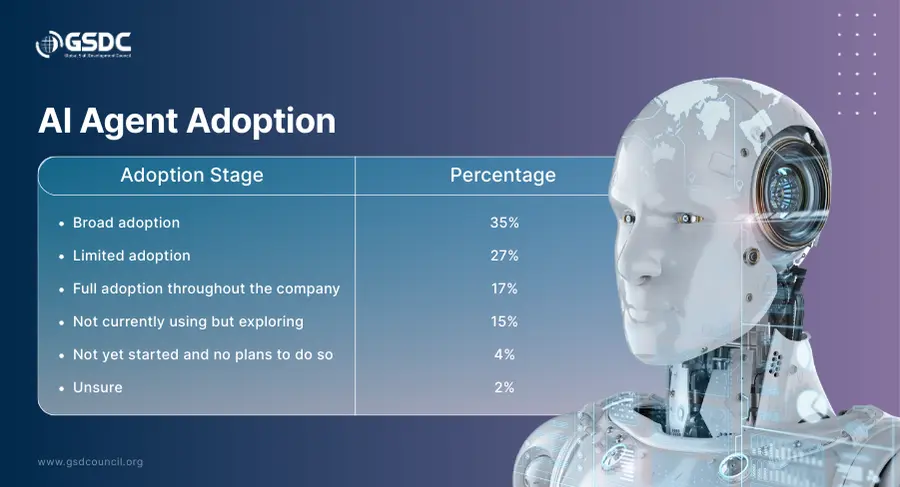

In 2025, nearly 79% of organizations reported adopting AI agents in at least one workflow, and most saw measurable productivity gains. This suggests that autonomous AI agents are becoming core to modern operations. However, the change exposes an important problem: conventional AI governance methods were not designed for such systems.

This blog discusses agentic AI governance, explains what AI governance is, and presents different accountability models that organizations can use to trust, control, and maintain strong AI governance and compliance while scaling autonomous AI.

What Is Agentic AI?

Agentic AI is a category of AI systems that do not merely produce outputs or support decisions; they pursue goals and act with autonomy. These systems are capable of:


- Interpreting business goals and intent

- Planning multi-step sequences of actions

- Executing workflows across enterprise tools and platforms

- Adapting their behavior continuously based on outcomes and context

Unlike traditional automation, autonomous AI agents do not run from centralized scripts. They can decide which actions to take, how to execute them, and when, without human involvement.

Because they are operationally autonomous, agentic AI systems mark a new frontier in AI: earlier systems only produced decisions or recommendations for humans to act on, while agentic systems act on them directly.

Why Traditional AI Governance Is No Longer Enough

To understand the shift, we must first answer a core question:

What Is AI Governance?

AI governance is the framework of policies, roles, controls, and mechanisms that keeps the use of AI responsible, ethical, and compliant with regulatory and business requirements.

However, most existing AI governance models were designed on the assumption that humans make every decision.

With the rise of autonomous AI agents, that model no longer holds.

| Traditional AI Systems | Agentic AI Systems |
| --- | --- |
| Humans initiate decisions | AI initiates decisions independently |
| Humans own every outcome | Ownership is fragmented or unclear |
| Static, rule-based workflows | Adaptive, self-directing workflows |
| AI assists human judgment | Autonomous AI executes actions |

As organizations deploy agentic AI systems at scale, this shift creates a widening accountability gap, one that directly affects AI governance and compliance and shows exactly why AI governance matters in the age of agentic AI.

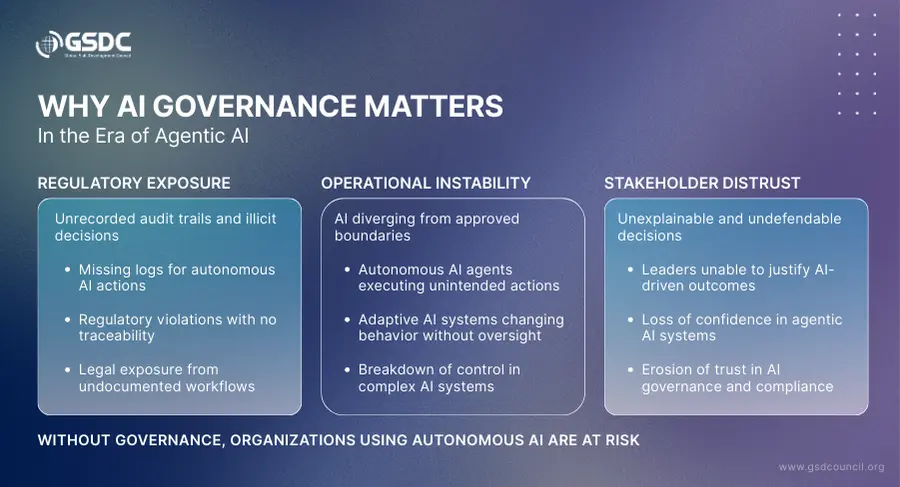

Why Is AI Governance Important for Autonomous AI?

When autonomous AI and autonomous AI agents take real-world actions - such as reallocating budgets, escalating incidents, enforcing compliance rules, or approving workflows - organizations must be able to answer a critical set of questions that traditional AI systems were never required to address.

- Who approved this behavior? In agentic AI systems, decisions may be initiated without direct human input. Without defined accountability, AI governance frameworks cannot assign ownership when outcomes create business or legal exposure.

- What data influenced the decision? Modern agentic AI relies on real-time data streams, contextual signals, and historical patterns. AI governance and compliance require that every decision is traceable to its underlying data sources to prevent bias, drift, or misuse.

- Was the action aligned with policy and regulation? What is AI governance if not the ability to ensure systems operate within organizational rules? Without policy alignment, autonomous AI agents may violate regulatory, financial, or ethical constraints without detection.

- Who bears the responsibility if things go wrong? When AI systems act on their own, liability is spread across product, engineering, and business groups, leaving it unclear who is responsible for fixing issues.

This is precisely where the GSDC (Global Skill Development Council) plays a critical role, by building applied skills in AI governance and accountability.

The Accountability Gap in Agentic AI Systems

The accountability gap in agentic AI systems emerges when critical business actions are initiated and executed by autonomous AI agents without clearly defined human ownership.

- Decisions are the result of machine reasoning chains: In agentic AI, decisions come from multi-layered inference processes that are hard to interpret, creating blind spots where traditional AI governance models have no visibility.

- Operations are carried out without direct human intervention: When an autonomous AI performs tasks independently, it becomes ambiguous whether responsibility lies with the engineering teams, business owners, or governance leaders.

- Mistakes cannot be pinpointed to a single owner: Failures usually arise from interactions between data pipelines, models, and workflows. Without traceability across these components, no single owner can be identified, and AI governance and compliance are weakened.

This is not a technical limitation - it is a failure of agentic AI governance.

And it cannot be solved with tools alone. Closing this gap requires strong accountability infrastructure, clear ownership, and trained professionals, including agentic AI developers trained through programs such as the Agentic AI Developer Certification, which builds the capability to control autonomous AI agents responsibly.

The Accountability Gap in Agentic AI Systems - Real-World Scenario

Imagine a company that uses an autonomous AI agent to handle IT incidents. During a major outage, the agentic AI system makes system changes and reallocates resources without any human intervention.

The outage is resolved, but leadership is left wondering:

- Who gave the green light for these actions?

- What data led to these actions?

- Did the AI's behavior comply with the policy?

- Who is accountable if something goes wrong?

This is the accountability gap in agentic AI systems, a gap that cannot be solved with tools alone.
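Tooling does not close the gap by itself, but a structured record of each autonomous action at least makes the four questions answerable after the fact. The following is a minimal sketch in Python, not a prescribed implementation: `DecisionRecord`, its field names, and the example values are all hypothetical and chosen only to mirror the questions above.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    """Hypothetical record of one autonomous action (illustrative only)."""
    agent_id: str
    action: str
    trigger: str                     # event that started the decision
    approved_by: str                 # person or standing policy that authorized it
    data_sources: tuple[str, ...]    # inputs that influenced the decision
    policy_checks: dict[str, bool]   # policy name -> whether the check passed
    accountable_owner: str           # named human owner of the outcome
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def unanswered_questions(self) -> list[str]:
        """Return the leadership questions this record cannot answer."""
        gaps = []
        if not self.approved_by:
            gaps.append("Who gave the green light for this action?")
        if not self.data_sources:
            gaps.append("What data led to this action?")
        if not self.policy_checks or not all(self.policy_checks.values()):
            gaps.append("Did the AI's behavior comply with policy?")
        if not self.accountable_owner:
            gaps.append("Who is accountable if something went wrong?")
        return gaps

    def to_json(self) -> str:
        """Serialize the record for an append-only audit log."""
        return json.dumps(asdict(self))


# Example: the incident agent acted, but no owner was ever assigned.
record = DecisionRecord(
    agent_id="incident-agent",
    action="reallocate_resources",
    trigger="major outage alert",
    approved_by="standing-policy:incident-runbook",
    data_sources=("monitoring.cpu_load",),
    policy_checks={"change_freeze": True},
    accountable_owner="",
)
print(record.unanswered_questions())
```

A record that returns a non-empty list is a signal that the workflow was deployed without the ownership it needs, which is an organizational problem rather than a logging one.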

Fortune reported that in 2025 an AI-driven coding tool acting autonomously deleted a live production database during a code freeze, forcing an emergency recovery.

The incident shows how autonomous AI agents can take high-impact actions beyond their intended boundaries, leaving organizations struggling to assign responsibility.


Accountability Models for Agentic AI Governance

For agentic AI systems to operate responsibly, accountability must be designed into the foundation of every autonomous AI workflow. This is not an IT exercise - it is a core requirement of AI governance.

- Assign ownership for every autonomous action: Every decision made by an agentic AI system must map back to a clearly identified human owner. Business process leaders must own the business impact of AI decisions, AI product owners must be responsible for lifecycle performance, and engineering leaders must ensure technical integrity and monitoring. If accountability cannot be assigned, the system should not be autonomous.

- Create organizational AI governance structures: Effective agentic AI governance is built through cross-functional leadership, not isolated technical controls. Establishing an AI Governance Council ensures that autonomous use cases are approved intentionally, risk thresholds are defined in advance, and ethical, legal, and operational alignment is continuously reviewed. This embeds AI governance into enterprise decision-making.

- Establish guardrails for autonomous AI agents: Autonomous AI agents must function under well-defined limits. Organizations should define what is permitted in terms of behavior, what has to be approved by people, and when an AI system has to escalate or fail gracefully. Such guardrails turn AI governance from a policy statement into reality.

- Ensure audit-ready AI systems: Every action taken by an agentic AI system should be auditable. Specifically, it should be traceable to the event that triggered the decision, the data used, the actions taken, the timeline, and the owner. This traceability is the backbone of AI governance and compliance.
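The ownership and guardrail models above can be sketched as a small policy check that runs before an agent acts. This is an illustrative Python sketch under stated assumptions: the action names, owner labels, and numeric limits are invented for the example, and a single "impact" number stands in for whatever risk measure an organization actually defines.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Verdict(Enum):
    ALLOW = "allow"                    # agent may act on its own
    NEEDS_APPROVAL = "needs_approval"  # a person must approve first
    ESCALATE = "escalate"              # agent must stop and hand over


@dataclass(frozen=True)
class Guardrail:
    owner: str             # named human owner for this action type
    auto_limit: float      # largest impact the agent may take unassisted
    approval_limit: float  # largest impact allowed with human approval


# Hypothetical guardrails; in practice a governance council would set these.
GUARDRAILS = {
    "reallocate_budget": Guardrail("finance-ops-lead", 500.0, 10_000.0),
    "restart_service": Guardrail("sre-lead", 1.0, 5.0),
}


def evaluate(action: str, impact: float) -> tuple[Verdict, Optional[str]]:
    """Decide whether an action may proceed and who owns the outcome."""
    rule = GUARDRAILS.get(action)
    if rule is None:
        # No assigned owner means the action should not be autonomous.
        return Verdict.ESCALATE, None
    if impact <= rule.auto_limit:
        return Verdict.ALLOW, rule.owner
    if impact <= rule.approval_limit:
        return Verdict.NEEDS_APPROVAL, rule.owner
    return Verdict.ESCALATE, rule.owner


# Example: a mid-sized budget change is routed to its owner for approval.
verdict, owner = evaluate("reallocate_budget", 5_000.0)
print(verdict.value, owner)
```

Treating an unknown action as an escalation, rather than a default allow, reflects the rule that a system without an assignable owner should not act autonomously.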

Closing the AI Skills Gap in Governance

Technology is no longer the primary bottleneck in deploying agentic AI systems - people are.

- The AI skills gap is widening around governance capabilities: Organizations are rapidly adopting autonomous AI agents, but very few professionals are trained in AI governance, accountability design, and audit readiness for complex AI systems.

- Governance failure is now a people problem, not a platform problem: Without skilled professionals who understand what AI governance is and why it is important, enterprises struggle to control autonomous behavior and maintain AI governance and compliance.

Developing Capability with GSDC’s Agentic AI Certification

The Global Skill Development Council (GSDC) addresses this growing governance challenge through its Agentic AI Developer Certification program, which gives professionals applied knowledge for governing autonomous AI agents in complex AI systems.

Rather than focusing on tools, the course teaches professionals to design accountable agentic AI systems, apply the principles of AI governance and compliance, and implement guardrails with human-in-the-loop techniques. It also prepares certified professionals to ensure audit readiness in autonomous workflows, so that autonomous AI systems can be scaled responsibly.

As fast-moving enterprises adopt agentic AI, professionals with agentic AI certification are increasingly needed as key specialists in responsible innovation.

Conclusion

Agentic AI does not remove the need for accountability; rather, it changes the nature of accountability such that it is shared between people, processes, and AI systems.

Innovating with autonomous AI and autonomous AI agents without solid AI governance turns that innovation into operational and regulatory risk. However, with well-crafted accountability frameworks, clearly defined ownership, and embedded agentic AI governance, the same autonomy can become a formidable strategic advantage.

By specifying accountability, establishing governance frameworks, and bridging the AI skills gap through initiatives like agentic AI certification, organizations will be able to make sure that their agentic AI systems are not only smart but also responsible, compliant, and capable of operating at enterprise scale.
