How GenAI-Driven QA Experts Will Shape the Future of Quality Software

Written by Matthew Hale

This article analyzes the transcript of a session from our GSDC AI Tools Challenge Webinar 2025. The speaker laid out a clear roadmap: quality engineering is moving beyond manual test execution toward a practice enriched with AI.

GenAI and predictive methods will not replace QA experts, but they will transform quality work. What follows is a synthesized guide, based on the session, to what teams should embrace, how to guide testing with AI, and which results to measure.

The evolving role of QA in an AI-driven world

QA experts will move from finding defects to designing and governing AI-backed systems that detect, prioritize, and sometimes propose fixes.

The session emphasized that future QA work mixes domain knowledge with model oversight, data hygiene, and ethical guardrails.

This shift creates career growth: QA specialists become architects of intelligent test systems rather than only executors of test cases.

Key role changes discussed:

- QA will own the AI workflow for test selection, anomaly detection, and triage.

- Responsibility shifts to validating the models and data quality used by AI-driven test suites.

Why adopt AI tools for software testing now

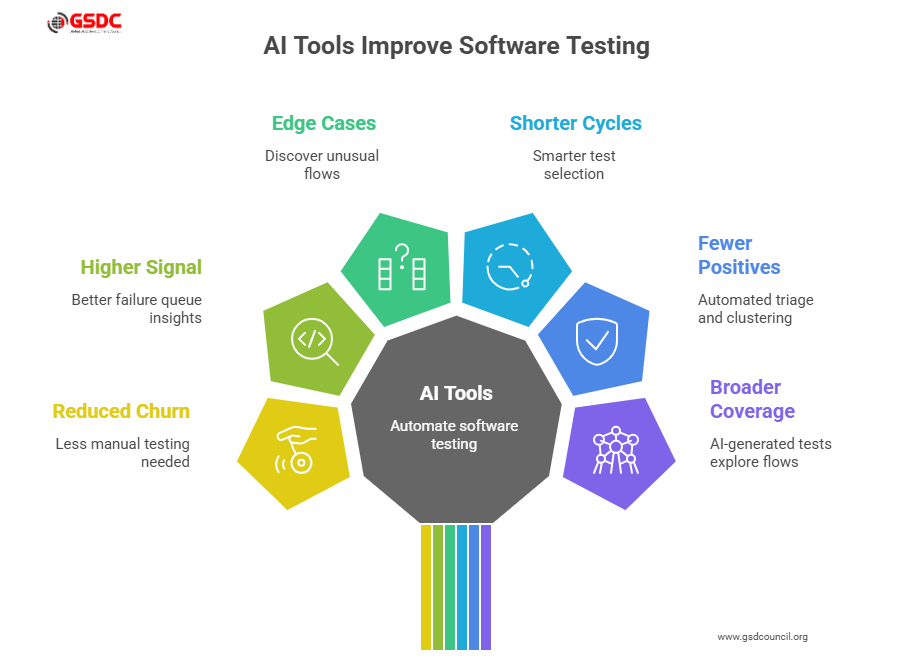

Practical examples from the webinar showed immediate wins when teams introduce AI tools for software testing: less manual churn, higher signal in failure queues, and discovery of edge-case behaviors that manual tests miss.

Measured benefits:

- Shorter regression cycles through smarter test selection.

- Fewer false positives from automated triage and clustering.

- Broader coverage via AI-generated tests that explore unusual flows.

When scoped to a clear metric (CI time, defect escape rate, or mean time to detect), AI tools for software testing produce fast, measurable returns.

What test automation with AI looks like in practice

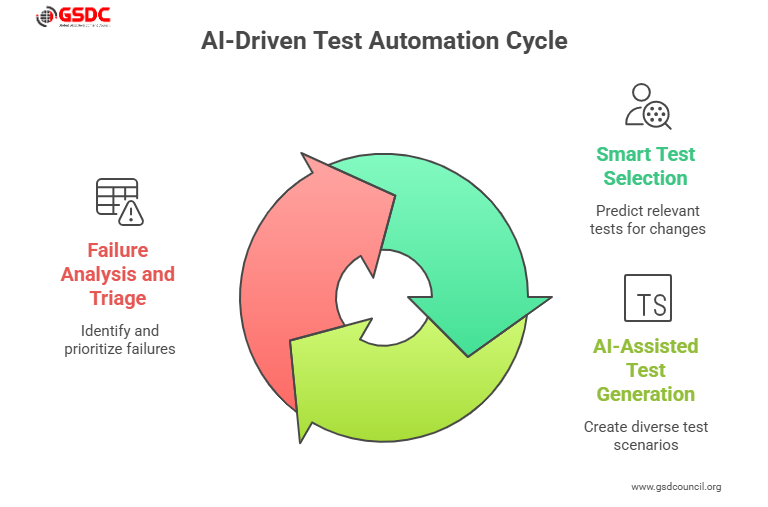

Test automation with AI is a collection of capabilities that plug into the delivery pipeline. The session grouped these into three practical patterns:

- Smart test selection: models predict which tests matter for a given change, reducing CI run time while preserving coverage.

- AI-assisted test generation: generate inputs, edge-case scenarios, and test scripts from contracts, traces, or user flows.

- Failure analysis and triage: cluster failures, suggest root causes, and prioritize remediation steps automatically.

These are concrete examples of test automation with AI enabling teams to run better tests more often.
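To make the smart test selection pattern concrete, here is a minimal sketch. It is not the speaker's method: it swaps the predictive model for a simple heuristic (run tests that touch changed files, plus tests with a high recent failure rate). The names `select_tests`, `coverage_map`, `recent_failure_rate`, and the `always_run_above` threshold are all hypothetical.

```python
from typing import Dict, List, Set


def select_tests(
    changed_files: Set[str],
    coverage_map: Dict[str, Set[str]],
    recent_failure_rate: Dict[str, float],
    always_run_above: float = 0.2,
) -> List[str]:
    """Pick tests that touch changed files, plus historically risky tests.

    coverage_map maps a test name to the source files it exercises.
    recent_failure_rate maps a test name to its failure fraction (0.0 to 1.0).
    Both inputs are assumptions about what a team has already collected.
    """
    selected = []
    for test, files in coverage_map.items():
        touches_change = bool(files & changed_files)
        is_risky = recent_failure_rate.get(test, 0.0) >= always_run_above
        if touches_change or is_risky:
            selected.append(test)
    # Run the most failure-prone tests first; break ties by name for determinism.
    return sorted(selected, key=lambda t: (-recent_failure_rate.get(t, 0.0), t))
```

A real deployment would likely replace the heuristic with a trained model, but the interface (change set in, ordered test list out) can stay the same.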

Autonomous testing tools: strengths and necessary guardrails

Autonomous testing tools can explore applications, run suites, and report anomalies without continuous oversight. The session framed them as accelerators that must be paired with governance.

Best-practice guardrails:

- Deploy autonomous testing tools in supervised mode first: flag and recommend rather than auto-apply fixes.

- Keep auditable logs of autonomous runs for post-mortem and compliance.

- Begin in non-critical services or exploratory testing before expanding to core production flows.

Used correctly, autonomous testing tools shorten feedback loops and uncover problems human testers might miss.
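The guardrails above can be sketched as a small decision function. This is an illustration under stated assumptions, not any particular tool's API: `Finding`, `decide_action`, and the `NON_CRITICAL_SERVICES` allowlist are hypothetical names, and the audit log is simply a list of JSON strings.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import List

# Hypothetical allowlist: services where unattended action is acceptable.
NON_CRITICAL_SERVICES = {"internal-docs", "staging-search"}


@dataclass
class Finding:
    service: str
    description: str
    proposed_fix: str


def decide_action(finding: Finding, supervised: bool, audit_log: List[str]) -> str:
    """Choose an action for a finding and record the decision for later audit."""
    if supervised or finding.service not in NON_CRITICAL_SERVICES:
        action = "recommend"  # a human reviews the proposed fix
    else:
        action = "auto_apply"  # only outside supervised mode, on allowlisted services
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": action,
        **asdict(finding),
    }
    audit_log.append(json.dumps(entry))
    return action
```

Every decision is logged, including recommendations, so a post-mortem can reconstruct what the tool saw and what it proposed.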

Predictive QA: what predictive analytics is and why it matters

A core session topic was predictive quality work. In QA, predictive analytics is the use of historical test, build, and production data to forecast where failures are likely to occur and which modules carry the most risk after a change.

How predictive analytics improves quality:

- Prioritizes tests and engineering attention on high-risk modules.

- Forecasts likely failure windows after releases so teams can plan mitigations.

- Allows smarter resource allocation: focus on the tests and fixes that reduce the most risk.

Predictive analytics turns QA from a reactive gatekeeper into a proactive risk manager.
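As a rough illustration of risk-based prioritization, the sketch below ranks modules by a hand-weighted score. It is a stand-in for a trained model, and everything in it is an assumption: the `risk_score` and `rank_modules` names, the 0.7/0.3 weights, and the `churn_scale` constant would all need tuning against a team's own history.

```python
from typing import Dict, List, Tuple


def risk_score(failures: int, runs: int, lines_changed: int,
               churn_scale: float = 200.0) -> float:
    """Blend historical failure rate with recent code churn into a 0..1 score."""
    failure_rate = failures / runs if runs else 0.0
    churn = min(lines_changed / churn_scale, 1.0)  # cap so huge diffs do not dominate
    return 0.7 * failure_rate + 0.3 * churn


def rank_modules(history: Dict[str, Tuple[int, int, int]]) -> List[str]:
    """Order modules from highest to lowest risk.

    history maps a module name to (failures, runs, lines_changed).
    """
    return sorted(history, key=lambda m: risk_score(*history[m]), reverse=True)
```

The ranked list can then drive which tests run first or which modules get extra review after a change.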

Detection and explanation: how does AI detection work in QA?

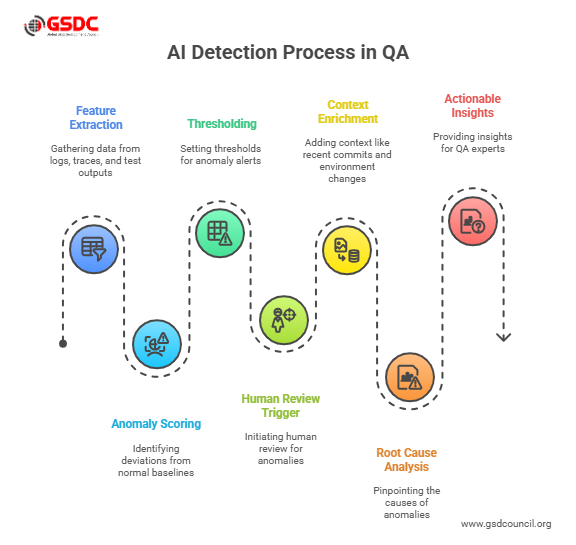

A recurring practical question was how AI detection works.

In QA, detection models learn normal baselines from logs, traces, and historical test results; they then flag deviations (latency spikes, error clusters, or abnormal trace patterns) based on statistical or learned representations.

Core building blocks of detection:

- Feature extraction from traces, metrics, and test outputs.

- Anomaly scoring and thresholding with human-review triggers.

- Context enrichment (recent commits, environment changes) to help pinpoint root causes.

Design detection systems to produce actionable insights rather than noise. Explainable signals let QA experts validate and trust the results.
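The simplest statistical version of this idea is a z-score against a baseline. The sketch below assumes a single numeric signal such as request latency; `anomaly_scores`, `flag_for_review`, and the default threshold of 3.0 are illustrative choices, not something prescribed in the session.

```python
from statistics import mean, stdev
from typing import List, Sequence


def anomaly_scores(baseline: Sequence[float], observed: Sequence[float]) -> List[float]:
    """Score each observation by its distance from the baseline mean, in standard deviations."""
    mu = mean(baseline)
    sigma = stdev(baseline)  # requires at least two baseline points
    if sigma == 0:
        # A perfectly flat baseline: any deviation at all is maximally anomalous.
        return [0.0 if x == mu else float("inf") for x in observed]
    return [abs(x - mu) / sigma for x in observed]


def flag_for_review(baseline: Sequence[float], observed: Sequence[float],
                    threshold: float = 3.0) -> List[int]:
    """Return the indices of observations that should trigger human review."""
    scores = anomaly_scores(baseline, observed)
    return [i for i, score in enumerate(scores) if score >= threshold]
```

Returning indices rather than a yes/no answer keeps the signal explainable: a reviewer can see exactly which observation crossed the threshold and by how much.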

Designing an AI workflow for quality

The session proposed a repeatable AI workflow that teams can adopt:

- Collect: centralize test runs, logs, traces, and historical failures.

- Model: build predictive and anomaly-detection models focused on test flakiness and failure risk.

- Act: integrate models into CI to select tests, create tickets, or trigger deeper diagnostics.

- Validate: QA experts review model suggestions; feedback improves models.

- Govern: keep audit trails, monitor drift, and enforce explainability.

This AI workflow keeps humans in control while letting AI operate at scale.
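One way to connect the Validate and Govern steps is to track how often reviewers accept the model's suggestions and to raise a flag when that rate drops. This is a minimal sketch under assumptions: `suggestion_precision`, `weeks_needing_review`, the weekly grouping, and the 0.8 floor are hypothetical.

```python
from typing import Dict, List


def suggestion_precision(verdicts: List[bool]) -> float:
    """Fraction of model suggestions that a QA reviewer marked as correct."""
    return sum(verdicts) / len(verdicts) if verdicts else 0.0


def weeks_needing_review(weekly_verdicts: Dict[str, List[bool]],
                         min_precision: float = 0.8) -> List[str]:
    """List the weeks whose reviewer-accepted rate fell below the floor.

    Weeks with no reviewed suggestions are skipped rather than flagged.
    """
    return [
        week
        for week, verdicts in weekly_verdicts.items()
        if verdicts and suggestion_precision(verdicts) < min_precision
    ]
```

A falling acceptance rate is only a proxy for model drift, but it is cheap to collect because the review step already produces the verdicts.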

Picking tools: which AI is the best?

There is no universal answer to which AI is the best. Tool selection should be use-case-driven: test-data generation, anomaly detection, triage, or CI integration.

Selection checklist:

- Match the tool’s strengths to the need: generation vs. detection vs. triage.

- Prefer tools with explainability, clear CI/CD hooks, and a track record of integration.

- Pilot multiple options and measure impact on coverage, CI time, and mean time to detect.

Prioritize fit and evidence over hype when deciding which AI is best for the team’s needs.

People, careers, and the impact of AI in jobs

The session emphasized opportunity: AI will change job content rather than eliminate roles. QA professionals who learn data literacy, model validation, and automation design will lead the next wave of quality engineering.

Career implications:

- New roles: quality data steward, AI validation lead, and test architect.

- Skill growth: model explainability, dataset audits, and CI integration experience.

- Cross-functional teams: QA, SRE, and data scientists collaborating on AI-driven quality.

This evolution rewards QA experts who move from test execution to system design and governance.

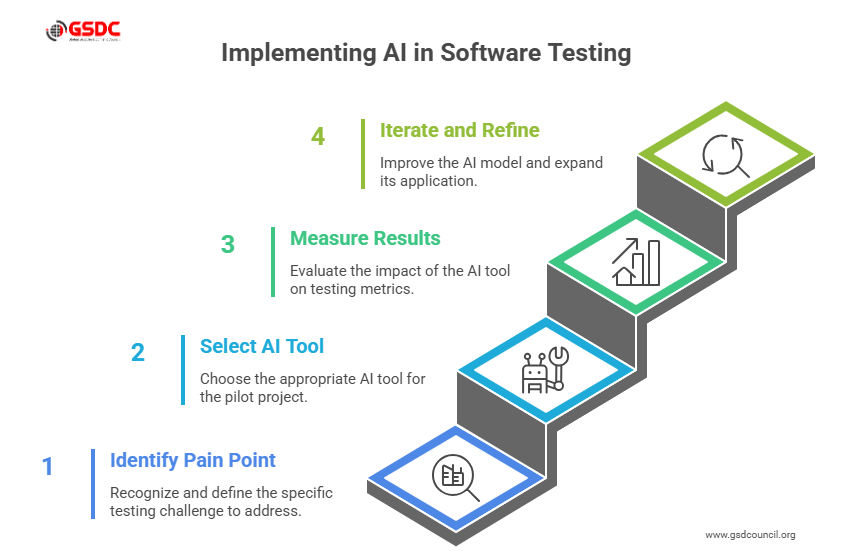

Practical pilot plan: start small, show results

Run a tightly scoped pilot to validate impact quickly and limit risk. The aim is to prove measurable gains (shorter CI cycles, fewer false positives, or faster triage) within a 2–4 week window.

Keep scope narrow, assign a single owner to manage integration and tuning, and capture baseline metrics before the first run.

- Pick a target pain point: flaky tests or long regressions.

- Select one AI tool for software testing and scope a 2–4 week pilot.

- Measure CI time saved, false positive rate, and triage time reduction.

- Iterate: refine models, broaden scope, and add governance checks.

A short pilot proves value and surfaces integration and data-quality issues early.
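The three pilot measurements can be computed from a baseline snapshot and a pilot snapshot. The sketch below is illustrative: `PilotSnapshot` and its fields are hypothetical, and a team would fill them from its own CI and ticketing data.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class PilotSnapshot:
    ci_minutes: float        # average CI run time
    flagged_failures: int    # failures raised by automation
    false_positives: int     # flagged failures that were not real defects
    triage_minutes: float    # average time to triage one failure


def pct_reduction(before: float, after: float) -> float:
    """Percentage drop from before to after (negative means it got worse)."""
    return (before - after) / before * 100 if before else 0.0


def false_positive_rate(snapshot: PilotSnapshot) -> float:
    if snapshot.flagged_failures == 0:
        return 0.0
    return snapshot.false_positives / snapshot.flagged_failures


def summarize(baseline: PilotSnapshot, pilot: PilotSnapshot) -> Dict[str, float]:
    """Compare a pilot against the baseline captured before the first run."""
    return {
        "ci_time_saved_pct": pct_reduction(baseline.ci_minutes, pilot.ci_minutes),
        "false_positive_rate_before": false_positive_rate(baseline),
        "false_positive_rate_after": false_positive_rate(pilot),
        "triage_time_reduction_pct": pct_reduction(
            baseline.triage_minutes, pilot.triage_minutes
        ),
    }
```

Capturing the baseline snapshot before the pilot starts is what makes the comparison credible afterward.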

Speaking of a plan of action: if you think you have what it takes to lead with AI, check out our GSDC AI Tool Experts Certification to get the validation your skills deserve and stay ahead.

AI-driven quality, guided by QA expertise

GenAI and predictive analytics expand test coverage and accelerate detection, but the gains come when AI-driven capabilities are paired with QA judgment.

Adopt AI tools for software testing and autonomous testing tools thoughtfully, design clear AI workflows, and keep QA experts as the final arbiters of quality.

That combination of automation plus governance will define reliable software in the years ahead.
