Deploying and Scaling AI Agents in Azure AI Foundry

Written by Matthew Hale

- What is Microsoft Azure?

- The Shift from Chatbots to Agentic Systems

- Why Pre-Trained Models Fail in Enterprise Contexts

- Retrieval-Augmented Generation as a Capability, Not a Feature

- Designing Enterprise-Grade AI Agent Ecosystems with Azure AI Foundry

- Key Benefits of Azure AI Foundry for Enterprise AI Deployment

- The Future of AI Agent Development with Azure AI Foundry

- The Agent Lifecycle Framework

- Observability and Governance as Core Pillars

- Building Real-World Agents

- Closing the Capability Gap

- Conclusion

Enterprise adoption of AI is moving into a phase where experimentation alone is no longer sufficient. Organisations are increasingly required to deploy AI systems that can operate reliably at scale using platforms such as Azure AI Foundry. Although investments in generative AI continue to rise, many organisations remain unable to translate innovation into sustained operational impact.

Fragmented implementations, disconnected tools, and short-lived projects are not signs of limited technology, but rather of a growing capability gap in designing, managing, and expanding intelligent AI agents.

This gap was the focus of a session at the GSDC Certified Learning Holiday AI Bootcamp, where attendees worked through practical methods for deploying and scaling AI agents with Azure AI Foundry.

What is Microsoft Azure?

Microsoft Azure is a worldwide cloud platform that offers infrastructure and platform services enabling the development, deployment, and management of enterprise applications.

Azure AI Foundry is part of this ecosystem. It provides a purpose-built environment for developing, deploying, and administering AI agents, combining foundation models, retrieval pipelines, monitoring, and responsible AI controls in a single platform.

The Shift from Chatbots to Agentic Systems

Traditional generative AI is built around prompt-response interactions, which are not adequate for complex business requirements. Enterprise systems need to access internal data, follow organisational logic, and fit into real workflows.

Consequently, the sector is shifting from simple conversational agents to AI agents that operate fully within enterprise platforms such as Azure AI Foundry. Recent figures indicate that 78% of organisations have implemented AI in at least one business function, while 62% are running AI agent pilot projects, with a considerable majority already moving to production.

| Traditional AI Usage | Agentic AI Systems |
| --- | --- |
| Prompt-response chatbots | Goal-driven AI agents |
| Static knowledge | Real-time, retrieved enterprise data |
| Model experimentation | Production-ready workflows |
| Isolated outputs | Orchestrated actions across systems |

Unlike models that only produce text, AI agents deployed through Azure AI Foundry can reason, retrieve relevant data from enterprise sources, make decisions, and carry out changes in business workflows. AI thus becomes an integral part of enterprise operations.

Why Pre-Trained Models Fail in Enterprise Contexts

Foundation models are trained largely on public datasets and have a training cut-off date. As a result, they lack:

- Organisation-specific knowledge: Internal business rules, processes, and confidential information are not part of the model's training data.

- Internal policies, documents, or databases: Models cannot access privately held enterprise resources unless they are explicitly connected through a retrieval system.

- Real-time operational data: Any data produced after the model's training cut-off is unknown to the model.

These limitations lead to unreliable outputs and restrict the scope of meaningful automation. Adding more prompts does not solve the problem. The solution is Retrieval-Augmented Generation (RAG), which allows models to securely retrieve live enterprise data and ground their responses in it via Azure AI Foundry.

Retrieval-Augmented Generation as a Capability, Not a Feature

Retrieval-Augmented Generation (RAG) enables AI agents to retrieve enterprise knowledge dynamically before generating responses. Within Azure AI Foundry, RAG connects foundation models with live organisational data sources such as Azure SQL, Cosmos DB, Azure Search, and document repositories, ensuring outputs are grounded in the enterprise context rather than static training data.

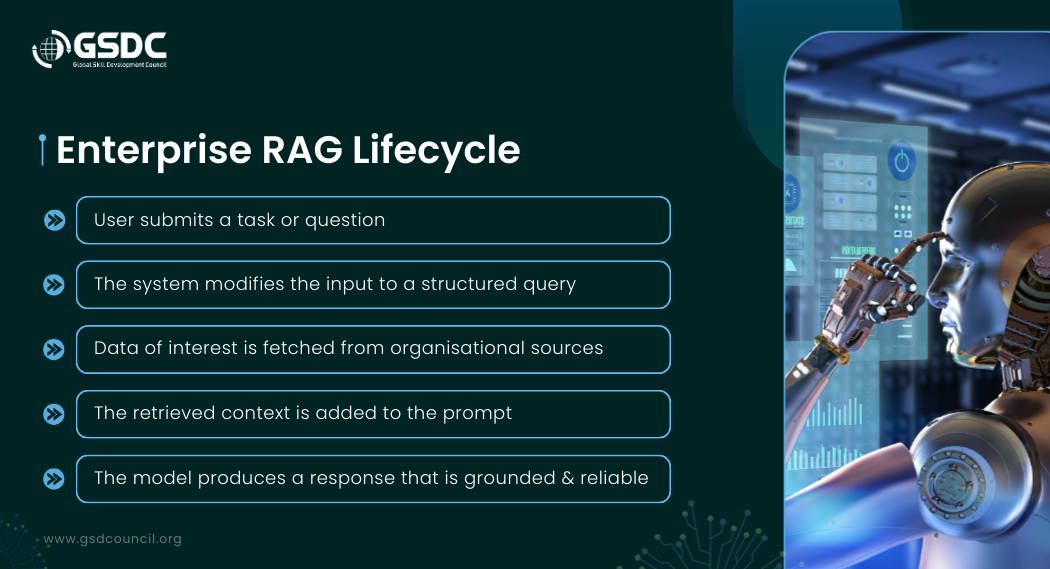

Enterprise RAG Lifecycle:

- The user submits a task or question: The interaction starts with a business-driven request, e.g., a question about internal policies or operational data.

- The system transforms the input into a structured query: The input is converted into a format that retrieval engines and enterprise search services can handle.

- Relevant data is fetched from organisational sources: The information is obtained from structured databases, semi-structured files, or unstructured documents stored in various enterprise systems.

- The retrieved context is added to the prompt: The retrieved data is combined with the system instructions so that the model receives the right organisational context.

- The model produces a grounded response: The final output is derived from real enterprise data, which reduces the likelihood of hallucinations and increases trust.

Through this lifecycle, RAG turns generic language models into enterprise-aware AI systems that can realistically support business workflows.
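The lifecycle above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how Azure AI Foundry implements retrieval: the in-memory `KNOWLEDGE_BASE`, the keyword-overlap ranking, and the names `to_query`, `retrieve`, and `build_prompt` are all hypothetical stand-ins for a real search index and prompt template.

```python
import re
from dataclasses import dataclass


@dataclass
class Document:
    source: str  # hypothetical label for where the text came from
    text: str


# Stand-in for enterprise sources; a real deployment would query a search
# index or database rather than an in-memory list.
KNOWLEDGE_BASE = [
    Document("hr-policy", "Employees may carry over up to five days of annual leave."),
    Document("it-policy", "Laptops must be encrypted and patched every month."),
    Document("travel-policy", "Flights over six hours may be booked in premium economy."),
]

STOP_WORDS = {"a", "an", "the", "of", "to", "in", "is", "may", "how",
              "many", "can", "i", "be", "and", "up", "over"}


def to_query(user_input: str) -> set[str]:
    """Step 2: turn free text into a structured query (here, a keyword set)."""
    words = re.findall(r"[a-z]+", user_input.lower())
    return {w for w in words if w not in STOP_WORDS}


def retrieve(query: set[str], top_k: int = 1) -> list[Document]:
    """Step 3: rank documents by keyword overlap and keep the best matches."""
    scored = [(len(query & to_query(d.text)), d) for d in KNOWLEDGE_BASE]
    scored = [(s, d) for s, d in scored if s > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:top_k]]


def build_prompt(user_input: str, context: list[Document]) -> str:
    """Step 4: combine system instructions, retrieved context, and the question."""
    lines = ["Answer using only the context below. Say so if it is insufficient.",
             "", "Context:"]
    lines += [f"[{d.source}] {d.text}" for d in context]
    lines += ["", f"Question: {user_input}"]
    return "\n".join(lines)


# Step 1: the user's question.
question = "How many days of annual leave can I carry over?"
prompt = build_prompt(question, retrieve(to_query(question)))
print(prompt)  # Step 5 would send this prompt to the deployed model
```

Keyword overlap is used here only to keep the sketch self-contained; production systems typically rely on vector or hybrid search for the retrieval step.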

Designing Enterprise-Grade AI Agent Ecosystems with Azure AI Foundry

Azure AI Foundry demonstrates how agent-based systems can be architected with enterprise rigour. It is not merely a deployment environment; it represents a complete AI agent lifecycle framework that spans model selection, knowledge integration, deployment, monitoring, and governance.

It supports:

- Multi-model selection and benchmarking: Teams have access to a broad catalog of models and may compare models in terms of quality, cost, throughput, and latency before deciding on the best option.

- Secure data grounding pipelines: Retrieval-augmented architectures link AI agents with internal documents, databases, and enterprise systems while maintaining data isolation and encryption.

- Responsible AI enforcement: The product comes with safety features, bias mitigation, and policy controls that ensure models meet ethical and regulatory standards.

- Observability and lifecycle governance: It allows continuous evaluation, performance monitoring, drift detection, and audit trails for long-term operational stability.

- Low-code and pro-code development workflows: Teams can use visual interfaces to build agents for fast prototyping, or SDK-based methods for deeper customisation.

As a result, Azure AI Foundry serves as a reference platform for companies designing AI agent ecosystems that are scalable, governed, and production-ready.
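To make the multi-model benchmarking point concrete, the comparison across quality, cost, throughput, and latency can be sketched as a weighted score. This is a rough illustration only: the model names, the metric values, and the weights are invented for the example and are not benchmark results, and the scoring scheme (min-max normalisation plus a weighted sum) is one simple choice among many.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str           # hypothetical model name
    quality: float      # evaluation score, higher is better
    cost_per_1k: float  # cost per 1,000 tokens, lower is better
    throughput: float   # tokens per second, higher is better
    latency_ms: float   # response latency, lower is better


# Illustrative numbers only, not real benchmark results.
CANDIDATES = [
    Candidate("model-large", quality=0.92, cost_per_1k=0.030, throughput=40.0, latency_ms=900.0),
    Candidate("model-medium", quality=0.85, cost_per_1k=0.010, throughput=80.0, latency_ms=450.0),
    Candidate("model-small", quality=0.70, cost_per_1k=0.002, throughput=150.0, latency_ms=200.0),
]

# Assumed business priorities; the weights sum to 1.
WEIGHTS = {"quality": 0.5, "cost_per_1k": 0.2, "throughput": 0.1, "latency_ms": 0.2}
LOWER_IS_BETTER = {"cost_per_1k", "latency_ms"}


def normalise(values: list[float], invert: bool) -> list[float]:
    """Min-max scale to [0, 1]; invert so that 1 is always the best value."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [1.0] * len(values)
    scaled = [(v - lo) / (hi - lo) for v in values]
    return [1.0 - s for s in scaled] if invert else scaled


def rank(candidates: list[Candidate], weights: dict[str, float]) -> list[tuple[str, float]]:
    """Return (name, weighted score) pairs, best first."""
    totals = {c.name: 0.0 for c in candidates}
    for metric, weight in weights.items():
        raw = [getattr(c, metric) for c in candidates]
        scores = normalise(raw, metric in LOWER_IS_BETTER)
        for c, score in zip(candidates, scores):
            totals[c.name] += weight * score
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)


ranking = rank(CANDIDATES, WEIGHTS)
for name, score in ranking:
    print(f"{name}: {score:.3f}")
```

Changing the weights changes the winner, which is the point of benchmarking before selection: the "best" model depends on which trade-offs the business is willing to make.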

Key Benefits of Azure AI Foundry for Enterprise AI Deployment

Azure AI Foundry supports the entire AI agent lifecycle, enabling organisations to move from experimentation to scalable production environments.

- Faster agent development: Foundry offers pre-integrated tools, model catalogs, and SDKs that significantly shorten the build and deployment stages of AI agents.

- Improved response reliability through RAG: By enabling agents to interact with real-time enterprise data sources, Foundry helps ensure that results are based on the actual organisational context, which reduces hallucinations and enhances trust.

- Enterprise-grade security and compliance: Built on Azure's security framework, Foundry includes features such as data isolation, encryption, and governance that support regulatory compliance.

- Operational visibility and performance monitoring: Integrated observability allows the system to be continuously reviewed, its performance tracked, and drift identified, supporting stability over time.

- Flexible development workflows: Support for both low-code and pro-code approaches lets business users, data scientists, and software engineers collaborate on the same agents.

The Future of AI Agent Development with Azure AI Foundry

With the widespread use of agentic systems by enterprises, Azure AI Foundry is set to become a fundamental platform for intelligent automation.

Near-term developments are expected to include:

- Deeper multi-agent orchestration: Allowing several AI agents to interact and share tasks across complex enterprise workflows.

- Advanced lifecycle automation: Further automation of the testing, deployment, and monitoring pipelines that support large-scale AI operations.

- Expanded model ecosystems: More domain-specific, industry-optimised, and multimodal foundation models made accessible to a wider user base.

- Stronger governance and compliance tooling: Enhanced auditability, explainability, and policy enforcement for tightly regulated industries.

- Greater business integration: Tighter integration with enterprise applications, analytics platforms, and operational systems, so that AI agents can be embedded directly into core business processes.

These innovations will, in effect, make Azure AI Foundry a major contributor to the development of trusted, scalable, and enterprise-ready AI agent ecosystems.

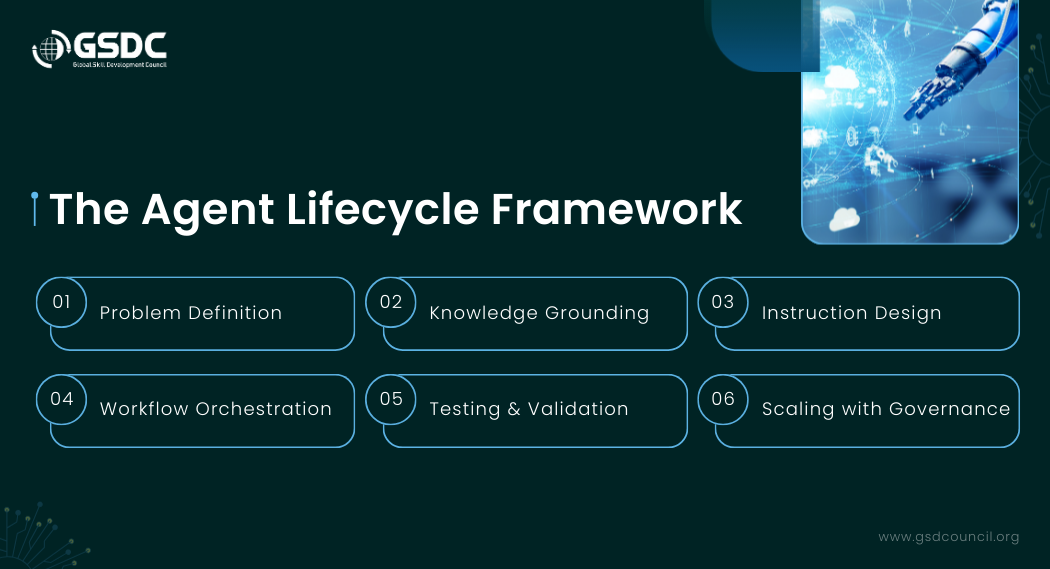

The Agent Lifecycle Framework

Six structured stages characterize a mature agent deployment strategy:

- Problem Definition: Identify the decision-making or automation gaps in business processes that the agent should address.

- Knowledge Grounding: Connect enterprise data sources such as documents, databases, and operational systems.

- Instruction Design: Define the agent's behaviour, reasoning, and responses through system prompts and constraints.

- Workflow Orchestration: Enable the agent to carry out tasks by integrating it with the organisation's internal applications and automation tools.

- Testing & Validation: Check model behaviour, response quality, and safety features.

- Scaling with Governance: Evaluate system performance, detect drift, and confirm compliance with the organisation's policies.

This lifecycle allows companies to progress from isolated experiments to a fully operational AI infrastructure.

Observability and Governance as Core Pillars

Production AI systems cannot operate reliably without strong governance and visibility controls. Azure AI Foundry operationalises this requirement through four foundational pillars that ensure trust, accountability, and long-term stability.

Operational Pillars of Azure AI Foundry

| Pillar | Function |
| --- | --- |
| Evaluation | Enables systematic model benchmarking, quality testing, and comparison of cost, accuracy, and throughput before deployment. |
| Customisation | Supports Retrieval-Augmented Generation (RAG), prompt and instruction design, and alignment of agent behaviour with business rules. |
| Governance | Ensures data lineage tracking, compliance with regulatory requirements, access control, and maintenance of audit trails. |
| Monitoring | Provides continuous tracking of model performance, response quality, latency, and detection of operational drift in production environments. |

Together, these pillars ensure that AI systems remain transparent, explainable, and accountable throughout their lifecycle, reducing operational risk and strengthening organisational trust in AI-driven decisions.
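As a rough illustration of the Monitoring pillar, drift detection can be reduced to comparing a rolling average of a quality metric against a baseline. The sketch below is an assumption-laden example, not Foundry's monitoring mechanism: the `DriftMonitor` class, the baseline, the tolerance, and the observed scores are all hypothetical.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Flags drift when the rolling mean falls too far below a baseline."""

    def __init__(self, baseline: float, tolerance: float, window: int = 5):
        self.baseline = baseline    # expected quality score at deployment time
        self.tolerance = tolerance  # allowed drop before raising an alert
        self.scores = deque(maxlen=window)  # keeps only the most recent scores

    def record(self, score: float) -> bool:
        """Add a score; return True once a full window has drifted."""
        self.scores.append(score)
        if len(self.scores) < self.scores.maxlen:
            return False  # not enough data to judge yet
        return self.baseline - mean(self.scores) > self.tolerance


# Hypothetical response-quality scores collected in production.
monitor = DriftMonitor(baseline=0.90, tolerance=0.05, window=3)
observed = [0.91, 0.89, 0.90, 0.84, 0.80, 0.78]
alerts = [monitor.record(score) for score in observed]
print(alerts)
```

A rolling window smooths out single bad responses, so an alert reflects a sustained decline rather than one outlier. The same pattern applies to latency, with the comparison reversed.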

Building Real-World Agents

One example is a healthcare support agent designed to solve a common operational problem: providing medical guidance quickly and reliably in places where direct access to healthcare professionals is limited.

The agent may be set up to:

- Collect user symptoms: Gather health inputs in a structured manner, for example, by asking the user to confirm whether they have a fever, cold, headache, or body pain.

- Retrieve relevant medical knowledge: Obtain up-to-date details from the on-premises and online knowledge stores that the enterprise is linked to.

- Create medicine guidance and referral information: Present the user with suitable options, such as indicative medicines and a brief referral note.

- Trigger workflow actions: Start follow-up activities, for example, finding a doctor nearby or giving a link to an external platform where the user can continue the consultation.

This scenario illustrates how AI agents evolve from information delivery tools into decision support systems that help users access services, reduce waiting times, and keep operations running.
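The four steps of the healthcare agent can be outlined as a small pipeline. This is a structural sketch only: the knowledge entries are placeholder text rather than clinical content, and the referral threshold, the action names, and the functions `collect_symptoms`, `retrieve_guidance`, `plan_actions`, and `run_agent` are hypothetical. A real agent would retrieve from governed knowledge stores and call actual workflow integrations.

```python
from dataclasses import dataclass, field

# Symptoms the agent asks the user to confirm, as listed in the scenario.
SYMPTOMS = ("fever", "cold", "headache", "body pain")

# Placeholder knowledge store; entries are illustrative, not clinical guidance.
KNOWLEDGE = {
    "fever": "Placeholder guidance entry for fever.",
    "cold": "Placeholder guidance entry for cold.",
    "headache": "Placeholder guidance entry for headache.",
    "body pain": "Placeholder guidance entry for body pain.",
}

REFERRAL_THRESHOLD = 3  # hypothetical rule: this many symptoms triggers a referral


@dataclass
class AgentResult:
    symptoms: list[str]
    guidance: list[str]
    actions: list[str] = field(default_factory=list)


def collect_symptoms(answers: dict[str, bool]) -> list[str]:
    """Step 1: keep only the known symptoms the user confirmed."""
    return [s for s in SYMPTOMS if answers.get(s, False)]


def retrieve_guidance(symptoms: list[str]) -> list[str]:
    """Step 2: look up knowledge entries for each confirmed symptom."""
    return [KNOWLEDGE[s] for s in symptoms if s in KNOWLEDGE]


def plan_actions(symptoms: list[str]) -> list[str]:
    """Step 4: decide which follow-up workflows to trigger."""
    actions = []
    if len(symptoms) >= REFERRAL_THRESHOLD:
        actions.append("find_nearby_doctor")
    if symptoms:
        actions.append("share_consultation_link")
    return actions


def run_agent(answers: dict[str, bool]) -> AgentResult:
    """Run steps 1 to 4; step 3 (wording the guidance) is left to the model."""
    symptoms = collect_symptoms(answers)
    return AgentResult(symptoms, retrieve_guidance(symptoms), plan_actions(symptoms))


result = run_agent({"fever": True, "headache": True, "body pain": True})
print(result.symptoms, result.actions)
```

Keeping the action-planning rules in plain code, separate from the model's generated text, makes the workflow triggers easier to test and audit.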

Closing the Capability Gap

While AI tools change at a breakneck pace, workforce capabilities often lag behind. The true differentiator for companies is not which platform they choose, but whether their employees have the skills needed to create, manage, and evolve intelligent AI systems.

These skills cover:

- Agent architecture design

- Retrieval-Augmented Generation frameworks

- Responsible AI governance

- Prompt and instruction engineering

- AI lifecycle observability

At the Global Skill Development Council (GSDC), we aim to close the gap between cutting-edge AI technologies and real-world workforce readiness. The Certified AI Tool Expert program is one such initiative. It is intended to equip professionals with practical know-how in using AI tools responsibly, designing agent-based systems, and converting technical skills into measurable business results.

Such skills are not optional extras; they are what will define the AI-ready workforce of tomorrow.

Conclusion

Deploying AI agents is no longer just a technical exercise. It has become a strategic capability that spans data engineering, governance, system design, and business alignment, so organisations have to rethink how they embed AI into daily activities.

Azure AI Foundry shows how companies can move from disjointed experiments to scalable, controlled, and reliable agent systems by integrating retrieval pipelines, lifecycle observability, and responsible AI controls.

As AI is gradually integrated into more business functions, success will depend less on the availability of tools than on the capability to build intelligent, ethical, and scalable AI ecosystems that continue to generate business value.
