Sovereign AI Tools: How Enterprises and Governments Regain AI Control

Written by Matthew Hale

- What Is Sovereign AI?

- What Is Data Sovereignty and Why It Matters

- Why Public AI Tools Create Data Privacy Risks

- Traditional AI vs Sovereign AI Tools: A Comparison

- Why Organizations Are Building Their Own Sovereign AI Tools

- Sovereign AI in Action: Securing Enterprises Against Cyber Threats

- From Tools to Autonomous AI Agents

- Enterprise AI Governance Starts With Sovereignty

- Preparing Talent for the Sovereign AI Future

- Conclusion: Why Sovereign AI Is the Future

AI is making decisions that once belonged only to humans, such as approving loans, detecting cyber threats, and automating government services. But here’s the uncomfortable truth: most organizations don’t actually control the AI systems they rely on.

This raises a serious question: What is sovereignty in a world powered by algorithms?

In the digital era, sovereignty has extended to AI. AI sovereignty is the ability to fully own and govern how AI systems are built, trained, deployed, and monitored. In the GSDC AI Tool Challenge, professionals explore why sovereign AI tools are quickly becoming a strategic necessity for both enterprises and governments.

What Is Sovereign AI?

Sovereign AI means using AI systems that you fully own and control.

Your data stays inside your own systems. You know how the AI is trained, how it works, and how it makes decisions. This is especially important for governments and regulated industries, where trust, transparency, and accountability are critical.

For example, a bank can use its own AI system to review loan applications. The data never goes to an outside platform, and the bank can clearly explain why the AI approved or rejected a loan. That is sovereign AI in action.
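To make "the bank can clearly explain why" concrete, here is a minimal sketch of a transparent, rule-based loan check that returns its reasons together with the decision. The thresholds, the input fields (`credit_score`, `debt_to_income`, `income`), and the `review_application` function are hypothetical illustrations, not a real bank's policy or any vendor's API.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    approved: bool
    reasons: list[str] = field(default_factory=list)


# Hypothetical policy thresholds, for illustration only.
MIN_CREDIT_SCORE = 650
MAX_DEBT_TO_INCOME = 0.40
MIN_ANNUAL_INCOME = 25_000


def review_application(credit_score: int, debt_to_income: float, income: float) -> Decision:
    """Apply each rule in turn and record every rule that failed."""
    reasons = []
    approved = True

    if credit_score < MIN_CREDIT_SCORE:
        approved = False
        reasons.append(f"credit score {credit_score} is below {MIN_CREDIT_SCORE}")
    if debt_to_income > MAX_DEBT_TO_INCOME:
        approved = False
        reasons.append(f"debt-to-income {debt_to_income:.0%} exceeds {MAX_DEBT_TO_INCOME:.0%}")
    if income < MIN_ANNUAL_INCOME:
        approved = False
        reasons.append(f"annual income {income:,.0f} is below {MIN_ANNUAL_INCOME:,}")

    if approved:
        reasons.append("all policy checks passed")
    return Decision(approved, reasons)


if __name__ == "__main__":
    decision = review_application(credit_score=610, debt_to_income=0.45, income=48_000)
    print(decision.approved)
    for reason in decision.reasons:
        print("-", reason)
```

Running it prints `False` followed by two reasons: the credit score and the debt-to-income ratio. A production system would more likely combine a trained model with an explanation method, but the principle of returning the reasons alongside the decision is the same.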

What Is Data Sovereignty and Why It Matters

Data sovereignty means that an organization’s data is stored, processed, and governed according to its own rules and the laws of its country.

For example, a government department may not be allowed to store citizen data on foreign servers. With sovereign AI tools, sensitive information stays inside approved infrastructure, which supports compliance and full ownership of the data.
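A rule like this can be enforced in software by checking where a dataset will be processed before any AI workload touches it. The sketch below assumes a hypothetical allow-list of approved locations per data classification and a simple `Dataset` record; the location names, classifications, and `check_residency` function are illustrative, and a real deployment would take them from its own infrastructure inventory and legal requirements.

```python
from dataclasses import dataclass

# Hypothetical allow-list: infrastructure approved for each data classification.
APPROVED_LOCATIONS = {
    "citizen_data": {"gov-datacenter-1", "gov-datacenter-2"},
    "public": {"gov-datacenter-1", "gov-datacenter-2", "public-cloud-eu"},
}


@dataclass
class Dataset:
    name: str
    classification: str


class ResidencyViolation(Exception):
    """Raised when data would be processed outside approved infrastructure."""


def check_residency(dataset: Dataset, target_location: str) -> None:
    # Unknown classifications get an empty allow-list, so they are denied by default.
    allowed = APPROVED_LOCATIONS.get(dataset.classification, set())
    if target_location not in allowed:
        raise ResidencyViolation(
            f"{dataset.name} ({dataset.classification}) may not be processed in {target_location}"
        )


if __name__ == "__main__":
    records = Dataset("tax_returns_2024", "citizen_data")
    check_residency(records, "gov-datacenter-1")  # allowed, no error
    try:
        check_residency(records, "public-cloud-eu")
    except ResidencyViolation as err:
        print("Blocked:", err)
```

The deny-by-default choice matters: data that nobody has classified is treated as restricted rather than silently allowed to leave approved infrastructure.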

Why Public AI Tools Create Data Privacy Risks

Most AI tools today run on cloud platforms and are trained on data that users cannot see or control. This creates serious problems, especially for organizations that handle sensitive information.

- Unknown training data: You do not know where the AI learned from or whether that data is correct, biased, or outdated. This makes it difficult to trust the system’s answers.

- Lack of transparency: Many AI tools cannot explain how they reach a decision. For example, when an AI rejects a loan or flags a transaction, teams often cannot clearly understand the reason.

- Cloud dependency: Your business data is processed on external servers, which reduces control over where your information is stored and who can access it.

- Hallucinations: AI tools can confidently provide incorrect answers. In regulated environments, even a single mistake can cause legal or financial trouble.

- Vendor lock-in: Once you depend on one AI provider, changing systems becomes expensive and complex, making it harder to enforce your own governance rules.

Public AI tools are built for general use, not for regulated environments. They are useful for everyday tasks, but they introduce serious AI data privacy risks when used for financial decisions, healthcare records, or government services, which makes them difficult to use safely in industries such as banking, healthcare, and government.

Traditional AI vs Sovereign AI Tools: A Comparison

| Traditional AI | Sovereign AI Tools |
| --- | --- |
| One-size-fits-all systems designed to handle any type of task | Purpose-built tools created for specific business functions |
| Black-box behaviour with little visibility into how decisions are made | Transparent decision logic that can be reviewed and explained |
| High operating cost due to heavy cloud and compute requirements | Cost-efficient models that can run on local or enterprise infrastructure |
| Difficult to audit or trace decision paths | Designed with built-in auditability and compliance features |

Why Organizations Are Building Their Own Sovereign AI Tools

Many organizations are moving away from large cloud platforms and starting to build their own enterprise AI systems using small, domain-trained models. These tools are created for one clear purpose, which makes them easier to manage and trust.

- Built for a single task: Traditional AI tries to handle everything: writing, reasoning, coding, and more. Sovereign AI tools are trained only for a specific job, such as loan processing, compliance checks, or fraud review. This improves accuracy and reduces mistakes.

- Easy to explain: Because these tools are designed for one purpose, teams can clearly understand how decisions are made. This improves trust and makes audits simpler.

- Lower running cost: Small models require far less compute than large cloud-hosted models. Many can run on a local server or even a standard laptop.

- Designed for compliance: Sovereign AI systems are created with regulations, logging, and reporting requirements in mind.

- Greater control and flexibility: Since the system runs inside the organization, teams can update it, fine-tune it, and secure it based on their own standards.

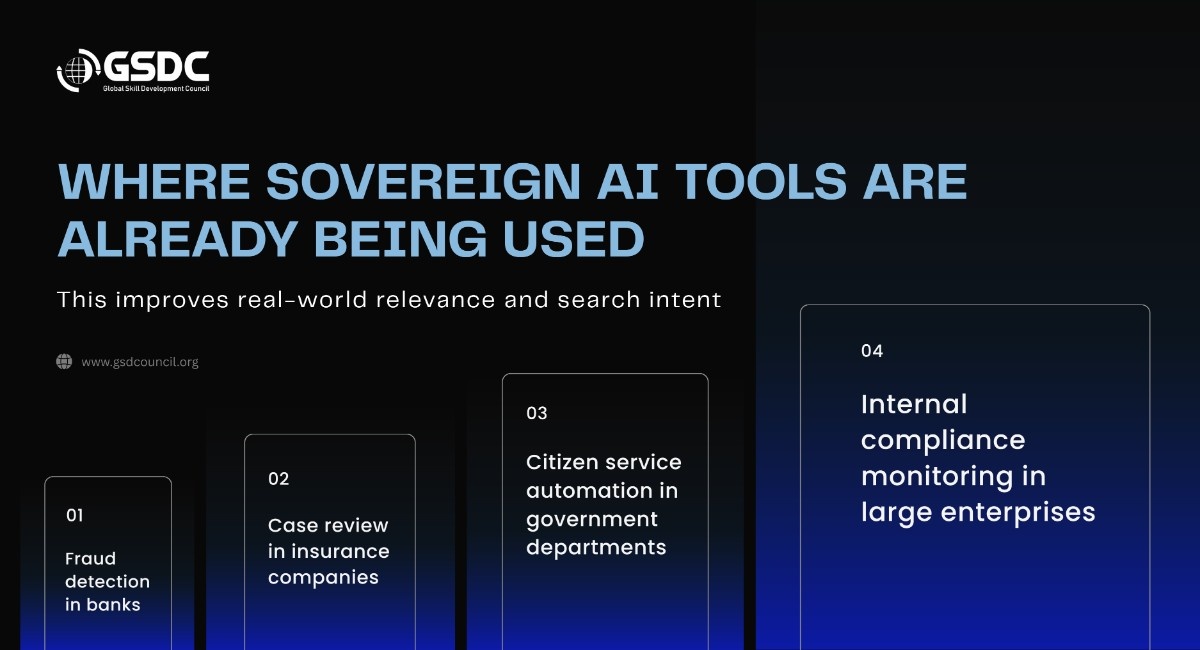

Sovereign AI in Action: Securing Enterprises Against Cyber Threats

One of the most valuable uses of AI in cybersecurity is threat detection inside enterprise networks. Sovereign AI tools make this possible without exposing sensitive data to external platforms.

- Security alert monitoring: The AI connects directly to existing security tools such as SIEM platforms, firewall logs, endpoint protection systems, and network monitoring dashboards. It continuously reviews the alerts raised by these systems and identifies unusual patterns that may indicate an attack.

- Identifying false positives: Security tools generate hundreds of low-risk alerts every day. The sovereign model learns to distinguish safe patterns from those that require attention, helping teams focus only on real threats.

- Supporting security analysts: The system works alongside analysts by pulling information from tools like ticketing systems, case management software, and internal knowledge bases. It explains why an alert matters and suggests the next best action.

- Strengthening fraud detection: By analyzing transaction logs, access records, and previous incident reports, the AI becomes better at detecting fraud, privilege misuse, and coordinated attacks.

- Working in a closed ecosystem: All processing takes place on the organization’s own servers and within its security perimeter. This makes the approach suitable for highly sensitive uses in the public sector, financial institutions, and critical infrastructure.
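The false-positive filtering described above can be approximated, in its simplest form, by learning from analysts' past verdicts. The sketch below is a hypothetical frequency-based triage, not a production detection model: it groups alerts by a `(source, rule)` signature, tracks how often analysts marked each signature benign, and deprioritizes signatures with a long benign history. The `Alert` fields, class names, and thresholds are all assumptions for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    source: str  # e.g. "firewall", "edr", "siem" (hypothetical labels)
    rule: str    # the detection rule that fired


class AlertTriage:
    """Learns, per (source, rule) signature, how often past alerts were benign."""

    def __init__(self, min_history: int = 20, benign_threshold: float = 0.95):
        self.min_history = min_history
        self.benign_threshold = benign_threshold
        self.benign = defaultdict(int)
        self.total = defaultdict(int)

    @staticmethod
    def signature(alert: Alert) -> tuple[str, str]:
        return (alert.source, alert.rule)

    def record_verdict(self, alert: Alert, was_benign: bool) -> None:
        """Store an analyst's verdict so future alerts can be ranked."""
        sig = self.signature(alert)
        self.total[sig] += 1
        if was_benign:
            self.benign[sig] += 1

    def priority(self, alert: Alert) -> str:
        sig = self.signature(alert)
        seen = self.total[sig]
        if seen < self.min_history:
            return "review"  # not enough history yet: a human should look
        benign_rate = self.benign[sig] / seen
        return "low" if benign_rate >= self.benign_threshold else "review"


if __name__ == "__main__":
    triage = AlertTriage(min_history=20)
    noisy = Alert("firewall", "port-scan-internal")
    for _ in range(30):
        triage.record_verdict(noisy, was_benign=True)
    print(triage.priority(noisy))
    unseen = Alert("edr", "credential-dumping")
    print(triage.priority(unseen))
```

Here the repeatedly benign firewall signature is ranked `low`, while the never-seen endpoint alert stays at `review`. Requiring a minimum history before deprioritizing anything keeps rare, potentially serious alerts in front of analysts.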

The next step is the shift from tools to intelligent agents, which enables real automation rather than just answering questions.

From Tools to Autonomous AI Agents

Companies now use AI for more than answering questions: they are building AI that can work on tasks independently.

So what is an AI agent? An AI agent is a system that can perceive a problem, take action, and learn from the results. It has three main components:

- Brain – understanding the task: This component knows the task or responsibility the agent has. For instance, it may know how to respond to a security alert, a customer inquiry, or an error message.

- Tools – taking action: The agent interacts with company systems such as ticketing systems, databases, or workflow applications. This enables it to open a ticket, update records, or notify the user without requiring human intervention.

- Memory – improving over time: The agent remembers its actions and their results, and it tends to favor methods that have worked before.
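The brain, tools, and memory split can be sketched in a few lines. In this hypothetical example the "brain" is a simple playbook mapping task types to candidate actions (a real agent would typically use a language model here), the "tools" are stub functions standing in for ticketing and notification systems, and "memory" counts which action succeeded for each task type so the agent tries it first next time. None of these names correspond to a real product API.

```python
from collections import defaultdict
from typing import Callable, Optional


# Tools: stand-ins for real enterprise integrations (hypothetical).
def open_ticket(task: dict) -> bool:
    print(f"Opened ticket for {task['type']}: {task['detail']}")
    return True


def notify_user(task: dict) -> bool:
    print(f"Tried to notify user about {task['type']}")
    return task.get("user_reachable", False)  # fails if the user cannot be reached


TOOLS: dict[str, Callable[[dict], bool]] = {
    "open_ticket": open_ticket,
    "notify_user": notify_user,
}


class Agent:
    def __init__(self) -> None:
        # Brain: which actions make sense for each kind of task.
        self.playbook = {
            "security_alert": ["open_ticket", "notify_user"],
            "customer_inquiry": ["notify_user", "open_ticket"],
        }
        # Memory: success counts per (task type, action).
        self.successes: dict[tuple[str, str], int] = defaultdict(int)

    def choose_actions(self, task_type: str) -> list[str]:
        candidates = self.playbook.get(task_type, ["open_ticket"])
        # Prefer actions that worked before; the sort is stable, so ties keep playbook order.
        return sorted(candidates, key=lambda a: self.successes[(task_type, a)], reverse=True)

    def handle(self, task: dict) -> Optional[str]:
        # Try actions in preferred order until one succeeds, then remember it.
        for action in self.choose_actions(task["type"]):
            if TOOLS[action](task):
                self.successes[(task["type"], action)] += 1
                return action
        return None


if __name__ == "__main__":
    agent = Agent()
    inquiry = {"type": "customer_inquiry", "detail": "refund status", "user_reachable": False}
    print(agent.handle(inquiry))
    print(agent.choose_actions("customer_inquiry"))
```

On the first inquiry, notifying the user fails and the agent falls back to opening a ticket; after that success is remembered, `open_ticket` moves to the front of the list for future customer inquiries.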

By combining these three components, AI can move from being only an advisory system to actually helping team members complete everyday work more quickly and accurately.

Enterprise AI Governance Starts With Sovereignty

Without control, AI cannot be trusted. That is why enterprise AI governance is no longer just an IT concern; it is now a board-level priority.

- AI compliance challenges: AI systems must follow industry regulations, data protection laws, and internal company policies. If the organization does not control the AI model, it becomes difficult to prove that rules are being followed.

- Regulatory accountability: In sectors like banking, healthcare, and government, leaders must explain how important decisions are made. If an AI system cannot show how it reached a result, the organization risks legal and regulatory issues.

- Ethical use of AI agents: AI agents must not introduce bias, treat people unfairly, or cause harm. Governance should ensure that AI agents are used only for approved purposes.

- Explainability across systems: Teams need to understand not only what the AI decided but also why it made that decision. This is especially important when AI is used for customer approvals, fraud analysis, and security.
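One common way to back regulatory accountability and explainability with evidence is an append-only decision log that records what the AI decided, why, and which model version made the decision. The sketch below chains each entry to the previous one with a SHA-256 hash so that later edits become detectable. The `DecisionLog` class and its field names are hypothetical, and a real deployment would also need durable, access-controlled storage.

```python
import hashlib
import json
from datetime import datetime, timezone


class DecisionLog:
    """Append-only log; each entry stores the hash of the previous entry."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _hash(entry: dict) -> str:
        # sort_keys gives a stable serialization, so the same entry always hashes the same.
        payload = json.dumps(entry, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def record(self, model_version: str, inputs: dict, decision: str, reasons: list[str]) -> dict:
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "model_version": model_version,
            "inputs": inputs,
            "decision": decision,
            "reasons": reasons,
            "prev_hash": self.entries[-1]["hash"] if self.entries else "0" * 64,
        }
        entry["hash"] = self._hash(entry)  # hash covers every field except itself
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; an edited or reordered entry breaks the chain."""
        prev = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or self._hash(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


if __name__ == "__main__":
    log = DecisionLog()
    log.record("fraud-model-v3", {"txn_id": "T-1001"}, "flagged", ["amount far above customer average"])
    log.record("fraud-model-v3", {"txn_id": "T-1002"}, "cleared", ["matches known payee"])
    print(log.verify())
    log.entries[0]["decision"] = "cleared"  # tamper with history
    print(log.verify())
```

The first check passes and the second fails once history is altered. On its own, a hash chain only proves tampering if the latest hash is also kept somewhere the person editing the log cannot change.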

Strong governance starts with sovereignty: when the organization owns and controls its AI, it can build systems that are safe, compliant, and trustworthy.

Preparing Talent for the Sovereign AI Future

As organizations and governments adopt sovereign AI tools and self-governed systems, demand is growing for professionals skilled in secure, controlled, and compliant AI. It is no longer enough for an organization simply to use the technology; it also needs professionals who can build these AI systems.

The Global Skill Development Council (GSDC) supports this with industry-specific and role-specific certifications. Programs such as the Certified AI Tool Expert build practical skills in developing AI tools, managing governance frameworks, and applying trustworthy AI that operates safely in enterprise environments.

Conclusion: Why Sovereign AI Is the Future

Artificial intelligence has moved from being a support system to a decision-maker, both for businesses and for the governments that regulate them. This has consequences: organizations cannot depend on AI systems they do not understand or control.

Sovereign AI provides the way ahead. By building AI systems that run in trusted environments, rely on domain-specific models, and follow governance best practices, organizations can protect their data, ensure compliance, and develop trustworthy, transparent, and proprietary AI.
